PRE2023 3 Group3: Difference between revisions

| (207 intermediate revisions by 6 users not shown) | |||

| Line 1: | Line 1: | ||

<big>This project was guided from start to finish by Dr. Ir. René van de Molengraft and Dr. Elena Torta.</big> | |||

== Group members == | == Group members == | ||

| Line 33: | Line 34: | ||

== Problem statement == | == Problem statement == | ||

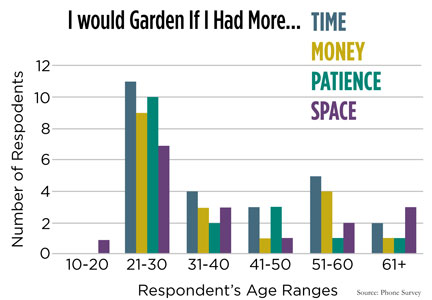

[[File:Manchester University Survey.png|frame|<ref>https://www.growertalks.com/Article/?articleid=20101</ref> Manchester University survey on why people don't garden.]] | [[File:Manchester University Survey.png|frame|<ref name=":0">[https://www.growertalks.com/Article/?articleid=20101 ''A College Class Asks: Why Don’t People Garden?'' (n.d.). Www.growertalks.com. https://www.growertalks.com/Article/?articleid=20101]</ref> Manchester University survey on why people don't garden.]] | ||

In western society, having a family, a house, and a good job | In western society, having a family, a house, and a good job is what many people aspire to have. As people strive to achieve such aspirations, their spending power increases, which allows them to be able to afford to buy a nice home for their future family, with a nice garden for the kids and pets. However, as with many things, in our capitalist world, this usually comes at a sacrifice: free time. According to a study conducted by a student team at Manchester University<ref name=":0" />, the three '''main reasons''' '''why people don't garden''' which made up '''60% of the survey''' responses included '''time constraints''', '''lack of knowledge/information''', and '''space restraints'''. Gardening should be encouraged, due to its environmental benefits and many other advantages<ref>[https://schultesgreenhouse.com/Benefits.html#:~:text=Plants%20act%20as%20highly%20effective,streams%2C%20storm%20drains%20and%20roads. ''Benefits of gardening''. (n.d.). https://schultesgreenhouse.com/Benefits.html]</ref>. | ||

In the past decade, robotics has been advancing across multiple fields rapidly as tedious and difficult tasks become increasingly automated <ref>[https://www.bbvaopenmind.com/en/articles/a-decade-of-transformation-in-robotics/#:~:text=The%20advancements%20in%20robotics%20over,their%20environment%20in%20unique%20ways. https://www.bbvaopenmind.com/en/articles/a-decade-of-transformation-in-robotics/ | In the past decade, robotics has been advancing across multiple fields rapidly as tedious and difficult tasks become increasingly automated <ref>[https://www.bbvaopenmind.com/en/articles/a-decade-of-transformation-in-robotics/#:~:text=The%20advancements%20in%20robotics%20over,their%20environment%20in%20unique%20ways. Rus, D. (n.d.). ''A Decade of Transformation in Robotics | OpenMind''. OpenMind. https://www.bbvaopenmind.com/en/articles/a-decade-of-transformation-in-robotics/.]</ref>, this is not any different in the field of agriculture and gardening <ref>[https://www.mdpi.com/2075-1702/11/1/48 Cheng, C., Fu, J., Su, H., & Ren, L. (2023). Recent Advancements in Agriculture Robots: Benefits and Challenges. ''Machines'', ''11''(1), 48. https://doi.org/10.3390/machines11010048]</ref>. In recent years, many robots have become available that aid farmers in important aspects such as irrigation, plantation, and weeding. These robots are large mechanical structures sold at a very high price meaning their only true usage is in large-scale farming operations. Unfortunately, '''one common user group has been left behind''' and not considered when developing many features of this new technology in gardening and agriculture; '''the amateur gardener'''. Amateur gardeners, often lacking in-depth knowledge about plants and gardening practices, face challenges in maintaining their gardens. Identifying issues with specific plants, understanding their individual needs, and implementing corrective measures can be overwhelming for their limited expertise. 
Traditional gardening tools and resources often fall short of providing the necessary guidance for optimal plant care, so another solution must be found. This is the problem that our team's robot aims to solve. We cannot change the fact that some people do not have space to garden, but we can address the other two common problems: time constraints and lack of knowledge. So, '''the questions we asked ourselves were:''' | ||

"How do we make gardening more accessible for the amateur gardeners?" | "How do we make gardening more accessible for the amateur gardeners?" | ||

| Line 44: | Line 45: | ||

== Objectives == | == Objectives == | ||

The objectives for the project that we hope to accomplish | The objectives for the project deliverables that we hope to accomplish in the next 8 weeks can be represented as MoSCoW requirements. To determine the importance of each requirement, we sort them into four priority categories: Must, Should, Could and Would. Normally, the ‘W’ in “MoSCoW” stands for Won’t. However, for most projects it is not necessary to state explicitly what will not be done, so we use Would as the fourth priority category instead. Since we want to complete most of the requirements that we set out for this project, we define most requirements as Musts. | ||

{| class="wikitable" | |||

|Requirement ID | |||

|Requirement | |||

|Priority | |||

|- | |||

| colspan="3" |The Robot | |||

|- | |||

|R001 | |||

|The robot shall cut the grass while traversing the environment. | |||

|M | |||

|- | |||

|R002 | |||

|The robot shall map the garden and store it in its memory. | |||

|M | |||

|- | |||

|R003 | |||

|The robot shall traverse the garden avoiding any obstacles on its way. | |||

|M | |||

|- | |||

|R004 | |||

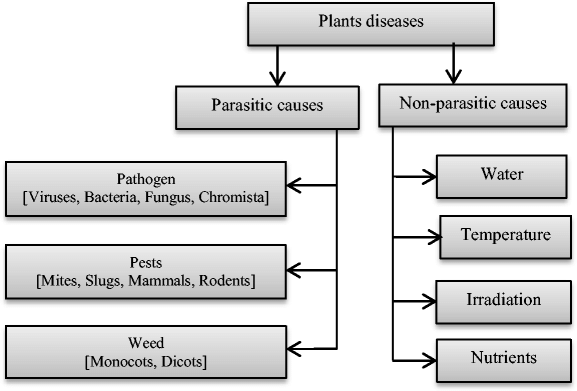

|The robot shall detect different types of plant diseases and their location through the use of cameras and sensors. | |||

|M | |||

|- | |||

|R005 | |||

|The robot shall know its GPS/RTK location at all times. | |||

|M | |||

|- | |||

|R006 | |||

|The robot shall send a signal to the mobile application when it detects a diseased plant. | |||

|M | |||

|- | |||

|R007 | |||

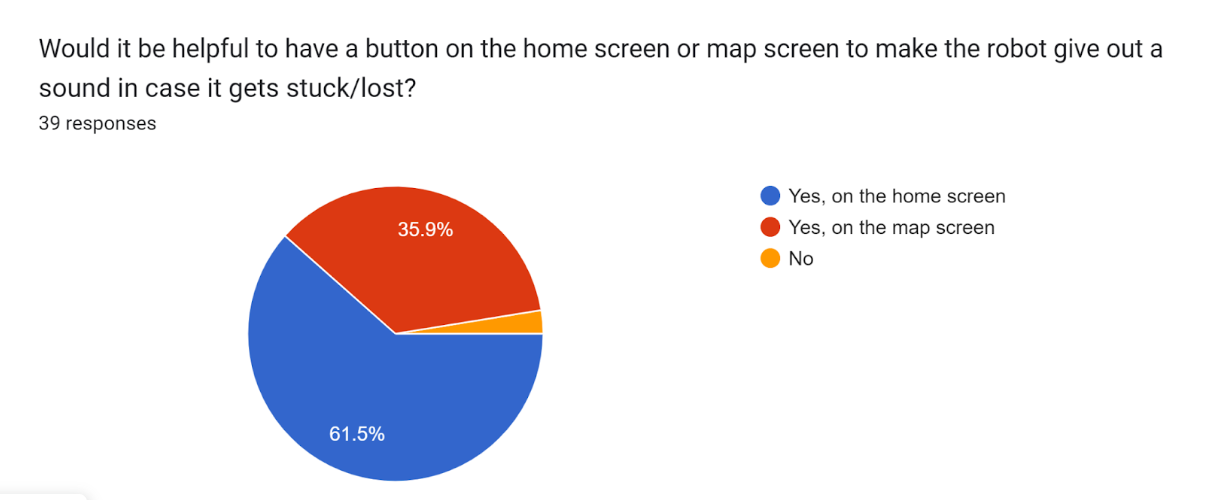

|The robot shall make a noise when the user wishes to find the robot through the app. | |||

|S | |||

|- | |||

| colspan="3" |The App | |||

|- | |||

|R101 | |||

|The app shall provide a button to start the robot. | |||

|M | |||

|- | |||

|R102 | |||

|The app shall provide a button to stop the robot. | |||

|M | |||

|- | |||

|R103 | |||

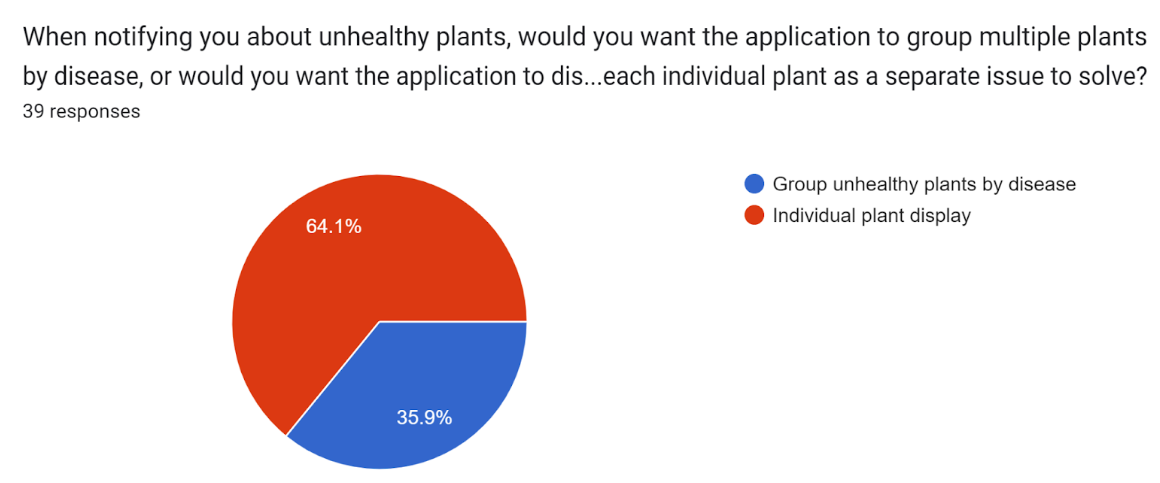

|The app shall display a notification to the user when a plant disease is detected in a specific region. | |||

|M | |||

|- | |||

|R104 | |||

|The app shall display the location of the robot on the map at all times. | |||

|M | |||

|- | |||

|R105 | |||

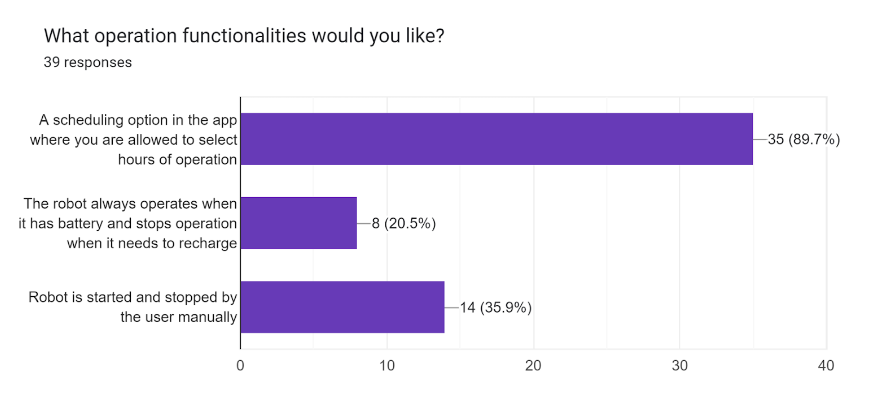

|The app shall present the user with an option to schedule the operation times of the robot. | |||

|M | |||

|- | |||

|R106 | |||

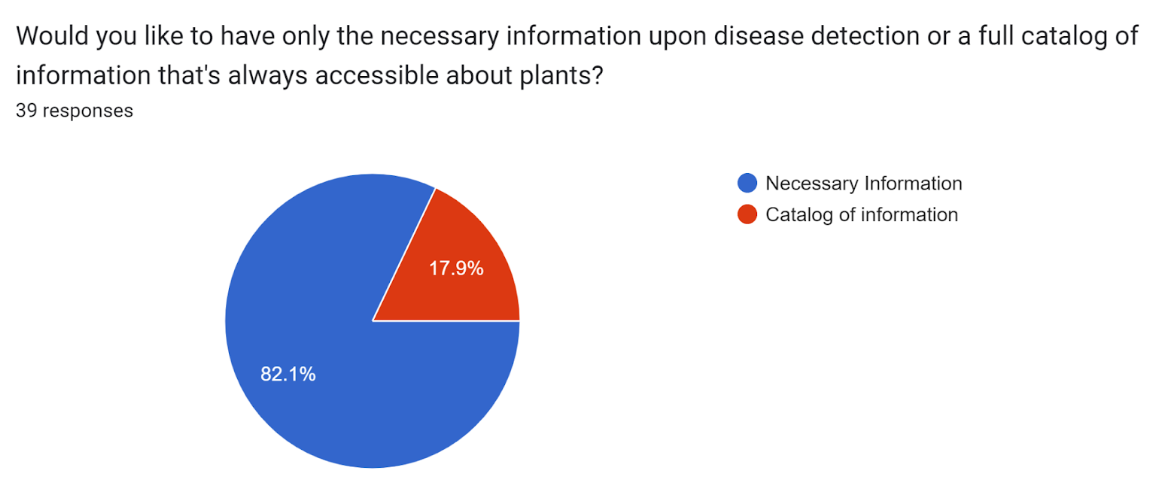

|Upon disease detection, the app shall provide the user with necessary information to aid the affected plant. | |||

|M | |||

|- | |||

|R107 | |||

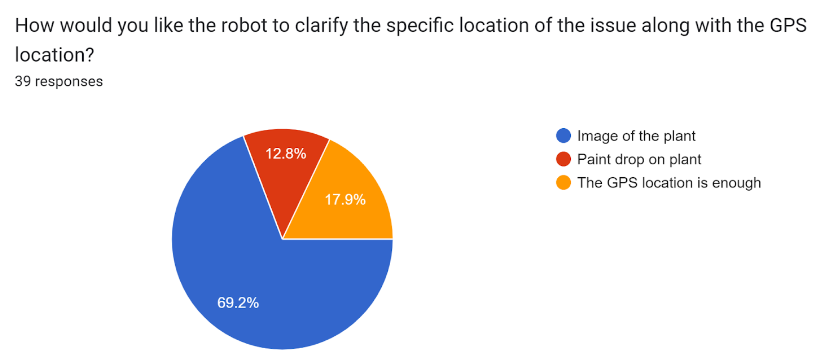

|The app shall display the location of the unhealthy plant on the map when a user clicks on a specific notification. | |||

|M | |||

|} | |||

== Users == | == Users == | ||

<u>Who are the users?</u> | <u>Who are the users?</u> | ||

The users of the product are garden-owners who need assistance in monitoring and maintaining their garden. This could be due to the fact that the users do not have | The users of the product are garden-owners who need assistance in monitoring and maintaining their garden. This could be because the users do not have the necessary knowledge to properly maintain all the different types of plants in their garden, or because they would prefer a quick and easy set of instructions on what to do with each unhealthy plant and where that plant is located. This would optimise the user's gardening routine without taking away the joy and passion that inspired the user to invest in the plants in their garden in the first place. | ||

<u>What do the users require?</u> | <u>What do the users require?</u> | ||

The users require a robot which is easy to operate and does not need unnecessary maintenance and setup. The robot should be easily controllable through a user interface that is tailored to the users needs and that displays all required information to the user in a clear and concise way. The user also requires that the robot may effectively map their garden and identify where certain | The users require a robot which is easy to operate and does not need unnecessary maintenance and setup. The robot should be easily controllable through a user interface that is tailored to the users' needs and that displays all required information to the user in a clear and concise way. The user also requires that the robot can effectively map their garden and identify where a certain plant is located. Lastly, the user requires that the robot is able to accurately describe what actions must be taken, if any are necessary, for a specific plant at a specific location in the garden. | ||

== Deliverables == | == Deliverables == | ||

* Research into AI plant detection mapping a garden and best ways of manoeuvring through it. | * Research into AI plant detection, mapping a garden and best ways of manoeuvring through it. | ||

* Research into AI identifying plant diseases and infestations. | * Research into AI identifying plant diseases and infestations. | ||

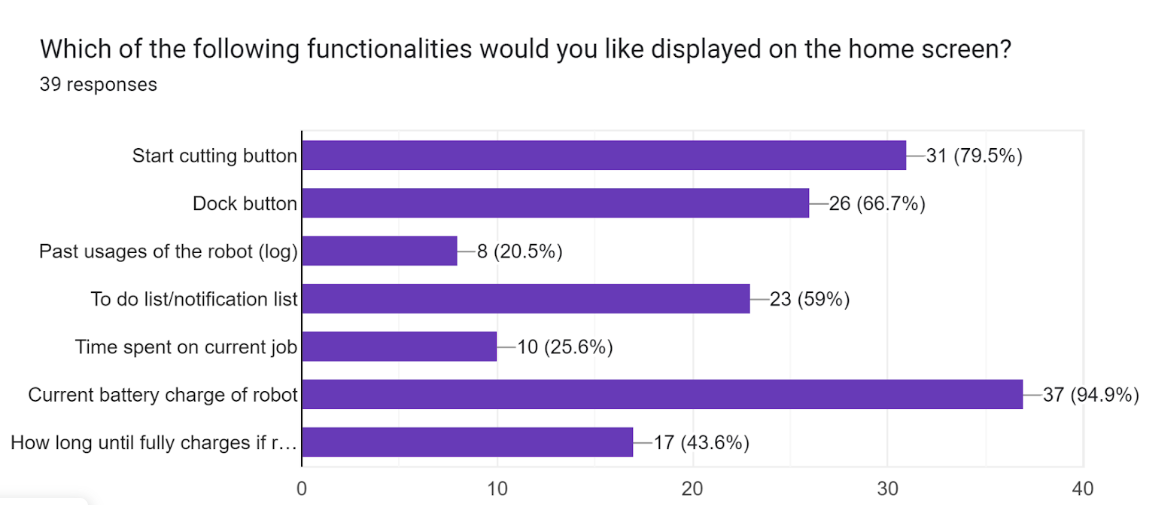

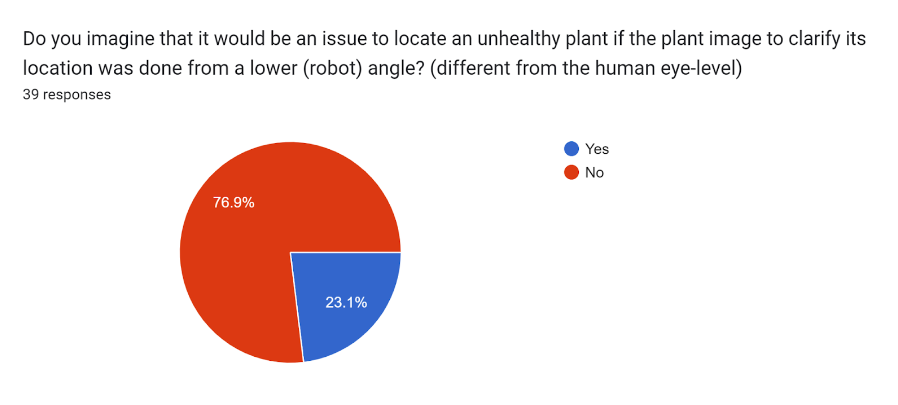

* Survey confirming | * Survey confirming and asking about further functions of the robot. | ||

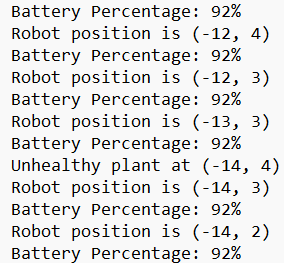

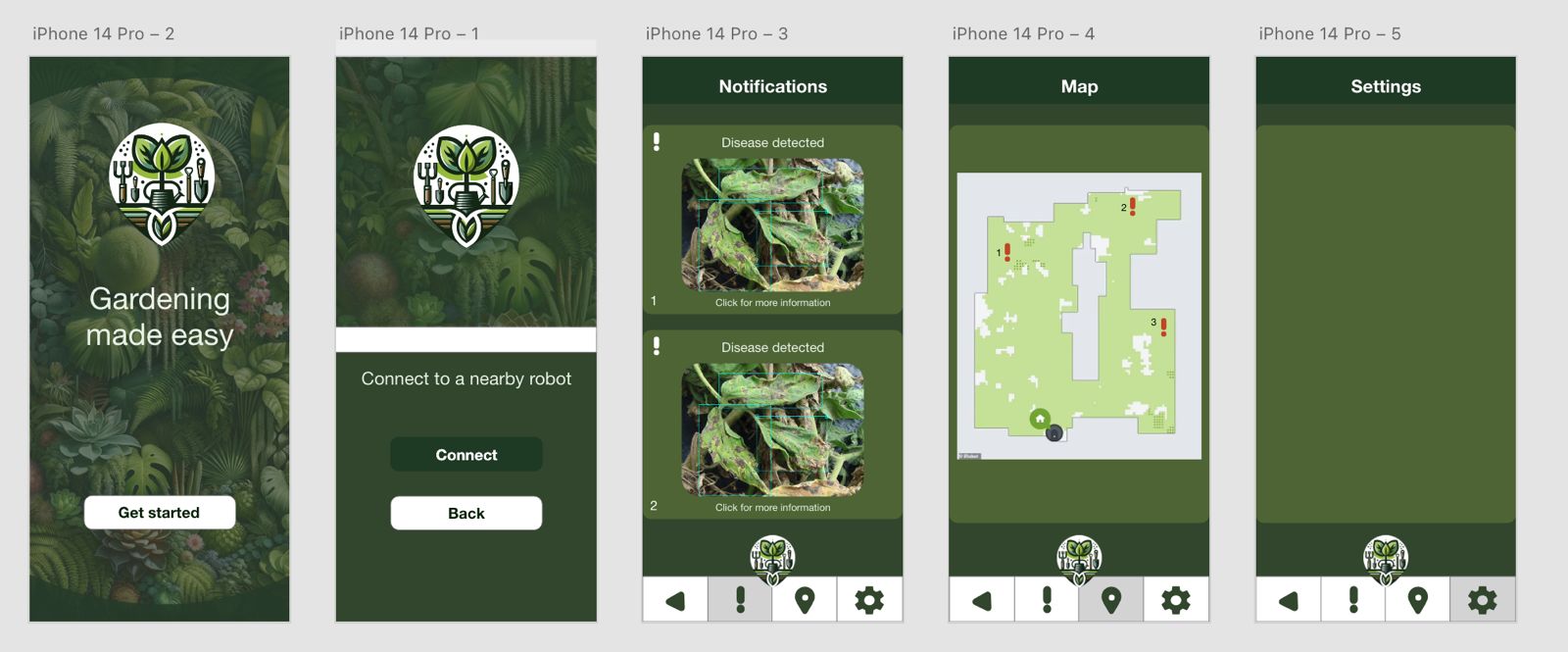

* Interactive UI of an app that will allow the user to control the robot remotely, which implements the user requirements that we will obtain from the survey. The UI will be able to be run on a phone and all of its features will be able to be accessed through a mobile application. | |||

* This wiki page which will document the progress of the group's work, decisions that have been made, and results we obtained. | * This wiki page which will document the progress of the group's work, decisions that have been made, and results we obtained. | ||

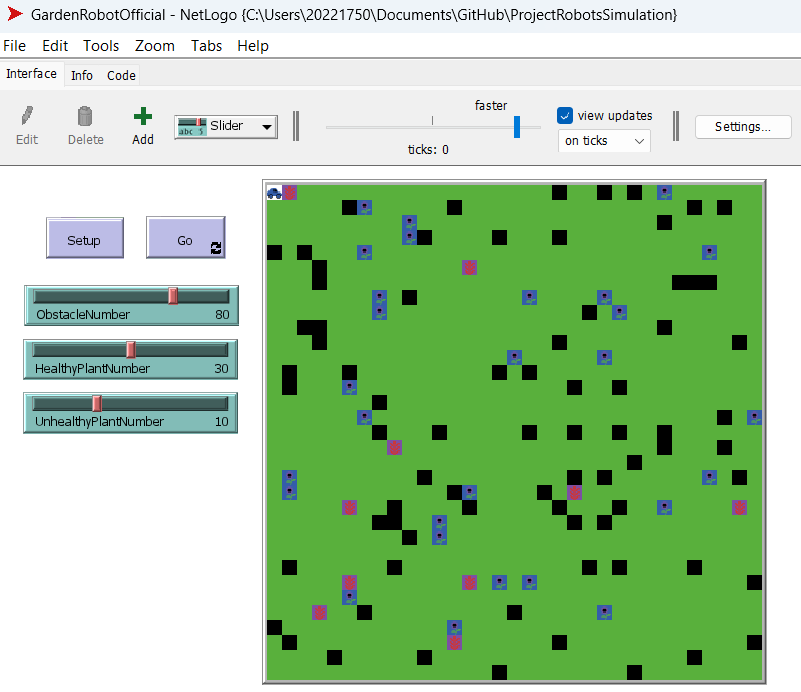

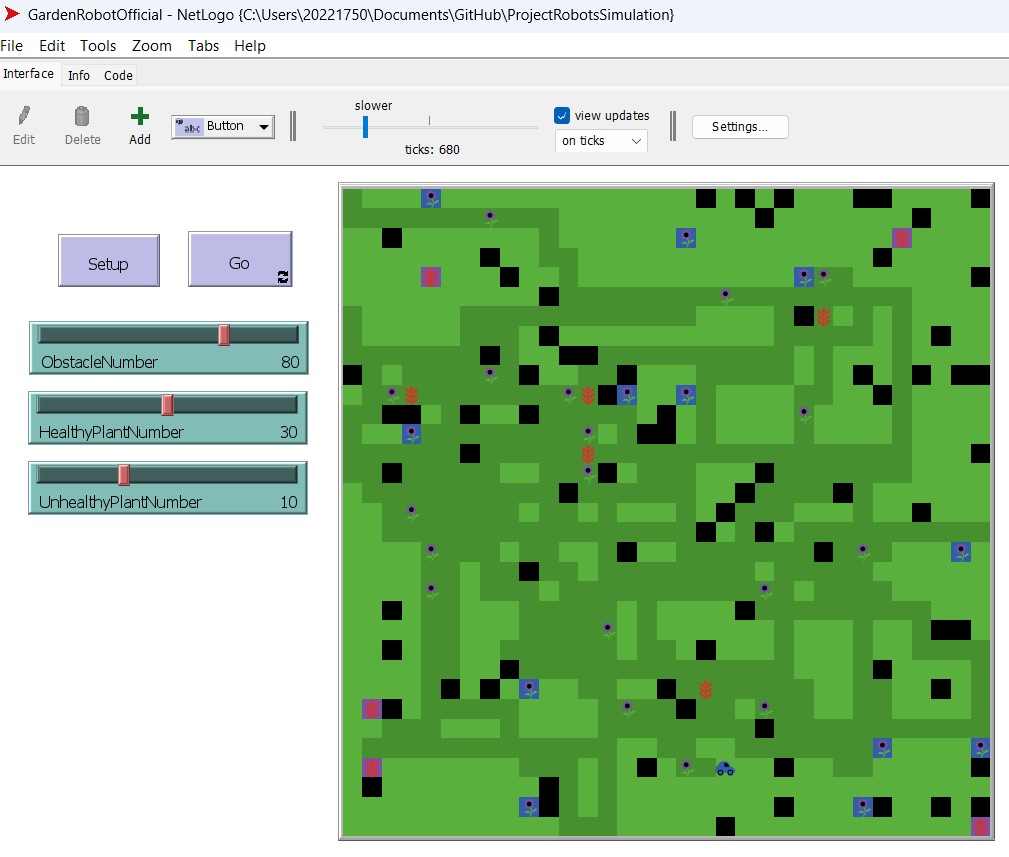

* A simulation in NetLogo that shows the operation/movement of the robot in the environment. | |||

* A trained model for recognising plant diseases. | |||

* Final design of the envisioned robot. | |||

Through these deliverables, we aim to showcase the design of our robot and the user experience. The deliverables are closely tied together. The research stands at the core of the other deliverables; in particular, it informs the training of the plant recognition model and the final design. The survey will help us design the user interface of our mobile application and confirm some of our literature findings and proposed features. The trained model will show that reliable plant detection is feasible for the designed robot, and will lay the foundation for an extensive plant disease recognition model. The simulation in NetLogo shows how the robot will navigate the field, and some of the information from this deliverable is sent to the mobile application, exactly as the robot would send it if it had already been manufactured. Finally, everything related to these deliverables and their progress is shown on this wiki page. | |||

== State of the Art == | |||

Our robot idea can be separated into multiple functionalities: automated grass cutting, disease detection in plants and an app to control the robot. The combination of all of these features in a gardening robot targeted at amateur users is currently non-existent; however, the individual features have already been implemented in more specialised robots. Therefore, it is useful to explore the current state of the art of each of these features individually, so that our final robot can build on existing technology rather than recreate it from scratch. Moreover, it allows us to identify whether a market for these technologies exists, and to understand what our target customers will prefer. | |||

=== Automated Gardening Robots === | |||

==== TrimBot2020 ==== | |||

[[File:Trimbot-3.jpg|thumb|250x250px|TrimBot2020<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fwww.mapix.com%2Fcase-studies%2Ftrimbot%2F&psig=AOvVaw1FjRTo-o8kvkexjEZ-oKAG&ust=1712865219272000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCIjxiaq2uIUDFQAAAAAdAAAAABAE R''obotics - TrimBot2020 - Mapix technologies''. (2020, March 30). Mapix Technologies. https://www.mapix.com/case-studies/trimbot/]</ref>|center]] | |||

The TrimBot2020 was the first concept for an automated gardening robot for bush trimming and rose pruning. It began as a collaboration project between multiple universities, including ETH Zurich, the University of Groningen and the University of Amsterdam. TrimBot2020 was designed to navigate garden spaces autonomously, manoeuvring around obstacles and identifying optimal paths to reach target plants, which it trimmed using a robot arm with a blade extension. | |||

==== EcoFlow Blade ==== | |||

[[File:Ecoflow.jpg|thumb|250x250px|EcoFlow BLADE Robotic Lawn Mower<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fwww.toolstop.co.uk%2Fecoflow-blade-robotic-lawn-sweeping-lawnmower%2F&psig=AOvVaw1-bKEXBXWGre7cLTrn1PrB&ust=1712865107416000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCJixiZ62uIUDFQAAAAAdAAAAABAE Toolstop. (n.d.). ''EcoFlow Blade Robotic Lawnmower | ToolsTop''. https://www.toolstop.co.uk/ecoflow-blade-robotic-lawn-sweeping-lawnmower/]</ref>|center]] | |||

Priced at nearly €2600, the EcoFlow Blade is an automated grass-trimming robot meant to reduce the time needed to maintain the user’s garden. On first use, the user directs the robot through a smartphone application, tracing the edges of their garden. This saves the user from having to add physical barriers to the garden and makes setup more straightforward. Once this is done, the robot has a map of where to cut and can work automatically. Moreover, the robot comes with x-vision technology designed to avoid obstacles in real time, which is intended to prevent damage to the robot, to objects and to people. | |||

==== Greenworks Pro Optimow 50H Robotic Lawn Mower ==== | |||

[[File:Greenworks Pro Optimov.jpg|thumb|250x250px|Greenworks Pro Optimow 50H Robotic Lawn Mower<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fwww.pcmag.com%2Freviews%2Fgreenworks-pro-optimow-50h-robotic-lawn-mower&psig=AOvVaw1kn1TCEKtiuhvpqtDdITlW&ust=1712865085351000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCNis6Oq1uIUDFQAAAAAdAAAAABAE PCMag. (2021, August 25). ''GreenWorks Pro Optimow 50H Robotic Lawn Mower Review''. PCMAG. https://www.pcmag.com/reviews/greenworks-pro-optimow-50h-robotic-lawn-mower]</ref>|center]] | |||

Priced at €1600, the Greenworks gardening robot also focuses on mowing gardens. Greenworks has made multiple versions for different garden sizes, ranging from 450 to 1500 m². The Pro Optimow’s features are integrated with Greenworks' own app, which allows the user to schedule and track the robot, as well as to specify any areas that need to be managed more carefully, such as areas that are prone to flooding. The boundaries of the garden are set with a wire, and the robot navigates the garden in random patterns, cutting small amounts at a time. | |||

==== Husqvarna Automower 435X AWD ==== | |||

[[File:Husqvarna AWD MRT19 3.jpg|thumb|Husqvarna Automower 435X AWD<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fwww.munstermanbv.nl%2Factueel%2F1619-nieuwe-husqvarna-automower-435x-awd-met-ai&psig=AOvVaw3Ofp9sTZwu9SFIphh_U2Ju&ust=1712864913895000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCLC87pm1uIUDFQAAAAAdAAAAABAE ''Nieuwe Husqvarna automower 435X AWD''. (2019, March 11). Munsterman BV. https://www.munstermanbv.nl/actueel/1619-nieuwe-husqvarna-automower-435x-awd-met-ai]</ref>|center]] | |||

Finally, the Husqvarna Automower is designed for large, hilly landscapes. It can mow up to 3500 m² of lawn and has good manoeuvrability and grip on rough and sloped terrain. This robot again has an integrated app, which works with the robot’s built-in GPS to create a virtual map of the user’s lawn. Moreover, the app allows the user to customise the robot’s behaviour in different areas, for example cutting heights and zones to avoid. The robot also uses ultrasonic sensors to detect and avoid objects, and it requires the user to set up boundary wires to mark out the garden. Lastly, it integrates with voice assistants such as Amazon Alexa and Google Home, allowing the user to command the robot easily. | |||

=== Plant (Disease) Detection Systems === | |||

==== LeafSnap ==== | |||

[[File:Leafsnap.png|center|thumb|Leafsnap App screen capture<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fleafsnap.app%2F&psig=AOvVaw1yqY2sT8lNVX2JWMp_vOnq&ust=1712864889334000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCMjehI61uIUDFQAAAAAdAAAAABAE ''Leafsnap - Plant Identifier App, top mobile app for plant identification''. (n.d.). LeafSnap - Plant Identification. https://leafsnap.app/]</ref>]] | |||

LeafSnap is an app on iOS and Android that claims to have plant identification and disease identification built in, by scanning images through the camera. They claim to have an accuracy rate of 95% at identifying the species of the plant, as well as having instructions for how to care for each specific species. Moreover, it sends reminders to the user to water, fertilise and prune their plants. LeafSnap is able to identify plants thanks to a database with more than 30000 species. | |||

==== PlantMD ==== | |||

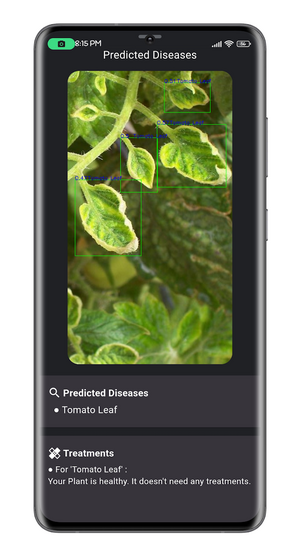

[[File:Plantmd.webp|center|thumb|PlantMD screen capture<ref>[https://play.google.com/store/apps/details?id=com.plant_md.plant_md&hl=kr ''Plant Medic - PlantMD - apps on Google Play''. (n.d.). https://play.google.com/store/apps/details?id=com.plant_md.plant_md&hl=kr]</ref>]] | |||

PlantMD is an application that employs machine learning to detect plant diseases. More specifically, it uses TensorFlow, an open-source software library for machine learning developed by Google, focused on neural networks. The development of PlantMD was inspired by PlantVillage, a project from Penn State University that provides a plant disease dataset and created Nuru, an app aimed at helping farmers improve cassava cultivation in Africa. | |||

==== Agrio ==== | |||

[[File:Agrio.jpg|center|thumb|Agrio app screen capture<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fagrio.app%2F&psig=AOvVaw3v6f3fPTEgKqT14llFNlJ-&ust=1712864789556000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCKiDrt20uIUDFQAAAAAdAAAAABAE Agrio. (2023, November 27). ''Agrio | Protect your crops''. https://agrio.app/]</ref>]] | |||

Agrio allows farmers to utilise machine learning algorithms for diagnosing crop issues and determining treatment needs. Users can snap photos of their plants to receive diagnosis and treatment recommendations. Additionally, the app features AI algorithms capable of rapid learning to identify new diseases and pests in various crops, enabling less experienced workers to actively participate in plant protection efforts. Geotagged images help predict future problems, while supervisors can build image libraries for comparison and diagnosis. Users can edit treatment recommendations and add specific agriculture input products tailored to crop type, pathology, and geographic location. Treatment outcomes are monitored using remote sensing data, including multispectral imaging for various resolutions and visit frequencies. The app provides hyper-local weather forecasts, crucial for predicting insect migration, egg hatching, fungal spore development, and more. Inspectors can upload images during field inspections, with algorithms providing alerts before symptoms are visible. | |||

=== Inspection Robots in Agriculture === | |||

==== Tortuga AgTech<ref>''Tortuga AgTech''. (n.d.). Tortuga AgTech. <nowiki>https://www.tortugaagtech.com/</nowiki></ref> ==== | |||

[[File:Tortuga AgTech Robot.jpg|center|thumb|Tortuga Harvesting Robot picking strawberries.<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fsummerberry.co.uk%2Fnews%2Fextended-partnership-between-the-summer-berry-company-and-tortuga-agtech-a-robotics-harvesting-company%2F&psig=AOvVaw3KyBnHjxl6CRNeUwIhEkQC&ust=1712864763164000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCJCyyNC0uIUDFQAAAAAdAAAAABAE Westlake, T. (2023, October 11). ''Extended Partnership between The Summer Berry Company and Tortuga Agtech, a robotics harvesting company''. The Summer Berry Company. https://summerberry.co.uk/news/extended-partnership-between-the-summer-berry-company-and-tortuga-agtech-a-robotics-harvesting-company/]</ref>]] | |||

Winner of the Agricultural Robot of the Year 2024 award, Tortuga AgTech revolutionised the field of automated harvesting robots. Tortuga's harvesting robots are autonomous machines designed for harvesting strawberries and grapes, using two robotic arms that “identify, pick and handle fruit gently”. To do this, each arm has a camera at its end, and the AI algorithms identify the stem of the fruit and command the arm's two fingers to remove the fruit from the stem. Moreover, the AI has the ability to “differentiate between ripe and unripe fruit”, to ensure that fruit is picked only when it should be. After picking a fruit, the robot places it in one of the many containers in its body, which allows it to pick “tens of thousands of berries every day”. | |||

==== VegeBot<ref>''Robot uses machine learning to harvest lettuce''. (2019, July 8). University of Cambridge. <nowiki>https://www.cam.ac.uk/research/news/robot-uses-machine-learning-to-harvest-lettuce</nowiki></ref> ==== | |||

[[File:Vegebot.jpg|center|thumb|Vegebot Robot, from Cambridge University<ref>[https://www.google.com/url?sa=i&url=https%3A%2F%2Fwww.agritechfuture.com%2Frobotics-automation%2Frobot-uses-machine-learning-to-harvest-lettuce%2F&psig=AOvVaw15CSRsHvUrOZh5igAAKe2Y&ust=1712864730684000&source=images&cd=vfe&opi=89978449&ved=0CBIQjRxqFwoTCNDpocK0uIUDFQAAAAAdAAAAABAE ''Robot uses machine learning to harvest lettuce | Agritech Future''. (2021, July 1). Agritech Future. https://www.agritechfuture.com/robotics-automation/robot-uses-machine-learning-to-harvest-lettuce/]</ref>]] | |||

Designed at the University of Cambridge, the VegeBot is a robot made for harvesting iceberg lettuce, a crop that is particularly difficult to harvest with robots because it is fragile and grows “relatively flat to the ground”. This makes a harvesting robot more likely to damage the soil or other lettuces in its surroundings. The VegeBot has a built-in camera, which is used to identify the iceberg lettuce and to check its condition, including its maturity and health. From there, its machine learning algorithm decides whether to harvest the lettuce and, if so, the robot cuts it off the ground, gently picks it up and places it on its body. | |||

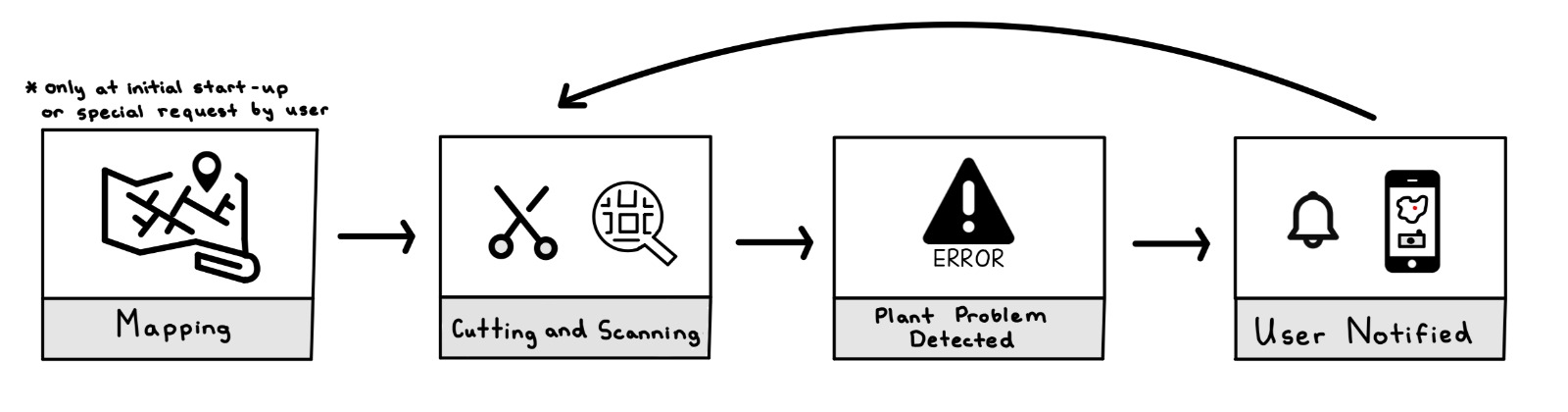

== Regular Robot Operation == | == Regular Robot Operation == | ||

As with any piece of technology it is important that the users are aware of its proper operation method and how the robot functions | As with any piece of technology, it is important that users are aware of its proper operation and how the robot functions in general terms. Upon the robot's first use in a new garden, or when the garden owner has changed the garden layout, the mapping process must be started in the app. The result is a 2D map of the garden, which later allows the robot to traverse the entire garden efficiently during regular operation without leaving any part unvisited. To better understand this feature, one can compare it to the iRobot Roomba. After the initial setup phase has been completed, the robot can begin its normal operation. During normal operation, the robot leaves its charging station and traverses the garden, cutting grass while its camera scans the plants in its surroundings. Whenever the robot detects an irregularity in one of the plants, it notifies the user through the app by sending a picture of the affected plant together with its location on the map of the garden. The user can then navigate in the app to view all plants in their garden that need attention. This means that the user not only has a well-kept lawn but is also aware of all unhealthy plants, which helps keep the garden in good condition. | ||

[[File: | [[File:Operation Robot.jpg|center|thumb|748x748px|Regular Operation of the Robot]] | ||
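The regular operation described above (sweep the mapped garden, inspect nearby plants, report unhealthy ones with their map location) can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions, not the project's implementation: the grid encoding (<code>.</code> for grass, <code>#</code> for an obstacle, <code>P</code> for a plant), the names <code>coverage_order</code>, <code>run_pass</code> and <code>DiseaseAlert</code>, and the <code>is_diseased</code> callback standing in for the trained model are all hypothetical. The sweep only produces a visiting order; a real robot would additionally need a path planner to move between cells around obstacles.

```python
from dataclasses import dataclass

# Hypothetical cell encoding for the 2D garden map (an assumption for this sketch).
GRASS, OBSTACLE, PLANT = ".", "#", "P"


@dataclass(frozen=True)
class DiseaseAlert:
    """Map location of a plant that the app should show to the user."""
    row: int
    col: int


def coverage_order(grid):
    """Return every grass cell, sweeping rows in alternating directions."""
    order = []
    for r, row in enumerate(grid):
        cols = range(len(row))
        if r % 2 == 1:
            cols = reversed(cols)  # odd rows are swept right to left
        for c in cols:
            if row[c] == GRASS:
                order.append((r, c))
    return order


def run_pass(grid, is_diseased):
    """Visit all grass cells and inspect each neighbouring plant once."""
    alerts = []
    inspected = set()
    for r, c in coverage_order(grid):
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            inside = 0 <= nr < len(grid) and 0 <= nc < len(grid[nr])
            if not inside or grid[nr][nc] != PLANT or (nr, nc) in inspected:
                continue
            inspected.add((nr, nc))
            # is_diseased stands in for the plant disease recognition model.
            if is_diseased((nr, nc)):
                alerts.append(DiseaseAlert(nr, nc))
    return alerts
```

For example, <code>run_pass(["..P", ".#.", "..."], lambda cell: cell == (0, 2))</code> reports a single alert for the plant at row 0, column 2, even though the robot passes next to that plant twice.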

== Maneuvering | == Maneuvering == | ||

=== Movement === | === Movement === | ||

One of the most important design decisions when creating a robot or machine with some form of mobility is deciding what mechanism the robot will use to traverse its operational environment. This decision is not always easy as many options exist which have their unique pros and cons. Therefore is is important to consider the pros and cons of all methods and then decide which method is most appropriate for a given scenario. In the following section I will explore these different methods and see which are expected to | One of the most important design decisions when creating a robot or machine with some form of mobility is deciding what mechanism the robot will use to traverse its operational environment. This decision is not always easy, as many options exist, each with its own pros and cons. Therefore, it is important to consider the pros and cons of all methods and then decide which method is most appropriate for a given scenario. In the following section I will explore these different methods and see which are expected to work best in the task environment our robot will be required to function in. | ||

==== Wheeled Robots ==== | ==== Wheeled Robots ==== | ||

It may be no surprise that the most popular method for movement within the robot industry is still a robot with circular wheels. This is due to the fact that robots with wheels are simply much easier to design and model<ref>[https://www.robotplatform.com/knowledge/Classification_of_Robots/legged_robots.html#:~:text=First%20and%20foremost%20reason%20is,%2C%20orientation%2C%20efficiency%20and%20speed. https://www.robotplatform.com/knowledge/Classification_of_Robots/legged_robots.html | It may be no surprise that the most popular method for movement within the robot industry is still a robot with circular wheels. This is due to the fact that robots with wheels are simply much easier to design and model<ref>[https://www.robotplatform.com/knowledge/Classification_of_Robots/legged_robots.html#:~:text=First%20and%20foremost%20reason%20is,%2C%20orientation%2C%20efficiency%20and%20speed. ''Robot Platform | Knowledge | Wheeled Robots''. (n.d.). https://www.robotplatform.com/knowledge/Classification_of_Robots/legged_robots.html.]</ref>. They do not require a complex mechanism for flexing or rotating an actuator but can be fully functional by simply rotating a motor in one of two directions. Essentially they allow the engineer to focus on the main functionality of the robot without having to worry about the many complexities that could arise with other movement mechanisms when that is not necessary. Wheeled robots are also convenient in design as they rarely take up a lot of space in the robot. Furthermore, as stated by Zedde and Yao from the University of Wageningen, these types of robots are most often used in industry due to their simple operation and simple design<ref>[https://edepot.wur.nl/575608 Zedde, R., & Yao, L. (2022). Field robots for plant phenotyping. In Burleigh Dodds Science Publishing Limited & A. Walter (Eds.), ''Advances in plant phenotyping for more sustainable crop production''. https://doi.org/10.19103/AS.2022.0102.08]</ref>. 
Although wheeled robots may seem like a single simple category, there are a few subcategories of this movement mechanism that are important to distinguish, as each has its own benefits and issues. | ||

===== Differential Drive ===== | ===== Differential Drive ===== | ||

[[File:Differential Drive Demo.png|thumb|Differential Drive Robot Functionality]] | [[File:Differential Drive Demo.png|thumb|Differential Drive Robot Functionality<ref>Elsayed, M. (2017, June). ''Differential Drive wheeled Mobile Robot''. ResearchGate. <nowiki>https://www.researchgate.net/figure/Differential-Drive-wheeled-Mobile-Robot-reference-frame-is-symbolized-as_fig1_317612157</nowiki></ref>]] | ||

Differential drive focuses on independent rotation of all wheels on the robot. Essentially one could say that each wheel has its own functionality and operates independently of the other wheels present on the robot. Although rotation is independent it is important to note that all wheels on the robot work as one unit to optimize turning and movement. The robot does this by varying the relative speed of rotation of its wheels which allow the robot to move in any direction without an additional steering mechanism<ref>https://search.worldcat.org/title/971588275</ref>. In order to better illustrate this idea consider the following scenario - suppose a robot wants to turn sharp left, the left wheels would become idle and the right wheel would rotate at maximum speed. As can be seen both wheels are rotating independently but are doing so to reach the same movement goal. | Differential drive relies on independent rotation of the wheels on the robot: each wheel is driven separately from the others. Although rotation is independent, all wheels work as one unit to optimize turning and movement. The robot does this by varying the relative rotation speed of its wheels, which allows it to move in any direction without an additional steering mechanism<ref>[https://search.worldcat.org/title/971588275 ''Wheeled mobile robotics : from fundamentals towards autonomous systems | WorldCat.org''. (2017). https://search.worldcat.org/title/971588275]</ref>. To illustrate this idea, suppose a robot wants to turn sharply left: the left wheels would become idle and the right wheels would rotate at maximum speed. The wheels rotate independently but do so to reach the same movement goal. | ||
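The relationship between wheel speeds and robot motion described above can be illustrated with the standard differential-drive kinematic model. The Python snippet below is a minimal sketch rather than the project's implementation; the function names, the <code>track_width</code> parameter (distance between the left and right wheels) and the simple Euler integration step are assumptions introduced for illustration.

```python
import math


def body_velocity(v_left, v_right, track_width):
    """Convert wheel ground speeds (m/s) to forward speed and turn rate.

    A positive turn rate means a counter-clockwise (left) turn.
    """
    v = (v_right + v_left) / 2.0              # forward speed of the robot centre
    omega = (v_right - v_left) / track_width  # angular velocity in rad/s
    return v, omega


def step(pose, v_left, v_right, track_width, dt):
    """Advance the pose (x, y, heading) by one small time step dt."""
    x, y, theta = pose
    v, omega = body_velocity(v_left, v_right, track_width)
    return (
        x + v * math.cos(theta) * dt,
        y + v * math.sin(theta) * dt,
        theta + omega * dt,
    )
```

With the left wheels idle and the right wheels driven, as in the sharp left turn above, <code>body_velocity(0.0, 1.0, 0.5)</code> gives a positive turn rate, while equal wheel speeds give a turn rate of zero and the robot drives straight. The same model also shows why wheel skidding is a problem: the pose estimate assumes that the commanded wheel speeds equal the actual ground speeds.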

{| class="wikitable" | {| class="wikitable" | ||

|+Differential Drive | |+Differential Drive | ||

| Line 114: | Line 195: | ||

|- | |- | ||

|Easy to design | |Easy to design | ||

|Difficulty | |Difficulty with straight line motion on uneven terrains | ||

|- | |- | ||

|Cost-effective | |Cost-effective | ||

|Wheel skidding can completely mess up algorithm and confuse the robot of its location | |Wheel skidding can completely mess up algorithm and confuse the robot of its location | ||

|- | |- | ||

|Easy | |Easy manoeuvrability | ||

|Sensitive to weight distribution - big issue with moving water in container | |Sensitive to weight distribution - big issue with moving water in container | ||

|- | |- | ||

| Line 130: | Line 211: | ||

===== Omni Directional Wheels ===== | ===== Omni Directional Wheels ===== | ||

[[File:Triple Rotacaster commercial industrial omni wheel.jpg|thumb|Omni Wheel produced by Rotacaster]] | [[File:Triple Rotacaster commercial industrial omni wheel.jpg|thumb|Omni Wheel produced by Rotacaster<ref>''Omni wheel''. (2020, March 8). Wikipedia. <nowiki>https://en.wikipedia.org/wiki/Omni_wheel</nowiki></ref>]] | ||

Omni-directional wheels are a specialized type of wheel designed with rollers or casters set at angles around their circumference. This specific configuration allows a robot which has these wheels to easily move in any direction, whether this is lateral, diagonal, or rotational motion<ref>https://gtfrobots.com/what-is-omni-wheel/</ref>. By allowing each wheel to rotate independently and move at any angle, these wheels provide great agility and precision, which makes this method ideal for applications which require navigation and precise positioning. The main difference between this method and differential drive is the fact that omni directional wheels are able to move in any direction easily and do not require turning of the whole robot when that is not necessary due to their specially designed roller on each wheel. | Omni-directional wheels are a specialized type of wheel designed with rollers or casters set at angles around their circumference. This specific configuration allows a robot which has these wheels to easily move in any direction, whether this is lateral, diagonal, or rotational motion<ref>[https://gtfrobots.com/what-is-omni-wheel/ Admin, G. (2023, August 10). ''What is Omni Wheel and How Does it Work? - GTFRobots | Online Robot Wheels Shop''. GTFRobots | Online Robot Wheels Shop. https://gtfrobots.com/what-is-omni-wheel/]</ref>. By allowing each wheel to rotate independently and move at any angle, these wheels provide great agility and precision, which makes this method ideal for applications which require navigation and precise positioning. The main difference between this method and differential drive is the fact that omni directional wheels are able to move in any direction easily and do not require turning of the whole robot when that is not necessary due to their specially designed roller on each wheel. | ||

{| class="wikitable" | {| class="wikitable" | ||

|+Omni Directional Wheels | |+Omni Directional Wheels | ||

| Line 140: | Line 221: | ||

|Complex design and implementation | |Complex design and implementation | ||

|- | |- | ||

|Superior | |Superior manoeuvrability in any direction | ||

|Limited load-bearing capacity | |Limited load-bearing capacity | ||

|- | |- | ||

| Line 154: | Line 235: | ||

==== Legged Robots ==== | ==== Legged Robots ==== | ||

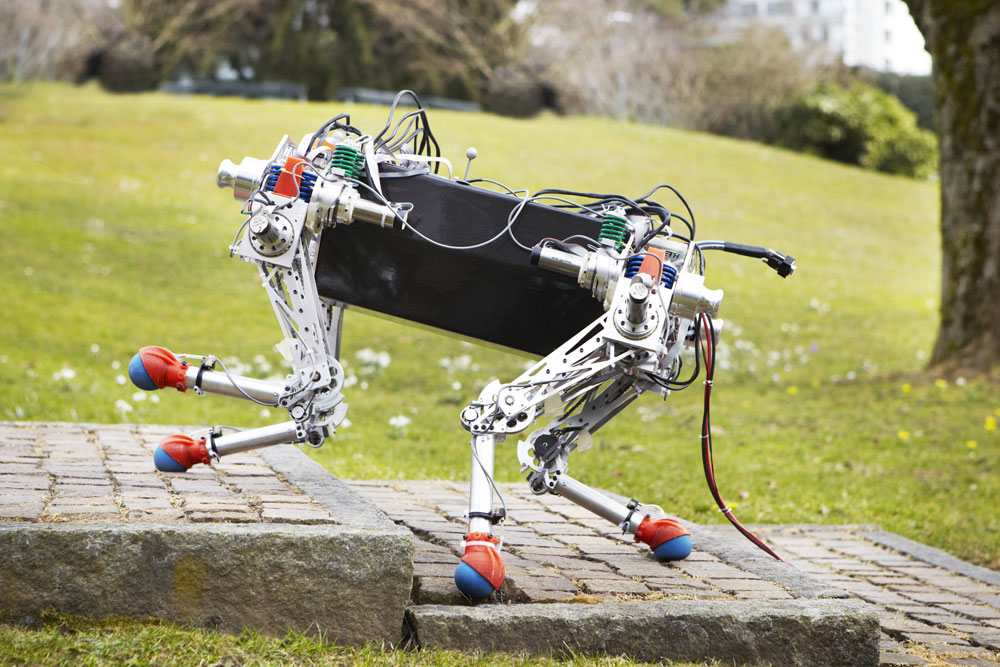

[[File:Starleth quadruped robot .jpg|thumb|Legged robot traversing a terrain]] | [[File:Starleth quadruped robot .jpg|thumb|Legged robot traversing a terrain<ref>''Four-legged robot that efficiently handles challenging terrain - Robohub''. (n.d.). Robohub.org. <nowiki>https://robohub.org/four-legged-robot-that-efficiently-handles-challenging-terrain/</nowiki></ref>]] | ||

Over millions of year organisms have evolved in thousands of different ways, giving rise to many different methods of brain functioning, how an organisms perceives the world and what is important in our current discussion, movement. It is no coincidence that many land animals have evolved to have some form of legs to traverse their habitats, it is simply a very effective method which allows a lot of versatility and adaptability to any obstacle or problem an animal might face<ref>[https://www.scientificamerican.com/article/how-fins-became-limbs/#:~:text=Four%2Dlegged%20creatures%20may%20have,ditching%20genes%20guiding%20fin%20development.&text=The%20loss%20of%20genes%20that,vertebrates%2C%20according%20to%20a%20study. https://www.scientificamerican.com/article/how-fins-became-limbs/ | Over millions of years, organisms have evolved in thousands of different ways, giving rise to many different methods of brain functioning, of perceiving the world and, most importantly for our current discussion, of movement. It is no coincidence that many land animals have evolved some form of legs to traverse their habitats; it is simply a very effective method which allows a lot of versatility and adaptability to any obstacle or problem an animal might face<ref>[https://www.scientificamerican.com/article/how-fins-became-limbs/#:~:text=Four%2Dlegged%20creatures%20may%20have,ditching%20genes%20guiding%20fin%20development.&text=The%20loss%20of%20genes%20that,vertebrates%2C%20according%20to%20a%20study. ''How fins became limbs''. (2024, February 20). Scientific American. https://www.scientificamerican.com/article/how-fins-became-limbs/.]</ref>. This is no different for legged robots: legs provide superior functionality to many other movement mechanisms because they are able to rotate and operate freely about all axes. 
However, this great mobility comes at the cost of a very difficult design, one that top institutions and companies struggle with to this day<ref>[https://ietresearch.onlinelibrary.wiley.com/doi/full/10.1049/csy2.12075 Zhu, Q., Song, R., Wu, J., Masaki, Y., & Yu, Z. (2022). Advances in legged robots control, perception and learning. ''IET Cyber-Systems and Robotics'', ''4''(4), 265–267. https://doi.org/10.1049/csy2.12075]</ref>. | ||

{| class="wikitable" | {| class="wikitable" | ||

|+Legged Robots | |+Legged Robots | ||

| Line 178: | Line 259: | ||

==== Tracked Robots ==== | ==== Tracked Robots ==== | ||

[[File:Autonomous-mobile-robots-1024x683.jpg|thumb|Tracked robots used for navigating rough terrain]] | [[File:Autonomous-mobile-robots-1024x683.jpg|thumb|Tracked robots used for navigating rough terrain<ref>Amphibious Tracked Vehicles | Autonomous Military Robots & Crawlers (defenseadvancement.com)</ref>]] | ||

Tracked robots, which can be characterized by their continuous track systems, offer a dependable method of traversing a terrain that can be found in applications across various industries. The continuous tracks, consisting of connected links, are looped around wheels or sprockets, providing a continuous band that allows for effective and reliable movement on many different surfaces, terrains and obstacles<ref>https://www.robotplatform.com/knowledge/Classification_of_Robots/tracked_robots.html</ref>. It is therefore no surprise that their most well known usages include vehicles which operate in uneven and unpredictable, such as tanks. Since tracks are flexible it is even common that such robots can simply avoid small obstacles by driving over them without experiencing any issues. This is particularly favorable for the robot we are designing as naturally gardens are never perfectly flat surfaces often littered by many | Tracked robots, which can be characterized by their continuous track systems, offer a dependable method of traversing terrain and can be found in applications across various industries. The continuous tracks, consisting of connected links, are looped around wheels or sprockets, providing a continuous band that allows for effective and reliable movement on many different surfaces, terrains and obstacles<ref>[https://www.robotplatform.com/knowledge/Classification_of_Robots/tracked_robots.html ''Robot Platform | Knowledge | Tracked Robots''. (n.d.). Www.robotplatform.com. Retrieved March 9, 2024, from https://www.robotplatform.com/knowledge/Classification_of_Robots/tracked_robots.html]</ref>. It is therefore no surprise that their best-known uses include vehicles which operate on uneven and unpredictable terrain, such as tanks. Since tracks are flexible, such robots can often simply drive over small obstacles without experiencing any issues. 
This is particularly favorable for the robot we are designing, as gardens are never perfectly flat and are often littered with natural obstacles such as stones, dents in the surface, or branches that have fallen to the ground in strong wind. | ||

{| class="wikitable" | {| class="wikitable" | ||

|+Tracked Robots | |+Tracked Robots | ||

| Line 189: | Line 270: | ||

|- | |- | ||

|Effective Traction | |Effective Traction | ||

|Limited | |Limited Manoeuvrability | ||

|- | |- | ||

|Versatility in Terrain | |Versatility in Terrain | ||

| Line 205: | Line 286: | ||

==== Hovering/Flying Robots ==== | ==== Hovering/Flying Robots ==== | ||

[[File:Cover-Story-Agriculture2.jpg|thumb|Flying robot in action]] | [[File:Cover-Story-Agriculture2.jpg|thumb|Flying robot in action<ref>Chuchra, J. (2016b, October 7). ''Drones and Robots: Revolutionizing the Future of Agriculture''. Geospatial World. <nowiki>https://www.geospatialworld.net/article/drones-and-robots-future-agriculture/</nowiki></ref>]] | ||

Hovering/Flying robots provide without a doubt the most unique way of movement from the previously listed. This method unlocks a whole new wide range of possibilities as the robot no longer has to consider on-ground obstacles; whether that is rocks or uneven terrain. The robot is able to view and monitor a very large terrain from one position due to its ability to position itself at a high altitude and quickly detect major problems in a very large area. This method also unlocks the possibility of the robot to optimize its movement distance as it is able to move from point A to point B directly in a straight line saving energy and time. However, as is the case with any solution, flying/hovering has its major problems. It is by far the most expensive method, as flying apparatus is far more costly and high maintenance than any other solution. This makes this unreliable and likely a method far out of the technological needs and requirements of our gardening robot. Furthermore, its operation is best in large open fields which perfectly suits the large farms of the agriculture industry, however, this is not the aim of the robot we are designing. Most private gardens are of a small size meaning its main strength could not be used. Additionally, it is likely that a robot which has aerial abilities would find difficulty in maneuvering through the tight spaces of a private garden and would have to avoid many low hanging branches or pushes ultimately making its operation unsafe. | Hovering/Flying robots provide, without a doubt, the most unique way of movement from the previously listed. This method unlocks a whole new wide range of possibilities as the robot no longer has to consider on-ground obstacles; whether that is rocks or uneven terrain. The robot is able to view and monitor a very large terrain from one position due to its ability to position itself at a high altitude and quickly detect major problems in a very large area. 
This method also lets the robot minimize its travel distance, as it can move from point A to point B in a straight line, saving energy and time. However, as is the case with any solution, flying/hovering has major problems. It is by far the most expensive method, as flying apparatus is far more costly and maintenance-intensive than any other solution. This makes it unreliable and likely far beyond the technological needs and requirements of our gardening robot. Furthermore, it operates best in large open fields, which suits the large farms of the agriculture industry perfectly; however, this is not the aim of the robot we are designing. Most private gardens are small, meaning its main strength could not be used. Additionally, a robot with aerial abilities would likely have difficulty maneuvering through the tight spaces of a private garden and would have to avoid many low-hanging branches or bushes, ultimately making its operation unsafe. | ||

{| class="wikitable" | {| class="wikitable" | ||

|+Hovering/Flying Robots | |+Hovering/Flying Robots | ||

| Line 232: | Line 313: | ||

=== Sensors Required For Navigation, Movement and Positioning === | === Sensors Required For Navigation, Movement and Positioning === | ||

Sensors are a fundamental component of any robot that is required to interact with its environment, as they aim to replicate our sensory organs which allow us to perceive and better understand the environment around us<ref>https://www.wevolver.com/article/sensors-in-robotics-the-common-types</ref>. However, unlike with living organisms, engineers are given the choice to decide what exact sensors their robot needs and must be careful with this decision in order to pick the sufficient options to be able to allow the robot to have its full functionality without picking any redundant options that will make the robot unnecessarily expensive. This decision is often based on researching and considering all possible sensors that are available on the market which are related to the problem the engineer is trying to solve and selecting the one which | Sensors are a fundamental component of any robot that is required to interact with its environment, as they aim to replicate our sensory organs which allow us to perceive and better understand the environment around us<ref>[https://www.wevolver.com/article/sensors-in-robotics-the-common-types Ayodele, A. (2023, January 16). ''Types of Sensors in Robotics''. Wevolver. https://www.wevolver.com/article/sensors-in-robotics-the-common-types]</ref>. However, unlike with living organisms, engineers are given the choice to decide what exact sensors their robot needs and must be careful with this decision in order to pick the sufficient options to be able to allow the robot to have its full functionality without picking any redundant options that will make the robot unnecessarily expensive. 
This decision is often based on researching and considering all possible sensors available on the market that are related to the problem the engineer is trying to solve, and selecting the one which fulfils the requirements of the robot most accurately<ref>[https://www.sciencedirect.com/science/article/abs/pii/S0079642500000116#:~:text=The%20selection%20of%20an%20appropriate,as%20cost%2C%20and%20impedance%20matching. Shieh, J., Huber, J. E., Fleck, N. A., & Ashby, M. F. (2001). The selection of sensors. ''Progress in Materials Science'', ''46''(3-4), 461–504. https://doi.org/10.1016/s0079-6425(00)00011-6]</ref>. In this section we will specifically be looking into sensors which will aid our robot in traversing its environment, a garden. This means that the sensors we select must be able to work in environments where the lighting level is constantly changing, and must tolerate possible erroneous inputs due to high winds and/or uneven terrain. Additionally, it is important to note that, unlike in the previous section, one type of sensor/system is rarely sufficient to fulfil the requirements; most robots must implement some form of sensor fusion in order to operate appropriately, and our robot is no different<ref>[https://www.sciencedirect.com/topics/engineering/sensor-fusion#:~:text=Sensor%20fusion%20is%20the%20process,navigate%20and%20behave%20more%20successfully. Gupta, S., & Snigdh, I. (2022). Multi-sensor fusion in autonomous heavy vehicles. In ''Elsevier eBooks'' (pp. 375–389). https://doi.org/10.1016/b978-0-323-90592-3.00021-5]</ref>. | ||

==== LIDAR sensors ==== | ==== LIDAR sensors ==== | ||

[[File:LIDAR.jpg|thumb|337x337px|Lidar Sensor in automotive industry]] | [[File:LIDAR.jpg|thumb|337x337px|Lidar Sensor in automotive industry<ref>''The Lasers Used in Self-Driving Cars''. (2018, July 30). AZoM.com. <nowiki>https://www.azom.com/article.aspx?ArticleID=16424</nowiki></ref>]] | ||

LIDAR stands for Light Detection and Ranging. These types of sensors allow robots which utilize them to effectively navigate the environment they are placed in as they provide the robot with object perception, object identification and collision avoidance<ref>[https://www.mapix.com/lidar-applications/lidar-robotics/#:~:text=LiDAR%20(Light%20Detection%20and%20Ranging,doors%2C%20people%20and%20other%20objects. https://www.mapix.com/lidar-applications/lidar-robotics/ | LIDAR stands for Light Detection and Ranging. These types of sensors allow robots which utilize them to effectively navigate the environment they are placed in as they provide the robot with object perception, object identification and collision avoidance<ref>[https://www.mapix.com/lidar-applications/lidar-robotics/#:~:text=LiDAR%20(Light%20Detection%20and%20Ranging,doors%2C%20people%20and%20other%20objects. ''LiDAR sensors for robotic Systems | Mapix Technologies''. (2022, December 23). Mapix Technologies. https://www.mapix.com/lidar-applications/lidar-robotics/.]</ref>. These sensors function through sending lasers into the environment and then calculating how long it takes the signals they send to return back to the receiver to determine the distance to the nearest objects and their shapes. As can be seen, LIDAR’s provide robots with a vast amount of crucial information and even allow them to see the world in a 3D perspective. This means that not only are robots able to see their closest object, but whenever faced with an obstacle they can instantaneously derive possible methods of avoidance and to traverse around it<ref>Shan, J., & Toth, C. K. (2018). ''Topographic Laser Ranging and Scanning''. CRC Press.</ref>. | ||
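The time-of-flight principle described above can be sketched in a few lines. This is an illustrative example under stated assumptions rather than the interface of any particular LIDAR: the function names `tof_distance` and `scan_to_points`, and the idea that a scan arrives as a list of ranges at evenly spaced angles, are hypothetical.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def tof_distance(round_trip_s):
    """Distance to the target from the laser pulse's round-trip time.

    The pulse travels to the object and back, hence the division by two.
    """
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def scan_to_points(ranges, angle_min, angle_step):
    """Convert one planar scan (range per beam angle) to (x, y) points
    in the sensor's own coordinate frame."""
    points = []
    for i, r in enumerate(ranges):
        angle = angle_min + i * angle_step
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points


# Two hypothetical beams: one straight ahead, one 90 degrees to the left.
points = scan_to_points([1.0, 2.0], angle_min=0.0, angle_step=math.pi / 2)
```

Because light covers roughly 30 cm per nanosecond, the timing electronics must resolve extremely short intervals, which is one reason these sensors cost more than simpler alternatives.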

LIDAR’s are often the preferred option by engineers in robots that operate outdoors as they are minimally influenced by weather conditions<ref>[https://intapi.sciendo.com/pdf/10.2478/agriceng-2023-0009#:~:text=The%20LiDAR%20sensor%20allows%20the,which%20allows%20clustering%20and%20positioning. https:// | LIDARs are often the option preferred by engineers for robots that operate outdoors, as they are minimally influenced by weather conditions<ref>[https://intapi.sciendo.com/pdf/10.2478/agriceng-2023-0009#:~:text=The%20LiDAR%20sensor%20allows%20the,which%20allows%20clustering%20and%20positioning. Hutsol, T., Kutyrev, A., Kiktev, N., & Biliuk, M. (2023). Robotic Technologies in Horticulture: Analysis and Implementation Prospects. ''Inżynieria Rolnicza'', ''27''(1), 113–133. https://doi.org/10.2478/agriceng-2023-0009]</ref>. Sensors often rely on visual imaging or sound, both of which are heavily disturbed in more difficult weather conditions, whether that is rain on a camera lens or the sound of rain disturbing sound sensors. This is not the case with LIDARs, as their laser technology does not malfunction in these scenarios. However, an issue that our robot is likely to face when utilizing a LIDAR sensor is that of sunlight contamination<ref>[https://opg.optica.org/oe/fulltext.cfm?uri=oe-24-12-12949&id=344314 ''Optica Publishing Group''. (n.d.). https://opg.optica.org/oe/fulltext.cfm?uri=oe-24-12-12949&id=344314]</ref>. Sunlight contamination is the noise that the sun generates in the sensor’s data during the daytime, which can introduce errors into it. Since our robot needs to work optimally during the daytime, it is crucial that this is considered. However, the LIDAR possesses many additional positive aspects that would be truly beneficial to our robot, such as the ability to function in complete darkness and immediate data retrieval. 
This would allow the users of our robot to turn on the robot before they go to sleep at night and wake up to a complete report of their garden status. Furthermore, these features are necessary for the robot as they would allow it to work in a dynamic and constantly changing environment, which is of high importance as our robot is to operate in a garden. The outdoors can never be a fully controlled environment, and that has to be factored into the design of the robot. | ||

As can be seen the LIDAR sensor has many excellent features that our robot will likely require, therefore it is a very important candidate when making our next design decisions. | As can be seen, the LIDAR sensor has many excellent features that our robot will likely require; it is therefore a very important candidate when making our next design decisions. | ||

==== Boundary Wire ==== | ==== Boundary Wire ==== | ||

[[File:Boundary wire.jpg|thumb|Boundary Wire being placed by user]] | [[File:Boundary wire.jpg|thumb|Boundary Wire being placed by user<ref>''Robomow''. (n.d.-c). Robomow. Retrieved April 11, 2024, from <nowiki>https://www.robomow.com/blog/detail/is-it-possible-to-extend-the-perimeter-wire-or-change-it-later</nowiki></ref>]] | ||

A boundary wire is likely the most cost efficient and commonly implemented technique in state-of-the-art garden robots that are on the private consumer market today. It is not a complicated technology but still a very effective one when it comes to robot navigation. A boundary wire in the garden acts as a virtual barrier that the robot cannot cross, similar to a geo-cage in drone operation<ref>https://www.thalesgroup.com/en/markets/aerospace/drone-solutions/scaleflyt-geocaging-safe-and-secure-long-range-drone-operations</ref>. In order to begin utilizing it, the robot user must first lay out the wire on the boundaries of their garden and then dig them approximately 10 cm below the ground's surface, so that the wire is safe from any external factors. This is a tedious task for the user but has to only be completed once and the robot is now fully operational and will never leave the boundaries set by the user. It is important for the user to take their time in the first setup as any change they will want to make will require digging up many meters of wire and once again putting it in the ground after relocation. | A boundary wire is likely the most cost efficient and commonly implemented technique in state-of-the-art garden robots that are on the private consumer market today. It is not a complicated technology but still a very effective one when it comes to robot navigation. A boundary wire in the garden acts as a virtual barrier that the robot cannot cross, similar to a geo-cage in drone operation<ref>[https://www.thalesgroup.com/en/markets/aerospace/drone-solutions/scaleflyt-geocaging-safe-and-secure-long-range-drone-operations ''ScaleFlyt Geocaging: safe and secure long-range drone operations''. (n.d.). Thales Group. https://www.thalesgroup.com/en/markets/aerospace/drone-solutions/scaleflyt-geocaging-safe-and-secure-long-range-drone-operations]</ref>. 
In order to begin utilizing it, the robot user must first lay out the wire along the boundaries of their garden and then bury it approximately 10 cm below the ground's surface, so that the wire is safe from any external factors. This is a tedious task for the user, but it only has to be completed once, after which the robot is fully operational and will never leave the boundaries set by the user. It is important for the user to take their time during the first setup, as any later change will require digging up many meters of wire and burying it again after relocation. | ||

The boundary wire communicates with the robot by emitting a low voltage, around 24V, signal which is picked up by a sensor on the robot<ref>https://www.robomow.com/blog/detail/boundary-wire-vs-grass-sensors-for-robotic-mowers</ref>. This means that when the robot detects the signal it knows that the wire is underneath it and it should not to continue moving in its direction. As is displayed above, the boundary wire is a very simple technology which with a slight amount of effort of the user can perform the basic navigability tasks. However, its functionality is fairly limited, it cannot detect any objects within the area of its operation and therefore avoid them meaning that its environment has to be maintained and clear throughout its operation. | The boundary wire communicates with the robot by emitting a low-voltage signal, around 24V, which is picked up by a sensor on the robot<ref>[https://www.robomow.com/blog/detail/boundary-wire-vs-grass-sensors-for-robotic-mowers ''Boundary wire vs. Grass sensors for robotic mowers | Robomow''. (n.d.). Robomow. https://www.robomow.com/blog/detail/boundary-wire-vs-grass-sensors-for-robotic-mowers]</ref>. This means that when the robot detects the signal, it knows that the wire is underneath it and that it should not continue moving in that direction. As described above, the boundary wire is a very simple technology which, with a small amount of effort from the user, can perform basic navigation tasks. However, its functionality is fairly limited: it cannot detect, and therefore cannot avoid, objects within its area of operation, meaning that the environment has to be kept clear throughout operation. | ||

==== GPS/GNSS ==== | ==== GPS/GNSS ==== | ||

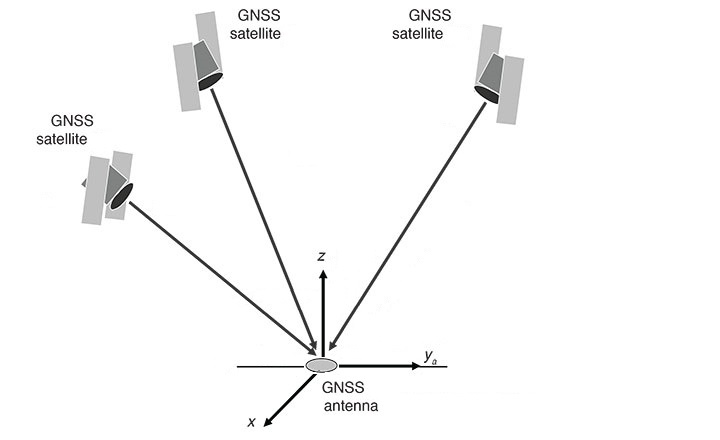

[[File:GNSS.jpg|thumb|GNSS operation depiction]] | [[File:GNSS.jpg|thumb|GNSS operation depiction<ref>''Veripos Help Centre''. (n.d.). Help.veripos.com. Retrieved April 11, 2024, from <nowiki>https://help.veripos.com/s/article/How-Does-GNSS-Work</nowiki></ref>]] | ||

GPS/GNSS are groups of satellites deployed in space that allow robots and devices to receive signals from them which aid them in positioning. Over the past few years these systems have gotten extremely accurate and can position devices to the nearest meter<ref>[https://www.ardusimple.com/rtk-explained/#:~:text=Introduction%20to%20centimeter%20level%20GPS%2FGNSS&text=Under%20perfect%20conditions%2C%20the%20best,accuracy%20of%20around%202%20meters. https://www.ardusimple.com/rtk-explained/#:~:text=Introduction%20to%20centimeter%20level%20GPS%2FGNSS&text=Under%20perfect%20conditions%2C%20the%20best | GPS/GNSS are groups of satellites deployed in space whose signals robots and devices can receive to aid them in positioning. Over the past few years these systems have become very accurate and can position devices to within roughly two meters under ideal conditions<ref>[https://www.ardusimple.com/rtk-explained/#:~:text=Introduction%20to%20centimeter%20level%20GPS%2FGNSS&text=Under%20perfect%20conditions%2C%20the%20best,accuracy%20of%20around%202%20meters. ''RTK in detail''. (n.d.). ArduSimple. Retrieved March 9, 2024, from https://www.ardusimple.com/rtk-explained/#:~:text=Introduction%20to%20centimeter%20level%20GPS%2FGNSS&text=Under%20perfect%20conditions%2C%20the%20best]</ref>. This happens through a process called trilateration, in which the receiver calculates its distance to multiple satellites and uses these distances to establish its location<ref>[https://first-tf.com/general-public-schools/how-it-works/gps/#:~:text=GNSS%20positioning%20is%20based%20on,each%20of%20the%20visible%20satellites. ''GNSS - FIRST-TF''. (2015, June 4). https://first-tf.com/general-public-schools/how-it-works/gps/]</ref>. 
The usage of this sensor in our robot is very encouraging as it has been proven to be effective in the large scale gardening industry for many years, more specifically the precision farming domain<ref>[https://therobotmower.co.uk/2021/12/02/robot-mowers-without-a-perimeter-wire/ C3pmow. (2023, October 24). 
12 Robot Mowers without a Perimeter Wire | The Robot Mower. ''The Robot Mower''. https://therobotmower.co.uk/2021/12/02/robot-mowers-without-a-perimeter-wire/]</ref>. An important distinction to note is that between GPS and GNSS. Although GPS is likely the term many are more familiar with from navigation applications they have used in the past, it is really just one part of GNSS, which covers all satellite navigation constellations. If it is equipped with a receiver that can pick up satellite signals and determine its location at all times, our robot will be able to precisely record the location where it has found sick plants or any plants that need care and send that information to the user's device. This once again promises to be a key component in our robot design. | ||
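The distance-based positioning described above (more precisely known as trilateration) can be illustrated with a deliberately simplified two-dimensional sketch. Real GNSS receivers solve the problem in three dimensions and must also estimate their own clock error, which is why they need at least four satellites; none of that is modelled here. The function name `trilaterate_2d` and the anchor coordinates are assumptions made purely for illustration.

```python
import math


def trilaterate_2d(anchors, distances):
    """Estimate (x, y) from three known anchor positions and the
    measured distance to each of them.

    Subtracting the first circle equation from the other two removes the
    squared unknowns and leaves a 2x2 linear system, solved by Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    d1, d2, d3 = distances

    a11, a12 = 2.0 * (x2 - x1), 2.0 * (y2 - y1)
    a21, a22 = 2.0 * (x3 - x1), 2.0 * (y3 - y1)
    b1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2

    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is ambiguous")
    return ((b1 * a22 - a12 * b2) / det, (a11 * b2 - b1 * a21) / det)


# Hypothetical anchors and a receiver located at (3, 4).
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
distances = [math.dist(a, (3.0, 4.0)) for a in anchors]
estimate = trilaterate_2d(anchors, distances)
```

With noise-free distances the estimate reproduces the true position; with real, noisy measurements more anchors and a least-squares fit would typically be used instead.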

==== Bump Sensors ==== | ==== Bump Sensors ==== | ||

[[File:Roomba bump sensor.png|thumb|Roomba bump sensors<ref>''iRobot Roomba 880 Bumper Sensors Replacement''. (2017, June 7). IFixit. <nowiki>https://www.ifixit.com/Guide/iRobot+Roomba+880+Bumper+Sensors+Replacement/88840</nowiki></ref>]] | |||

Bump sensors, commonly referred to as collision or impact sensors, are sensors designed to detect physical contact or force that a robot could encounter while traversing its environment<ref>[https://joy-it.net/en/products/SEN-BUMP01 ''Products | Joy-IT''. (n.d.). Joy-It.net. https://joy-it.net/en/products/SEN-BUMP01]</ref>. These sensors are used across various industries in robotics in order to increase safety and allow for greater automation of the robots they are integrated into. Additionally, these devices are crucial in robotics and many different types of vehicles as they allow the robot to replicate the human ability of touch and have a tactile interface with the environment. | |||

In order to have this feature, bump sensors are typically composed of accelerometers, devices that are able to detect and measure a change in acceleration forces, or simply a switch which gets pressed as soon as the robot applies pressure on it from factors in its environment<ref>[https://nl.farnell.com/sensor-accelerometer-motion-technology#:~:text=An%20accelerometer%20is%20an%20electromechanical,moving%20or%20vibrating%20the%20accelerometer. ''Sensors - Accelerometers | Farnell Nederland''. (2023). Farnell.com. https://nl.farnell.com/sensor-accelerometer-motion-technology]</ref>. In many applications the robot makes contact with larger objects, so the sensor need not be extremely sensitive. This would not be the case in our gardening robot, as the robot would have to consider smaller and more fragile objects, requiring the sensor to have a much higher sensitivity. Bump sensors are most commonly used to make sure a robot does not drive into and collide with large objects; they allow the robot to detect that a change of direction must occur before it continues its operation. Although in many industries bump sensors are a last line of defense against the robot breaking or destroying important elements in its environment, in the robot we are designing it is much less of an issue if the robot were to detect an object through this sensor<ref>[https://www.researchgate.net/figure/The-iRobot-and-its-Sensors_fig1_224570540#:~:text=The%20bump%20sensors%20are%20used,it%20on%20its%20right%20side. Mukherjee, D., Saha, A., Pankajkumar Mendapara, Wu, D., & Q.M. Jonathan Wu. (2009). ''A cost effective probabilistic approach to localization and mapping''. https://doi.org/10.1109/eit.2009.5189643]</ref>. The robot would only have to register that it has collided with an object and change its direction of motion, without further action. 
However, this should still be unlikely to happen, as the LIDAR sensor in our robot should have detected the obstacle beforehand and dealt with it. Nevertheless, technology is not always reliable and having a backup system ensures the robot experiences fewer errors in its operation, especially with the possibility of faults of the LIDAR due to sunlight contamination. | |||
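The backup behaviour described above, registering a collision and changing direction, could be sketched as a small reactive rule. This is only an illustration under assumptions: the two-bumper layout, the command names and the back-off distance are hypothetical and not taken from any specific robot.

```python
def react_to_bump(left_pressed, right_pressed, backoff_m=0.15):
    """Return a list of (command, value) steps after a bumper event.

    Turn angles are in degrees, counter-clockwise positive, so a negative
    angle turns the robot to the right.
    """
    if not (left_pressed or right_pressed):
        return []  # no contact, keep driving

    if left_pressed and right_pressed:
        turn_deg = 180.0   # head-on contact: turn around
    elif left_pressed:
        turn_deg = -90.0   # obstacle on the left: turn right
    else:
        turn_deg = 90.0    # obstacle on the right: turn left

    return [("stop", 0.0), ("reverse", backoff_m), ("turn", turn_deg)]


# Hypothetical event: only the left bumper is pressed.
plan = react_to_bump(left_pressed=True, right_pressed=False)
```

Stopping and backing away before turning keeps the robot from pushing further into a fragile plant while it rotates.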

==== Ultrasonic sensors ==== | ==== Ultrasonic sensors ==== | ||

[[File:Robot using ultrasonic sensor.png|thumb|Robot using ultrasonic sensor for navigation<ref>Hassall, C. (2012, September). ''A robust wall-following robot that learns by example''. ResearchGate. <nowiki>https://www.researchgate.net/figure/NXT-Tribot-with-pivoting-ultrasonic-sensor-before-and-after-modification-Modifications_fig1_267841406</nowiki></ref>]] | |||

Ultrasonic sensors, or, put in simpler terms, sound sensors, are another type of sensor which allows a robot to measure distances between objects in its environment and its current position. These sensors also find widespread applications in robotics, whether that is liquid level detection, wire break detection or even counting the number of people in an area. Their strength is that they allow robots that have them to perceive depth in a manner similar to a dolphin's echolocation<ref>[https://ponceinletwatersports.com/how-do-dolphins-communicate/#:~:text=Dolphins%20emit%20high%2Dfrequency%20ultrasound,with%20each%20other%20and%20us. ''How do dolphins communicate?'' (2022, August 8). Ponce Inlet Watersports. https://ponceinletwatersports.com/how-do-dolphins-communicate/.]</ref>. | |||

Ultrasonic sensors function by emitting high-frequency sound waves through their transmitters and measuring the time it takes for the waves to bounce back and reach their receivers. This data allows the robot to calculate the distance to the object, enabling precise navigation and obstacle detection. Although this is once again similar to the function of a LIDAR sensor, it allows the robot to work in a frequently changing environment without the use of state-of-the-art and expensive technology. One thing that must be considered when using this sensor is that it tends to perform worse when detecting softer materials. Our team will have to take this into account and make sure the sensor is able to detect the plants it is approaching<ref>[https://maxbotix.com/blogs/blog/advantages-limitations-ultrasonic-sensors MaxBotix. (2019, September 11). ''Ultrasonic Sensors: Advantages and Limitations''. MaxBotix. https://maxbotix.com/blogs/blog/advantages-limitations-ultrasonic-sensors]</ref>.
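The time-of-flight calculation described above can be illustrated with a minimal sketch. The function names, the 0.30 m stopping threshold and the speed of sound of 343 m/s (dry air at roughly 20 °C) are assumptions made for illustration and are not taken from any specific sensor used in this project.

```python
# Assumed speed of sound in dry air at about 20 degrees Celsius.
SPEED_OF_SOUND_M_PER_S = 343.0


def echo_distance_m(round_trip_time_s: float,
                    speed_of_sound: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Convert the measured echo time into a one-way distance in metres."""
    if round_trip_time_s < 0:
        raise ValueError("echo time cannot be negative")
    # The pulse travels to the object and back, so halve the total path.
    return speed_of_sound * round_trip_time_s / 2.0


def obstacle_ahead(round_trip_time_s: float, threshold_m: float = 0.30) -> bool:
    """Return True when the echo indicates an object within the threshold."""
    return echo_distance_m(round_trip_time_s) <= threshold_m


if __name__ == "__main__":
    print(echo_distance_m(0.01))   # echo received after 10 ms
    print(obstacle_ahead(0.001))   # echo received after 1 ms
```

Because softer materials such as leaves reflect less sound, a real implementation would probably also need to handle missing or weak echoes, which this sketch does not attempt.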

In our gardening robot, ultrasonic sensors could once again play an important role in supplementing the functionality of more advanced sensors like the LIDAR. As a simple and reliable solution, ultrasonic sensors provide essential functionality, improving the robot's operational reliability across the very wide range of gardens it could encounter in its deployment.

==== Gyroscopes ====

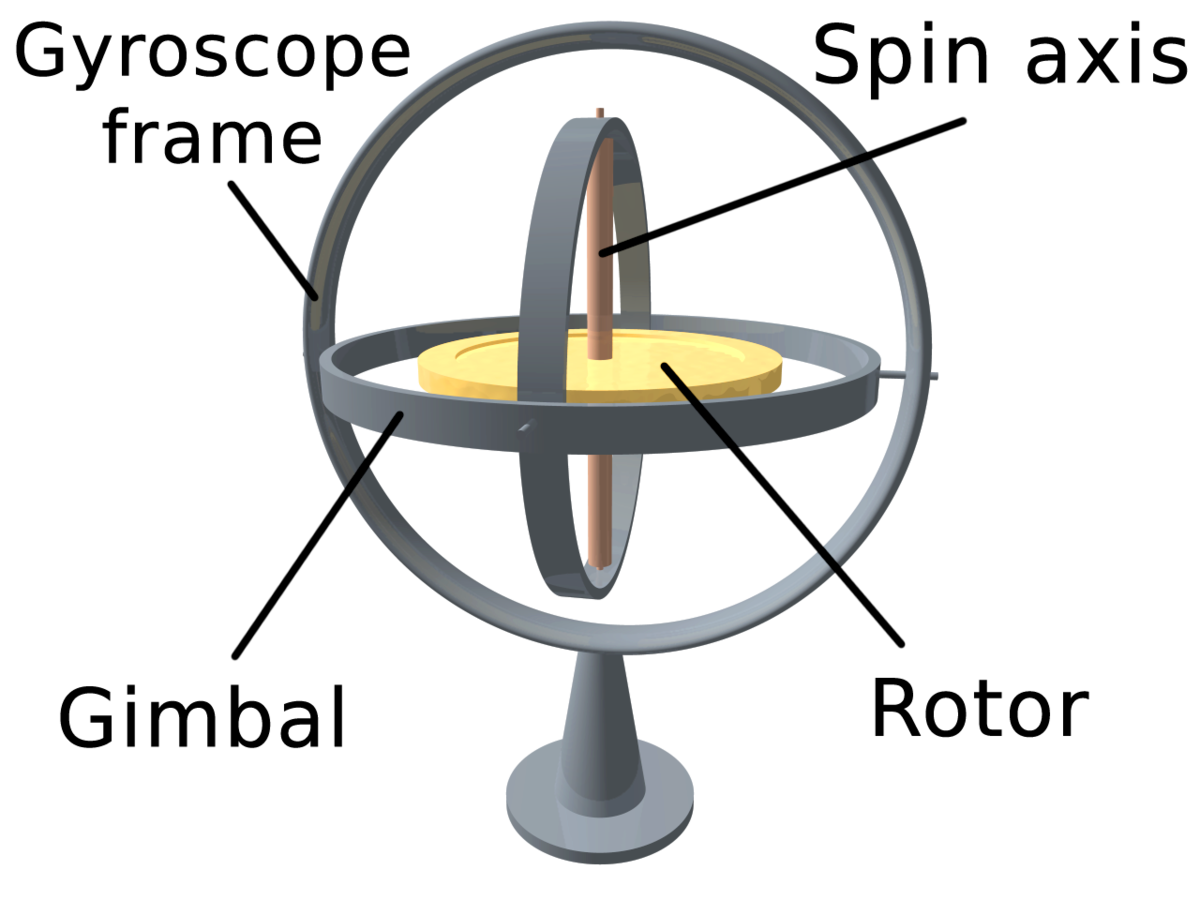

[[File:3D Gyroscope.png|thumb|Gyroscope basic model<ref>Wikipedia Contributors. (2019, October 24). ''Gyroscope''. Wikipedia; Wikimedia Foundation. <nowiki>https://en.wikipedia.org/wiki/Gyroscope</nowiki></ref>]]

Gyroscopes are essential components in the field of robotics that help provide stability and precise orientation control in a wide range of industrial areas. These devices use the principle of angular momentum to maintain a constant reference direction, so that their orientation does not change<ref>[https://science.howstuffworks.com/gyroscope.htm#:~:text=A%20gyroscope%20is%20a%20mechanical,of%20gimbals%20or%20pivoted%20supports. Brain, M., & Bowie, D. (2023, September 7). ''How the Gyroscope Works''. HowStuffWorks. https://science.howstuffworks.com/gyroscope.htm] </ref>. This allows robots to improve their stability and therefore enhance their operational abilities.

To achieve this, gyroscopes consist of a spinning mass mounted on a set of gimbals. When the orientation of the gyroscope's housing changes, conservation of angular momentum means that the spinning mass resists the change, essentially keeping it in the same orientation. This feature is very important in the field of robotics, as it allows the robot to know its current angle with respect to the ground. When that angle gets too large, the gyroscope helps the robot avoid flipping over or falling during its operation.

Since thousands of relevant sensors exist, we can only discuss the most important ones. Sensors such as lift sensors, incline sensors and camera systems can also be included in the robot for navigation purposes. However, in the design of our robot they are either too complex or unnecessary.

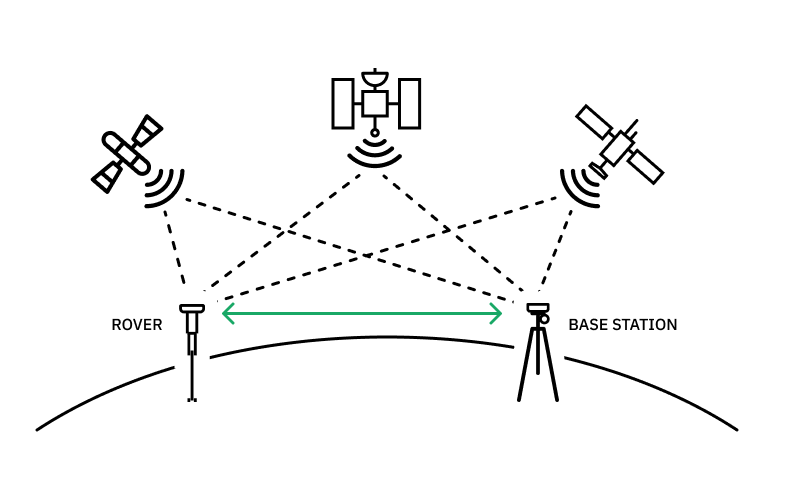

==== RTK sensor ====

RTK, or Real-Time Kinematic, is an advanced positioning technology that allows devices to be located extremely precisely.<ref>''RTK GPS: Understanding Real-Time Kinematic GPS Technology''. (2023, January 14). Global GPS Systems. <nowiki>https://globalgpssystems.com/gnss/rtk-gps-understanding-real-time-kinematic-gps-technology/</nowiki></ref> The positioning error can be as small as 0.025 m.<ref>''NEO-M8P u-blox M8 high precision GNSS modules Data sheet ''. (n.d.). Retrieved January 5, 2023, from <nowiki>https://content.u-blox.com/sites/default/files/NEO-M8P_DataSheet_UBX-15016656.pdf</nowiki></ref> It is therefore no surprise that this technology can be seen in various applications, such as agriculture and construction.<ref>''RTK Applications: Precision Agriculture''. (n.d.). ArduSimple. Retrieved April 10, 2024, from <nowiki>https://www.ardusimple.com/precision-agriculture/</nowiki></ref> At its core, an RTK system uses a combination of GPS (Global Positioning System) satellites and ground-based reference stations, which together aid in positioning a robot. Unlike traditional GPS systems, which offer accuracy within several meters, RTK improves this precision significantly. This makes it necessary for tasks that require high precision, such as our plant identification robot, which needs to be able to send the location of an unhealthy plant to the user with centimeter precision.

[[File:7-Real-time-kinematic-positioning-RTK-20230721-FINAL.png|alt=https://pointonenav.com/news/is-build-your-own-rtk-really-worth-it/|thumb|RTK system in action <ref>Nathan, A. (2023, December 14). ''How to Build Your Own RTK Base Station (& Is It Worth It?) [2024]''. Point One Navigation. <nowiki>https://pointonenav.com/news/is-build-your-own-rtk-really-worth-it/</nowiki></ref>]]

The key component of an RTK system is the RTK receiver, which is installed within the robot itself. This receiver communicates with GPS satellites to determine its position, but what sets this system apart is its ability to also receive corrections from nearby reference stations.<ref>''How RTK works | Reach RS/RS+''. (n.d.). Docs.emlid.com. <nowiki>https://docs.emlid.com/reachrs/rtk-quickstart/rtk-introduction/</nowiki></ref> These reference stations precisely measure their own positions and then broadcast correction signals to the RTK receiver mounted on the robot. Essentially, the robot obtains a slightly inaccurate location from the GPS signal alone. Because it also receives a signal from a reference station, it can compare its own GPS readings with those of the station and correct them, allowing the robot to achieve centimeter-level accuracy.
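The correction idea can be sketched in a deliberately simplified form. The example below assumes a local east/north coordinate frame in meters and applies the correction directly to positions. This is a simplification for illustration only: real RTK receivers apply corrections to raw satellite observations rather than to finished position fixes. All names and numbers are hypothetical.

```python
from typing import NamedTuple


class Position(NamedTuple):
    """A point in an assumed local frame, in metres east and north."""
    east_m: float
    north_m: float


def station_error(surveyed: Position, measured: Position) -> Position:
    """Error seen at the reference station: GPS reading minus known position."""
    return Position(measured.east_m - surveyed.east_m,
                    measured.north_m - surveyed.north_m)


def apply_correction(rover_measured: Position, error: Position) -> Position:
    """Subtract the station's error from the robot's own GPS reading.

    This assumes the robot is close enough to the station that both
    experience roughly the same error.
    """
    return Position(rover_measured.east_m - error.east_m,
                    rover_measured.north_m - error.north_m)


if __name__ == "__main__":
    # The station knows it sits at the origin, but GPS reports an offset.
    error = station_error(Position(0.0, 0.0), Position(1.8, -1.2))
    # The robot's raw GPS fix carries roughly the same offset.
    print(apply_correction(Position(11.8, 3.8), error))
```

The sketch only shows why a nearby station with a precisely known position is useful: whatever error the station observes in its own reading can be removed from the robot's reading as well.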

Although the idea may seem complex at first, the operation of an RTK system rests on one main principle, called carrier phase measurement. Unlike regular GPS systems, which rely on pseudorange measurements to determine the position of the user, RTK receivers use carrier phase measurements.<ref>''Carrier Phase - an overview | ScienceDirect Topics''. (n.d.). Www.sciencedirect.com. <nowiki>https://www.sciencedirect.com/topics/engineering/carrier-phase#:~:text=The%20carrier%20phase%20measures%20the</nowiki></ref> This process involves measuring the phase of the GPS carrier signal, allowing for highly accurate positioning. However, carrier phase measurements alone are subject to errors due to atmospheric conditions and other factors.<ref>Liu, H., Yang, L., & Li, L. (2021). Analyzing the Impact of Climate Factors on GNSS-Derived Displacements by Combining the Extended Helmert Transformation and XGboost Machine Learning Algorithm. ''Journal of Sensors'', ''2021'', e9926442. <nowiki>https://doi.org/10.1155/2021/9926442</nowiki></ref> This is where the corrections from the reference stations come into play, enabling the RTK receiver to mitigate these errors and achieve centimeter-level accuracy in real time.
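A short sketch can illustrate why measuring the carrier phase allows such fine resolution. It assumes the GPS L1 carrier frequency of 1575.42 MHz, which gives a wavelength of roughly 19 cm, so that even a coarse fraction of one cycle corresponds to millimeters. The function names are hypothetical, and the number of whole cycles between satellite and receiver is treated here as already known, whereas a real receiver has to estimate it.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
GPS_L1_FREQUENCY_HZ = 1_575.42e6  # assumed carrier: GPS L1


def carrier_wavelength_m(frequency_hz: float = GPS_L1_FREQUENCY_HZ) -> float:
    """Wavelength of the carrier signal in metres."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


def carrier_phase_range_m(whole_cycles: int,
                          fractional_cycle: float,
                          wavelength_m: float) -> float:
    """Range implied by a count of whole cycles plus a measured phase fraction."""
    if not 0.0 <= fractional_cycle < 1.0:
        raise ValueError("fractional_cycle must be in [0, 1)")
    return (whole_cycles + fractional_cycle) * wavelength_m


if __name__ == "__main__":
    wavelength = carrier_wavelength_m()
    print(f"L1 wavelength: {wavelength:.4f} m")
    # Resolving the phase to 1% of a cycle corresponds to about 2 mm of range.
    print(f"1% of a cycle: {0.01 * wavelength * 1000:.2f} mm")
```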

To make communication between the reference stations and the RTK receiver possible, methods such as radio links, cellular networks, or satellite-based communication systems are needed.<ref>Rizos, C. (2003). ''Reference station network based RTK systems-concepts and progress''. ResearchGate. <nowiki>https://www.researchgate.net/publication/225442957_Reference_station_network_based_RTK_systems-concepts_and_progress</nowiki></ref> Regardless of the communication method used, the goal is to ensure that the RTK receiver receives timely and accurate correction data with which to correct its current position.

As can be seen, this technology solves a major issue our robot was facing, which was kindly pointed out to us by one of our tutors, Dr. Torta. The issue is that the robot cannot simply use GPS to send the location of the plant to the user, as its accuracy is only within a couple of meters. This would mean that if the user had multiple plants within a 2-3 meter radius, they would have a difficult time finding the unhealthy plant, even if provided with an image of it.

=== Mapping ===

===== Introduction =====

Mapping will be one of the most important features of our robot, as it will be the very first thing the robot performs after being taken out of its box by the user, and the robot will rely on the quality of this process for the rest of its operation. Mapping is the idea of letting the robot familiarize itself with its operational environment by traversing it freely without performing any of its regular operations, simply analyzing where the boundaries of the environment are and how it is roughly shaped. This gives the robot the knowledge it needs so that, during normal operation throughout its lifecycle, it is aware of its position in the garden, the areas it has visited in the current job and the areas it still must visit. Essentially, it turns the robot from a simple reflex agent into a robot that has knowledge stored in its database and can access it to make better-informed decisions for more efficient operation.

Mapping can be done in both 3D and 2D, depending on the needs of the robot and user. Initially, we considered 3D mapping in this project, enabling the robot to also memorize plant locations in the garden for easier access in the future. However, since plants grow very rapidly, the environment would change quickly and the mapping process would have to be repeated on a daily basis, leading to a very inefficient process. Now that the decision has been made to implement 2D mapping, similar to that of the Roomba vacuum cleaning robots, the purpose of the map is to learn the dimensions and shape of the garden. It may come as no surprise that, as in most design problems, there is rarely only one solution, and mapping is no different. Nonetheless, the most suitable method for our robot is the already existing Husqvarna AIM (Automower Intelligent Mapping) technology.
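The role of a stored 2D map, namely knowing the garden's dimensions and which areas have already been visited, can be sketched with a simple grid. This is only an illustration under assumed names and an assumed cell size of 0.5 m. It is not a description of how Husqvarna AIM or the Roomba store their maps.

```python
import math


class CoverageGrid:
    """A rectangular 2D grid that records which cells the robot has visited."""

    def __init__(self, width_m: float, height_m: float, cell_size_m: float = 0.5):
        self.cell_size_m = cell_size_m
        self.cols = math.ceil(width_m / cell_size_m)
        self.rows = math.ceil(height_m / cell_size_m)
        self.visited: set[tuple[int, int]] = set()

    def cell_of(self, x_m: float, y_m: float) -> tuple[int, int]:
        """Map a position in metres to a (row, col) cell index."""
        col = int(x_m // self.cell_size_m)
        row = int(y_m // self.cell_size_m)
        if not (0 <= row < self.rows and 0 <= col < self.cols):
            raise ValueError("position lies outside the mapped garden")
        return row, col

    def mark_visited(self, x_m: float, y_m: float) -> None:
        self.visited.add(self.cell_of(x_m, y_m))

    def unvisited(self) -> list[tuple[int, int]]:
        """Cells the robot still has to visit in the current job."""
        return [(r, c) for r in range(self.rows) for c in range(self.cols)
                if (r, c) not in self.visited]

    def coverage(self) -> float:
        """Fraction of the garden visited so far, between 0 and 1."""
        return len(self.visited) / (self.rows * self.cols)


if __name__ == "__main__":
    grid = CoverageGrid(width_m=2.0, height_m=1.0)
    grid.mark_visited(0.2, 0.2)
    grid.mark_visited(1.9, 0.9)
    print(grid.coverage(), len(grid.unvisited()))
```

A real garden is rarely rectangular, so a practical map would also need to mark cells that lie outside the boundary or are blocked by obstacles.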

===== Husqvarna AIM technology<ref>''Husqvarna AIM Technology''. (n.d.). Www.husqvarna.com. Retrieved April 10, 2024, from <nowiki>https://www.husqvarna.com/nl/leer-en-ontdek/husqvarnas-aim-technology/</nowiki></ref> =====

Husqvarna Automower's intelligent mapping system takes advantage of multiple cutting-edge technologies, allowing gardeners to maintain their gardens even more easily than they previously could. At its core, the technology uses a combination of GPS, onboard sensors, and intelligent algorithms. The process begins when the robot is first taken out of its packaging and turned on. The robot immediately begins exploring the garden by moving randomly through it, as it initially has no information to reference. In the terms of the course Rational Agents, we could say that the robot's knowledge base is initially empty. However, while the robot is exploring, GPS technology simultaneously aids in mapping the layout of the lawn, providing precise coordinates to guide the robot's movements. Through GPS, the robot establishes a blueprint of the terrain, similar to that of the well-known Roomba, enabling it to navigate efficiently and cover every patch of grass.

[[File:Husqvarna Mapping Technology Demo.png|thumb|527x527px|Husqvarna Mapping Technology Demo<ref>''Automower® Intelligent Mapping Technology – Zone Control''. (n.d.). Www.youtube.com. Retrieved April 10, 2024, from <nowiki>https://www.youtube.com/watch?v=KPvfUezE3NE</nowiki></ref>]]

Once the GPS mapping is complete, a robot with this technology is able to traverse the garden with great precision. Although mapping is very reliable, it is important that the robot is also equipped with onboard sensors, including collision sensors and object detection technology, so that it can detect obstacles in its path and adjust its movement. This helps keep the robot safe and prevents harm to other objects in the vicinity, such as plants. Moreover, the intelligent mapping system enables the robot to adapt to changes in terrain and navigate complex landscapes. This means that even if the robot is faced with slopes, tight corners, or irregularly shaped lawns, the AIM technology’s algorithms can find the best way for the robot to proceed.

Furthermore, Husqvarna Automower's intelligent mapping system incorporates features that enhance user experience and customization. Users can designate specific areas within the lawn for prioritized mowing or exclude certain zones altogether. This level of customization allows for tailored lawn care according to individual preferences and requirements. Additionally, the robot’s connectivity features enable remote control and monitoring via smartphone applications, providing users with real-time updates on mowing progress and allowing them to adjust settings as needed. Although not directly relevant to our project, a robot using this technology may also connect to smart home devices such as Google Home or Amazon Alexa.

Another feature of Husqvarna Automower's intelligent mapping system is its ability to customize the robot's mowing schedule based on current weather conditions and energy efficiency. The robot does this by analyzing data gathered from its onboard sensors together with weather forecasts that it can connect to. This approach to lawn maintenance saves users a great deal of time and effort, and also saves electricity, since the robot does not operate when it decides mowing is unnecessary.

In conclusion, Husqvarna Automower's intelligent mapping system is a significant advancement in robotic lawn care technology. By combining GPS and RTK sensors with intelligent algorithms, a robot using this technology is able to navigate its garden successfully and efficiently. This is crucial for our plant identification robot, as it means we do not have to worry about the robot's navigation. We can be assured that navigation will be efficient and that the robot will be able to visit every location in the garden, allowing it to detect all sick plants that need care.

== Hardware ==