PRE2019 1 Group3

Adaptive learning software for mathematics

Group Members

| Name | Study | Student ID |
|---|---|---|
| Ruben Haakman | Electrical Engineering | 0993994 |
| Tom Verberk | Software Science | 1016472 |
| Peter Visser | Applied Physics | 0877628 |

Planning

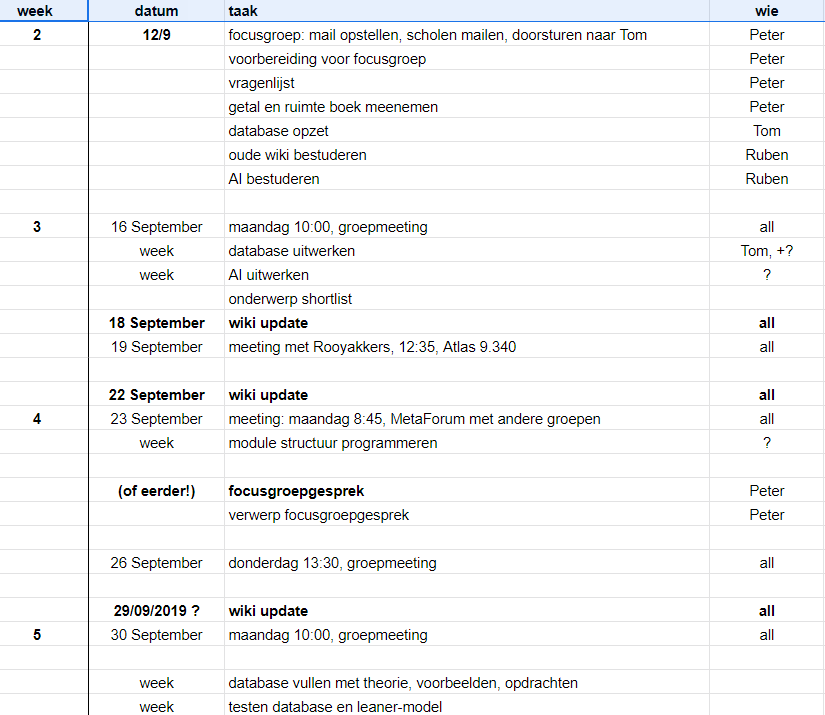

Every week we will have two meetings. In between the meetings we will work on individual tasks, and the results of those tasks will be examined in the meetings; each meeting is also the deadline for the tasks discussed in the previous one. Once a week a meeting with the tutor(s) is arranged to discuss progress and teamwork. In week 8 we will present our prototype to the class, and afterwards we will finalize the wiki. (Click on the images to enlarge them.)

Introduction

There has been a big increase in the use of technology in education; smart boards, laptops, tablets and online learning systems are now commonly used in classrooms. Many students have trouble learning mathematics. Recent technologies in online learning software can help those students learn faster and keep them motivated. They can also reduce the workload for teachers.

Problem Statement

Currently, most students do all their math exercises from a book. The only feedback they get is whether their answer is right or wrong. The exercises are the same for every student and are made to match the general level of all students, resulting in questions which are too simple or too difficult for most of the students. The only way to get personal support is through the teacher, who does not have time to help everyone individually. Adaptive learning software for mathematics can help with this problem.

State of the art

Articles

Title: Math Aversion (State of the Art)

Link: https://ieeexplore-ieee-org.dianus.libr.tue.nl/document/6210554

Relevance: incorporate conceptual thinking and illustrations to make students understand mathematical ideas

Title: The Math Wars

Link: https://journals-sagepub-com.dianus.libr.tue.nl/doi/pdf/10.1177/0895904803260042

Relevance: The article provides an overview of the didactic discussion on math in the past century, as well as the latest controversy, the math war (maybe part of a larger culture war?). It boils down to a fervent discussion between ‘traditionalists’ and ‘modernists’, and their attempts to influence governmental educational policies on math (such as ‘the Standards’ and ‘the Framework’). The text is focussed on the US, but this is likely a trend in the West in general. It is useful to have some knowledge about these philosophical-didactic discussions, although in our limited time we should focus on how to implement the suggested methods of the two groups, not so much on the arguments.

Title: Mathematics is about the world - R.E. Knapp

Link: (book)

Relevance: A book about the role of mathematics in our lives, and therefore useful for thinking about how to teach the subject. The book claims that mathematics is abstract, but nevertheless is about the world around us, which we try to understand. It argues that discovering quantitative relationships suits our needs for indirect measurement(s), such as the ‘tool’ of establishing geometric relationships. Trying to make this notion (that math is a powerful tool for humans) concrete in our program will help to motivate students to engage with the topic, and help them understand new ‘tools’.

Title: Preparation, practice, and performance: An empirical examination of the impact of Standards-based Instruction on secondary students’ math and science achievement

Link: https://journals.sagepub.com/doi/pdf/10.7227/RIE.81.5

Relevance: One set of studies on the impact of ‘SBI’ (standards-based instruction) methods, such as: student self-assessment, inquiry-based activities, group-based projects, hands-on experiences, use of computer technologies, and the use of calculators. ‘Non-SBI practices’: teacher lecture, individual student drill and practice worksheets, and computer drill and practice programmes, etc.

Overview of (SBI) student-centred methods:

- using manipulatives or hands-on materials, such as styrofoam balls and toothpicks for building molecular models, dominoes, base ten blocks, tangrams, spinners, rulers, fraction bars, algebra tiles, coins, and geometric solids;
- incorporating inquiry, discovery, and problem-solving approaches, such as making binoculars out of recycled materials, using scenarios from nature and everyday life events for groups of students to research and investigate using math and science concepts;
- applying math and science concepts to real-world contexts, such as banking, energy concerns, environmental issues, and timelines;
- connecting mathematics and science preparation skills to specific careers and occupations;
- using calculators and technologies for capturing and analysing original data from original math and science experiments;
- communicating math and science concepts, through journal writing, small-group discussions, and laboratory/technical reporting of experiments and results.

Results:

- SBI practices that were found to be significant contributors to students’ math achievement include the use of manipulatives, self-assessment, co-operative group projects, and computer technology.
- SBI practices that were found to be significant contributors to students’ science achievement include the use of inquiry, self-assessment, co-operative group projects, and computer technology.
- Virtually none of the observed non-SBI practices was found to be a significant contributor to student math or science achievement by gender or ethnic groupings.

Useful, because looking at effective methods is one way to know which side is right in the math war, or at least what methods we can use in our program. Our program might in a (superficial?) way fit into SBI, although that will ultimately depend on the type of exercises and methods we will include.

Title: Didactic material confronted with the concept of mathematical literacy

Link: https://link-springer-com.dianus.libr.tue.nl/content/pdf/10.1023%2FB%3AEDUC.0000017693.32454.01.pdf

Relevance: This essay is critical of the ‘highly technocratic’ vision ‘from the top’ that aims to let experts devise didactic materials to be used by teachers and students, whilst ignoring:

- Why is math taught, and what is the role of didactic material?
- How and why do students actually use such materials?
- In which ways do didactic materials shape the teachers’ activities?
- What does it mean that didactic material is never adopted but always adapted?

Therefore the author claims it is more useful to focus on ‘valuable mathematical activities’ instead of ‘innovative didactic materials’.

Furthermore, the author claims that “mathematical literacy” should be the leitmotiv for the teaching and learning of mathematics (up to secondary school). Mathematical literacy conceives “the relationship between mathematics, the surrounding culture, and the curriculum”. He mentions how this should influence didactic materials, and what these materials should look like. He critiques the ‘optimism’ and ‘exclusivity’ approaches of teaching math, and supports the ‘inclusivity’ approach, which presents math as ‘a method to understand the social and economic world we live in. This strategy considers mathematical activity as potentially critical, political, loaded with values, and informative’ and “The cognitive style of daily routine is of high relevance within these mathematical activities, since it is a fundamental aim of the strategy to empower common sense. It is intended to develop the attitude of daily life towards an attitude of critical consciousness.”

Useful because it really focuses on the users of didactic material (like our program!), an approach we can use to increase the value students (and teachers) find in our program. We should consider/confirm what mathematical literacy is, and whether it is the right standard to determine what is a valuable mathematical activity. The ‘inclusivity’ approach seems very interesting. However, the author seems very interested in using math to discuss politics, if not to politicize (young) students, which seems a bad idea.

Title: Geometrical analogies in mathematics lessons

Link: https://academic-oup-com.dianus.libr.tue.nl/teamat/article/26/4/201/1664642

Relevance: A summary of possibilities of mathematics lessons regarding the use of analogies in teaching geometry for different age groups. Useful because we might apply this in the exercises to teach users geometry.

Title: Open Learner Models: Research Questions Special Issue of the IJAIED

Relevance: A good summary of “learner models” and a discussion of relevant aspects. Very detailed, but good to use in a brainstorm for concretising the project.

Title: Intelligent Agent-Based e-Learning System for Adaptive Learning

Link: https://www-igi-global-com.dianus.libr.tue.nl/gateway/article/full-text-pdf/58052

Relevance: Adaptive learning approach: support learners in achieving the intended learning outcomes in a personalized way.

The main idea: to personalize the learning content in a way that can cope with individual differences in aptitude. NOT: personalizing the presentation style of the learning materials

Model:

- Aptitude-Treatment Interaction theory (ATI): there is a strong bond between the effectiveness of an instructional strategy (i.e. treatment) and the aptitude level of students.
  - Aptitude: the capability to learn in a specific area either because of having talent or having prior knowledge in this area.
- Biggs’ Constructive Alignment Model (used to operationalize ATI): an effective curriculum depends on adequately describing the educational goals desired. Biggs views curriculum as a teaching system; the ultimate goal of the system is to guide students towards the desired educational goals. He advocates the alignment of individual components in the system like teaching and learning activities (TLAs) and assessment tasks (ATs). It is a hierarchical framework.
  - Inherits the central idea of constructivism that education is a way to train students to be self-learners. Aim: improving students’ learning outcomes through enhancing their intrinsic motivation.

“Students with lower cognitive skill require highly structured instructional environments than students with higher cognitive skills (Snow, 1989).”

Title: Personalized Adaptive Learner Model in E-Learning System Using FCM and Fuzzy Inference System

Link: https://link-springer-com.dianus.libr.tue.nl/content/pdf/10.1007%2Fs40815-017-0309-y.pdf

Relevance: Some new dimensions of adaptivity are discussed here, like automatic and dynamic detection of learning styles, which is more precise and quicker than previous approaches. It is a literature-based approach in which a personalized adaptive learner model (PALM) was constructed. The proposed learner model mines learners’ navigational access data and finds behavioural patterns which individualize each learner and provide personalization according to their learning styles in the learning process. Fuzzy cognitive maps and a fuzzy inference system, both soft computing techniques, were introduced to implement PALM. The results show that a personalized adaptive e-learning system is better and more promising than a non-adaptive one in terms of benefits to the learners and improvement in the overall learning process. Thus, providing adaptivity according to the learner’s needs is an important factor for enhancing the efficiency and effectiveness of the entire learning process.

Title: Elo-based learner modeling for the adaptive practice of facts

Link: https://link-springer-com.dianus.libr.tue.nl/content/pdf/10.1007%2Fs11257-016-9185-7.pdf

Relevance:

- Computerized adaptive system for practicing factual knowledge.
- Widely varying degrees of prior knowledge.
- Modular approach: 1. an estimation of prior knowledge, 2. an estimation of current knowledge, and 3. the construction of questions.
- Detailed discussion of learner models for both estimation steps (1 & 2):
  - a novel use of the Elo rating system for learner modeling;
  - results, and variations in model and effectiveness.

Very useful; we would only need to change the topic.
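The Elo-based learner modeling summarized above can be illustrated with a small sketch. This is a minimal version of the general Elo idea (one skill value per student, one difficulty value per exercise, both updated after every answer), not the exact model of the cited paper. The function names, the logistic prediction, and the step size `k=0.4` are assumptions chosen for illustration.

```python
import math


def expected_correct(skill: float, difficulty: float) -> float:
    """Predicted probability of a correct answer (logistic in skill - difficulty)."""
    return 1.0 / (1.0 + math.exp(-(skill - difficulty)))


def elo_update(skill: float, difficulty: float, correct: bool, k: float = 0.4):
    """Return the updated (skill, difficulty) pair after one answer.

    k is an assumed constant step size; larger values react faster to
    new answers but make the estimates noisier.
    """
    predicted = expected_correct(skill, difficulty)
    delta = k * ((1.0 if correct else 0.0) - predicted)
    # A correct answer raises the student's skill and lowers the
    # exercise's difficulty estimate; a wrong answer does the opposite.
    return skill + delta, difficulty - delta


# Hypothetical usage: a new student answers an average exercise correctly.
skill, difficulty = elo_update(0.0, 0.0, correct=True)
```

The next exercise could then be chosen so that `expected_correct` lies near a target success rate, which is one possible way to match the level of the exercises to the level of the student.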

Title: The Roles of Artificial Intelligence in Education: Current Progress and Future Prospects

Link: https://files.eric.ed.gov/fulltext/EJ1068797.pdf

Abstract: This report begins by summarizing current applications of ideas from artificial intelligence (AI) to education. It then uses that summary to project various future applications of AI (and advanced technology in general) to education, as well as highlighting problems that will confront the wide scale implementation of these technologies in the classroom.

Relevance: This report gives an example of an existing AI system for learning algebra. However, the program does not automatically determine the level of the student. Such systems are called intelligent tutoring systems (ITS). Overall a very useful article.

Title: Permutations of Control: Cognitive Considerations for Agent-Based Learning Environments

Link: https://www.researchgate.net/publication/251779583_Permutations_of_Control_Cognitive_Considerations_for_Agent-Based_Learning_Environments

Abstract: While there has been a significant amount of research on technical issues regarding the development of agent-based learning environments (e.g., see the special issue of Journal of Interactive Learning Research, 1999, v10(3/4)), there is less information regarding cognitive foundations for these environments. The management of control is a prime issue with agent-based computer environments given the relative independence and autonomy of the agent from other system components. This paper presents four dimensions of control that should be considered in designing agent-based learning environments: instructional purpose, feedback, relationship, and confidence in AI.

Relevance: More focused on the cognitive foundations of artificial intelligence environments. Interesting for assessing the usefulness of our ideas.

Title: Introducing the Enhanced Personal Portal Model in a Synchromodal Learning Environment

Link: https://www.researchgate.net/publication/251779583_Permutations_of_Control_Cognitive_Considerations_for_Agent-Based_Learning_Environments

Abstract: A study that simulated a digital classroom (by placing cameras, students, etcetera).

Relevance: Not really relevant for us, but interesting to take note of (perhaps we could also make a digital environment for our idea).

Title: Intelligence Unleashed

Link: https://www.pearson.com/content/dam/corporate/global/pearson-dot-com/files/innovation/Intelligence-Unleashed-Publication.pdf

Abstract: This short paper has two aims in mind. The first was to explain to a non-specialist, interested reader what AIEd (Artificial Intelligence in Education) is: its goals, how it is built, and how it works. The second aim was to set out the argument for what AIEd can offer learning, both now and in the future, with an eye towards improving learning and life outcomes for all.

Relevance: This comes from a company that does research on this topic and works together with teachers and researchers, therefore this might come as a big

Title: Web intelligence and artificial intelligence in education.

Link: https://www.researchgate.net/publication/220374721_Web_Intelligence_and_Artificial_Intelligence_in_Education

Abstract: This paper surveys important aspects of Web Intelligence (WI) in the context of Artificial Intelligence in Education (AIED) research. WI explores the fundamental roles as well as practical impacts of Artificial Intelligence (AI) and advanced Information Technology (IT) on the next generation of Web-related products, systems, services, and activities.

Relevance: More information on Web Intelligence and how it works together with AIED. It focuses on practical impacts and advanced information technology; the first part is especially interesting for us.

Title: 10 roles for artificial intelligence in education

Link: https://www.teachthought.com/the-future-of-learning/10-roles-for-artificial-intelligence-in-education/

Abstract: This article explores 10 roles for artificial intelligence in education:

- Automating tasks such as grading
- Adapting to student needs
- Pointing out improvements
- AI tutors
- Helpful feedback
- Changing how we find and interact with information
- Changing the role of teachers
- Making trial and error less intimidating
- Changing how schools find, teach and support students
- AI may change where students learn, who teaches them, and how they acquire basic skills

Relevance: It can show us some new ways in which AI helps teachers that we have not thought of yet.

Title: Exploring the impact of artificial intelligence on teaching and learning in higher education

Link: https://www.researchgate.net/publication/321258756_Exploring_the_impact_of_artificial_intelligence_on_teaching_and_learning_in_higher_education

Abstract: This paper explores the phenomena of the emergence of the use of artificial intelligence in teaching and learning in higher education. It investigates educational implications of emerging technologies on the way students learn and how institutions teach and evolve. Recent technological advancements and the increasing speed of adopting new technologies in higher education are explored in order to predict the future nature of higher education in a world where artificial intelligence is part of the fabric of our universities.

Relevance: It shows how artificial intelligence is already used in higher education; it might give us some learning points while developing our own artificial intelligence.

Title: The roles of models in Artificial Intelligence and Education research: a prospective view

Link: https://telearn.archives-ouvertes.fr/hal-00190395/

Abstract: In this paper I speculate on the near future of research in Artificial Intelligence and Education (AIED), on the basis of three uses of models of educational processes: models as scientific tools, models as components of educational artefacts, and models as bases for design of educational artefacts. In terms of the first role, I claim that the recent shift towards studying collaborative learning situations needs to be accompanied by an evolution of the types of theories and models that are used, beyond computational models of individual cognition. In terms of the second role, I propose that in order to integrate computer-based learning systems into schools, we need to 'open up' the curriculum to educational technology, 'open up' educational technologies to actors in educational systems and 'open up' those actors to the technology (i.e. by training them). In terms of the third role, I propose that models can be bases for design of educational technologies by providing design methodologies and system components, or by constraining the range of tools that are available for learners. In conclusion I propose that a defining characteristic of AIED research is that it is, or should be, concerned with all three roles of models, to a greater or lesser extent in each case.

Relevance: It can be used to explain a model in which our artificial intelligence solution would be beneficial to use.

Title: Evolution and Revolution in Artificial Intelligence in Education

Link: https://link.springer.com/article/10.1007/s40593-016-0110-3

Abstract: The field of Artificial Intelligence in Education (AIED) has undergone significant developments over the last twenty-five years. As we reflect on our past and shape our future, we ask two main questions: What are our major strengths? And, what new opportunities lay on the horizon? We analyse 47 papers from three years in the history of the Journal of AIED (1994, 2004, and 2014) to identify the foci and typical scenarios that occupy the field of AIED.

Relevance: It can give us a quick and ordered view of what research has already been done on AI in education and where some possibilities lie for us (written in 2016).

Title: Towards Emotionally Aware AI Smart Classroom: Current Issues and Directions for Engineering and Education

Link: https://ieeexplore.ieee.org/abstract/document/8253436

Abstract: Paper about an emotionally aware AI smart classroom which can take over the role of a teacher.

Title: AI and education: the importance of teacher and student relations

Link: https://link.springer.com/article/10.1007/s00146-017-0693-8

Abstract: Paper about the difference in relationship between student-teacher and student-AI

Title: Designing educational technologies in the age of AI: A learning sciences‐driven approach

Link: https://doi.org/10.1111/bjet.12861

Abstract: How to develop an AI algorithm based on studies about how people learn.

Title: Effectiveness of Intelligent Tutoring Systems: A Meta-Analytic Review

Link: https://journals.sagepub.com/doi/10.3102/0034654315581420

Abstract: This review describes a meta-analysis of findings from 50 controlled evaluations of intelligent computer tutoring systems.

Title: Artificial Intelligence as an Effective Classroom Assistant

Link: https://ieeexplore.ieee.org/abstract/document/7742268

Abstract: Article about blended learning, wherein the teacher can offload some work to the AI system.

Title: Integrating learning styles and adaptive e-learning system: Current developments, problems and opportunities

Link: https://www.sciencedirect.com/science/article/pii/S0747563215001120

Abstract: Review on how learning styles were integrated into adaptive e-learning systems.

Title: Learning Computer Networks Using Intelligent Tutoring System

Link: https://philpapers.org/rec/ALHLCN

Abstract: This paper describes an intelligent tutoring system that helps students study computer networks.

Title: Mathematics Intelligent Tutoring System

Link: https://philpapers.org/rec/ABUMIT

Abstract: An intelligent tutoring system for teaching mathematics that helps students of all ages understand the basics of math.

Title: TECH8 intelligent and adaptive e-learning system: Integration into Technology and Science classrooms in lower secondary schools

Link: https://www.sciencedirect.com/science/article/pii/S0360131514002875

Abstract: The purpose of this research is to demonstrate the design and evaluation of an adaptive, intelligent and, most important, an individualised intelligent tutoring system (ITS) based on the cognitive characteristics of the individual learner.

Other groups with similar subject

http://cstwiki.wtb.tue.nl/index.php?title=PRE2016_3_Groep18: Elementary school. Made 4 small educational games for children.

http://cstwiki.wtb.tue.nl/index.php?title=PRE2017_3_Groep14: Elementary school. Made a simple math game for young children.

http://cstwiki.wtb.tue.nl/index.php?title=PRE2017_3_Groep8: High school. Made an adaptive gamified online learning system using Moodle. The goal of this group is similar to ours, but they focused more on gamification and less on personalizing the exercises for each student. They used Moodle as an open source online learning system. The big advantage of Moodle is the wide range of plugins that already exist, so it was possible to build upon those plugins. However, creating quizzes and exercises, especially ones with mathematical expressions, was difficult and time consuming. Many of the plugins they used had no documentation, which made it hard to make changes.

Currently available software

An overview of already existing software and their limitations

Getal & Ruimte

- Limited number of exercises, only a digitalized version of the exercises from the book.

- Does not remember previously made mistakes in questions.

- Does not repeat previously incorrectly made exercises.

- No hints and feedback after a question. Students must look up the answers in a digital book.

Khan Academy

- No specific feedback based on mistakes.

- Does not remember previously made mistakes in questions.

Wolfram Alpha Problem Generator

- No specific feedback based on mistakes.

- Does not remember previously made mistakes in questions.

- No automatic problem selection, users must decide when to go to the next level.

Mathspace

- Does not cover all the material of high school.

- Does not remember previously made mistakes in questions.

Why is our program better?

The software of Getal & Ruimte is specifically made for high school students, follows the structure of the book and covers all the material. However, it is mostly a digitalized version of the book with some adaptiveness. The program does not repeat incorrectly answered questions or commonly made mistakes. Newer programs like Khan Academy, Wolfram Alpha and Mathspace are smarter and were built from the beginning as online programs instead of starting from an existing book. Khan Academy has a system to decide when to go to the next level. Wolfram Alpha covers almost all the material and can give step-by-step solutions for all problems. Mathspace gives specific feedback and can also give feedback on intermediate steps. They all lack the possibility to repeat questions where the student had difficulty or made the same mistake.

Users, stakeholders and their requirements

Primary users: high school mathematics students

Our primary users will be high school mathematics students (or people who want to study this on their own). Mathematics is vital for developing abstract thinking and is applied in many ways in technical fields, and the skill of problem solving can be applied in many ways in life. At the same time, mathematics is often considered difficult by students. For these reasons we think mathematics is a subject where our web-based AI-enhanced learning tool can provide good value. Additionally, mathematics (like other hard sciences) allows for easier checking of answers than the language-based (short) essay answers that are required for social sciences. Vocabulary would be a suitable topic as well; however, we are unaware of a shortage of German or French translators, whereas there is a shortage in engineering and in the skilled trades. Since high school is the bridge between primary school and college, that is where our program could be most valuable. The introductory test to assess the mathematics level can incorporate primary school topics, and we could offer such exercises to the slightly more mature student as well, whereas primary school children are less self-directed.

By estimating the current level of understanding and the learning style (speed, etc.) of the individual student, we can offer a tailored learning experience that will help the student get quick feedback (and hopefully more positive results), which will help with building confidence in tackling (new) mathematics problems and might even make the subject more enjoyable. Using students to beta-test our program will be a useful way to interact with these users, since they might be less able to communicate exactly what it is that is lacking in their mathematics course. The proof of the pudding is in the eating: measuring success and especially engagement over time will show how well our program works. Once the students have an actual product to work with, they might give valuable feedback on why they kept using it, or why they stopped using it. Of course, here we need to take into account that some students might have learning difficulties that need more direct coaching, or are just plainly uninterested in improving their mathematical skill. Our program might help some of these students, but assuming it will be the mathematics panacea is unwise. We aim to have an early beta test of a prototype with students done at the end of the project.

- HAVO/VWO!

Primary users: high school mathematics teachers

Other primary users will be high school mathematics teachers. Students can of course start using the web program on their own, but if high school teachers find it valuable enough to recommend it to students, that could be a good sign. Of course we will have to consider their biases in didactics and their general mindset in terms of improving education (for some it might be lacking). Nevertheless, their input can be useful, for instance by finding out what in their experience are the main difficulties students have, and trying to adapt for those things in our program (content-wise, but also in terms of engagement). We will form a focus group of a few of these teachers to make a qualitative study of the difficulties of teaching mathematics. Their input will be used to determine the direction and attributes of our prototype. Later on we might get them to evaluate it (in combination with a beta test on students?).

Secondary users: Headmasters

Headmasters are stakeholders, since they have a say in the way mathematics is taught in their school. Financial cost will always be in the back of their minds, and as such they will critically assess the performance, robustness and scalability of the program. But they are clearly concerned about the rates at which students progress through key courses like mathematics (in the Netherlands it has higher requirements than some other courses in terms of passing classes and graduating). If our program can help with that, this is an opportunity. Maybe our program’s introductory test can be used as the intro test for new students, and the program can help bridge the gap (the school may decide to use other ways to help these students as well). Depending on the school, the headmasters may also have didactical views that are key to the identity of the school and that may or may not match with what we decide to use in our program. Given the diversity in education-land, this simply means there will always be some headmasters who are less enthusiastic about adopting our program. It could be tempting to go with the majority, but we have to independently assess whether the majority is correct; maybe the majority view is related to the problems in teaching mathematics.

Tertiary users / stakeholders

Ministry of Education

At a more distant level, the ministry of education has similar concerns as the headmasters in terms of money spent and passing rates, but it is also bound to more ideological/didactic points of view that are determined by parliament and the current minister, though on the other hand the bureaucracy itself might also have a mainstream point of view that is somewhat different. These views will somewhat affect the chances of our program ultimately getting adopted in individual schools, for instance if certain funding is allocated to, or withdrawn from, computer-based mathematics/learning aids (with certain requirements, etc.). However, the ministry does not determine which particular teaching aids a school must use.

(Technical) Universities / STEM departments

Technical universities and STEM departments at other universities have two stakes. The first is a higher level of mathematics ability of incoming students, since it is the basis on which many majors (if not all) depend. This could save money in terms of additional efforts, and can bring in more money (if students progress/graduate quicker). Secondly, the more engaging mathematics program we aim to develop might induce more students to choose a technical university or a STEM major instead of an alpha or gamma major.

(Tech) companies

Given the lack of workers in the skilled trades and in engineering, technical companies have a clear stake in students being better at (applied) mathematical problem solving. Such skills can in fact be useful in many jobs, so companies in general might benefit, although it might sound less interesting than clean-desk or scrum or feng shui.

Approach/milestones/deliverables

We will start with some up-front research on didactics and on how to apply it in the web page we want to create. While doing this research we will start working on the web page. We are planning to build some sort of web page or program. This artifact will have some sort of artificial intelligence which keeps track of the skill level of the student and gives exercises matching that skill level. After finishing the research on didactics, we will present the proposal for our artifact to several high school teachers. We want their input, as the artifact is built for their purposes. We will then apply the given advice to our artifact. Lastly, we plan to test our improved application in use: we will go to the same (or other) high school teachers and ask if we can test it in their classes. We will then draw a conclusion and finish the research.

Our milestones will be the finish of our research, the alpha version of our application, then the comments of the teachers, then the beta version of our application. The findings of the test subject and finally the final version.

Our deliverables will be a research about the current software and possible use of AI in education, the findings we got from talking to teachers, the test results found when testing on students and finally our artifact, described on this wiki. Furthermore, we deliver a presentation on our project. (Note: we ended up not using artificial intelligence for our project, it was the direction we decided to study in the first week).

Requirements

- Gives students individualized support such as hints, feedback, and problem selection

- Hints and feedback based on the learning style of the student (Felder and Silverman model)

- Recognizes common mistakes and gives explanation if those mistakes are made multiple times

- Repeat previously incorrectly made questions

- Simple, intuitive and motivating user interface

- Consistency across all pages

- No distracting elements

- Motivates students to make exercises

- Shows progress of different modules

- Level of the exercises matches the level of the student

- Collaborative learning

- Students can help each other with exercises

- Competitive gamification

Using an adaptive collaborative learning system can help students learn the subject and also motivate them[1].

Questions and feedback can be personalised for every student's learning style by using the Felder and Silverman model[2]. This model describes four learning categories, where each category is characterized by two opposite attributes. The four main categories of the Felder and Silverman model are the following:

- Sensing versus Intuitive

- Visual versus Verbal

- Active versus Reflective

- Sequential versus Global

Course satisfaction has a significant effect on performance but performance does not have a strong positive effect on course satisfaction. Previous online learning experience influences self-regulated learning directly. [3]

Motivation and emotion significantly influence student learning experiences, including achievement, satisfaction, and passing vs. nonpassing; whereas the use of learning strategies did not.[4]

Concept

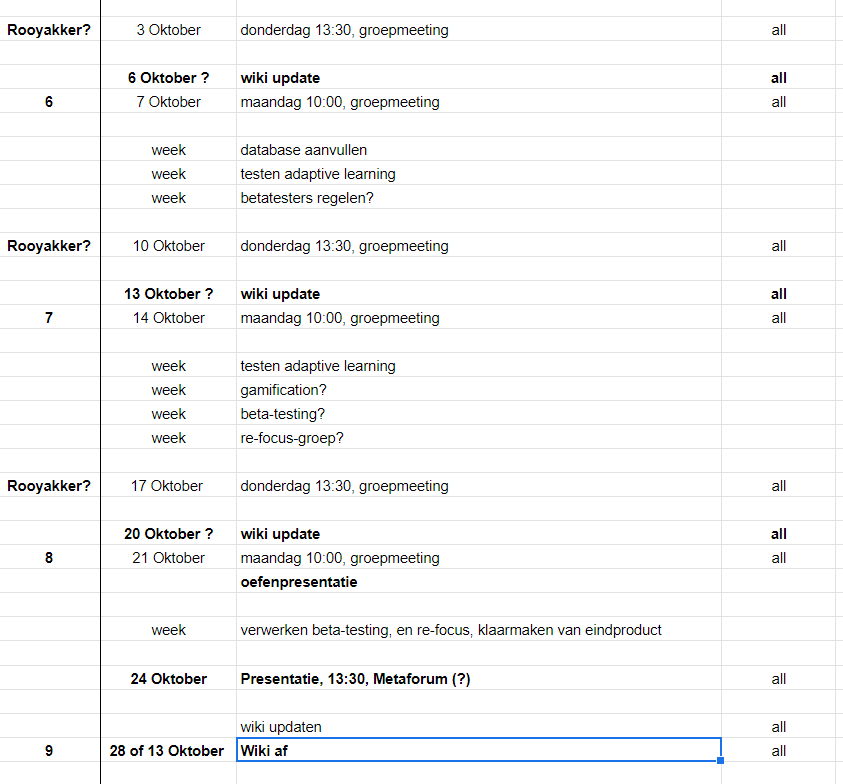

Hierarchy of mathematics modules

In the figure below is a sketch of what the structure of the program can look like. The modules might be related in more complex ways; this we need to assess. Modules can have sub-modules. The number of exercises is one key aspect in attuning to the individual learner.

Didactics of mathematics

Desk study: Getal en Ruimte studybook

To orient ourselves in the well-established mainstream didactic methodology, we ordered a book from the most used mathematics book series in the Netherlands: "Getal en Ruimte". We ordered the first book for VWO 3, since we considered that to be an interesting class: the one before the choice for the alpha (maatschappij) or beta (wetenschap) direction is made. We decided to focus on the material of the first chapter, since our project ran during the beginning of the school year. This choice would allow us to let some students in that year try our software at the end of the project, to get some user feedback. The topic of linear equations also lends itself to our purposes, since we do not intend to recreate Wolfram Mathematica-like problem-solving tools; instead we wanted to focus on the development of an adaptive learning program, with mathematics as the subject.

Qualitative study: focus group

In order to understand the problems with teaching high school math, a focus group will be held with a few teachers. This qualitative approach will give us valuable in-depth knowledge of the practice of the didactics of mathematics. In this short time period it is more useful than a small questionnaire, which generally gets even fewer responses. In a focus group the participants can all add to the discussion and react to each other, and the interviewer can ask more suitable follow-up questions.

The invitation mail

On Thursday the 12th the following mail (translated here from the Dutch original) was sent to 17 secondary HAVO/VWO schools in Eindhoven and the surrounding area.

"Invitation to a focus group on mathematics software

We are a group of three third-year bachelor students at Eindhoven University of Technology who would like to get in touch with HAVO/VWO mathematics teachers for the development of an online mathematics aid. We are working on a two-month project in which the wishes of the users of technology are central.

By means of a group discussion of about an hour with a few mathematics teachers, we would like to discuss which problems they experience in class and in what way individual-oriented software could help them with these. We would gladly come to your school for this conversation.

With the help of this focus group the goal of our prototype will be determined. In consultation with the school, we would like to have students (briefly) test this prototype a few weeks later.

We would like to hear whether one or more mathematics teachers at your school are interested in this conversation!

Kind regards,

Peter Visser, also on behalf of Tom Verberk and Ruben Haakman"

Responses

From four schools (Eckart college, Were Di college, Carolus Borromeus college and Stedelijk college) we received positive reactions, all with groups of two or more teachers. Due to their full agendas and time constraints, we decided it would be easier to have separate focus-group conversations at each school. In this way the different didactic methods of the schools can be discussed more in depth as well. We could use results from earlier talks in later talks to have some (one-way) feedback between teachers. Two more schools reacted, only to indicate that they did not have time, though they found the project interesting. In the case of no positive reactions, these schools would have been called to follow up on the mail. However, given the positive reactions, this was not necessary.

Due to some delay between mails, and the busy schedules of the teachers, the two interviews that materialised were held on the 30th of September (4 teachers, Eckart college) and the 1st of October (2 teachers, Were Di college). Our contact at Carolus Borromeus took much longer to react, and eventually did not react at all, so sadly this option had to be removed from our focus group. The fourth school reacted only in the second-to-last week of our project, and any feedback from this meeting (likely to occur even later) would come too late to be useful in our prototype development.

Preparation

A question list has been prepared, with possible follow-up aspects, to guide the discussion of the teachers in the focus group, and to try to maximize the useful information for our design choices. The points will not be checked off like an interview, but are a guide for the discussion. The concept of qualitative studies, and specifically the focus group (or group discussion) format, has been studied with the help of a basic textbook (An Introduction to Qualitative Research: Learning in the Field - Rossman & Rallis). The question points and sub-points are shown below, translated from Dutch. The discussions themselves were held in Dutch, since the subjects and interviewer are Dutch and this improves the quality of the discussion. First the interviewer will briefly introduce himself and explain the project and the goal of the discussion.

Note: Due to the relatively slow process of setting up meetings (due to slow mail contact and full teacher agendas), the interviews happened later in our project than we had envisioned. For this reason the nature of the interview changed somewhat. The initial question list was still used, but relatively less time was spent on these questions, and that time was used to ask more specific questions about the design decisions we had already made (in order to progress in our limited-time project). These questions fitted naturally after the initial questions.

1. short introduction of each teacher: education, experience (years, classes, levels)

2. didactic method of the school: book, teaching, aids - strong points - points for improvement

3. individual methods of teachers

4. problems with conveying mathematics?

- whatever comes up!

- concentration?

- mental arithmetic vs calculator?

- amount of practice (outside class)?

- differences between students?

5. In what way do you try to deal with these problems, what works and what does not?

6. In what way could an (online) individually adaptive program contribute to this?

7. What is your ideal picture of such a program?

8. specific questions about the goal of the program

-- diagnostic test

-- strong students: work ahead

-- weak students: extra practice

-- replacing part of the practice with the book

-- classroom testing (immediately practicing mental arithmetic?)

-- completely independent

-- Repeating the theory in the program, or rather a focus on practice?

9. Ways to keep students 'engaged' (over a longer time)?

10. The idea of partial hints for helping to solve a problem (instead of simply the answer or the whole worked solution)?

11. Testing material from previous chapters during the year to keep knowledge current?

12. Mainly a focus on students who need more practice?

13. function: extra practice material, in time a replacement of the exercises in the book, while the theory book and the teacher's explanation remain necessary?

14. Further aspects that come up.

Results

Both interviews were recorded, so that the interviewer could focus on the conversation instead of note-taking, and so that parts where several people talked at once could be listened to again afterwards. For the ease of this report, these recordings have been summarised below, with a focus on distilling the general feedback on functionality and requirements.

Eckart college (Eindhoven): Interview-audio: https://drive.google.com/open?id=169vw4nwV3GwrsKWE_7hQmYYKpcWNHXN7

In general the 4 teachers were positive about the idea. They already have some kind of software, but without hints, and they have to program in the exercises themselves. I got the impression that they do not really use it. They did not consider mental arithmetic a real problem, because they do not allow calculators in class, so students already develop the skill this way. They were very positive about the idea of hints, as an improvement on an answer booklet (or the whole worked solution). The idea that this software gives them a better diagnostic tool also appealed to them. Furthermore, they found it mainly interesting as a supplement to the lessons, and as a (partial) replacement of the exercises from the book.

The problems differ considerably between levels and years. Specifically for VWO 3 there is a split between those who will probably take wiskunde A and those who will probably take wiskunde B. One group needs more explanation and repetition of the simpler exercises; the other group grasps things sooner (and can be difficult in class out of boredom).

For that reason the teachers also want an application for those stronger students: not necessarily 'extra' work, but replacement exercises that are more interesting, or similar. (We had thought of this ourselves, but it falls outside our prototype.)

Another tip is that students should be able to save an exercise (or exercise type), so that they can then easily show it to the teacher in class.

The teachers also thought it a good idea if students can help each other (online) with an exercise, and perhaps earn some kind of points for it. (This seems to fall outside the prototype, but we can include it in the points for improvement.)

Another tip was that we should divide the material into small blocks, so that it does not take too long, and make sure that students can see how far they are, e.g. with a progress bar.

One teacher also wanted to organise a kind of question hour outside class, where students can ask questions about the software. The other three were not enthusiastic about this. They feel the software should mainly serve to help students work towards independent learning. The same holds for e-mails with questions about the software.

They did think there should be a feedback mechanism to report technical problems with the software or the exercises.

For the prototype test there are two teachers with a VWO 3 class. They are between a holiday and a test week, so they have no time to try the prototype in class at the beginning of week 43. They did think it a good idea to forward a link (after they have looked at it themselves). Because the test covers chapters 1 and 2, the prototype is most useful for the students (and thus for user feedback) if our chosen content for chapter 1 is mainly a kind of extended diagnostic test. The extension then lies in the kinds of questions, and in repeating questions that were answered wrongly (or solved only after hints).

Furthermore, we should use a 'nickname' instead of a login name, because of the privacy of students, who are often under 16 years old. According to them, a question about class or teacher would be acceptable, to keep things apart, and because this cannot be traced to individuals.

A good question was also whether we have studied the current offering on the market. I think that is something we should discuss in the presentation and/or wiki.

Were Di college (Valkenswaard): Interview-audio: https://drive.google.com/open?id=16ZZhRjL8b0mgmnFvsUzFZEmQQ3dZZr0L

In general the 2 teachers were positive about the idea. They too already have some kind of software, but without hints, and they have to program in the exercises themselves. I got the impression that they do not really use it. They did consider mental arithmetic a real problem, as part of a general lack of arithmetic skills when students come from primary school. The idea of a diagnostic test for new students can therefore be useful here, so that one can respond faster and in a more targeted way to gaps in these skills. They also thought diagnosis for new classes (new to a teacher) a good idea.

They were very positive about the idea of hints, as an improvement on an answer booklet (or the whole worked solution). Furthermore, they found it mainly interesting as a supplement to the lessons, and as a (partial) replacement of the exercises from the book.

The teachers also want an application for the stronger students: not necessarily 'extra' work, but replacement exercises that are more interesting, or similar. (We had thought of this ourselves, but it falls outside our prototype.) A problem here is how large the differences become, and to what extent a single lesson is still adequate for such large differences.

They found the idea of saving an exercise (or exercise type), in order to easily show it to the teacher in class, very useful.

Another tip was that we should divide the material into small blocks, so that it does not take too long, and make sure that students can see how far they are, e.g. with a progress bar. According to the teachers, this kind of 'gamification' (turning it into a game) could make it more interesting, especially for boys.

Neither teacher has a VWO 3 class, and at this school there is no upcoming test on chapters 1 + 2. So the test idea for the other school is not so useful here. We can forward the link to the program to one teacher, who is willing to pass it on to the relevant teachers, but I think we should not expect much from this, because for the students it is then pure repetition without a 'necessity' such as a test.

Here too a good question was whether we have studied the current offering on the market. I think that is something we should discuss in the presentation and/or wiki. They themselves, however, had not yet heard of this kind of software.

Discussion and implementation

The importance of the following requirements has been affirmed with the help of the focus group:

- exercise practice tool (as opposed to theory-laden)

- use contextual hints to help students learn (compared to merely showing the answer or the whole derivation)

- repeat exercises until the student has solved a few without hints

- show progress to students

- the diagnostic functionality for teachers: student performance overview and details

- for later: exercises for the faster students, so they can spend their time in high school in a worthwhile way

The following requirements have been added with the help of the focus group:

- easy to use for teachers (an end-product, no need to program in questions, etc.)

- use nicknames instead of 'name' with respect to privacy of students under 16.

- keep the (sub)modules short enough, so that students can complete one in a timespan that fits their concentration span

- ability for students to save an exercise, in order to discuss it with the teacher

- feedback option, so students can report problems to the developers

- later on: possibility to discuss problems on an online platform?

Design choices

Homework-support tool

After studying the didactic articles, the Getal & Ruimte book, and the focusgroup discussions, we decided that our mathematics software would be a homework-support tool, or an assisted homework tool, instead of a full-fledged independent studies program. The main problem for students is that they need to spend enough time on their homework, not that the teachers are doing a very bad job in explaining the theory, or that the book does not explain the theory that well. Doing it better than the current school would require a breakthrough on didactics on our part, which has not much to do with software, and more with philosophy and psychology.

The reality of current students is that they have two tools for understanding the theory (teacher and book), but only one real tool for doing homework, which is checking if their answer is correct (or figuring out why that answer is correct). They could also ask the teacher in the next lesson, but students seem to do this very little: according to the teachers we spoke with, they write question marks in their notebooks, but then just skip to the next question. Of course, teachers would be unable to answer all such question marks in limited classroom time. For this reason, helping students do their homework with software is our chosen goal for this project.

New software

Based on our review of current software, we decided that implementing our ideas about adaptive learning required new software, where we could easily manage users, and add functionalities in the programming language, Python, we (to a greater or lesser extent) had experience in. Furthermore Python is a much used language, with extensive documentation and importable modules such as SciPy.

Topic

For the prototype we wanted to choose one chapter. We decided that an interesting group would be VWO 3, since those students face the choice to go into the beta or the alpha direction (with their respective math levels); if successful, the software might help recruit more people into the beta sciences, perhaps even prospective TU/e students. In order to test the prototype with the students of the teachers we interviewed, we decided to pick the first chapter of the book, linear equations. We bought this book to study the widely accepted didactic method ‘Getal & Ruimte’ as an example and stepping stone.

Adaptive hints

One main aspect of our concept of adaptive learning is adaptive hints: based on their errors, students can choose to get a tip on how to solve the type of problem, instead of either looking up the answer or looking up the fully worked-out solution. Especially for students who have difficulties with math, ‘reverse-engineering’ the method to get to the right answer might not be the best way to learn mathematics, and seeing the whole solution does not teach one to think through problems. In our software we want to give them a hint and let them redo a similar question (with different numbers). This can happen with multiple errors in a row, from fundamental ones to a mistake with a minus sign in the final answer. This is an attempt to automate the kind of ‘activating’ tips that (good) teachers or homework tutors tend to give. Another way we give adaptive hints is by telling a student when he has made a particular type of error multiple times. This will help him understand what the mistake is, and we can suggest looking up the theory in the book, or asking the teacher about it in the next class. This is meant as a fail-safe, but is also implemented in an activating way.
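The repeated-error indication described above can be sketched in Python as follows. This is only an illustration under stated assumptions, not the code from our repository: the class name `ErrorTracker`, the error labels and the threshold of two occurrences are hypothetical choices.

```python
from collections import Counter

REPEAT_THRESHOLD = 2  # assumption: warn once the same error type occurs twice


class ErrorTracker:
    """Counts error types for one student and flags repeated ones (hypothetical helper)."""

    def __init__(self, threshold=REPEAT_THRESHOLD):
        self.threshold = threshold
        self.counts = Counter()

    def record(self, error_type):
        """Register one mistake; return a message once it has been repeated."""
        self.counts[error_type] += 1
        if self.counts[error_type] >= self.threshold:
            return (
                f"You have made a '{error_type}' mistake "
                f"{self.counts[error_type]} times. Look up the theory in the "
                "book, or ask your teacher in the next class."
            )
        return None  # first occurrence: only the normal hint is shown


tracker = ErrorTracker()
first = tracker.record("minus sign")   # no extra message yet
second = tracker.record("minus sign")  # repeated: extra message
```

Keeping the count per error type, rather than one global error count, is what lets the message name the specific mistake.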

Adaptive repetition

Another key aspect of adaptive learning is adaptive repetition. We decided to give this two forms. The first is on the level of questions (question-types, really). In order to make sure the student has understood the particular solution strategy for a question type, we aim to make the student give a correct answer three times. This means that the amount of repetition depends on how well students do the exercises: if they get it right from the start, and work diligently, they can move on after 3 questions of one type. However, the more a student struggles with applying concepts, or with working problems out consistently, the more repetition the student will get. This works somewhat similarly to the book, which often has subquestions that are similar. The faster students can usually skip half of them, whereas the students who struggle might need all of them.

Another form of adaptive repetition is our idea to make the size of the diagnostic test depend on how well a student has done in that module, with a basic minimum. Furthermore, our idea is to also use the program to repeat exercises from previous module(s) during the final testing of the next module, so that the various topics in a year stay somewhat familiar, which is useful for follow-up chapters (in the same or a next year). This repetition can also depend on how well students did a particular module, maybe also on the grades of school tests, and perhaps on how well a student generally seems to retain knowledge over longer periods. These latter forms of repetition go beyond the scope of our prototype.
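The first form of repetition can be sketched as a small counter per question type. This is a minimal illustration, assuming that the three correct answers do not need to be consecutive; the class name `QuestionTypeProgress` is hypothetical and not taken from our repository.

```python
REQUIRED_CORRECT = 3  # from the text: three correct answers per question type


class QuestionTypeProgress:
    """Tracks one student's answers for one question type (hypothetical helper)."""

    def __init__(self, required=REQUIRED_CORRECT):
        self.required = required
        self.correct = 0
        self.attempts = 0

    def record(self, is_correct):
        """Register one answered question."""
        self.attempts += 1
        if is_correct:
            self.correct += 1

    @property
    def mastered(self):
        """True once the student may move on to the next question type."""
        return self.correct >= self.required


# A fast student is done after 3 questions, a struggling student needs more.
fast, struggling = QuestionTypeProgress(), QuestionTypeProgress()
for outcome in (True, True, True):
    fast.record(outcome)
for outcome in (False, True, False, True, True):
    struggling.record(outcome)
```

Here `fast` needs 3 attempts and `struggling` needs 5 before `mastered` becomes true, which is exactly the adaptive effect described above.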

Progress, but not score

We decided that students would not get a score for how many good or bad answers they gave, since the aim is to foster learning, not grading. We want to indicate how many good answers they have given on a particular question, when they are working on it, so that they know when they could go to the next question-type. Furthermore we can indicate how many question-types there are in a module, and where they are in that regard. A percentage would not work well, since that will change depending on each good and wrong answer.

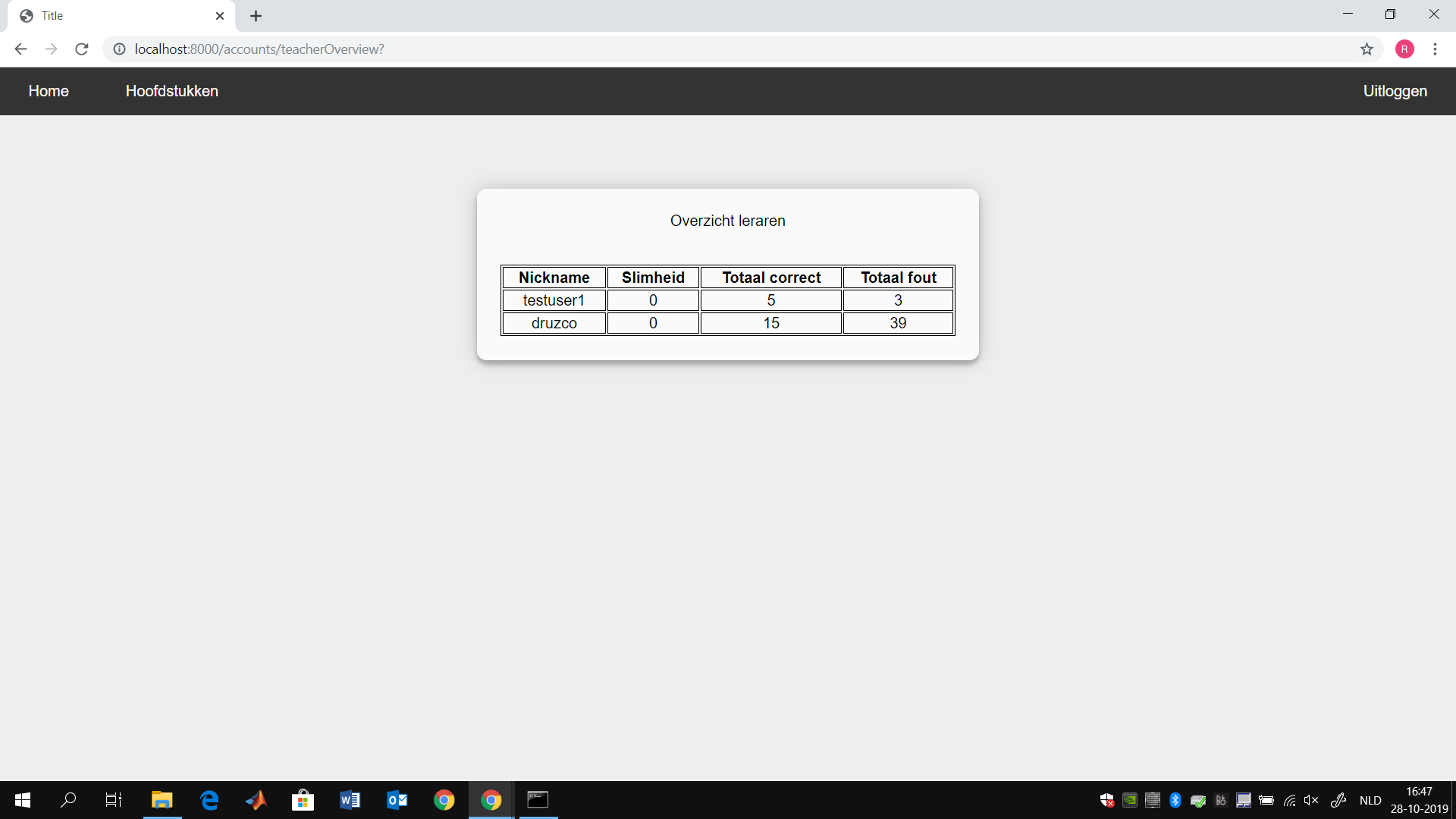

Teacher overview

We decided that teachers would be helped by an overall overview of the performance of students, so they can see how many questions each student has attempted, and how often they made errors. They can quickly see which students have not done anything, which ones are struggling and which ones are doing very well. This is something teachers like to have, especially in the beginning of a school year, but also to track changes in terms of effort. Furthermore we could make an overview for each question, showing which ones seemed most difficult for the students.
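As an illustration of such an overview, the sketch below classifies students from their number of attempted questions and errors. The function names, the input format and the error-rate threshold of 50% are assumptions made for this example; the real overview reads its numbers from the database.

```python
STRUGGLE_RATE = 0.5  # assumption: half or more of the answers wrong counts as struggling


def classify(attempted, errors):
    """Return a coarse status for one student."""
    if attempted == 0:
        return "not started"
    if errors / attempted >= STRUGGLE_RATE:
        return "struggling"
    return "doing well"


def overview(rows):
    """rows: iterable of (nickname, attempted, errors) tuples."""
    return {nickname: classify(attempted, errors)
            for nickname, attempted, errors in rows}


example = overview([("panda", 0, 0), ("otter", 10, 7), ("lynx", 12, 1)])
```

Nicknames are used as keys here, in line with the privacy requirement from the focus group.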

Question-types and implementation

We selected a few question-types for our program from chapter one of the ‘Getal & Ruimte’ book for VWO 3. We selected question-types that would be easier to program, so we did not select anything with graphs. This is because we wanted to focus on showing our adaptive learning system idea, not on programming such images. Furthermore, based on teacher feedback, we decided to skip word problems, and selected ‘exact’ problems. Next, we wanted a logical progression in question difficulty, which is also the didactic method of the book itself.

In the beginning we selected questions from all 5 paragraphs, but eventually we decided that we would only showcase a few questions, since each took quite some time, even after the implementation basics were set up. This had two causes: first, the need to make sure answers were whole numbers, since that is the method of the book as well, to ensure students can do the calculations in their head, and second, the increased complexity with each question-type.

Below, for each question we will show an example, the generalized form, and discuss the way in which the right answer was pre-calculated.

Question 1: simplify (Dutch: herleid)

- example: 0x + - 10 + 9x + - 1

- solution: 9x - 11

- generalized: ax + b + cx + d

- calculation: (a+c)x + (b+d)

Implementation: The variables a, b, c, d are random integer values, which can be positive or negative. We ensured that the constants would not be zero here, since that would be too trivial; a zero in front of an ‘x’ is less trivial (for this level). We split the answer into two parts, one for each sum of variables. Based on these two parts, we can give hints about the summation of the x-terms and of the constants (both, or one of them, being mistaken).

Question 2: simplify so that only x-terms are on the left

- example: -9x + - 5 = -1x + 1

- solution: -8x = 6

- generalized: ax + b = cx + d

- calculation: (a-c)x = (d-b)

Implementation: This question is quite similar in terms of programming. We did make sure not to let the two x-coefficients (a and c) have the same value, since that would result in a trivial question.

Question 3: solve the linear equation for y

- example: -13y + - 3 = -12y + 4

- solution: y = -7

- generalized: ay + b = cy + d

- calculation: y = (d-b)/(a-c)

Implementation: This question is more difficult to make, because of the division and the requirement that the answer y be an integer. So to calculate the variables, we start with a random integer answer and make sure it is not zero, then give the denominator (a-c) a random value, and make the numerator (d-b) equal to the product of the answer and the denominator. To make sure it checks out, a is defined as the denominator plus c (a random value), and d is defined as the numerator plus b (a random value). Furthermore we made sure neither term of the division would be zero.
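Working backwards from the answer, as described for question 3, can be sketched as follows. This is a minimal reconstruction for illustration, not the code from our repository; the function names and the value range of -20 to 20 are assumptions.

```python
import random


def nonzero_int(rng, low, high):
    """Draw a random integer in [low, high] that is not zero."""
    value = 0
    while value == 0:
        value = rng.randint(low, high)
    return value


def generate_question_3(rng=random, low=-20, high=20):
    """Return (a, b, c, d, answer) for ay + b = cy + d with an integer answer."""
    answer = nonzero_int(rng, low, high)       # start from the answer
    denominator = nonzero_int(rng, low, high)  # this will be a - c
    numerator = answer * denominator           # this will be d - b
    c = rng.randint(low, high)
    b = rng.randint(low, high)
    a = denominator + c                        # so that a - c == denominator
    d = numerator + b                          # so that d - b == numerator
    return a, b, c, d, answer


a, b, c, d, answer = generate_question_3(random.Random(1))
question_text = f"{a}y + {b} = {c}y + {d}"
```

Because the denominator is drawn as a nonzero value, a and c can never be equal, and because both the answer and the denominator are nonzero, the numerator is nonzero as well.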

In terms of hints, we only get one value from the user. We check for errors ranging from a simple minus sign to mistakes in either the numerator (such as ‘b+d’) or the denominator (such as ‘a+c’). We can also see whether the person calculated these two terms correctly but then divided them the wrong way round. All of this allows wrong answers with decimals; to make this work we changed the input form of the button, and relatedly had to change the values to floats and use rounding.

Question 4: solve the linear equation for t

- example: -17(1t + 0) + 0(-8t + 0) = 1(-5t + -111) + -3(1t + -7)

- solution: t = 10

- generalized: a(bt + c) + d(et + f) = g(ht + i) + j(kt + l)

- calculation: t = (g*i + j*l - a*c - d*f) / (a*b + d*e - g*h - j*k)

Implementation: This question is much more difficult to implement, since it has 12 variables. The approach is somewhat similar to question 3, but making it work with many random variables, including zeros, was difficult to program. To ensure an integer answer, the programming again starts from the answer. To fix the denominator, a is calculated from the ‘bottom’ value and divided by b (which is set to 1, to prevent problems). The same is done for the numerator, with the help of ‘top’, by calculating i (which depends on a as well) and dividing by g (which is set to 1). We spent many hours on attempts to randomize all values and solve the problem from there in different ways, but eventually we settled for a slightly more practical way.
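The construction for question 4 can be sketched in the same spirit. The code below is a reconstruction for illustration only: the function names and the value range are assumptions, and requiring a nonzero answer is carried over from question 3 rather than stated for question 4.

```python
import random


def nonzero_int(rng, low, high):
    """Draw a random integer in [low, high] that is not zero."""
    value = 0
    while value == 0:
        value = rng.randint(low, high)
    return value


def generate_question_4(rng=random, low=-9, high=9):
    """Return ((a, ..., l), t) for a(bt+c) + d(et+f) = g(ht+i) + j(kt+l)."""
    t = nonzero_int(rng, low, high)       # integer answer, chosen first
    bottom = nonzero_int(rng, low, high)  # a*b + d*e - g*h - j*k
    top = t * bottom                      # g*i + j*l - a*c - d*f
    b, g = 1, 1                           # fixed at 1 so the divisions stay exact
    c, d, e, f, h, j, k, l = (rng.randint(low, high) for _ in range(8))
    a = (bottom - d * e + g * h + j * k) // b  # solves the 'bottom' equation for a
    i = (top - j * l + a * c + d * f) // g     # solves the 'top' equation for i
    return (a, b, c, d, e, f, g, h, i, j, k, l), t


coefficients, t = generate_question_4(random.Random(4))
```

Only a and i are derived, so the remaining coefficients (including zeros) stay random, at the cost of b and g always being 1.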

In terms of hints, a problem with a one-value answer given by the user is that backward calculation to find out where mistakes were made becomes basically impossible, in the sense that it can give false ‘positives’ when answers are wrong. This will be noted in the discussion. It is probably best to first make questions with step-by-step answers to familiarize students with the problems, and then give a similar question-type with only the one-value answer form, to ensure that they have learned the steps by heart; this can be done by repetition at a later point. Similarly, for diagnostic tests the current question form is more useful, since students would otherwise be given too many hints to solve problems. So distinguishing between homework-question and diagnostic-question answer forms is a future way to advance our goal of adaptive math software.
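The backward calculation, and the way it can become ambiguous, can be illustrated for the simpler question 3 (ay + b = cy + d, so y = (d-b)/(a-c)). The sketch below is an assumption-laden example, not our repository code: the function name, the hint labels and the rounding to two decimals are hypothetical.

```python
def diagnose_question_3(a, b, c, d, given, digits=2):
    """Return the labels of all known mistakes that reproduce the given answer."""
    numerator, denominator = d - b, a - c
    if round(given, digits) == round(numerator / denominator, digits):
        return []  # correct answer, nothing to diagnose
    # Each candidate mistake is stored as (label, top, bottom) of the wrong fraction.
    candidates = [
        ("sign error in the final answer", -numerator, denominator),
        ("constants added instead of subtracted", d + b, denominator),
        ("y-terms added instead of subtracted", numerator, a + c),
        ("terms divided the wrong way round", denominator, numerator),
    ]
    matches = []
    for label, top, bottom in candidates:
        if bottom == 0:
            continue  # this mistake cannot produce a number here
        if round(top / bottom, digits) == round(given, digits):
            matches.append(label)
    return matches


# The example from the text: -13y + -3 = -12y + 4, correct answer y = -7.
single = diagnose_question_3(-13, -3, -12, 4, 7)
# For 2y + 4 = 1y + 0 (answer -4), the wrong answer 4 fits two different mistakes.
ambiguous = diagnose_question_3(2, 4, 1, 0, 4)
```

In the second call two labels are returned, so the program cannot tell which mistake the student actually made. With the 12 variables of question 4 such coincidences become far more likely, which is why step-by-step answers are the better basis for hints.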

Technical aspects

In this part of the wiki the technical aspects of our application will be explained. First the foundations of our application will be discussed, next the database structure of the application will be discussed, thereafter the layout of the web page will be discussed. Following that specific methods used in the code will be viewed in more detail and explained in a clear and structured manner, lastly the interface of the application will be discussed.

The following is documentation for our github repository. To view the working application click the following link and do the steps described in the readme.

https://github.com/tomverberk/accounts

Foundation

Most of our application is programmed in Python 3. As a web framework we used Django, a free and open-source web framework written in Python. A framework is nothing more than a collection of modules that make development easier. The official project site describes Django as "a high-level Python Web framework that encourages rapid development and clean, pragmatic design". For the interface we used an application-wide CSS template.

The main application can be split into 3 parts: Login module, Question module and Teacher module.

Login Module:

The login module consists of the actual login mechanism. This includes a register form, a login form, a landing page (the page where you "land" when you enter the URL) and a home page.

Question module:

The question module consists of 2 main parts: the general question part and the actual question part. The general question part mainly contains methods that are used for all modules in general, or that are related to routing (e.g. selecting the current module). The actual question part is related to the individual questions.

The general question part contains: current module section, select module section.

The actual question part contains: all the separate questions, the answer pages to all the questions and the "answer next question" part.

Teacher module:

The teacher module consists of all the teacher functionality. This includes a teacher verification question and, once the teacher is verified, the student overview.

Database

An SQLite database was used to manage our data. To manage the data in the best way possible, without keeping unused data, we chose the following tables for our database.

Customuser

Customuser is the standard User database table that Django provides, adjusted to serve us the way we want. We added 3 extra values on top of the values that were standard. The standard data is given in italics, our new data is given in bold, and the type of data is given in brackets. All the data that is in the Customuser table is:

Id(integer): The Id that is given to a user.

password(varchar(128)): The password filled in by the user.

last_login(datetime): The last time the user has logged in (NULL if user has not logged in).

is_superuser(bool): If a user is able to access all pages (Not used in our website).

username(varchar(150)): The username the user filled in.

first_name(varchar(30)): The first name of the user (not used in our application due to privacy reasons, our focusgroup suggested this change for us).

last_name(varchar(150)): The last name of the user (also not used).

email(varchar(254)): The email of the user, filled in during sign up.

is_staff(boolean): Denotes whether a user is part of the development staff. Not used in our application (this would allow the user to access all the admin functionality, which is not something we want teachers to be able to do).

is_active(boolean): Denotes whether someone is active; this is checked based on the last_login time.

date_joined(datetime): The date and time the user signed up for an account.

general intelligence(integer): The intelligence modifier we keep track of to determine how smart someone is.

isTeacher(boolean): Boolean that states if a user is a teacher.

Module

The Module table is a simple auxiliary table that stores some data about the modules. The data in the Module table has to be changed via some sort of database inserter or management program. We did this beforehand, adding some modules to the database.

id(integer): The id that is given to a certain module.

title(varchar(200)): The title of a given module.

text(text): Some text explaining what the module is about. E.g. if a module contains quadratic formulas with 2 variables, the text for that module will say so.

module_user

The module_user table is where most of the actions in our database take place. It is the main link between the users and the modules. Every time somebody changes something in the database (except when adding a teacher or signing up), this table is accessed. As said, this table connects the users to the modules; it does so by keeping track of how many questions a student has answered correctly, wrongly, etc. It also changes the intelligence of the student for this module to better simulate how smart a student is. The table with its values looks as follows:

id(integer): The ID of the combination, such that it is easy to find. This ID is a unique value and is automatically assigned by the database when a module_user entry is created.

currentModule(integer): Denotes whether the user is currently active in this module. We made this an integer rather than a simple boolean so that we can keep track of which question of the module the user is working on, and not just the module in general.

amountCorrect(integer): The total number of questions the user has answered correctly in the given module.

amountWrong(integer): The total number of questions the user has answered wrongly in the given module.

amountHints(integer): The total number of hints the user requested in the given module (we later decided not to incorporate this).

moduleScore(integer): The score of the module.

mistake1(integer): The number of times the user has made mistake 1 in the given module.

mistake2(integer): The number of times the user has made mistake 2 in the given module.

mistake3(integer): The number of times the user has made mistake 3 in the given module.

mistake4(integer): The number of times the user has made mistake 4 in the given module.

mistake5(integer): The number of times the user has made mistake 5 in the given module.

currentQuestionHints(integer): The number of hints the user has asked for on the current question.

currentQuestionCorrect(integer): The number of correct answers the user has given to the current question.

module_id(integer)(ForeignKey): A foreign key which couples this table to the Module table.

user_id(integer)(ForeignKey): A foreign key which couples this table to the Customuser table.
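The table layout described above can be sketched with Python's built-in sqlite3 module. This is only an illustration, not the schema the application actually uses: the lowercase table names, the trimmed-down customuser table, the default value of 0 for every counter and the helper name open_database are assumptions. It also assumes that module_id refers to the Module table and user_id to the Customuser table, as the column names suggest.

```python
import sqlite3

# Hypothetical schema sketch; column names follow the lists above,
# defaults and table names are assumptions made for illustration.
SCHEMA = """
CREATE TABLE customuser (
    id INTEGER PRIMARY KEY,
    username VARCHAR(150) NOT NULL UNIQUE
);
CREATE TABLE module (
    id INTEGER PRIMARY KEY,
    title VARCHAR(200) NOT NULL,
    text TEXT NOT NULL
);
CREATE TABLE module_user (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    currentModule INTEGER NOT NULL DEFAULT 0,
    amountCorrect INTEGER NOT NULL DEFAULT 0,
    amountWrong INTEGER NOT NULL DEFAULT 0,
    amountHints INTEGER NOT NULL DEFAULT 0,
    moduleScore INTEGER NOT NULL DEFAULT 0,
    mistake1 INTEGER NOT NULL DEFAULT 0,
    mistake2 INTEGER NOT NULL DEFAULT 0,
    mistake3 INTEGER NOT NULL DEFAULT 0,
    mistake4 INTEGER NOT NULL DEFAULT 0,
    mistake5 INTEGER NOT NULL DEFAULT 0,
    currentQuestionHints INTEGER NOT NULL DEFAULT 0,
    currentQuestionCorrect INTEGER NOT NULL DEFAULT 0,
    module_id INTEGER NOT NULL REFERENCES module(id),
    user_id INTEGER NOT NULL REFERENCES customuser(id)
);
"""


def open_database(path=":memory:"):
    """Open a database, enable foreign key checks and create the tables."""
    conn = sqlite3.connect(path)
    # SQLite only enforces foreign keys when this pragma is switched on.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
    return conn
```

With foreign key checks enabled, inserting a module_user row for a user or module that does not exist raises sqlite3.IntegrityError.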

Other Tables

There were some tables that would have been helpful in the application but were not implemented in the final version due to lack of time. Among these are the Chapters table and the mistake table. The idea of the Chapters table was that each module would be part of a chapter. This table was made but eventually not used. Another idea was the mistake table, in which common mistakes for each chapter would be recorded to better keep track of the kinds of mistakes made.

Layout

In this section of the wiki the layout of our application is discussed. This is done by giving a brief explanation of each web page and the functionalities it has.

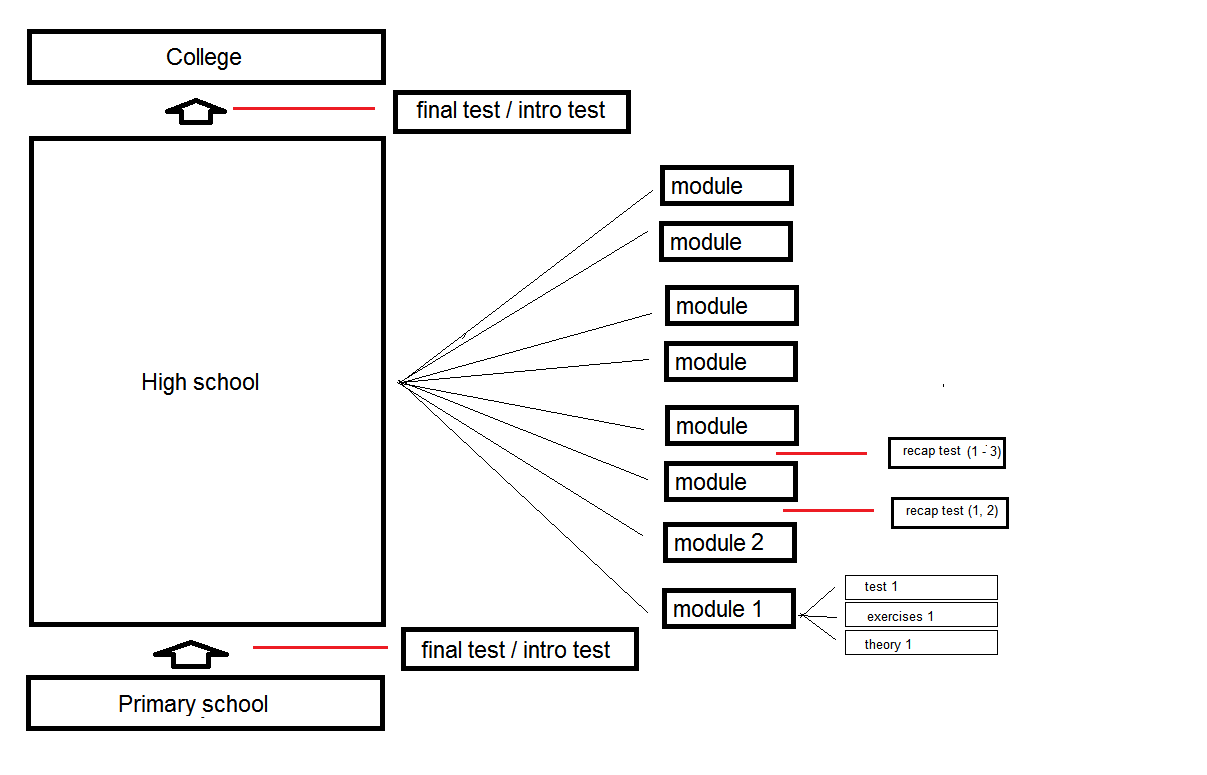

Landing page

The landing page is the page where you “land” when entering the given URL. From this page you can either log in or sign up for a new account.

Functionalities:

Log In Button: This button will redirect you to the login page.

Schrijf in Button: This button will redirect you to the sign up page.

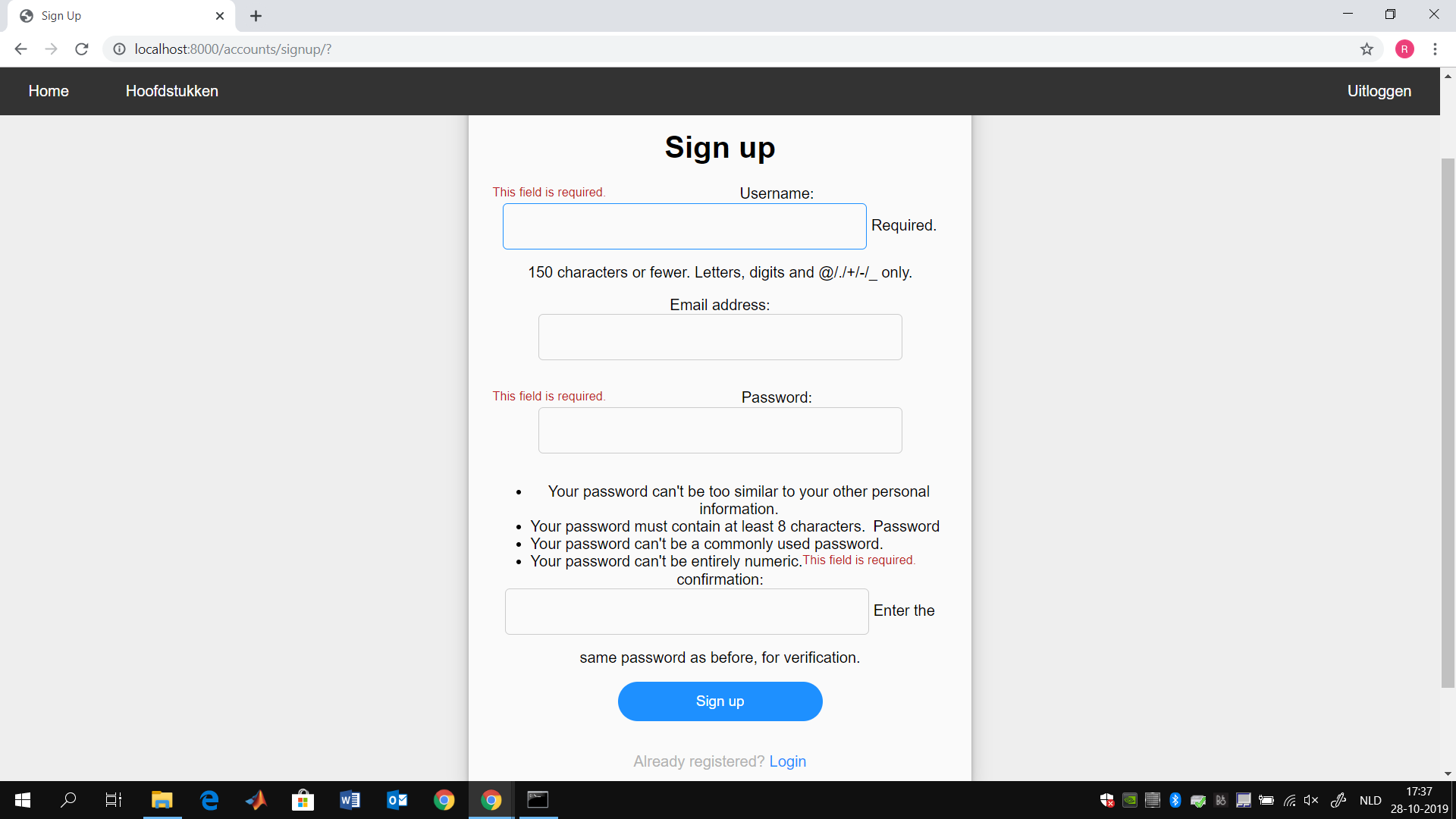

Signup page

The sign up page is the page where you make a new account. You do this by filling in the given form. Once the form is filled in correctly, the website creates a new account for this user. This includes an entry in the Customuser table and multiple entries in the module_user table (one for each module), both discussed in the previous section.

Functionalities:

Username field: In this field the user fills in a username, which must not yet exist in the database. There are no further restrictions on the username; all restrictions are stated on the web page.

Email address field: In this field the user fills in his/her email address. The field checks whether the input could be an existing email address (it checks for an example@example.example structure).

Password field: The user fills in his/her password. The field checks whether the password requirements stated on the page are met.

Repeat password field: The user has to repeat the password, so that an accidental typo is caught. The page checks whether the password is the same as the one entered before.

Signup button: When the signup button is pressed, the webpage starts the signup procedure once all the above checks give a positive result. The webpage then redirects the user to the landing page, where the user can log in.

Login button: This button will redirect to the login page. It is a simple shortcut for the user to take if it turns out he already had an account.
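The form checks listed above can be sketched in plain Python as follows. This is a minimal illustration, not the code of the application: the function names, the error messages and the regular expression are assumptions, and the password requirements are left out because they are only stated on the web page itself.

```python
import re

# Assumed pattern for the "example@example.example" structure:
# something, an @, something, a dot, something (no spaces, one @).
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def looks_like_email(address):
    """Return True if the address has an example@example.example structure."""
    return EMAIL_PATTERN.fullmatch(address) is not None


def validate_signup(username, email, password, repeated_password, existing_usernames):
    """Return a list of error messages; an empty list means the form is valid."""
    errors = []
    if username in existing_usernames:
        errors.append("username already exists")
    if not looks_like_email(email):
        errors.append("email address has an invalid structure")
    if password != repeated_password:
        errors.append("passwords do not match")
    return errors
```

The signup procedure would only start when validate_signup returns an empty list.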

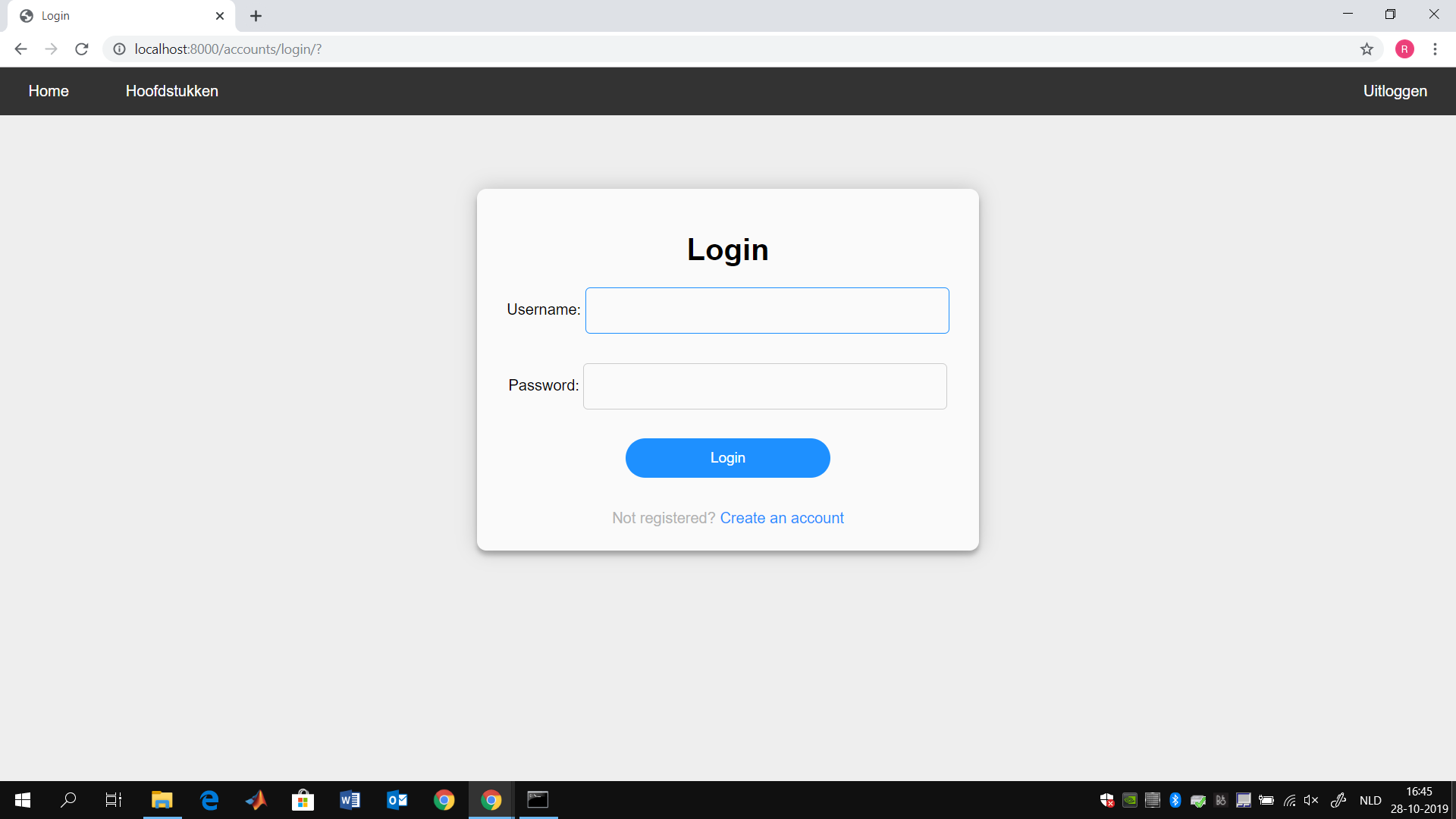

Login page

The login page is the page where you log in as a user if you already have an account. If the username or password is incorrect, the website gives a general error; the website makes no distinction between a wrong username and a wrong password.

Functionalities:

Username field: In this field the user fills in the username of their account on the website.

Password field: In this field the user fills in the corresponding password.
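The general error behaviour of the login page can be illustrated with a small sketch. It is not the login code of the application: the accounts dictionary, the function names, the error text and the use of PBKDF2 for password hashing are all assumptions made to keep the example self-contained.

```python
import hashlib
import hmac

GENERIC_ERROR = "Incorrect username or password."  # hypothetical wording


def hash_password(password, salt):
    """Derive a password hash with PBKDF2 (an assumed choice of algorithm)."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def check_login(username, password, accounts):
    """Return None on success, otherwise the same error for every failure.

    accounts is a hypothetical mapping: username -> (salt, password hash).
    """
    # Unknown usernames get a dummy entry, so both failure cases
    # follow the same code path and produce the same message.
    salt, stored_hash = accounts.get(username, (b"\0" * 16, b""))
    candidate = hash_password(password, salt)
    if username in accounts and hmac.compare_digest(candidate, stored_hash):
        return None
    return GENERIC_ERROR
```

Because a wrong username and a wrong password return the same message, the page does not reveal which usernames exist.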

Main functionalities once logged in

Once you have logged in as a user you can use the menubar at the top of your screen. This menubar is available at all the pages listed below. The buttons discussed in this subsection will therefore be available but not be discussed during the explanations of the pages that follow.

Functionalities:

Home button: This button redirects to the home page.

Hoofdstukken button: This button redirects to the module overview page.

Uitloggen button: This button will log the user out and redirect the user to the landing page.

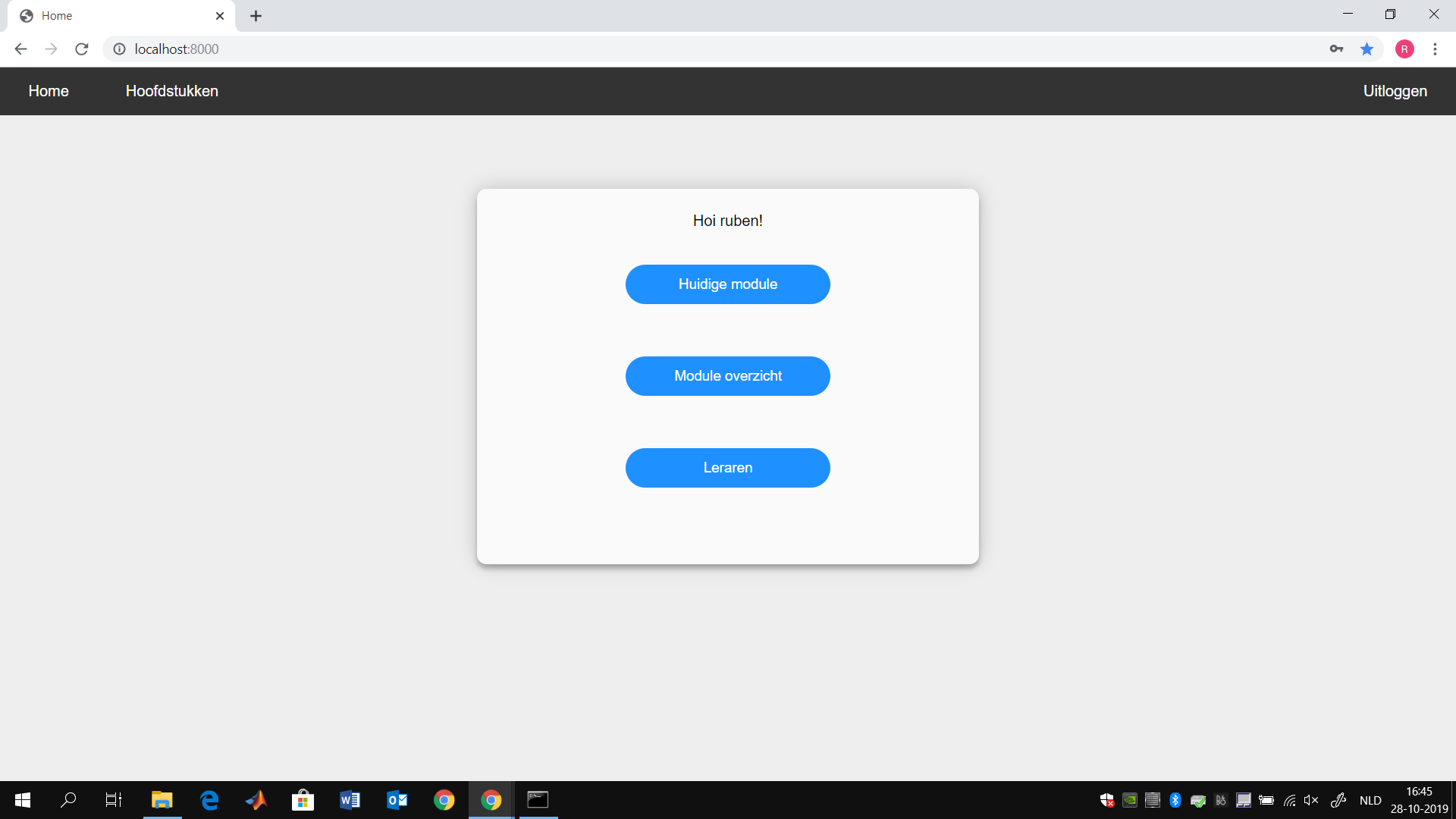

Home page

The home page is the page where the user lands when he has filled in the correct username and password. From here on he can access the different possibilities our application has to offer.

Functionalities:

Huidige module button: This button redirects to the current module the user is working on as explained in the “module_user” table section of the database.

Module overzicht button: This button redirects to the module overview page.

Leraren button: This button redirects to the teacher page when the user is not a teacher (discussed in user table of database) and redirects to the “confirmed teacher” page when the user is a teacher.

Module overview

From the module overview page users can pick specific modules they want to study a bit more. They can also look ahead at what is to come.

Functionalities:

Specific chapter button: Each button on this page redirects to a specific question. Within a specific module the user can select the question they want to answer.

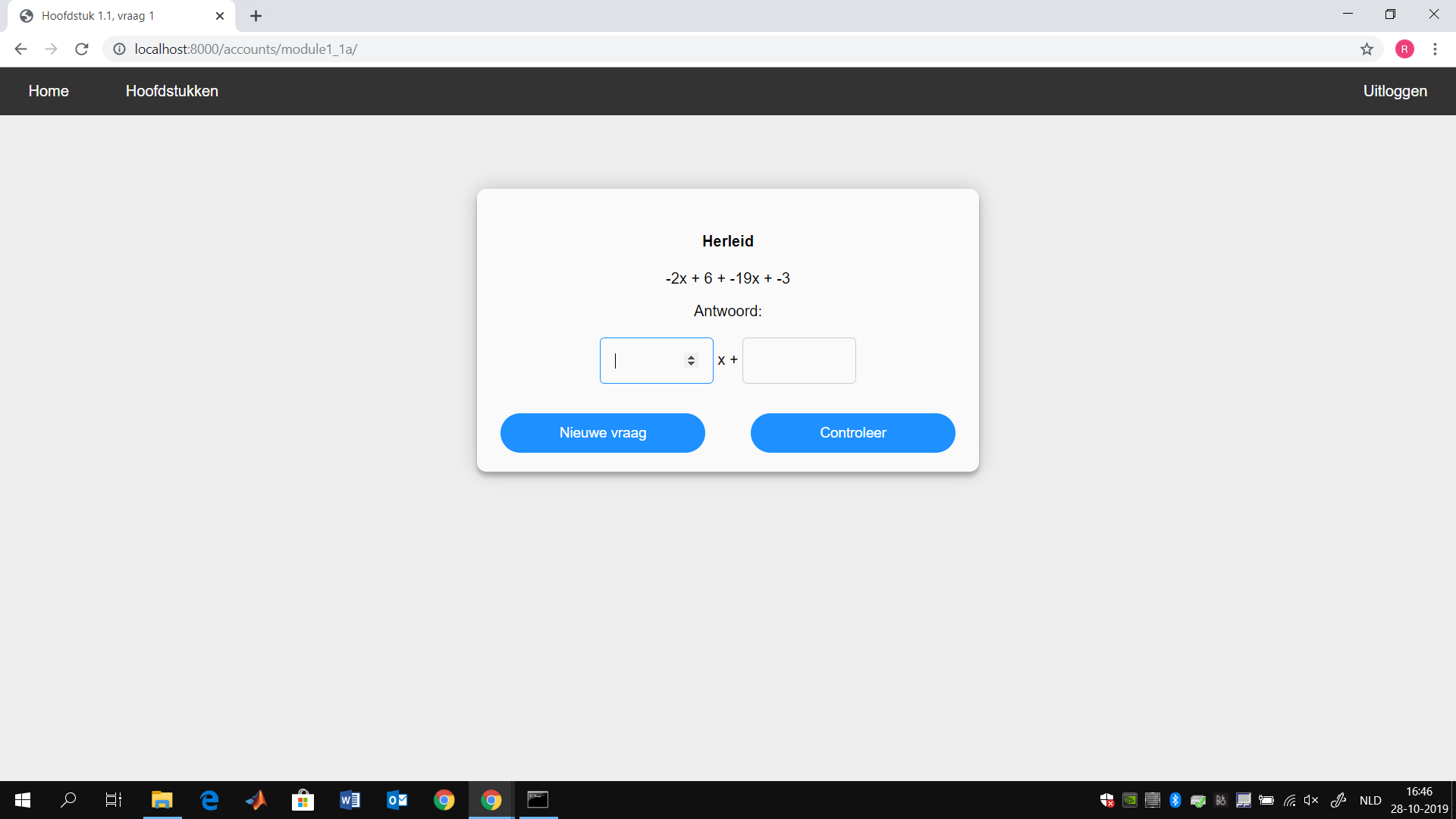

Question view

When answering a question the user always first lands on the question view page. On this page a question is shown with 1 or 2 number boxes in which the answers should be filled in. The user can then request a new question or check whether their answer is correct.

Functionalities:

Field 1: The first answer field, where the user should fill in the correct answer.

Field 2: The second answer field, where the user should also fill in the correct answer.

Nieuwe vraag button: This button will refresh the page, meaning that the same kind of question will be asked with different variables.

Controleer button: The answer to the question will be checked and the user will be redirected to the Question Answer page.

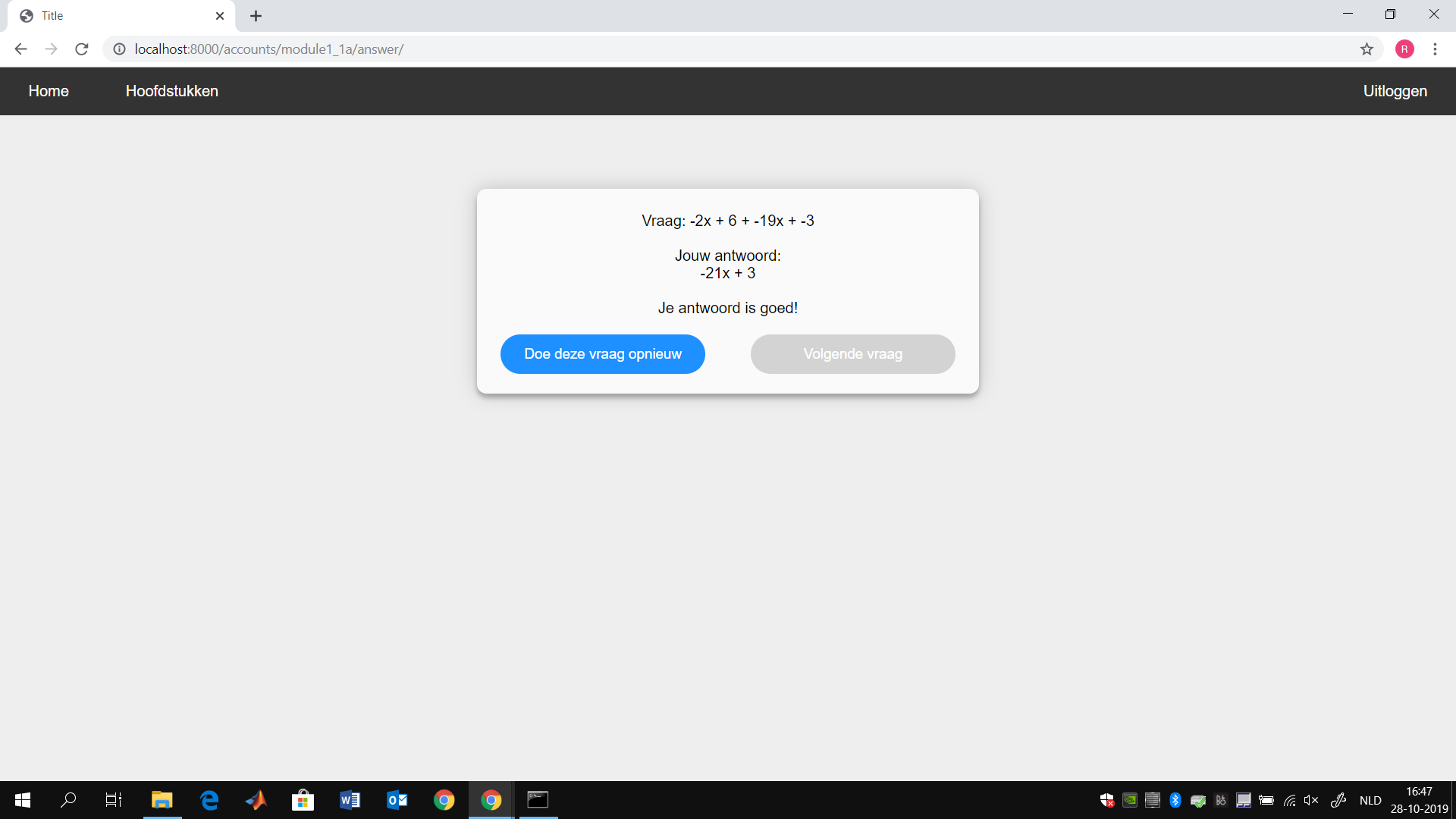

Question Answer

This is the page to which the user is redirected after answering a question. For the sake of explanation, we assume that the user has answered the question wrongly, but has already answered the same question correctly often enough to be allowed to advance to the next question. Under this assumption we see the full functionality of this page.

Functionalities:

Question answer and your answer text: The page displays the question, your answer and the correct answer, so that you can see where you went wrong. When you have answered the question correctly, only your answer is shown.

Bekijk een hint button: When this button is pressed, the page explains the mistake you made. This can be used to answer the question correctly next time. If you answered the question correctly, this button is not displayed.

Multiple same mistake text: The page displays a warning if you have made the same mistake multiple times. It asks you to have the teacher explain this to you, since you clearly did not understand it. This only shows up when you have made the same mistake multiple times.

Doe deze vraag opnieuw button: This button asks you the same kind of question again, meaning the question is asked again with different variables.

Volgende vraag button: This button redirects you to the next question. You can only press this button once you have reached a certain threshold (this will be discussed in the NextQuestion method).

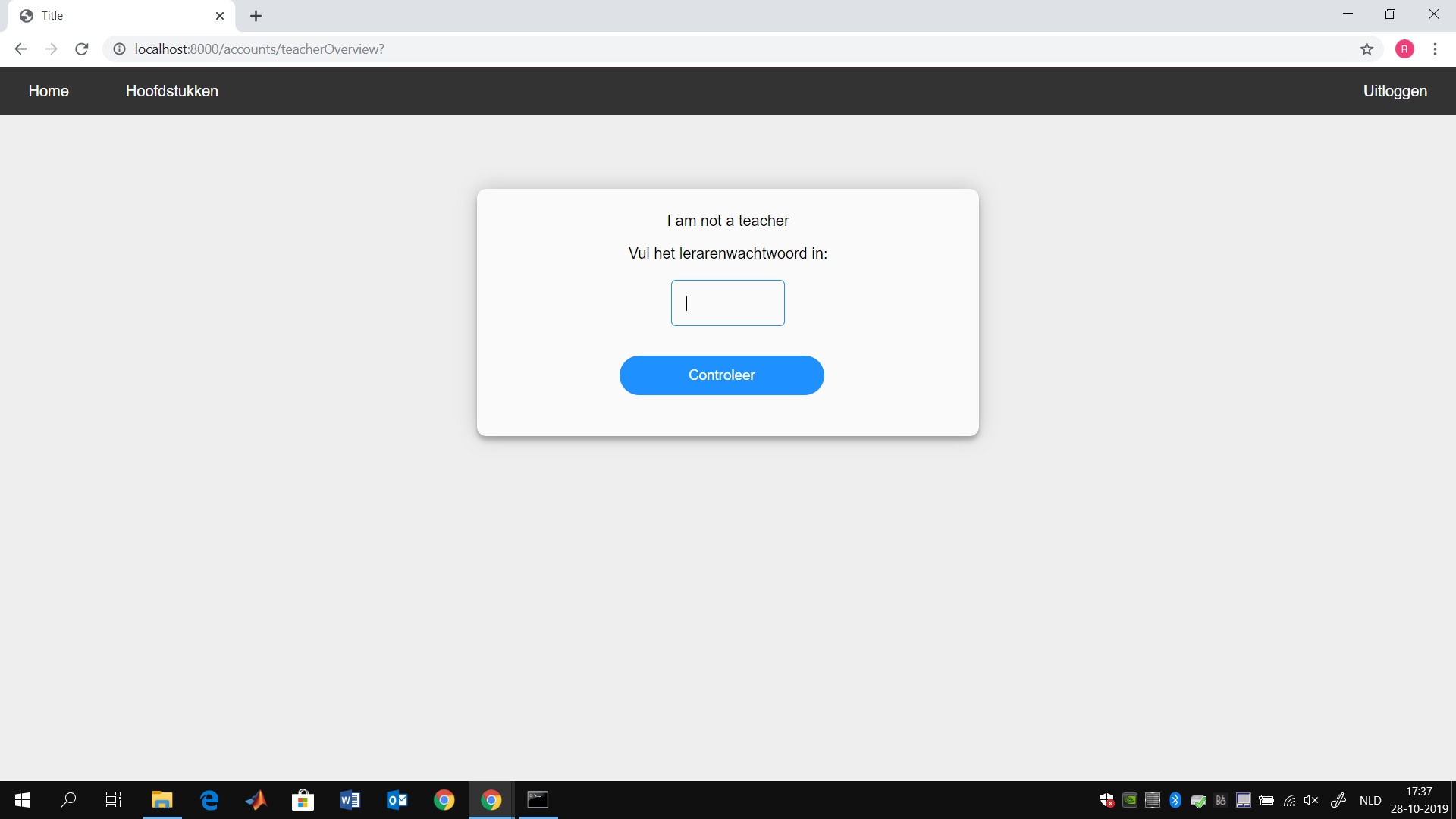

Teacher (not confirmed)

The teacher page is a page where users will find themselves when they press the teacher button when they are not a teacher. Once they are on this page the only thing they can do is fill in the teacher password. If they have done this they will be made a teacher and can access all the teacher possibilities.

Functionalities:

Password field: This is where the user fills in the teacher password.

Controleer button: This button checks whether the filled-in password is correct. If the password is incorrect, the page is reloaded and no change is made. If the password is correct, the user is redirected to the confirmed teacher page and is made a teacher in the database.

Confirmed teacher

The confirmed teacher page is an overview of all the students for teachers. For each student it displays the total number of correct answers, the total number of wrong answers and the ratio between the two. This way teachers can see at a glance which students are doing well and which need some attention.

Functionalities:

Nickname Column: The nickname of the users, this is the username field of the login page.

Slimheid(%) column: The percentage of questions that were answered correctly.

totaal correct column: The number of questions that the user has answered correctly.

total fout column: The number of questions that the user has answered wrongly.
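The columns of this overview can be computed from the module_user data roughly as follows. This is a sketch under assumptions: the function name and the input format (one tuple of username, amountCorrect and amountWrong per module_user entry) are hypothetical, and showing 0% for a student who has not answered any question yet is a choice made for this example.

```python
from collections import defaultdict


def student_overview(module_user_rows):
    """Sum correct and wrong answers per student over all modules.

    Each input row is (username, amountCorrect, amountWrong).
    Each output row is (nickname, percentage correct, total correct, total wrong).
    """
    totals = defaultdict(lambda: [0, 0])
    for username, amount_correct, amount_wrong in module_user_rows:
        totals[username][0] += amount_correct
        totals[username][1] += amount_wrong

    overview = []
    for username, (correct, wrong) in sorted(totals.items()):
        answered = correct + wrong
        # Avoid dividing by zero for students without any answers yet.
        percentage = 100.0 * correct / answered if answered else 0.0
        overview.append((username, round(percentage, 1), correct, wrong))
    return overview
```

For example, a student with 3 correct and 1 wrong answers in one module and 1 correct and 3 wrong in another ends up with 4 correct, 4 wrong and a score of 50%.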

Methods

In this part of the wiki most of the methods used in the application are discussed. For each method we describe its general use, its input and its output. The output of a method that has no direct output is denoted as <void>. Many methods take a Request as input. Instead of listing the request itself, we list the variables used inside the request and note that their values are taken from the request.

InsertNewUser

This method is called when a user has filled in the sign up form correctly. After this method has finished, all the module_user entries for this user have been added to the database.

Implementation:

The method first establishes a database connection.

For each module in the Module table

Do call the createUser method.

Input:

User_id: The ID of the user for which an account was made.

Output: <void>

createUser

This method is called in the InsertNewUser method to insert an entry for a user and module combination into the module_user table. After this method has finished, the specific module_user entry has been added to the database.

Implementation:

If it is the first module

Then insert the module and user combination with the currentModule variable set to 1.

Else

Insert the module and user combination in the normal way.

Input:

conn: A connection to the database.

user_module: An object with the id of the user as its first element and the id of the module as its second element.

Output: <void>
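Taken together, InsertNewUser and createUser can be sketched with sqlite3 as shown here. This is an illustration under assumptions, not the code of the application: the functions are written as insert_new_user and create_user in Python naming style, the db_path parameter and its default are hypothetical, the "first module" is taken to be the module with the lowest id, and inserting "in the normal way" is taken to mean currentModule set to 0.

```python
import sqlite3


def create_user(conn, user_module):
    """Insert one module_user entry for a (user id, module id) pair."""
    user_id, module_id = user_module
    # Assumption: the first module is the one with the lowest id.
    first_module_id = conn.execute("SELECT MIN(id) FROM module").fetchone()[0]
    current = 1 if module_id == first_module_id else 0
    conn.execute(
        "INSERT INTO module_user (currentModule, module_id, user_id) VALUES (?, ?, ?)",
        (current, module_id, user_id),
    )


def insert_new_user(user_id, db_path="db.sqlite3"):
    """Create a module_user entry for every module in the module table."""
    conn = sqlite3.connect(db_path)  # db_path is a hypothetical parameter
    try:
        module_ids = [row[0] for row in conn.execute("SELECT id FROM module ORDER BY id")]
        for module_id in module_ids:
            create_user(conn, (user_id, module_id))
        conn.commit()
    finally:
        conn.close()
```

After insert_new_user has run for a new user, that user has one module_user entry per module, and only the entry for the first module has currentModule set to 1.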

ModuleCurrent

The ModuleCurrent method checks in the database what the current module is and redirects the user to the page of the current module.

Implementation: