PRE2018 3 Group9: Difference between revisions


===YOLO demo===

To demonstrate the ATR capabilities of a neural network, we built a demo. It shows a neural network called YOLO (You Only Look Once) trained to detect humans. Based on the location of these humans in the video frame, a Python script outputs control signals to an Arduino, which controls a two-axis gimbal on which the camera is mounted. This way the system can track a person in its camera frame. Because this neural net is computationally heavy and we could not run it on a GPU, performance is quite limited: in controlled environments, the demo setup ran at roughly 3 FPS. As a result, a person could easily move out of the camera frame, which caused the system to “lose” them.
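As a rough illustration of the step between detection and control, the sketch below picks the most confident person detection from a list of bounding boxes and returns its center in pixels. The `Detection` layout, the `pick_person` helper, the `"person"` label and the confidence threshold are assumptions made for this example; they are not taken from the demo code.

```python
from typing import NamedTuple, Optional, Sequence, Tuple


class Detection(NamedTuple):
    """One detector output (hypothetical layout, not the demo's actual format)."""
    label: str         # class name, e.g. "person"
    confidence: float  # detector score in [0, 1]
    x: float           # left edge of the bounding box, in pixels
    y: float           # top edge of the bounding box, in pixels
    w: float           # box width, in pixels
    h: float           # box height, in pixels


def pick_person(detections: Sequence[Detection],
                min_confidence: float = 0.5) -> Optional[Tuple[float, float]]:
    """Return the box center of the most confident person, or None if there is none."""
    people = [d for d in detections
              if d.label == "person" and d.confidence >= min_confidence]
    if not people:
        return None  # target lost: the caller decides what the gimbal should do
    best = max(people, key=lambda d: d.confidence)
    return (best.x + best.w / 2, best.y + best.h / 2)
```

Returning `None` when no person is found makes the "lost target" case explicit, which matters at low frame rates where the person can leave the frame between two detections.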

Video of the YOLO demo:

To show a faster tracker as well, we built a demo that tracks so-called Aruco markers. These markers are designed for robotics and are easy for image recognition algorithms to detect, so the demo can run at a higher frame rate. A new Python script determines the error as the distance from the center of the marker to the center of the frame and uses this to generate a control output for the Arduino, which in turn moves the gimbal. This way, the tracker tries to keep the target at the center of the frame.
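The error-to-control step described above can be sketched as a simple proportional controller. This is a minimal sketch, not the demo's actual script: the function name `gimbal_step`, the gain, the deadband and the step limit are all assumed values, and sending the result to the Arduino is left out.

```python
from typing import Tuple


def clamp(value: float, low: float, high: float) -> float:
    """Limit value to the closed interval [low, high]."""
    return max(low, min(high, value))


def gimbal_step(target_xy: Tuple[float, float],
                frame_size: Tuple[int, int],
                gain_deg: float = 10.0,
                deadband: float = 0.05,
                max_step_deg: float = 5.0) -> Tuple[float, float]:
    """Turn a target position in pixels into pan/tilt corrections in degrees.

    All tuning values are assumptions for illustration only.
    """
    cx, cy = frame_size[0] / 2, frame_size[1] / 2
    # Normalised error in [-1, 1]: 0 means the target is at the frame center.
    err_x = (target_xy[0] - cx) / cx
    err_y = (target_xy[1] - cy) / cy

    def axis(err: float) -> float:
        if abs(err) < deadband:
            return 0.0  # close enough to the center: do not move, avoids jitter
        return clamp(gain_deg * err, -max_step_deg, max_step_deg)

    return axis(err_x), axis(err_y)
```

For a 640x480 frame, a marker at the center yields `(0.0, 0.0)`, while a marker in a far corner is limited to the maximum step on both axes. The sign convention (which direction counts as positive pan or tilt) depends on how the gimbal is mounted and is not specified here.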

Video of the Aruco demo:

See [https://github.com/martinusje/USE_robots_everywhere] for all code.

===Confirmation of acquired target===

Revision as of 10:29, 2 April 2019

Preface

Group Members

| Name | Study | Student ID |

|---|---|---|

| Claudiu Ion | Software Science | 1035445 |

| Endi Selmanaj | Electrical Engineering | 1283642 |

| Martijn Verhoeven | Electrical Engineering | 1233597 |

| Leo van der Zalm | Mechanical Engineering | 1232931 |

Initial Concepts

After discussing various topics we came up with this final list of projects that seemed interesting to us.

- Drone interception

- A tunnel digging robot

- A fire fighting drone for finding people

- Delivery UAV (blood in Africa, parcels, medicine, etc.)

- Voice-controlled robot (a general technique that has many applications)

- A spider robot that can be used to get to hard to reach places

Chosen Project: Drone Interception

Introduction

According to the most recent industry forecast studies, the unmanned aerial systems (UAS) market is expected to reach 4.7 million units by 2020.[1] Nevertheless, regulations and technical challenges need to be addressed before such unmanned aircraft become as common and accepted by the public as their manned counterparts. The impact of an air collision between a UAS and a manned aircraft is a concern to both the public and government officials at all levels. All around the world, the primary goal of enforcing rules for UAS operations in national airspace is to assure an appropriate level of safety. Therefore, research is needed to determine airborne hazard impact thresholds for collisions between unmanned and manned aircraft, or even collisions with people on the ground, as this study already shows.[2]

With the recent development of small and cheap electronics, unmanned aerial vehicles (UAVs) are becoming more affordable for the public, and the number of drones in the sky is increasing. This has started to pose a number of potential risks which may jeopardize not only our daily lives but also the security of various high-value assets such as airports, stadiums or similar protected airspaces. The latest incident involving a drone which invaded the airspace of an airport took place in December 2018, when Gatwick airport had to be closed and hundreds of flights were cancelled following reports of drone sightings close to the runway. The incident caused major disruption and affected about 140,000 passengers and over 1,000 flights. This was the biggest disruption since ash from an Icelandic volcano shut down all traffic across Europe in 2010.[3]

Tests performed at the University of Dayton Research Institute show that even a small drone can cause major damage to an airliner’s wing if they meet at more than 300 kilometers per hour.[4]

The Alliance for System Safety of UAS through Research Excellence (ASSURE), which is the FAA's Center of Excellence for UAS Research, also conducted a study[5] on the collision severity of unmanned aerial systems and evaluated the impact that these might have on passenger airplanes. The study shows how much damage small drones or even radio-controlled airplanes can inflict on large airplanes, which poses a serious safety threat to planes worldwide. As one can imagine, airplanes are most vulnerable to these types of collisions when taking off or landing, so protecting the airspace of an airport is of utmost importance.

This project will mostly focus on the importance of interceptor drones for an airport’s security system and the impact of rogue drones on such a system. However, a discussion of drone terrorism, privacy violation and drone spying will also be given, and the impact that these drones can have on users, society and enterprises will be analyzed. As this topic has become widely debated worldwide over the past years, we shall provide an overview of current regulations concerning drones and the restrictions that apply when flying them in certain airspaces. With the research that is going to be carried out for this project, together with the various deliverables that will be produced, we hope to shed some light on the importance of having systems such as interceptor drones in place for protecting the airspace of the future.

Problem Statement

The problem statement is: How can autonomous unmanned aerial systems be used to quickly intercept and stop unmanned aerial vehicles in airborne situations without endangering people or other goods?

A UAV is defined as an unmanned aerial vehicle and differs from an unmanned aerial system (UAS) in one major way: UAV refers only to the aircraft itself, whereas UAS also includes the ground control and communications units.[6]

Objectives

- Determine the best UAS that can intercept another UAV in airborne situations

- Improve the chosen concept

- Create a design for the improved concept, including software and hardware

- Build a prototype

- Make an evaluation based on the prototype

Project Organisation

Approach

The aim of our project is to deliver a prototype and a model of how an interceptor drone can be implemented. The approach to reach this goal consists of multiple steps.

Firstly, we will study research papers which describe the state of the art (SotA) of such drones and their components. This allows our group to get a grasp of the current technology of such a system and introduces us to new developments in this field. It also creates a foundation for the project on which we can build. The state of the art also gives valuable insight into possible solutions and whether their implementation is feasible given the knowledge we possess and the limited time. The SotA research will be carried out by studying the literature, recent reports from research institutes and the media, and by analyzing patents which are strongly connected to our project.

Furthermore, we will analyze the problem from a USE (user, society, enterprise) perspective. An important source for this analysis is the state-of-the-art research, in which the impact of these drone systems on different stakeholders is discussed. The USE aspects are of utmost importance for our project, as every engineer should strive to develop new technologies that help not only the users but also society as a whole, and should avoid possible negative consequences of the system they develop. This analysis will lead to a list of requirements for our solutions. Moreover, we will discuss the impact of these solutions on the categories listed above.

Finally, we hope to develop a prototype for an interceptor drone. We do not plan on making a physical prototype, as this is not feasible within the time available for the project. Instead, we plan on creating a 3D model of the drone and simulating it, showcasing how it would function in real life. To complement this, we also plan on building an Android application which serves as a dashboard for the drone, tracking parameters such as position and overall status. To realize this, we will research a list of hardware components that are feasible for our project and lead to a cost-effective product. For the software, a UML diagram will be created first to represent the system which will be implemented later on. Together with the wiki page, these will be our final deliverables for the project.

Below we summarize the main steps in our approach of the project.

- Research our chosen project through SotA literature analysis

- Analyze the USE aspects and determine the requirements of our system

- Consider multiple design strategies

- Choose the hardware and create the UML diagram

- Work on the prototype (3D model and mobile application)

- Evaluate the prototype

Milestones

Within this project there are three major milestones:

- After week 2, the best UAS has been chosen, options for improving this system have been identified and there is a clear picture of the user. This means that it is known who the users are and what their requirements are.

- After week 5, the software and hardware have been designed for the improved system, and a prototype has been made.

- After week 8, the wiki page is finished and updated with the results from testing the prototype. Future developments have also been looked into and added to the wiki page.

Deliverables

- This wiki page, which contains all of our research and findings

- A presentation, which is a summary of what was done and what our most important results are

- A prototype

Planning

The plan for the project is given in the form of a table in which every team member has a specific task for each week. There are also group tasks which every team member should work on. The plan also includes a number of milestones and deliverables for the project.

| Name | Week #1 | Week #2 | Week #3 | Week #4 | Week #5 | Week #6 | Week #7 | Week #8 |

|---|---|---|---|---|---|---|---|---|

| | Research | Requirements and USE Analysis | Hardware Design | Software Design | Prototype / Concept | Proof Reading | Future Developments | Conclusions |

| Claudiu Ion | Make a draft planning | Add requirements for drone on wiki | Regulations and Present Situation | Write pseudocode for interceptor drone | Mobile app development | Proof read the wiki page and correct mistakes | Review wiki page | Make a final presentation |

| Summarize project ideas | Improve introduction | Wireframing the Dashboard App | Build UML diagram for software architecture | Review wiki page | ||||

| Write wiki introduction | Check approach | Start design for dashboard mobile app | Mobile app development | |||||

| Find 5 research papers | ||||||||

| Endi Selmanaj | Research 6 or more papers | Elaborate on the SotA | Research the hardware components | Work on the drone model | Work on 3D drone model / prototype | Reviewing the Wiki and fixing spelling errors | Finalise the Wiki Page | Work on final presentation |

| Write about the USE aspects | Review the whole Wiki page | Purchase or request the needed hardware | Work on the code needed for the electronics | Finish the simulation | Work on the layout of the Wiki | Check on the realisation of all objectives | ||

| Improve approach | Draw the schematics of electronics used | Start with the simulation | Expand on the material on the Wiki more | |||||

| Check introduction and requirements | ||||||||

| Martijn Verhoeven | Find 6 or more research papers | Update SotA | Research hardware options | Start on 3D model of drone | Work on 3D model | Review wiki page | Finalise wiki page | |

| Fill in draft planning | Check USE | Start thinking about electronics layout | Make a bill of costs and list of parts | Deliver 3D model of drone | ||||

| Write about objectives | ||||||||

| Leo van der Zalm | Find 6 or more research papers | Update milestones and deliverables | Start on 3D model of drone | Work on 3D model | Review wiki page | Continue tasks from week 7 | Finish all loose ends | |

| Search information about subject | Check SotA | Research hardware components | Make a bill of costs and list of parts | Put in new developments from week 3, 4 and 5 | Write future developments | Write conclusion/results part | ||

| Write problem statements | USE analysis including references | Start looking at the final presentation | ||||||

| Write objectives | ||||||||

| Group Work | Introduction | Expand on the requirements | Research hardware components | Interface design for the mobile app | Start working on the visualisation | |||

| Brainstorming ideas | Expand on the state of the art | Research systems for stopping drones | Start working on drone prototype | |||||

| Find papers (5 per member) | Society and enterprise needs | Start working on 3d drone model | ||||||

| User needs and user impacts | Work on UML activity diagram | Start working on the simulation | ||||||

| Define the USE aspects | Improve week 1 topics | |||||||

| Milestones | Decide on research topic | Add requirements to wiki page | USE analysis finished | Provide a bill of costs and list of parts | Mobile app prototype finished | Visualisation finished | ||

| Add research papers to wiki | Add state of the art to wiki page | UML activity diagram finished | 3D drone model finished | |||||

| Write introduction for wiki | Research into building a drone | |||||||

| Finish planning | Research into the costs involved | |||||||

| Deliverables | Mobile app prototype | Final presentation | ||||||

| 3D drone model | Wiki page |

USE

In this section we analyse the different aspects involving users, society and enterprises in the context of interceptor drones. We start by identifying key stakeholders for each of the categories and then give a more in-depth analysis. After identifying all these stakeholders, we state which stakeholders our project will mainly focus on. Since the topic of interceptor drones is quite vast, depending on the angle from which we tackle the problem, focusing on a specific group of stakeholders will help us produce a better prototype and conduct better research for that group. Moreover, each of these stakeholders has different concerns, which are elaborated separately.

Users

When analysing the main users of an interceptor drone, we quickly see that airports are the most interested in having such a technology. This comes as no surprise when we look at the number of incidents involving rogue drones around airports in the last couple of years. Because the airspace within and around airports is heavily restricted and regulated, unauthorized drones are a real danger not only to the operation of airports and airlines, but also to the safety and comfort of passengers. As previous incidents have shown, intruder drones which violate airport airspace lead to airport shutdowns, which result in long delays and huge losses.

Another key group of users is represented by governmental agencies and civil infrastructure operators that want to protect high-value assets such as embassies. Intruder drones flying above such a place could lead to serious problems, such as diplomatic disputes, or even damage the relations between the two countries involved. Such a drone could therefore be used with malicious intent to deliberately provoke tense relations. Another example worth mentioning is the match between Serbia and Albania in 2014, when a drone carrying an Albanian nationalist banner flew over the pitch, which led to a pitch invasion by Serbian fans and a full riot. This incident led to retaliations from both Serbians and Albanians, which resulted in significant material damage and further damaged the fragile relations between the two countries [7].

We can also imagine such an interceptor drone being used by the military or other government branches for fending off terrorist attacks. Being able to deploy such a countermeasure (on a battlefield) would not only improve the safety of people but would also help deter terrorists from carrying out such acts of violence in the first place.

Lastly, a smaller group of users that is still worth taking into account consists of individuals who are likely to be targeted by drones and therefore have their privacy violated by such systems. This could be the case with celebrities or other VIPs who are targeted by the media to get more information about their private lives. To summarize, from a user perspective we think that the research which will go into this project can benefit airport security systems the most. One could say that we are taking a utilitarian approach to solving this problem, as implementing a security system for airports would produce the greatest good for the greatest number of people.

Society

When thinking about how society could benefit from a system that detects and stops intruder drones, the best example to consider is again the airport scenario. Whenever an unauthorized drone enters the restricted airspace of an airport, this causes major concern for the safety of the passengers. Moreover, it causes huge delays and creates big problems for the operations of the airport and of the airlines, which lose a lot of money. Apart from this, rogue drones around airports cause logistical nightmares for airports and airlines alike: they not only create bottlenecks in the passenger flow through the airport, but airlines might also need to divert passengers to other routes and planes. Cargo planes will also suffer delays, and this could lead to bigger problems down the supply chain, such as medicine not reaching patients in time. All these problems are a great concern for society as a whole.

Another big issue for society which interceptor drones hope to solve is safely stopping a rogue drone from attacking large crowds of people, for example at events. To provide the necessary protection in these situations, it is crucial that the interceptor drone acts very quickly and stops the intruder in a safe and controlled manner, without putting the lives of other people in danger. Again, when we think in terms of providing the greatest good for the greatest number of people, the airport security example stands out, which is why it is the main focus of the research in this project.

Enterprise

When analysing the impact interceptor drones will have on enterprises in the context of airport security, we identify two main players: airport security and the airlines operating from that airport. The airlines can be further divided into two categories: those which transport passengers and those which transport cargo (some airlines do both).

From the airport’s perspective, a drone sighting near the airport requires a complete shutdown of all operations for at least 30 minutes, as stated by current regulations [8]. As long as the airport is closed, it loses money and faces huge operational problems, considering the number of people left stranded all over the airport waiting for the next flight out. Furthermore, when the airport reopens, there will be even more problems caused by congestion, since all planes will want to leave at the same time, which is obviously not possible. This can lead to incidents on the tarmac involving planes, due to improper handling or lack of space in an airport which is potentially already overfilled with planes.

From the airline’s perspective, whether we are talking about passenger transport or cargo, drones violating an airport’s airspace translate directly into huge losses, long delays and unhappy passengers. Not only will the airline need to compensate passengers if the flight is canceled, but it may also need to cover accommodation expenses in some cases. For cargo companies, a delay in delivering packages can literally mean life or death when it concerns medicine that needs to reach patients. Moreover, disruptions in the transport of goods can greatly impact the supply chain of numerous other businesses and enterprises, so these types of events (rogue drones near airports) could have even bigger ramifications.

Finally, having analyzed the USE implications of intruder drones in the context of airport security, we will now focus on researching different types of systems that can be deployed in order to not only detect but also stop and catch such intruders as quickly as possible. This will help mitigate both the risks and the various negative implications that such events have on the USE stakeholders mentioned before.

Experts

So far we have explained who our main users are, but not how our idea can actually be used. To find out how our idea can be implemented, we contacted our main users. We contacted several airports: Schiphol, Rotterdam The Hague Airport, Eindhoven Airport, Groningen Eelde Airport and Maastricht Aachen Airport. Only Eindhoven Airport responded. We asked the airports the following questions:

- Are you aware of the problem?

- How are you taking care of this problem at the moment?

- Who is responsible?

- How does this process go? (By this we mean the process from when a rogue drone is detected until it is taken down.)

- Do you see room for innovation or are you satisfied with the current process?

Their response made clear that they are very aware of the problem, but they told us that it is not their responsibility. The terrain of the airport is the property of the Dutch army and is also its responsibility. Because this problem is new, the innovation centre of the army deals with it.

This answer gave us some information, but we still did not know anything about the process. So we e-mailed the innovation centre of the army. We also contacted Delft Dynamics, because they deal with this problem as well and probably know more about it. Neither the innovation centre of the army nor Delft Dynamics responded.

Requirements

In order to better understand the needs and design for an interceptor drone, a list of requirements is necessary. There are clearly different ways in which a rogue UAV can be detected, intercepted, tracked and stopped. However, the requirements for the interceptor drone need to be analyzed carefully, as any design for such a system must ensure the safety of bystanders and minimize all possible risks involved in taking down the rogue UAV. Equally important are the constraints for the interceptor drone and, finally, the preferences we have for the system. To prioritize the specific requirements for the project, the MoSCoW model was used. Each requirement has a specific level of priority, which stands for must have (M), should have (S), could have (C) or would have (W). We will now give the RPC (requirements, preferences, constraints) table for the autonomous interceptor drone and later provide some more details about each specific requirement.

| ID | Requirement | Preference | Constraint | Category | Priority |
|---|---|---|---|---|---|
| R1 | Detect rogue drone | LIDAR system for detecting intruders | Does NOT require human action | Software | M |
| R2 | Autonomous flight | Fully autonomous drone | Does NOT require human action | Software & Control | M |
| R3 | Object recognition | Accuracy of 100% | Uses AI bounding box algorithm | Software | M |
| R4 | Detect rogue drone's flying direction | Accuracy of 100% | | Software & Hardware | S |
| R5 | Detect rogue drone's velocity | Accuracy of 100% | | Software & Hardware | S |
| R6 | Track target | Tracking target for at least 10 minutes | Allows for operator to correct drone | Software & Hardware | M |
| R7 | Velocity of 40 km/h | Drone is as fast as possible | | Hardware | S |
| R8 | Flight time of 10 minutes | Flight time is maximized | | Hardware | M |
| R9 | FPV live feed (with 60 FPS) | Drone records and transmits flight video | Records all flight video footage | Hardware | W |
| R10 | Camera of 1080p | Flight video is as clear as possible | | Hardware | C |
| R11 | Stop rogue drone | Is always successful | Can NOT be violent or endanger others | Hardware | M |
| R12 | Stable connection to operation base | Drone is always connected to base | If connection is lost drone buffers data | Software | S |
| R13 | Sensor monitoring | Drone sends sensor data to base and app | All sensor information is sent to base | Software | S |
| R14 | Drone autonomously returns home | | | Software | C |
| R15 | Auto take off | | | Control | M |
| R16 | Auto landing | | | Control | M |
| R17 | Auto leveling (in flight) | Drone is able to fly in heavy weather | Does NOT require human action | Control | M |
| R18 | Minimal weight | Drone uses carbon fiber materials | | Hardware | S |
| R19 | Cargo capacity of 4 kg | Drone is able to carry two catching devices | | Hardware | S |
| R20 | Portability | Drone is portable and easy to transport | Does NOT hinder drone's robustness | Hardware | C |
| R21 | Fast deployment | Drone can be deployed in under 5 minutes | Does NOT hinder drone's robustness | Hardware | C |
| R22 | Minimal costs | Drone cost is less than 8000 euros | | Costs | S |
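To make the MoSCoW prioritisation easier to work with, the table can be held in a small data structure and sorted by priority. The sketch below does this for four of the rows; the `Requirement` class, `MOSCOW_ORDER` and `by_priority` are illustrative names and not part of the project's code.

```python
from dataclasses import dataclass
from typing import List

# Rank of each MoSCoW letter: lower means more important.
MOSCOW_ORDER = {"M": 0, "S": 1, "C": 2, "W": 3}


@dataclass(frozen=True)
class Requirement:
    rid: str       # identifier from the table, e.g. "R11"
    text: str      # requirement column
    category: str  # category column
    priority: str  # one of "M", "S", "C", "W"


# A few rows copied from the table above.
REQUIREMENTS = [
    Requirement("R9", "FPV live feed (with 60 FPS)", "Hardware", "W"),
    Requirement("R10", "Camera of 1080p", "Hardware", "C"),
    Requirement("R11", "Stop rogue drone", "Hardware", "M"),
    Requirement("R12", "Stable connection to operation base", "Software", "S"),
]


def by_priority(reqs: List[Requirement]) -> List[Requirement]:
    """Sort must-haves first; ties keep their table order (sorted is stable)."""
    return sorted(reqs, key=lambda r: MOSCOW_ORDER[r.priority])


for req in by_priority(REQUIREMENTS):
    print(req.priority, req.rid, req.text)
```

With these four rows the loop lists R11 first, then R12, R10 and R9.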

Justification Requirements

Starting with R1, our drone must be able to detect a rogue drone in order to take it down. Our drone also has to be able to do this autonomously. We chose this because it is faster and more reliable: human decisions can be skipped, which saves time, and human imperfections are removed from the process, so flying and shooting work better. A preference is that the detection is done by a LIDAR system. LIDAR (LIght Detection And Ranging, or Laser Imaging Detection And Ranging) gives the drone enough information to track the rogue drone further. As the constraint of R1 shows, we want the drone to be autonomous. This is specified again in R2. The difference between autonomous and fully autonomous lies in the detection part. If a rogue drone is detected by the system, our preference is that an interceptor drone autonomously leaves its base and starts tracking down the rogue drone. We did not make this a requirement because we think this is the choice of the user: it is possible that the user wants human intervention at some point.

R3 to R5 are about recognition and following. R3 states that our drone must be able to recognize different objects. R4 and R5 have a lower priority but are still important, because detecting flying direction and speed increases the chance of catching the drone: the interceptor drone can follow the rogue drone better, and better following leads to more accurate shots from the net gun. As with R3, we prefer that R4 and R5 are achieved with 100 percent accuracy. The drone also has to be able to track the rogue drone in order to catch it. If the rogue drone escapes, we cannot find out why it was there and cannot prevent it from happening again. This is why R6 gets a high priority. The constraint on R6 exists because some kind of human interaction is needed: an operator has to be able to, for example, cancel the mission if it endangers human lives. R7 is about the minimum velocity. If our drone cannot keep up with the rogue drone, the rogue drone will escape. We prefer that our drone is as fast as possible, but we have to keep in mind that the battery must not run out too fast; otherwise R8 will be violated. The drone must have a flight time of ten minutes, because otherwise it does not have enough time to detect, follow and take down the rogue drone, especially at big airports, where the drone has to cover a lot of ground.
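To get a feel for what R7 and R8 imply together, a back-of-the-envelope estimate helps. Assuming, optimistically, that the drone flies at the R7 speed for the whole R8 flight time, with no allowance for take-off, hovering, tracking manoeuvres or wind, the distance covered is:

```latex
d = v \cdot t = 40\ \tfrac{\text{km}}{\text{h}} \cdot \tfrac{10}{60}\ \text{h} \approx 6.7\ \text{km}
```

If the drone also has to fly back to its base on the same battery, the reachable radius is at most half of that, roughly 3.3 km. These figures are only an upper bound under the stated assumptions, not measured performance.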

R9 and R10 are about sight and live feed. The interceptor drone must have a clear view in order to detect the rogue drone. 60 FPS in combination with 1080p is enough to detect and distinguish objects, but it is preferred that the view of the drone is as clear as possible. A constraint is that all flight video footage is recorded, so that it can be watched later to make adjustments to the drone if needed. R11 is the most important RPC, because it is our main goal. The requirement is to stop the rogue drone, and the preference is that this is always successful, by which we mean that it succeeds at the first shot. No matter what, we are going to stop the rogue drone, but the first shot may miss and the drone may have to go back to base to get ammunition for the net gun. The constraint of R11 ties in with the constraint of R6: the drone may never harm people or make the situation more dangerous. That is why human intervention has to be possible at some point.

R12 is about the connection to the operation base. A stable connection is required because the operation base needs to know what is happening. The drone can act by itself because it is autonomous, but human intervention may be needed, and this is not possible if the operation base is not connected to the drone. We prefer that the drone is always connected to the base. When the connection is lost, however, the drone has to save the data it gathers, so that we can see afterwards what happened in that time. This is why R13 has the constraint that all sensor information is sent to the base. With this information we can adjust the drone for better performance. This information also helps us to understand incidents better, to prepare for upcoming incidents and to learn how we can solve them even better. We also prefer that the information is sent to the app, so that it is always available on a phone. R14, R15 and R16 cover returning to the station, take-off and landing. These tasks are best performed autonomously, because that is more precise and faster than manual control.
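The buffering behaviour required by R12 and R13 can be sketched as a small store-and-forward queue. This is a minimal sketch under assumptions: the `TelemetryBuffer` class, its `send` callback (which returns `True` when the base acknowledges a sample) and the buffer size are hypothetical and not part of the project's design.

```python
from collections import deque
from typing import Any, Callable


class TelemetryBuffer:
    """Keep sensor samples while the link is down and resend them in order."""

    def __init__(self, send: Callable[[Any], bool], max_buffered: int = 1000):
        self._send = send  # hypothetical transmit function: True means delivered
        # A bounded deque drops the oldest sample when full, so memory stays limited.
        self._pending = deque(maxlen=max_buffered)

    @property
    def pending(self) -> int:
        """Number of samples still waiting to be delivered."""
        return len(self._pending)

    def publish(self, sample: Any) -> None:
        """Queue a new sample and try to deliver everything that is waiting."""
        self._pending.append(sample)
        self.flush()

    def flush(self) -> int:
        """Send queued samples oldest first; stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break  # link is down: keep the sample for the next attempt
            self._pending.popleft()
            sent += 1
        return sent
```

Sending oldest first preserves the order of events, which is what makes it possible to reconstruct afterwards what happened while the connection was lost. Dropping the oldest samples when the buffer is full is one possible policy; writing them to on-board storage instead would avoid losing data.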

Our drone also needs to be able to withstand different weather types. Auto levelling in flight is required and performed autonomously (R17), because the drone needs to be stable even in changing weather conditions, such as changes in wind speed. This is best done by sensors and computing power rather than by human control. We prefer that our drone can also fly in heavy weather conditions, so that it can always be used. Looking at R7 and R8, we want to reach maximum speed and maximum flight time. For this, our drone must have minimal weight (R18). To realize this we prefer using carbon fibre materials, because they are lightweight and strong. Because we need to carry the rogue drone, R19 requires a cargo capacity of 4 kilograms. The catching device and the rogue drone together will not weigh more than this. We prefer to have two catching devices, because if we miss the shot (since we do not have 100 percent accuracy; R3, R4, R5), we can shoot another net and do not have to return to base. In case of emergency, it should be possible to transport the drone. We prefer that this is easy, but a constraint is that it does not hinder the drone’s robustness. Portability and fast deployment are less important abilities, so the robustness of the drone should not suffer from adjustments made to improve them: less robustness means lower performance, which means less chance of catching the rogue drone. Lastly, R22 states that the drone should be delivered at minimal cost, because we do not aim to make a profit but to solve a societal problem. We set a preferred price of about 8000 euros, but this is only a rough estimate. The real price can be found in the section Bill of Costs.

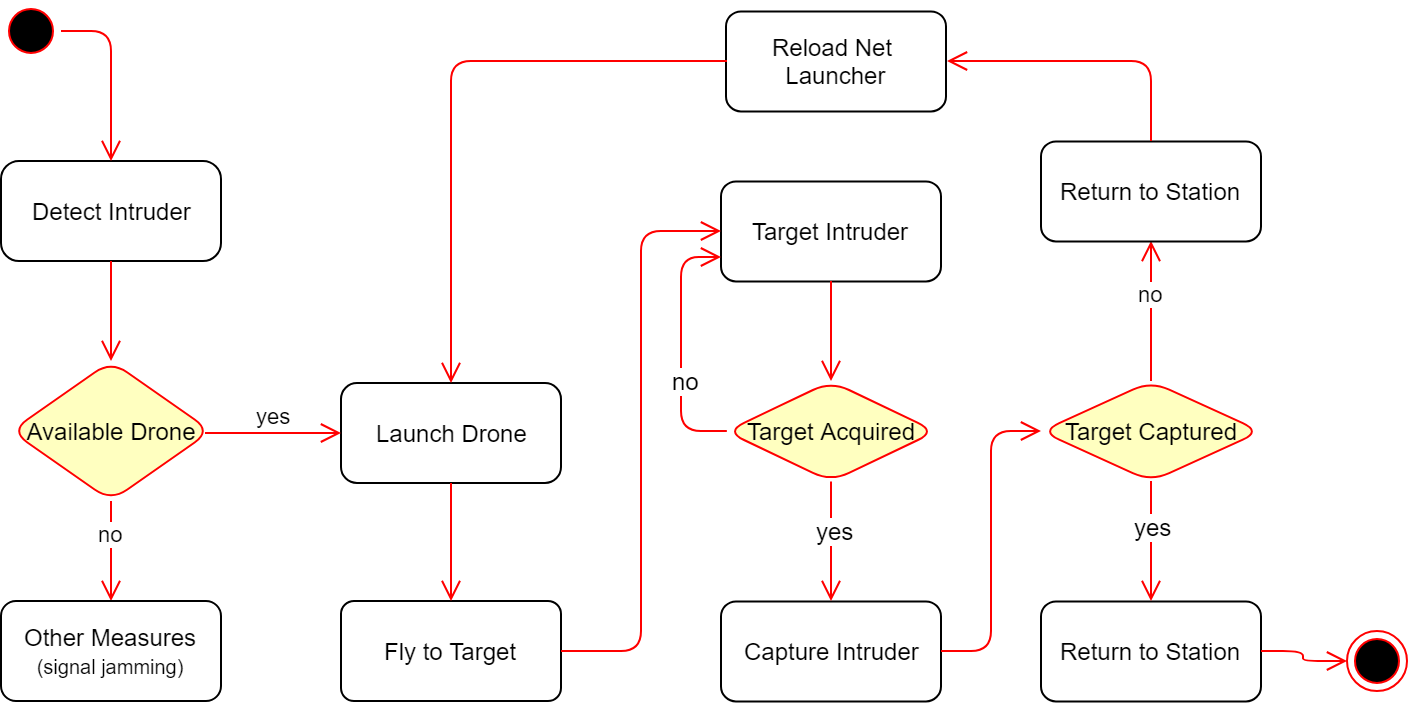

UML Activity Diagram

Activity diagrams, along with use case and state machine diagrams, describe what must happen in the system that is being modeled and are therefore also called behavior diagrams. Since stakeholders have many issues to consider and manage, it is important to communicate clearly what the overall system should do and to map out process flows in a way that is easy to understand. For this, we give an activity diagram of our system, including an overview of how the interceptor drone will work. By doing this, we hope to demonstrate the logic of our system and also model some of the software architecture elements such as methods, functions and the operation of the drone.

Regulations

The world’s airspace is divided into multiple segments, each of which is assigned to a specific class. The International Civil Aviation Organization (ICAO) specifies this classification, to which most nations adhere. In the US, however, there are also special rules and other regulations that apply to the airspace for reasons of security or safety.

The current airspace classification scheme is defined in terms of flight rules, IFR (instrument flight rules), VFR (visual flight rules) or SVFR (special visual flight rules), and in terms of the interactions between aircraft and ATC (air traffic control). Generally, different airspaces allocate the responsibility for avoiding other aircraft to either the pilot or ATC.

ICAO adopted classifications

Note: These are the ICAO definitions.

- Class A: All operations must be conducted under IFR. All aircraft are subject to ATC clearance. All flights are separated from each other by ATC.

- Class B: Operations may be conducted under IFR, SVFR, or VFR. All aircraft are subject to ATC clearance. All flights are separated from each other by ATC.

- Class C: Operations may be conducted under IFR, SVFR, or VFR. All aircraft are subject to ATC clearance (country-specific variations notwithstanding). Aircraft operating under IFR and SVFR are separated from each other and from flights operating under VFR, but VFR flights are not separated from each other. Flights operating under VFR are given traffic information in respect of other VFR flights.

- Class D: Operations may be conducted under IFR, SVFR, or VFR. All flights are subject to ATC clearance (country-specific variations notwithstanding). Aircraft operating under IFR and SVFR are separated from each other, and are given traffic information in respect of VFR flights. Flights operating under VFR are given traffic information in respect of all other flights.

- Class E: Operations may be conducted under IFR, SVFR, or VFR. Aircraft operating under IFR and SVFR are separated from each other, and are subject to ATC clearance. Flights under VFR are not subject to ATC clearance. As far as is practical, traffic information is given to all flights in respect of VFR flights.

- Class F: Operations may be conducted under IFR or VFR. ATC separation will be provided, so far as practical, to aircraft operating under IFR. Traffic Information may be given as far as is practical in respect of other flights.

- Class G: Operations may be conducted under IFR or VFR. ATC has no authority but VFR minimums are to be known by pilots. Traffic Information may be given as far as is practical in respect of other flights.

Special Airspace: these may limit pilot operation in certain areas. These consist of Prohibited areas, Restricted areas, Warning Areas, MOAs (military operation areas), Alert areas and Controlled firing areas (CFAs), all of which can be found on the flight charts.

Note: Classes A–E are referred to as controlled airspace. Classes F and G are uncontrolled airspace.

Currently, each country is responsible for enforcing its own set of restrictions and regulations for flying drones, as there are no EU laws on this matter. In most countries, there are two categories of drone pilots: recreational drone pilots (hobbyists) and commercial drone pilots (professionals). Depending on the use of the drone, certain regulations apply and there may even be permits that a pilot needs to obtain before flying.

For example, in The Netherlands, recreational drone pilots are allowed to fly at a maximum altitude of 120 m (only in Class G airspace) and need special permission to fly at a higher altitude. Moreover, the drone needs to remain in sight at all times and the maximum takeoff weight is 25 kg. Recreational pilots do not need a licence, but flying at night requires special approval. Commercial drone pilots need one or more permits, depending on the situation. The RPAS (remotely piloted aircraft system) certificate is the most common licence and allows pilots to fly drones for commercial use. The maximum height, distance and takeoff weight limits are higher than for recreational use, but night-time flying still requires special approval and drones still need to be flown in Class G airspace.
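The recreational rules listed above can be expressed as a simple pre-flight check. The following is only an illustrative sketch: the `FlightPlan` class, its field names and the function name are hypothetical, the limits are taken from the paragraph above, and it is in no way a substitute for the actual regulations.

```python
from dataclasses import dataclass


@dataclass
class FlightPlan:
    """Hypothetical description of a planned recreational flight."""
    max_altitude_m: float
    airspace_class: str
    takeoff_weight_kg: float
    stays_in_sight: bool
    at_night: bool


def recreational_rule_violations(plan, has_altitude_permission=False,
                                 has_night_approval=False):
    """Return a list of the rules from the text that the plan would break."""
    violations = []
    if plan.airspace_class.upper() != "G":
        violations.append("flight must take place in Class G airspace")
    if plan.max_altitude_m > 120 and not has_altitude_permission:
        violations.append("altitude above 120 m needs special permission")
    if plan.takeoff_weight_kg > 25:
        violations.append("takeoff weight exceeds 25 kg")
    if not plan.stays_in_sight:
        violations.append("drone must remain in sight at all times")
    if plan.at_night and not has_night_approval:
        violations.append("night flight needs special approval")
    return violations


if __name__ == "__main__":
    plan = FlightPlan(150, "G", 3.0, True, False)
    for problem in recreational_rule_violations(plan):
        print(problem)
```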

Apart from these rules, there are certain drone ban zones in which flying is strictly forbidden: state institutions, federal or regional authority constructions, airport control zones, industrial plants, railway tracks, vessels, crowds of people, populated areas, hospitals, operation sites of police, military or search and rescue forces, and finally the Dutch Caribbean islands of Bonaire, St. Eustatius and Saba. Failing to abide by the rules may result in a warning or a fine. The drone may also be confiscated. The amount of the fine or the punishment depends on the type of violation. For example, the judicial authorities will consider whether the drone was being used professionally or for hobby purposes, and whether people have been endangered.

As is usually the case with newly emerging technologies, the rules and regulations fail to keep up with the technological advancements. However, recent developments in the European Parliament aim to create a unified set of laws concerning the use of drones for all European countries. A recent study[9] suggests that the rapidly developing drone sector will generate up to 150000 jobs by 2050 and that in the future this industry could account for 10% of the EU’s aviation market, which amounts to 15 billion euros per year. Therefore, there is a clear need to change the current regulation, which in some cases complicates cross-border trade in this fast-growing sector. As shown in the previous example, unmanned aircraft weighing less than 25 kg (drones) are regulated at a national level, which leads to inconsistent standards across different countries. Following a four-month consultation period, the European Union Aviation Safety Agency (EASA) published a proposal[10] for a new regulation for unmanned aerial systems operation in the open (recreational) and specific (professional) categories. On 28 February 2019, the EASA Committee gave its positive vote to the European Commission proposal for implementing the regulations, which are expected to be adopted at the latest on 15 March 2019. Although these are still small steps, the EASA is working on enabling safe operations of unmanned aerial systems (UAS) across Europe’s airspaces and the integration of these new airspace users into an already busy ecosystem.

The Interceptor Drone

Catching a Drone

The next step is to look at the device which we use to intercept the drone. This could be done with another drone, as we suggested above, but there is also another option: shooting the drone down with a specialized launcher, like the ‘Skywall 100’ from OpenWorks Engineering.[11] This British company invented a net launcher which is specialized in shooting down drones. It is operated manually and has a short reload time. This way, taking down the drone is easy and fast, but it has two big problems. The first one is that the drone falls to the ground after it is shot down. It could fall onto people or, even worse, inflict enormous damage when the drone is armed with explosives. Therefore the ‘Skywall 100’ cannot be used in every situation. The second problem is that this launcher is operated manually, so a human life can be at risk in situations where an armed drone must be taken down.

This leaves two alternatives in which another drone is used to catch the violating drone. No human lives will be at risk and the violating drone can be delivered to a desired place. The first option is an interceptor drone which deploys a net in which the drone is caught. This is an existing idea: a French company named MALOU-tech has built the Interceptor MP200.[12] But this way of catching a drone has some drawbacks. On the one hand, this interceptor drone can catch a violating drone and deliver it to a desired place. On the other hand, the relatively big interceptor drone must be as fast and agile as the smaller drone, which is hard to achieve. Also, the net is quite rigid, and when the net collides with the violating drone, the interceptor drone must remain stable and be able to regain its balance, otherwise it will fall to the ground. Another problem is that the violating drone is caught in the net, but not sealed in it. It can easily fall out of the net, or not be caught at all: drones with a frame that protects the rotor blades well cannot be caught because the rotor blades cannot get stuck in the net.

Drones like the Interceptor MP200 are good solutions for violating drones which need to be taken down, but we think that there is a better option. When we mount a net launcher on the interceptor drone and remove the big net, performance improves because of the lower weight. Moreover, when the shot is aimed correctly, the violating drone is completely stuck in the net and can’t get out, even if it has blade guards. This is important when the drone is equipped with explosives. In this case we must be sure that the armed drone is neutralized completely, meaning that we know for sure that it cannot escape or crash in an unforeseen location. This is an existing idea, and Delft Dynamics built such a drone.[13] This drone however is, in contrast to our proposed design, not fully autonomous.

Building the Interceptor Drone

Building a drone, like most other current-day high-tech systems, consists of hardware as well as software design. In this part we would like to focus on the software and give a general overview of the hardware, because our interests lie more with the software, where a lot more innovative leaps can still be made. In the hardware part we provide an overview of drone design considerations and a rough estimate of what such a drone would cost. In the software part we look at the software that makes this drone autonomous: how to detect the intruder, how to target it and how to confirm that it has been captured. A dashboard app is also shown, which displays the real-time system info and provides critical controls.

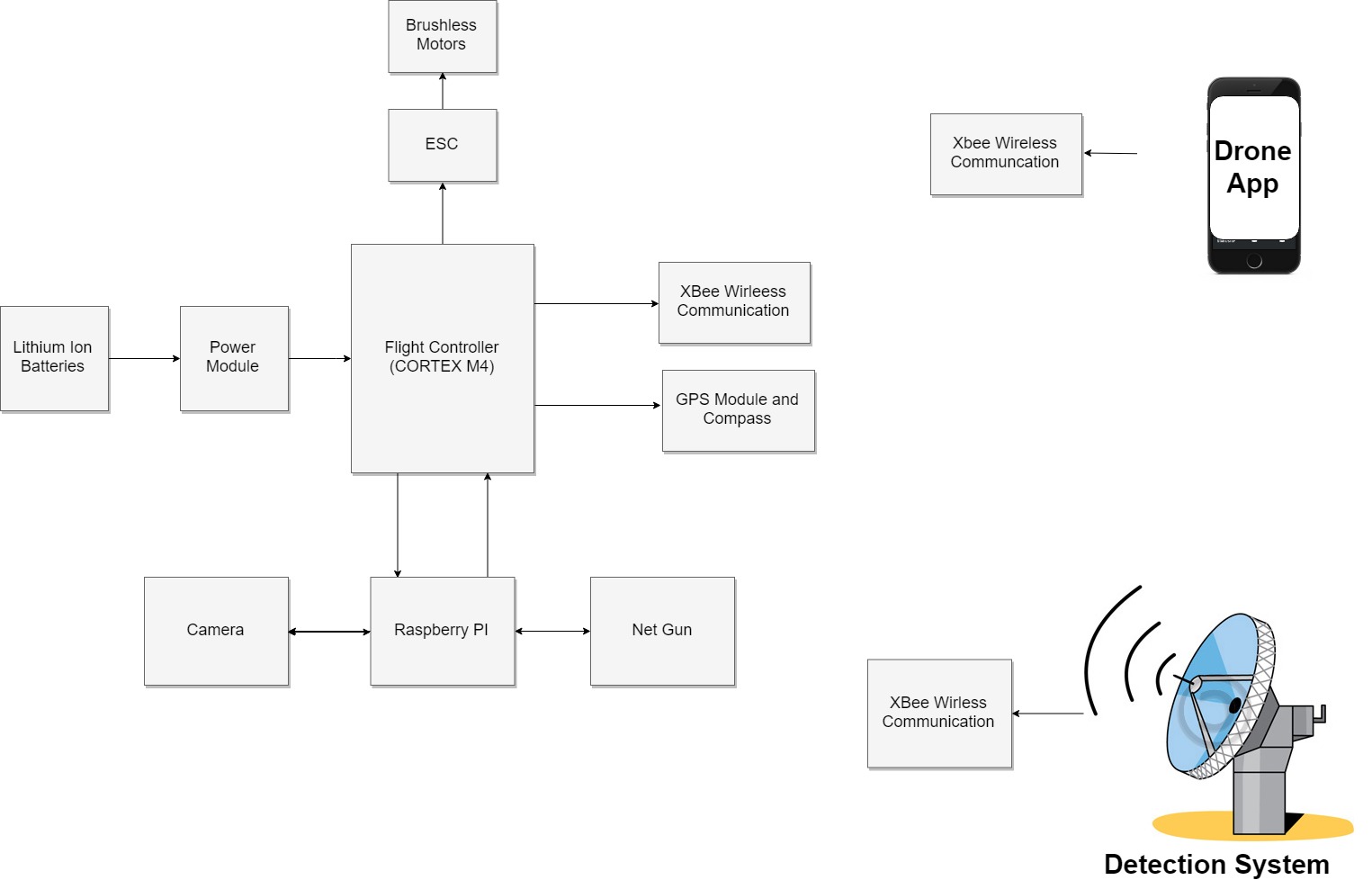

Hardware/Software interface

The main unit of the drone is the flight controller, which is built around an ST Cortex M4 processor. This unit serves as the brain of the drone. The drone itself is powered by high-capacity lithium-ion batteries. The battery power goes through a power module, which makes sure the drone is fed constant power while measuring the voltage and current going through it, so that it can detect a power anomaly or when the drone needs recharging. The code for autonomous flight is programmed into the Cortex M4 chip, which through the Electronic Speed Controllers (ESCs) controls the brushless DC motors that spin the propellers to make the drone fly. Each motor has its own ESC, meaning that each motor is controlled separately.

To obtain the drone’s position in real time, a GPS module is used, which provides a fairly accurate location for outdoor flight. This module communicates with the Cortex M4 to process and update the location of the drone in relation to the target location.
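As an illustration of how a drone position can be related to a target position, the sketch below computes the great-circle distance and initial bearing between two GPS fixes with the haversine formula. It assumes a spherical Earth and plain latitude/longitude in degrees; the function name is hypothetical and this is not the flight controller's actual code.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius, spherical approximation


def distance_and_bearing(lat1, lon1, lat2, lon2):
    """Distance in metres and initial bearing in degrees (0 = north,
    90 = east) from point 1 (the drone) to point 2 (the target)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)

    # Haversine formula for the central angle between the two points.
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    distance = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(min(1.0, a)))

    # Initial bearing along the great circle.
    y = math.sin(dlam) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlam))
    bearing = (math.degrees(math.atan2(y, x)) + 360.0) % 360.0
    return distance, bearing


if __name__ == "__main__":
    # Two made-up coordinates, used purely as example input.
    d, b = distance_and_bearing(51.4480, 5.4900, 51.4500, 5.4950)
    print(f"target is {d:.0f} m away at bearing {b:.0f} deg")
```

For the short ranges involved in an interception, a flat-Earth approximation would also do; the haversine form is used here only because it stays valid at any distance.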

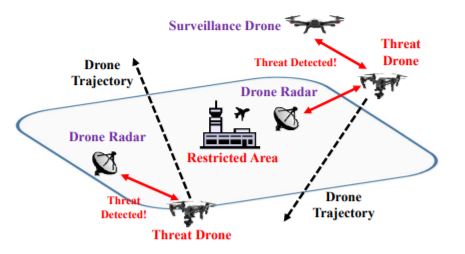

To be able to detect an intruder drone with the help of computer vision, a Raspberry Pi is placed in the drone to offer the extra computation power needed. The Raspberry Pi is connected to a camera, with which it can detect the attacking drone. After the target has been locked, the net is launched towards the attacking drone. The drone communicates with a system located near the area of surveillance. It uses XBee wireless communication to do so, with which it receives and transmits data. The drone uses this first for communicating with the app, where all the data of the drone is displayed. The other use of wireless communication is to communicate with the detection system placed around the area. This can consist of a radar-based system or external cameras, connected to the main server, which then communicates the rough location of the intruder drone back.

Hardware

From the requirements a general design of the drone can be created. The main requirements concerning the hardware design of the drone are:

- Velocity of 40km/h

- Flight time of 10 minutes

- FPV live feed

- Stop rogue drone

- Minimal weight

- Cargo capacity of 8kg

- Portability

- Fast deployment

- At minimal cost

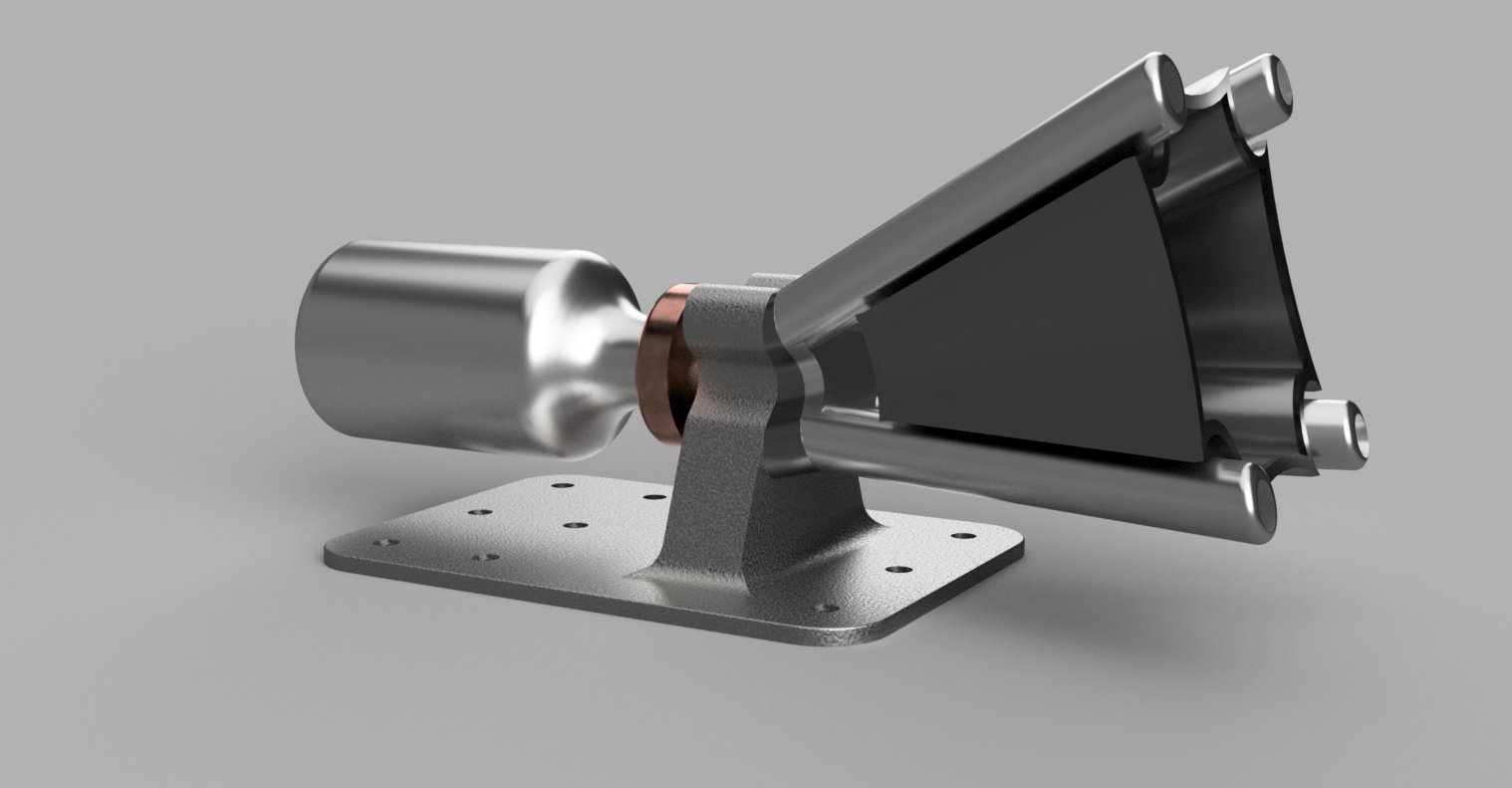

A big span is required in order to stay stable while carrying a heavy load (i.e. an intruder with heavy explosives). This is due to the higher leverage of rotors at larger distances. But with a larger format, portability and maneuverability decrease. Based on the intended maximum target weight of 8kg, a drone of roughly 80cm has been chosen. The chosen design uses eight rotors instead of the more common four or six (also called an octocopter, [3]) to increase maneuverability and carrying capacity. As a consequence, the flight time will decrease and costs will increase. Flight time however is not a big concern, as a typical interceptor routine will not take more than ten minutes. For quick recharging, a system with automated battery swapping can be deployed.[14] Another important aspect of this drone is its ability to track another drone. To do so, it is equipped with two cameras. The FPV camera has a low resolution and low latency and is used for tracking. Because of its low resolution, it can also be streamed to the base station in real time. The drone is also equipped with a high-resolution camera which captures images at a lower framerate. These images are streamed to the base station and can be used for identification of intruders. Both cameras are mounted to the drone on a gimbal to keep their feeds steady at all times, which makes targeting and identification easier. The drone is equipped with a net launcher in order to stop the targeted intruder, as described in the section “Catching a Drone”.

To clarify our hardware design, we have made a 3D model of the proposed drone and the net launcher. This model is based on work from Felipe Westin on GrabCad [4] and extended with a net launcher.

Bill of costs

If we wanted to build the drone, we would start with the frame. This frame must be able to hold eight motors. Suitable frames can be bought at a price of around 2000 euros. The next step is to fit motors into this frame. Our drone has to be able to follow the violating drone, so it has to be fast. Motors with 1280kv are able to reach a velocity of 80 kilometers per hour. These motors cost about 150 euros each. The motors need power, which comes from the battery. The price of the battery is a rough estimate, because we do not know exactly how much power our drone needs and how much it is going to weigh. Intelligent flight batteries differ in capacity. Because this is a price estimate, we take a battery with a capacity of 4500mAh which costs about 200 euros. Two cameras are also needed, as explained before. FPV cameras for racing drones cost about 50 euros. High-resolution cameras can cost as much as you want, but we need to keep the price reasonable, so the camera which we use gives us 5.2K Ultra HD at 30 frames per second. These two cameras and the net launcher need to be attached to a gimbal. A strong and stable enough gimbal costs about 2000 euros. Further costs are electronics such as a flight controller, ESCs, antennas, transmitters and cables.

| Parts | Number | Estimated cost |
|---|---|---|
| Frame and landing gear | 1 | €2000,- |
| Motor and propeller | 8 | €1200,- |
| Battery | 1 | €200,- |
| FPV-camera | 1 | €200,- |
| High resolution camera | 1 | €2000,- |
| Gimbal | 1 | €2000,- |
| Net launcher | 1 | €1000,- |
| Electronics | | €1000,- |
| Other | | €750,- |
| Total | | €10350,- |
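The total in the table can be checked with a few lines of Python. The line items below are copied from the table; the variable and function names are only illustrative, and the 8000 euro figure is the preferred price mentioned with requirement R22.

```python
# (part, quantity, cost of the whole line in euros); quantity is None
# where the table leaves it open.
BILL_OF_COSTS = [
    ("Frame and landing gear", 1, 2000),
    ("Motor and propeller", 8, 1200),
    ("Battery", 1, 200),
    ("FPV-camera", 1, 200),
    ("High resolution camera", 1, 2000),
    ("Gimbal", 1, 2000),
    ("Net launcher", 1, 1000),
    ("Electronics", None, 1000),
    ("Other", None, 750),
]

PREFERRED_PRICE_EUR = 8000  # rough target from requirement R22


def total_cost(items):
    """Sum the line costs of all parts."""
    return sum(cost for _part, _quantity, cost in items)


if __name__ == "__main__":
    total = total_cost(BILL_OF_COSTS)
    print(f"total: {total} euros")
    print(f"difference to preferred price: {total - PREFERRED_PRICE_EUR} euros")
```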

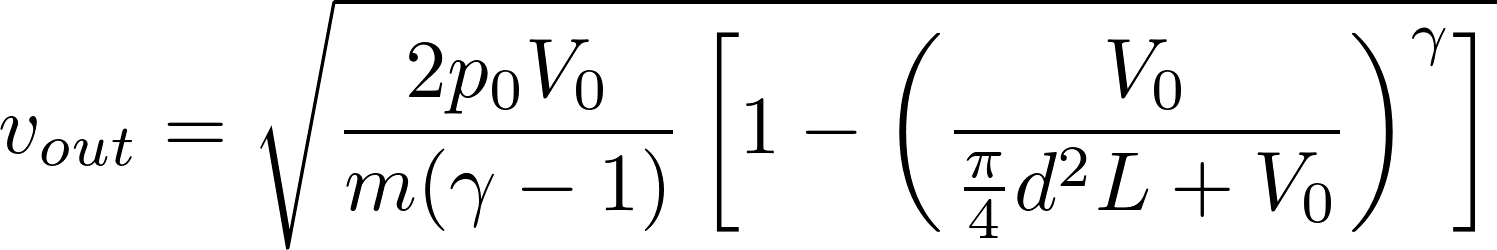

Net launcher mathematical modeling

The net launcher of the drone uses a pneumatic launcher to shoot the net. To fully understand its capabilities and how to design it, a model needs to be derived first.

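From Newton's second law, the sum of forces acting on the projectile attached to the corners of the net is:

[math]\displaystyle{ F = m \frac{dv}{dt} }[/math]

where [math]\displaystyle{ m }[/math] is the mass of the projectile, [math]\displaystyle{ v }[/math] its speed and

[math]\displaystyle{ F = pA }[/math]

with [math]\displaystyle{ p }[/math] being the pressure on the projectile and [math]\displaystyle{ A }[/math] the cross-sectional area. Using these equations we calculate that:

[math]\displaystyle{ \frac{dv}{dt} = \frac{pA}{m} }[/math]

For the pneumatic launcher to function we need a chamber with carbon dioxide, which is used to push the projectile. We assume that no heat is lost through the tubes of the net launcher and that the gas expands adiabatically. The equation describing this process is:

[math]\displaystyle{ p V^{\gamma} = p_0 V_0^{\gamma} }[/math]

where [math]\displaystyle{ p_0 }[/math] is the initial pressure, [math]\displaystyle{ V_0 }[/math] is the initial volume of the gas, [math]\displaystyle{ \gamma }[/math] is the ratio of the specific heats at constant pressure and at constant volume, which is 1.4 for air between 26.6 degrees and 49 degrees, and

[math]\displaystyle{ V = V_0 + A y }[/math]

is the volume at any point in time, with [math]\displaystyle{ y }[/math] the distance the projectile has travelled along the tube. After adjusting the previous formulas, and using [math]\displaystyle{ \frac{dv}{dt} = v \frac{dv}{dy} }[/math], we get the following:

[math]\displaystyle{ v \frac{dv}{dy} = \frac{p_0 A}{m} \left( \frac{V_0}{V_0 + A y} \right)^{\gamma} }[/math]

After integrating from [math]\displaystyle{ y = 0 }[/math] to [math]\displaystyle{ y = L }[/math], where [math]\displaystyle{ L }[/math] is the length of the projectile tube, we find the speed at which the projectile leaves the muzzle, given by:

[math]\displaystyle{ v = \sqrt{ \frac{2 p_0 V_0}{m (\gamma - 1)} \left[ 1 - \left( \frac{V_0}{V_0 + A L} \right)^{\gamma - 1} \right] } }[/math]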

This equation helps us to design the net launcher, more specifically its diameter and length. As the net needs to be shot at a certain speed, we have to adjust these parameters accordingly so that the required speed is met.
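To show how such a model can be used for sizing the launcher, the sketch below evaluates the muzzle speed for an adiabatically expanding gas, v = sqrt(2 p0 V0 / (m (γ - 1)) · [1 - (V0 / (V0 + A L))^(γ - 1)]), and inverts it for the tube length. The formula follows from the assumptions stated in the text (pressure force only, no friction, no heat loss); back-pressure from the atmosphere is also neglected. All function names and the numbers in the example are hypothetical.

```python
import math


def muzzle_speed(p0, gas_volume, area, length, mass, gamma=1.4):
    """Muzzle speed in m/s for initial pressure p0 (Pa), initial gas
    volume (m^3), tube cross-section (m^2), tube length (m) and
    projectile mass (kg)."""
    expansion = (gas_volume / (gas_volume + area * length)) ** (gamma - 1.0)
    work = p0 * gas_volume / (gamma - 1.0) * (1.0 - expansion)
    return math.sqrt(2.0 * work / mass)


def required_tube_length(speed, p0, gas_volume, area, mass, gamma=1.4):
    """Tube length in m needed to reach the given muzzle speed."""
    k = (gamma - 1.0) * mass * speed ** 2 / (2.0 * p0 * gas_volume)
    if k >= 1.0:
        # Even an infinitely long tube cannot deliver this much energy.
        raise ValueError("speed not reachable with this gas charge")
    return gas_volume / area * ((1.0 - k) ** (-1.0 / (gamma - 1.0)) - 1.0)


if __name__ == "__main__":
    # Made-up example: 10 bar, 0.1 litre chamber, 1 cm^2 bore, 50 g projectile.
    p0, vol, area, mass = 1.0e6, 1.0e-4, 1.0e-4, 0.05
    print(f"speed with a 0.2 m tube: {muzzle_speed(p0, vol, area, 0.2, mass):.1f} m/s")
    print(f"length for 60 m/s: {required_tube_length(60.0, p0, vol, area, mass):.2f} m")
```

The inverse function makes the trade-off visible: for a fixed gas charge the achievable speed saturates, so beyond some point a higher pressure or larger chamber helps more than a longer tube.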

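As the net follows a projectile motion, assuming that friction and tension forces are negligible, we get the following equations for the x and y position at any point in time t:

[math]\displaystyle{ x(t) = v_0 \cos(\alpha) \, t }[/math]

[math]\displaystyle{ y(t) = y_0 + v_0 \sin(\alpha) \, t - \frac{1}{2} g t^2 }[/math]

where [math]\displaystyle{ v_0 }[/math] is the launch speed, [math]\displaystyle{ y_0 }[/math] is the initial height, [math]\displaystyle{ \alpha }[/math] is the angle that the projectile forms with the x-axis at launch and [math]\displaystyle{ g }[/math] is the gravitational acceleration.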

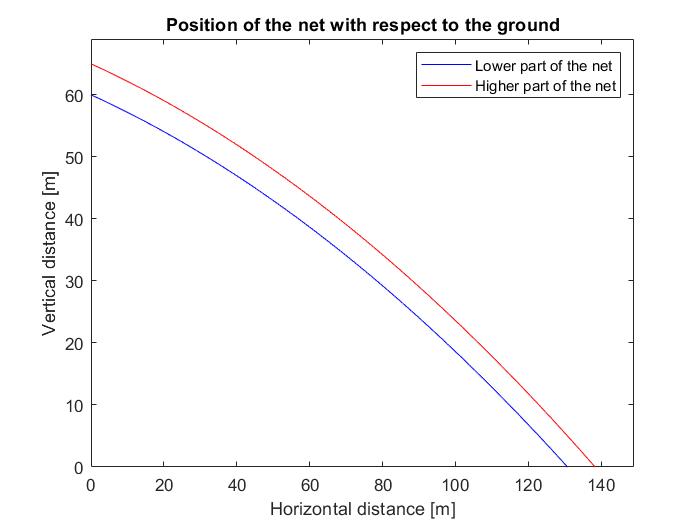

Assuming a speed of 60 m/s, we can plot the position of the net at any point in time, which helps us predict where our drone needs to be in order to successfully launch the net at the other drone.

From the plot, it is clear that the drone can capture the drone it is attacking from a very large distance, as far as 150m. Although this is an advantage of our drone, shooting from such a distance must only be seen as a last option, as the model does not predict the projectile’s path perfectly. Assumptions such as the use of an ideal gas or the neglect of air friction introduce inaccuracies, which are only amplified at larger distances.
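A plot like the one described can be generated from the drag-free projectile model, x(t) = v0 cos(α) t and y(t) = y0 + v0 sin(α) t - g t² / 2. The sketch below is a minimal version under exactly those assumptions (no air friction, no tension in the net); the function names and the launch height in the example are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def net_position(t, speed, angle_rad, y0):
    """Position (x, y) in metres of the net t seconds after launch."""
    x = speed * math.cos(angle_rad) * t
    y = y0 + speed * math.sin(angle_rad) * t - 0.5 * G * t * t
    return x, y


def flight_time(speed, angle_rad, y0, y_target=0.0):
    """Time in seconds at which the net passes height y_target on its
    way down (the later root of y(t) = y_target)."""
    vy = speed * math.sin(angle_rad)
    discriminant = vy * vy + 2.0 * G * (y0 - y_target)
    if discriminant < 0.0:
        raise ValueError("the net never reaches the target height")
    return (vy + math.sqrt(discriminant)) / G


if __name__ == "__main__":
    # Horizontal shot at 60 m/s from an assumed height of 10 m.
    t_end = flight_time(60.0, 0.0, 10.0)
    for i in range(6):
        t = t_end * i / 5
        x, y = net_position(t, 60.0, 0.0, 10.0)
        print(f"t={t:.2f} s  x={x:6.1f} m  y={y:5.1f} m")
```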

Software

Automatic target recognition and following

Many thanks to Duarte Antunes for helping us with drone detection and drone control theory.

For the drone to be able to track and target the intruder, it needs to know where the intruder is at any point in time. To do so, various techniques have been developed over the years all belonging to the area of Automatic Target Recognition (also referred to as ATR). There has been a high need for this research field for a long time. It has applications in for example guided missiles, automated surveillance and automated vehicles.

ATR started with radars and manual operators, but as high-quality cameras and computation power became more accessible, these have taken a big part in today’s development. A camera can supply large amounts of information at low cost and weight, which is why it has been chosen for this drone. Additionally, the camera is mounted on a gimbal to make the video stream more stable, which lowers the amount of filtering needed and thereby increases the quality of the information.

This large amount of information does require a lot of filtering to extract the required information from the camera images. Doing so is computationally heavy, although a lot of effort has been made to reduce the computational load. To handle this, our drone carries a Raspberry Pi computation unit next to its regular flight controller to do the heavy image processing. One example algorithm is contour tracking, which detects the boundary of a predefined object. Typically, the computational complexity of these algorithms is low, but their performance in complex environments (like when mounted to a moving parent or tracking objects that move behind obstructions) is also low. An alternative technology is based on particle filters. Using a particle filter on color-based information, a robust estimate of the target's position, orientation and scale can be made. [15]

Another option is a neural network trained to detect drones. This way, a pretrained network could run on the Raspberry Pi on the drone and detect the other drone in real time. The advantages of these networks are, among others, that they can cope with varying environments and low-quality images. A downside of these networks is their higher computational cost. However, other methods like Haar cascades cannot cope very well with changing environments, partially visible objects or objects that change appearance (like rotating rotors), so a neural network remains the more suitable choice. [5] A very popular network is YOLO (You Only Look Once), which is open-source and available for the common computer vision library OpenCV. This network must be trained with a big dataset of images that contain drones, from which it will learn what a drone looks like.

To actually track the intruding drone using visual information, one can use one of two approaches. One way is to determine the 3D pose of the drone and use this information to generate the error values for a controller, which in turn tries to follow this target. Another way is to use the 2D position of the target in the camera frame and use the distance of the target from the center of the frame as the error term of the controller. To complete this last method, you need a way to know the distance to the target. This can be done with an ultrasonic sensor, which is cheap and fast, with stereo cameras, or with an approximation based on the perceived size of the drone in the camera frame.
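The size-based distance approximation can be sketched with the pinhole camera model: an object of known real width W that appears w pixels wide is at distance d ≈ f · W / w, where f is the focal length in pixels. The functions below are an illustration under that model; the names, the field of view and the drone width in the example are assumptions, and lens distortion is ignored.

```python
import math


def focal_length_px(image_width_px, horizontal_fov_rad):
    """Focal length in pixels from image width and horizontal field of view."""
    return (image_width_px / 2.0) / math.tan(horizontal_fov_rad / 2.0)


def distance_from_size(real_width_m, perceived_width_px, focal_px):
    """Approximate distance in metres to an object of known width."""
    if perceived_width_px <= 0:
        raise ValueError("perceived width must be positive")
    return focal_px * real_width_m / perceived_width_px


if __name__ == "__main__":
    # Assumed values: 640 px wide image, 90 degree field of view,
    # target drone 0.5 m wide, detected bounding box 40 px wide.
    f = focal_length_px(640, math.radians(90))
    print(f"estimated distance: {distance_from_size(0.5, 40, f):.1f} m")
```

The estimate depends on knowing the real size of the intruder, so in practice it would only be as good as the guess of the drone type.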

Which controller to use depends on the required speed, the complexity of the system and the computational weight. LQR and MPC are two popular control methods which are well suited to generate an optimal solution, even in complex environments. Unfortunately, they require a lot of tuning and are relatively computationally heavy. A well-known alternative is the PID controller, which cannot always generate the optimal solution but is very easy to tune and also easy to implement. Therefore, such a controller would best suit this project. For future versions, we do advise investigating more advanced controllers (like LQR and MPC).
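To illustrate why a PID controller is easy to implement, a minimal discrete-time version is sketched below. It is a textbook form with an optional symmetric output limit, not the controller used in the demo; the class name, gains and the toy plant in the example are assumptions.

```python
class PID:
    """Minimal discrete PID controller: u = kp*e + ki*sum(e*dt) + kd*de/dt."""

    def __init__(self, kp, ki=0.0, kd=0.0, output_limit=None):
        self.kp = kp
        self.ki = ki
        self.kd = kd
        self.output_limit = output_limit  # clamp to [-limit, +limit] if set
        self._integral = 0.0
        self._previous_error = None

    def update(self, error, dt):
        """Return the control output for the current error and time step."""
        if dt <= 0:
            raise ValueError("dt must be positive")
        self._integral += error * dt
        if self._previous_error is None:
            derivative = 0.0  # no derivative information on the first call
        else:
            derivative = (error - self._previous_error) / dt
        self._previous_error = error

        output = self.kp * error + self.ki * self._integral + self.kd * derivative
        if self.output_limit is not None:
            output = max(-self.output_limit, min(self.output_limit, output))
        return output


if __name__ == "__main__":
    # Toy plant: the position changes at a rate equal to the control output.
    pid = PID(kp=2.0, ki=0.5, kd=0.05, output_limit=5.0)
    position, setpoint, dt = 0.0, 1.0, 0.01
    for _ in range(500):
        position += pid.update(setpoint - position, dt) * dt
    print(f"position after 5 s: {position:.3f}")
```

This sketch has no anti-windup: when the output saturates for a long time the integral term keeps growing, which a real implementation would usually limit.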

YOLO demo

To demonstrate the ATR capabilities of a neural network we have built a demo. This demo shows a neural network called YOLO (You Only Look Once) trained to detect humans. Based on the location of these humans in the video frame, a Python script outputs control signals to an Arduino. This Arduino controls a two-axis gimbal to which the camera is attached. This way the system can track a person in its camera frame. Due to the computational weight of this specific neural net and the inability to run it on a GPU, the performance is quite limited. In controlled environments, the demo setup was able to run at roughly 3 FPS. This meant that a person could easily move out of the camera frame, causing the system to “lose” the person. Video of the YOLO demo:

To also show a faster tracker we have built a demo that tracks so-called Aruco markers. These markers are designed for robotics and are easy for image recognition algorithms to detect. This means that the demo can run at a higher framerate. A new Python script determines the error as the distance from the center of the marker to the center of the frame and, using this, generates a control output for the Arduino. This Arduino in turn moves the gimbal. This way, the tracker tries to keep the target at the center of the frame. Video of the Aruco demo:
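The step from marker position to gimbal command could look roughly like the sketch below. It is not the code from the repository: the proportional gain, the servo limits, the sign conventions and in particular the text message format sent to the Arduino are all hypothetical, and the actual serial transmission is left out.

```python
def marker_error(marker_center, frame_size):
    """Normalised offset of the marker from the frame center, in [-1, 1]."""
    cx, cy = marker_center
    width, height = frame_size
    return (cx - width / 2) / (width / 2), (cy - height / 2) / (height / 2)


def update_gimbal(pan_deg, tilt_deg, error, gain_deg=5.0, limits=(0.0, 180.0)):
    """Move the pan and tilt angles proportionally to the error, clamped
    to the servo range. The signs depend on how the servos are mounted."""
    low, high = limits
    ex, ey = error
    new_pan = max(low, min(high, pan_deg + gain_deg * ex))
    new_tilt = max(low, min(high, tilt_deg + gain_deg * ey))
    return new_pan, new_tilt


def encode_command(pan_deg, tilt_deg):
    """Hypothetical wire format: 'P090T045' followed by a newline."""
    return f"P{round(pan_deg):03d}T{round(tilt_deg):03d}\n".encode("ascii")


if __name__ == "__main__":
    pan, tilt = 90.0, 90.0
    # Marker detected right of and below the center of a 640x480 frame.
    error = marker_error((480, 300), (640, 480))
    pan, tilt = update_gimbal(pan, tilt, error)
    print(encode_command(pan, tilt))
```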

See [6] for all code.

Confirmation of acquired target

One of the most important actions is shooting the net. But after that, the drone has to know what to do next. When it misses, it has to go back to base and reload the net gun. When the shot is aimed right and the intruder is caught, the drone should deliver it to a safe place, after which its mission has succeeded.

The drone needs to be sure whether it has caught the other drone or not. To do this, the drone runs a probability algorithm. This algorithm calculates a so-called ‘belief’ based on the information from the camera. This ‘belief’ is a prediction of the state. In this situation, we have two states: either we have caught the drone or we have not. The drone should act based on this belief. After that, a weight sensor is used to make the final decision on whether the violating drone has been captured. This can only be done when the net hangs more or less still underneath the drone. Waiting for the net to hang still takes time, which is why we first run the algorithm to determine what to do next. The drone starts acting upon the belief, and this belief is then confirmed or rejected by the weight sensor. If the two contradict each other, the information from the weight sensor overrules the information given by the algorithm.
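One common way to maintain such a two-state belief is a Bayes update per camera observation, with the weight sensor overriding the result once a reading is available. The sketch below illustrates this idea only; the detection probabilities, thresholds and function names are assumed values, not measured properties of the system.

```python
def update_belief(prior, net_seen_loaded,
                  p_seen_if_caught=0.9, p_seen_if_missed=0.2):
    """Bayes update of P(caught) after one camera observation.

    net_seen_loaded is True when the camera reports an object in the net.
    The two likelihoods are assumptions for illustration.
    """
    if net_seen_loaded:
        caught = p_seen_if_caught * prior
        missed = p_seen_if_missed * (1.0 - prior)
    else:
        caught = (1.0 - p_seen_if_caught) * prior
        missed = (1.0 - p_seen_if_missed) * (1.0 - prior)
    return caught / (caught + missed)


def target_captured(belief, measured_load_kg=None,
                    load_threshold_kg=0.2, belief_threshold=0.5):
    """Decide whether the intruder is captured.

    As long as no weight reading is available the belief decides; once
    the net hangs still, the weight sensor overrules the belief.
    """
    if measured_load_kg is not None:
        return measured_load_kg >= load_threshold_kg
    return belief >= belief_threshold


if __name__ == "__main__":
    belief = 0.5
    for observation in (True, True, False, True):
        belief = update_belief(belief, observation)
    print(f"belief after four frames: {belief:.2f}")
    print("act as if captured:", target_captured(belief))
    print("final decision with 0.0 kg load:", target_captured(belief, 0.0))
```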

Base Station

The base station is where all the data processing for drone detection happens and where all the decisions are made. Drone detection is the most important part of the system, as it should always be accurate to prevent any unwanted situation.

There is a lot of state-of-the-art technology that can be employed for drone detection, such as mmWave Radar [1], UWB Radar [2], Acoustic Tracking [3] and Computer Vision [4]. For use in an airport environment, radar-based systems work better, as they can identify a drone without problems in any weather condition or even in instances of high noise, with which Computer Vision and Acoustic Tracking respectively can have problems.

As for Acoustic Tracking, there is a lot of interference in an airport, from airplanes, jets and numerous other machines working there. For a system to work perfectly, it needs to detect all of these noises and differentiate them from the ones that a drone makes, which can prove to be troublesome. With Computer Vision, a high degree of feasibility can be achieved with the use of special cameras equipped with night vision, thermal sensors or Infiniti Near Infrared Cameras. Such cameras are suggested to be used in addition to radar technology for detecting drones, using data fusion techniques to get the best result, since if only Computer Vision is used, a large number of cameras is needed and the distance to the drone is not computed as accurately as with a radar system.

The radar systems considered for drone detection are mmWave and UWB Radar. While mmWave offers a 20% higher detection range, UWB radar systems give better results for distance detection and drone distinguishing, so UWB is the preferred type of radar for our application. Installing more radar systems can involve higher costs, but since the user of the system is an airport, accuracy is more important than cost. Use of such radars enables the user to accurately analyze the Doppler spectrum, which is important for distinguishing birds from drones and for knowing the type of drone that is intruding in the airport area. By studying the characteristics of UWB radar echoes from a drone and testing with different types of drones, a lot of information can be derived about the attacking drones, such as the drone’s range, radial velocity, size, type, shape and altitude. A database with all this data can be formed, which makes it easier to detect an attacking drone once one is located near an airport. 3D localization is realized with this kind of radar by placing transceivers in the ground station and another in our drone. The considered approach uses the two-way time-of-flight technique and can work at communication ranges up to 80 m. Finally, a Kalman filter can be used to track the range of the target, since the measurements will also contain noise which we want to filter. Results have shown that the noisy range likelihood estimates can be smoothed to obtain an accurate range estimate to the target, i.e. the attacking drone.
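The two steps mentioned last, turning a two-way time-of-flight measurement into a range and smoothing noisy ranges with a Kalman filter, can be sketched as follows. This is a deliberately simple scalar filter with a random-walk model for the range; the class and function names and all noise variances are assumptions, and a real tracker would more likely include the radial velocity in its state.

```python
import random

SPEED_OF_LIGHT = 299792458.0  # m/s


def two_way_tof_range(round_trip_s, reply_delay_s=0.0):
    """Range in metres from a two-way time-of-flight measurement."""
    return SPEED_OF_LIGHT * (round_trip_s - reply_delay_s) / 2.0


class RangeKalmanFilter:
    """Scalar Kalman filter that models the range as a slow random walk."""

    def __init__(self, initial_range, initial_variance,
                 process_variance, measurement_variance):
        self.range = initial_range
        self.variance = initial_variance
        self.process_variance = process_variance
        self.measurement_variance = measurement_variance

    def update(self, measured_range):
        # Predict: the range may have drifted since the last measurement.
        self.variance += self.process_variance
        # Correct: blend prediction and measurement using the Kalman gain.
        gain = self.variance / (self.variance + self.measurement_variance)
        self.range += gain * (measured_range - self.range)
        self.variance *= 1.0 - gain
        return self.range


if __name__ == "__main__":
    random.seed(0)
    true_range = 50.0
    kf = RangeKalmanFilter(0.0, 1000.0, 1e-4, 1.0)
    for _ in range(200):
        estimate = kf.update(true_range + random.gauss(0.0, 1.0))
    print(f"filtered range: {estimate:.2f} m (true value {true_range} m)")
```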

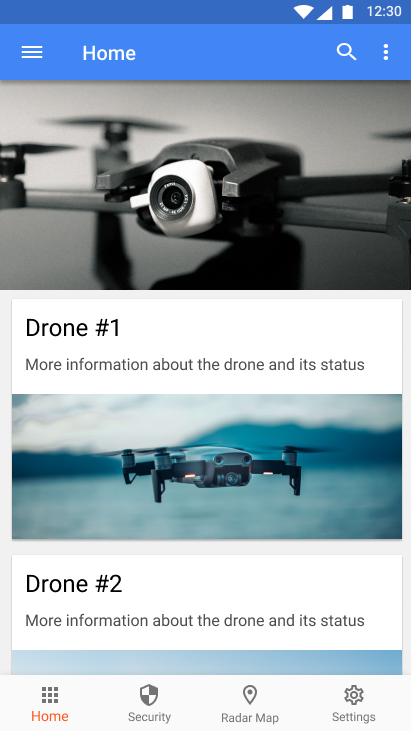

Application

For the interceptor drone system a mobile application will be developed from which the most important statistics about the interceptor drones can be viewed and some key commands can be sent to the drone fleet. This application will also communicate with the interceptor drone (or drones if multiple are deployed) in real time, thus making the whole operation of intercepting and catching an intruder drone much faster. Being able to see the stats of the various interceptor drones in one’s fleet is also very useful for the users and for the maintenance personnel who will need to service the drones. A good example of how useful the application will be: when a drone returns from a mission, its battery level can immediately be checked using the app and the drone can be charged accordingly.

Note: there will be no commands being sent from the application to the drone. The application's main purpose is to display the various data coming from the drones such that users can better analyze the performance of the drones and maintenance personnel can service the drones quicker.

For building the application, the UI wireframe was first designed. This will make building the final application easier since the overall layout is already known. The UI wireframe for the application’s main pages is shown below together with some details for each of the application's screens explaining the overall functionality.

Securing the Application

Since the application will allow for configuration of sensitive information regarding the interceptor drone fleet, users will be required to log in prior to using the app. This ensures that a user can only make modifications and change settings for the drone fleet that they own or have permissions for. The accounts will require that the owner of the interceptor drone system is verified prior to using the application, which also helps control who will have access to this security system.

The overall security of the system is very important for us. As one can imagine, an interceptor drone or even a fleet of them could be used with malicious intent by some. Therefore, both the application and the ground control stations need to be secured in order to prevent such attacks from taking place. This can be done by requiring the users to make an account for using the application and by having the users verified when installing the interceptor drone system, as described above. Moreover, the protocols used by the drones to send data to the ground station will be secured to avoid any possible attacks. The communication between all the subsystems will also happen in a network which is secured and closely monitored for suspicious traffic. Although we strive to build a package that is as secure as possible, this is not the main point of this section, and in the following we concentrate on the application’s user interface. The system security section provides more details as to how the whole system will be secured.

Home Page

This is where users get an overview of all the interceptor drones in their fleet. The screen displays a representative picture for each drone. By clicking on a drone’s card, the user is taken to that drone’s Status page. The Home page also has a bottom navigation bar to all the other pages in the application: Security, Location and Settings. When the user logs in to the application, the Home page is the first screen shown.

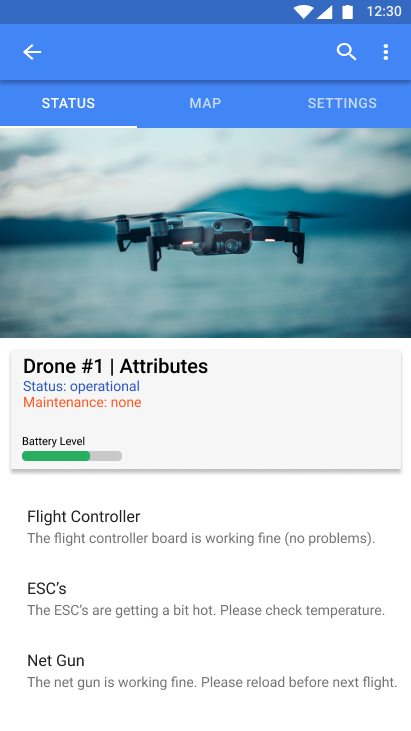

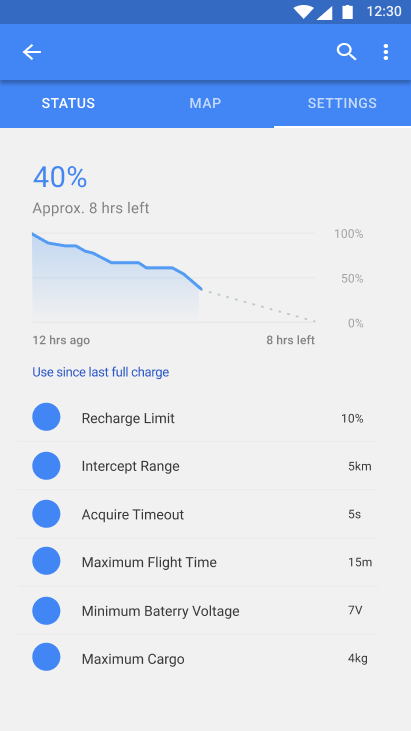

Status Page

Each drone has a Status page in the app. This can be accessed by clicking on a drone from the Home page. From this page, users can view whether the drone is operational or not. Any maintenance problems or error logs are also displayed here. This will be of tremendous help to the personnel who have to take care of and maintain the drone fleet. Moreover, the battery level of the drone is also displayed on this page. Each individual subsystem of the drone, such as the motors, electronic speed controllers, flight controller, cameras or the net launcher, will have its operational status displayed on this page.
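As a rough illustration of the data this page would display, the sketch below models one drone's status in Python. The class and field names (`DroneStatus`, `SubsystemStatus`, `battery_percent`, and so on) are hypothetical and are not taken from the project's code; the rule that a drone is operational only when every subsystem reports OK is likewise an assumption.

```python
from dataclasses import dataclass, field
from enum import Enum


class SubsystemStatus(Enum):
    OK = "ok"
    FAULT = "fault"


@dataclass
class DroneStatus:
    """Hypothetical record backing one drone's Status page."""
    drone_id: str
    battery_percent: float
    # Subsystem name -> status, e.g. "motors" or "net_launcher".
    subsystems: dict = field(default_factory=dict)
    # Free-text maintenance problems and error messages.
    error_log: list = field(default_factory=list)

    def is_operational(self) -> bool:
        # Assumption: operational means every subsystem reports OK.
        return all(s is SubsystemStatus.OK for s in self.subsystems.values())

    def faulty_subsystems(self) -> list:
        # Names of subsystems that maintenance personnel should inspect.
        return sorted(name for name, s in self.subsystems.items()
                      if s is SubsystemStatus.FAULT)
```

A Status page built on such a record could show `is_operational()` as the headline indicator and list `faulty_subsystems()` together with the error log for maintenance personnel.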

Map Page

The Map page can be accessed from the drone’s Status page. On the Map page the current location of the drone is displayed. The map can be dragged around for a better view of where the drone is located. This helps not only to monitor the progress of the interceptor drone during a mission, but also in situations where the drone is unable to fly back to the base and needs to be recovered from its last known location.

Settings Page

The Settings page can be accessed from the drone’s Status page. On the Settings page, users can select different configurations for the drone and view a number of graphs displaying useful information such as battery drain levels.

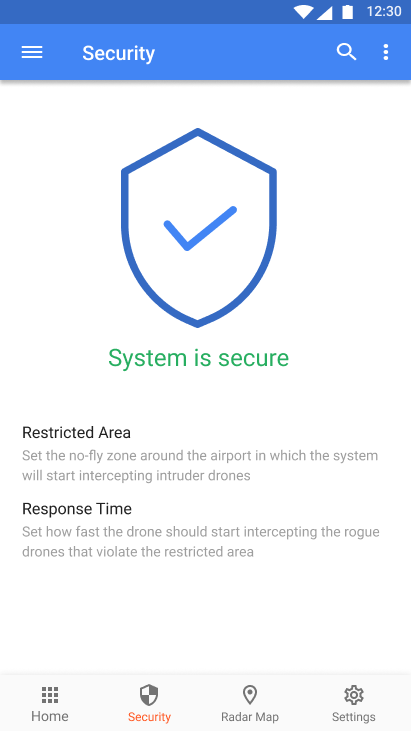

Security Page

This page can be accessed from the Home page by clicking on the Security page button in the bottom navigation menu. From this page users can change different settings which are related to the security of the protected asset such as an airport or a governmental institution. For example, the no-fly zone coordinates can be configured from here together with the sensitivity levels for the whole detection system.

Radar Map Page

This page can be accessed from the Home page by clicking on the Radar Map page button in the bottom navigation menu. On this page, users can view the location of all the drones displayed on a radar-like map. This is different from the drone’s Map page, since it shows where all the drones in the fleet are positioned and gives a better overall view of the whole system. It also displays all the airplane-related traffic around the airport.

System Settings Page

This page can be accessed from the Home page by clicking on the Settings page button in the bottom navigation menu. From this page users can change different settings which are not related to the security of the system. These can be things such as assigning different identifiers for the drones to be displayed on the home page and also changing the configuration settings for the communication between the drones and the ground stations.

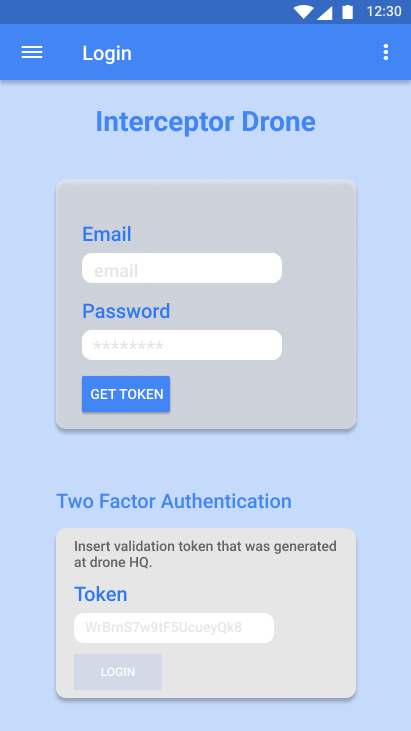

Login Page

This page is shown to the user each time the application is opened. It requires an email and password. If these credentials are correct, the user is then expected to enter a token which is generated at the base station (either by a token generator provided with the system or by software running in a secured environment, such as a computer which is not connected to the internet). Once the token has been entered, the user can proceed to log in. This allows access to all the other application features. This way we have two-factor authentication and can ensure a greater level of security overall. For more details about how the communication between the drone and the base station will be secured, please read the System Security section.
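The two-step check described above can be sketched as follows. This is a minimal illustration using only the Python standard library, not the application's actual login code: the function names, the PBKDF2 iteration count, and the six-digit token derived from a shared secret and a time step are all assumptions made for the example (a real deployment would more likely use a standard such as TOTP).

```python
import hashlib
import hmac


def hash_password(password: str, salt: bytes) -> bytes:
    # PBKDF2 from the standard library; the iteration count is an assumption.
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)


def station_token(secret: bytes, time_step: int) -> str:
    # Simplified time-based token (hypothetical scheme): HMAC over the
    # current time step, reduced to six decimal digits.
    digest = hmac.new(secret, time_step.to_bytes(8, "big"), hashlib.sha256).digest()
    return f"{int.from_bytes(digest[:4], 'big') % 1_000_000:06d}"


def login(password: str, token: str, stored_hash: bytes, salt: bytes,
          secret: bytes, time_step: int) -> bool:
    # Factor 1: the password the user knows, compared in constant time.
    if not hmac.compare_digest(hash_password(password, salt), stored_hash):
        return False
    # Factor 2: the token generated at the base station.
    expected = station_token(secret, time_step)
    return hmac.compare_digest(token.encode(), expected.encode())
```

Here `secret` stands for a value shared between the token generator and the login server, and `time_step` for a counter such as the current time divided by 30 seconds; both are illustrative choices.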

User Testing

For testing whether the application and its user interface are intuitive and easy to use, a questionnaire was built. The main purpose for this was to see what needed to be improved for the application to be useful for the end users. We will provide both the questionnaire and its results once the user testing phase is finished for the application.

We used Google Forms to build a questionnaire in order to understand more not only about the application but about the system as a whole. The form can be found at the following link together with the results. From analysing the data, it was clear that many people working in the industry are well aware of the problems concerning rogue drones flying around airports, which is the main focus of our project. For the application, although the majority had favorable opinions, there is still room for improvement. In the future, more options can be added to both the security screen and the settings pages. Moreover, the radar map can be improved to provide more accurate data and to allow the user to better customize certain options. A search function for locating flights would also be a nice addition to this screen. All in all, we do think that there are benefits to having the application for the drone interception system.

Security

System Security

This section provides more details as to how the whole system (base station and drone fleet) will be secured. For passing data between the base station and the drone the Transport Layer Security (TLS) protocol will be used. This is widely used over the internet for applications which involve securing sensitive information such as bank payments. It is a cryptographic protocol designed to provide communication security over a computer network. The TLS protocol aims primarily to provide privacy and data integrity between two or more communicating computer applications such as a server and a client.

When the TLS protocol is used to secure the data being communicated, the system uses a symmetric key for encryption and decryption. Moreover, the identity of the communicating parties (in this case the base station and the drones) can be authenticated using public-key cryptography. Public-key cryptography entails that every party in the network has a public key which is known by everyone. Associated with this public key, each party also has a private key which must be kept secret. For this specific case, the base station will have a private key generated upon installation of the system (at some airport or other facility). This key will be kept in cold storage offline, protected from any entity that might be interested in stealing it, and only accessed when required. Each drone will also be assigned a private key to be used in the communication protocol, which will be stored on the drone’s internal storage. Since the drones will not have an external IP address and can only be accessed from the base station’s servers, this setup limits outside access to the drones. When a drone leaves the base station, a TLS handshake between the drone and the server can be performed so that the connection is established for the duration of the mission and data can be securely passed back.
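As a hedged sketch of what the base station side of such a setup might look like, the function below builds a TLS server context with Python's standard `ssl` module and requires each connecting drone to present a certificate. The function name and the file path parameters are hypothetical; the choice of TLS 1.2 as the minimum version is an assumption, not something specified in this report.

```python
import ssl


def make_base_station_context(cert_file=None, key_file=None, ca_file=None):
    """Build a server-side TLS context that also authenticates the client.

    All three paths are placeholders: the station's certificate and private
    key, and the CA certificate used to verify the drones' certificates.
    """
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # Assumption: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Require the drone to present a certificate during the handshake.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

The drone side would use a matching client context that loads the drone's own certificate and key, so that both parties are authenticated before any mission data is exchanged.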

Finally, using the TLS protocol for passing data between the drones and the base station will also provide reliability, because each message transmitted includes a message integrity check using a message authentication code to prevent undetected loss or alteration of the data during transmission. Therefore, messages that do not match the integrity check will be discarded by the server and resent by the drone. Similarly, all the data that is sent from the base station’s servers (only in case of a mission abort) will also be encrypted. Once the data reaches the drone, it will be checked, and if it does not match the signature of the server it will not be executed.
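TLS performs this integrity check internally, so the application does not have to implement it. Purely to illustrate the idea of a message authentication code, the sketch below appends an HMAC-SHA256 tag to a message and rejects any packet whose tag does not match. The function names and the packet layout (message followed by a 32-byte tag) are assumptions made for the example and do not reflect the actual TLS record format.

```python
import hashlib
import hmac

TAG_LENGTH = 32  # size of an HMAC-SHA256 tag in bytes


def protect(key: bytes, message: bytes) -> bytes:
    # Append an authentication tag computed over the message.
    tag = hmac.new(key, message, hashlib.sha256).digest()
    return message + tag


def verify(key: bytes, packet: bytes):
    """Return the message if its tag is valid, otherwise None.

    A None result corresponds to discarding the message; the receiver
    would then wait for the sender to transmit it again.
    """
    if len(packet) < TAG_LENGTH:
        return None
    message, tag = packet[:-TAG_LENGTH], packet[-TAG_LENGTH:]
    expected = hmac.new(key, message, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    return message if hmac.compare_digest(tag, expected) else None
```

Any change to the message in transit alters the expected tag, so an altered packet is detected and dropped rather than acted upon.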

Using the Transport Layer Security (TLS) protocol for communicating data between the base station and the drone fleet will make the overall system secure and protected from any hacker that might want to gain access to our system and pass commands to the drone or other malicious data to the servers.

Application Security

The drone can be designed to operate autonomously or with a human controlling it. Both of these methods have their own pros and cons. If we were to design the drone to operate autonomously, it could completely prevent the possibility of someone hacking into it remotely and stopping it from intercepting an attacking drone. Even though this operation mode would be preferred, it means that if something were to go wrong and the drone started making dangerous maneuvers, human beings could be endangered. Because of this, the main legislation governing the essential health and safety requirements for machinery at EU level requires the implementation of an emergency stop button in such types of machines [16], which makes the option of our system being completely autonomous undesirable.

If the drone is to be controlled by a user, what this user can control is really important. The end goal of such an interceptor drone is to be as autonomous as possible, leaving as little as possible in the hands of humans. But what should the interceptor drone system do once it has detected an unwanted drone inside the operating area of the airport? Should it intercept immediately or wait for a confirmation from an airport employee?

Drone flights are unacceptable in any airport area, so the goal is to remove an intruding drone as soon as possible. Requiring a human to confirm the interception of the attacking drone would add an extra step to the whole process, which could cause unwanted delays and cost the airport a lot of money, as the Gatwick incident has shown. The best approach is for the interceptor drones to try to intercept the target as soon as it is detected, since every drone flying within the airport perimeter can be considered unwanted (an enemy), whoever is operating it.

In the meantime, the system should notify its user that a target has been detected. This way the responsible employee can also inform Air Traffic Control (ATC) to hold incoming and departing planes. While this can cause inconvenience and create more queues at the airport, it is the best available option. If a real emergency arises because planes have been holding in the pattern for too long, ATC can coordinate with the pilots for a safe landing. The alternative could be too costly, as a drone could hit the engine of an airplane with devastating results. When a drone is detected by our system, a human will monitor the situation and pass this information to ATC. This is to avoid acting on a false positive: pausing all air traffic would cause chaos and incur big losses for the airport, so a false alarm must be avoided at all costs.

The only control on the drone must be the emergency stop button. Because this button is of high importance, as it can render the whole system useless if activated, it must be completely secured against any outside party trying to hack into it. Only putting a stop button in the application that we designed would not be secure enough, because a backdoor could be found and this would compromise the whole system. That is why a physical button is added to the system, either in the ATC tower or at the drone fleet HQ. This means that access to only one of the two buttons (the one in the application or the physical one) is not enough to stop the system, as both need to be pressed to stop the operation. Only a handful of people authorized by the airport would have the power to stop these drones, while anyone trying to maliciously disable the system would need physical access to the airport, which would be hard to achieve given the airport's high level of security.
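The stop logic can be sketched as a simple two-confirmation rule. The example below assumes that the two confirmations are the stop request in the application and the physical button; the class and method names are hypothetical and only illustrate the rule that neither confirmation alone stops the drones.

```python
from dataclasses import dataclass


@dataclass
class EmergencyStop:
    """Hypothetical two-confirmation emergency stop."""
    app_requested: bool = False
    physical_pressed: bool = False

    def request_from_app(self, user_authorized: bool) -> None:
        # Assumption: only authorized, logged-in users can request a stop.
        if user_authorized:
            self.app_requested = True

    def press_physical_button(self) -> None:
        # Pressing requires physical access to the ATC tower or fleet HQ.
        self.physical_pressed = True

    def should_stop(self) -> bool:
        # Neither confirmation alone is sufficient.
        return self.app_requested and self.physical_pressed
```

With this rule, an attacker who finds a backdoor in the application still cannot halt the drones without also reaching the physical button inside the airport.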

State of the Art

In this section the State of the Art (or SotA) concerning our project will be discussed.

Tracking/Targeting

To target a moving object from a moving drone, a way of tracking the target is needed. Many articles on how to do this, or on related problems, have been published:

- Moving Target Classification and Tracking from Real-time Video. This paper describes a way of extracting moving targets from a real-time video stream and classifying them into predefined categories. This is a useful technique which can be deployed on a ground station or on a drone to extract relevant data about the target. [17]

- Target tracking using television-based bistatic radar. This article describes a way of detecting and tracking airborne targets from a ground based station using radar technology. In order to determine the location and estimate the target’s track, it uses the Doppler shift and bearing of target echoes. This allows for tracking and targeting drones from a large distance. [18]

- Detecting, tracking, and localizing a moving quadcopter using two external cameras. In this paper a way of tracking and localizing a drone using the bilateral view of two external cameras is presented. This technique can be used to monitor a small airspace and detect and track intruders. [19]

- Aerial Object Following Using Visual Fuzzy Servoing. In this article a technique is presented to track a 3D moving object from another UAV based on the color information from a video stream with limited information. The presented technique is able to do following and pursuit, flying in formation, as well as indoor navigation. [20]

- Patent for an interceptor drone tasked to the location of another tracked drone. This patent proposes a system which includes LIDAR detection sensors and dedicated tracking sensors. [21]

- Patent for detecting, tracking and estimating the speed of vehicles from a moving platform. This patent proposes an algorithm operated by the on-board computing system of an unmanned aerial vehicle that is used to detect and track vehicles moving on a roadway. The algorithm is configured to detect and track the vehicles despite motion created by movement of the UAV. [22]