PRE2017 4 Groep3 planning

Week 1

Meeting on 23-4-2018 and 24-4-2018

- We brainstormed about several ideas for our project and chose the best one, which is described at the top.

- We discussed how to realize this project and what the wiki should look like.

AP For 26-4-2018

Pieter

- Do research into the Users of the project

Marijn

- Do research into TensorFlow image recognition

Tom

- Do research into Environment mapping

Stijn

- Do research into voice recognition

- Fix wiki

Rowin

- Do research into privacy matters

Meeting on 26-4-2018

- Discussed sources.

- Specified the chosen subject, users, their requirements, goals, milestones and deliverables.

- Prepared the feedback meeting.

- Discussed interview questions

AP For 30-4-2018

Everyone

- Make a summary for state of the art.

Week 2

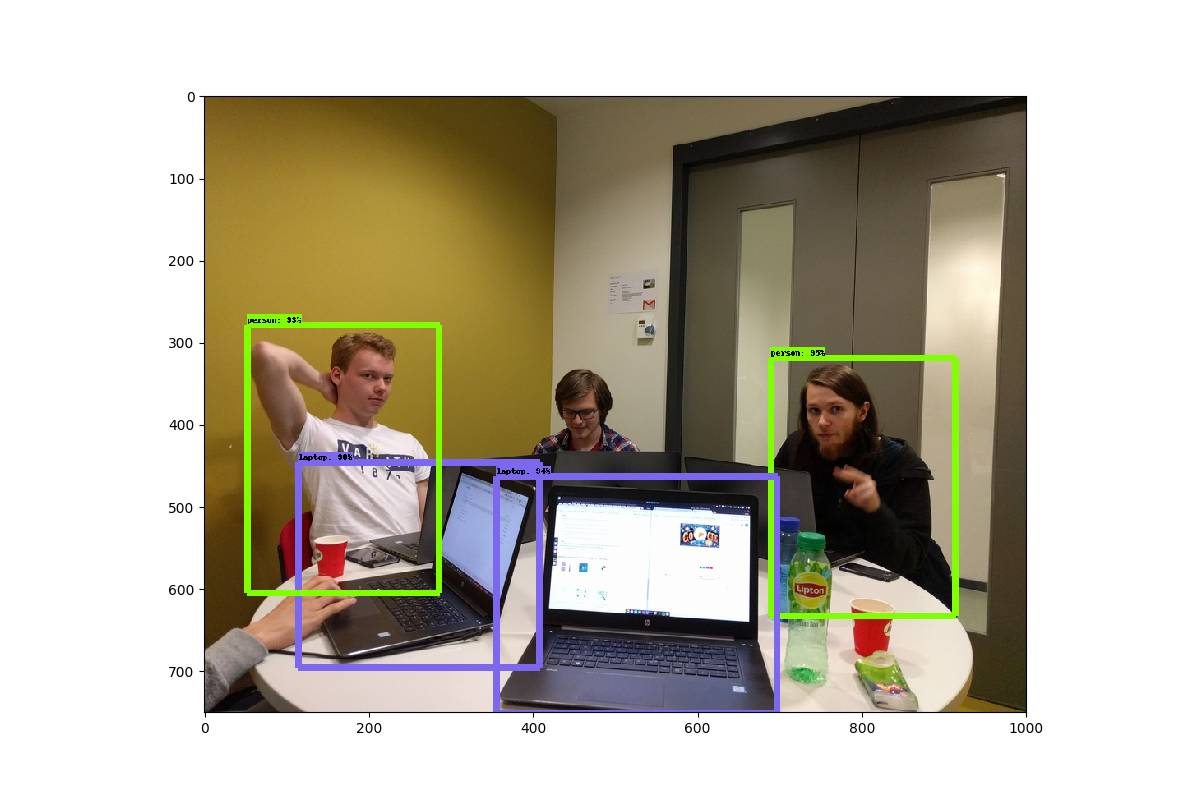

We implemented object detection on a static image, based on the TensorFlow: Object Detection Repository.

The output can be seen in the image below.

We also implemented it successfully on a live video feed, using this tutorial: live object detection. The frame rate is still low and the delay is still quite large, which needs to be fixed.

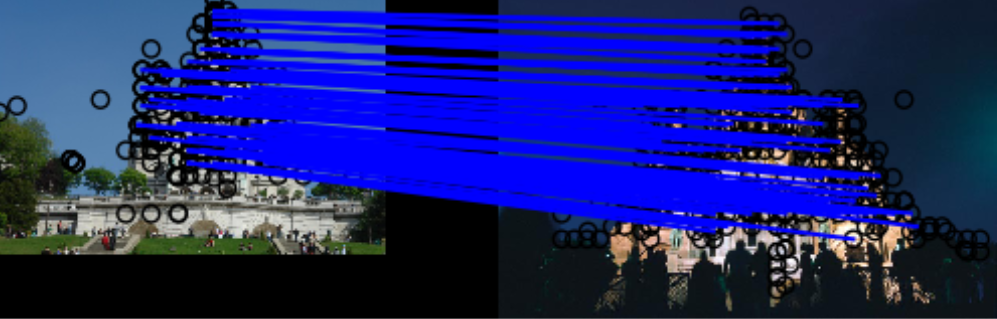

We also implemented DELF feature extraction, which detects point features in images that can be used to match two images containing the exact same object. An example match can be seen in the image below, where we used a pre-trained model for the architecture. In this image, lines are drawn between the matched feature points of the two images.
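The matching step can be illustrated with a minimal sketch. It assumes each image has already been reduced to a list of descriptor vectors, and it pairs every descriptor in the first image with its nearest neighbour in the second, keeping the pair only if the distance is small enough. The function name `match_descriptors` and the threshold `max_distance` are hypothetical and chosen for illustration; this is not the DELF code itself, which additionally verifies matches geometrically.

```python
import math


def match_descriptors(desc_a, desc_b, max_distance=0.8):
    """Pair each descriptor in desc_a with its nearest descriptor in desc_b.

    desc_a, desc_b: lists of equal-length numeric vectors (assumed input format).
    Returns a list of (index_in_a, index_in_b) pairs whose Euclidean
    distance is at most max_distance (an illustrative threshold).
    """
    if not desc_b:
        return []
    matches = []
    for i, a in enumerate(desc_a):
        # Find the closest descriptor in the second image.
        j, dist = min(
            ((j, math.dist(a, b)) for j, b in enumerate(desc_b)),
            key=lambda pair: pair[1],
        )
        # Reject weak matches so unrelated points are not connected.
        if dist <= max_distance:
            matches.append((i, j))
    return matches
```

Each returned pair corresponds to one line drawn between two matched feature points.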

Meeting on 30-4-2018

- Weekly feedback meeting

- We subdivided tasks and worked on them as follows

- Get familiar with voice control (Tom and Stijn)

- Get familiar with object detection (Pieter and Marijn)

- Make and distribute a survey for user research (Rowin)

AP For 3-5-2018

Pieter and Marijn

- Investigate object detection

Tom and Stijn

- Investigate voice control

Rowin

- Analyze survey

Meeting on 3-5-2018

- Find more potential users and discuss results of the user research on the wiki (Rowin)

- Implementing DELF (Marijn and Pieter)

- Research state of the art of voice control to implement a framework (Stijn and Tom)

AP For 7-5-2018

Tom and Stijn

- Fix audio on Ubuntu

Rowin

- Find more survey participants

Marijn

- Implement object detection on live video feed

Pieter

- Explore DELF Point feature extraction and matching

Week 3

Meeting on 7-5-2018

- Weekly feedback meeting

- Completed user research (first version) (Rowin)

- Implementing new voice commands on framework (Tom and Stijn)

- Point feature detection implementation (Pieter and Marijn)

AP For 14-5-2018

Pieter and Marijn

- Be able to register new objects

Tom and Stijn

- Implement COCO database

- Look into security

- Create new voice commands

Rowin

- Find a study on commonly lost household items

- Research object location description

- Research uses for people with a visual impairment

Week 4

Meeting on 14-5-2018 and 17-5-2018

- We have specified what needs to be done and subdivided the tasks as seen below

- We have decided to stop with the voice control (for now) as we need to focus on one thing

Goals for week 5:

- Create a database of items that keeps track of where each item was last seen

- Implement a web application as the interface, which reads the database and returns a list of all the times that an item has been seen, together with its location

- Research the best way to communicate a location to the user (either through photo, a relative location or something else)
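The database goal above can be sketched as follows. This is a minimal illustration using SQLite from the Python standard library; the table name `sightings`, its columns, and the helper functions are assumptions made for the example, not the schema we settled on. Timestamps are assumed to be ISO 8601 strings, so that sorting them as text also sorts them by time.

```python
import sqlite3


def open_db(path=":memory:"):
    """Open the database and create the (hypothetical) sightings table."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS sightings (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               item TEXT NOT NULL,
               location TEXT NOT NULL,
               seen_at TEXT NOT NULL
           )"""
    )
    return conn


def record_sighting(conn, item, location, seen_at):
    """Store one detection of an item at a location and time."""
    conn.execute(
        "INSERT INTO sightings (item, location, seen_at) VALUES (?, ?, ?)",
        (item, location, seen_at),
    )
    conn.commit()


def sightings_for(conn, item):
    """Return all (seen_at, location) rows for an item, newest first."""
    rows = conn.execute(
        "SELECT seen_at, location FROM sightings "
        "WHERE item = ? ORDER BY seen_at DESC, id DESC",
        (item,),
    )
    return rows.fetchall()


def last_seen(conn, item):
    """Return the most recent (seen_at, location) for an item, or None."""
    rows = sightings_for(conn, item)
    return rows[0] if rows else None
```

A web interface could call `sightings_for` to list the history of an item and `last_seen` to answer the question of where it is now.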

Role division

- Marijn : Web Team

- Pieter : Software Team

- Stijn : Software Team

- Tom : User Research Team

- Rowin: Wiki Team

Week 5

ToDo

- Deploy website to VPS: http://ol.pietervoors.com

- Training own dataset

- Tracking of the same instance of an object

- Way of returning to the user where objects are

Deliverable and attention points for the remainder of the project:

A prototype system with multiple cameras in a home that are connected to a computer. The computer runs a program that keeps track of where items are. Because of the current limitations of the basic object detection setup, these items will be limited to a (to-be-)specified set. There will be an interface through which the user can request information on where their item is. The system should support multiple cameras, multiple instances of the same object (for example, multiple pairs of glasses) and items that have gone out of sight of the cameras. To start, the user interface will be a graphical user interface.

The main attention points for the project are the following:

- Tracking of instances of items. When there are multiple pairs of glasses, for example, the system needs to figure out which pair is which. Items being carried between different rooms also play a role here.

- The user interface, which should be made to provide better user interaction, making the system more user-friendly. The way in which the location info is presented to the user plays a big role in this.

- Filtering out or handling wrong detections, where the object detection detects items that are not there. The first approach to this will be to train on our own dataset.
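The first and third attention points can be illustrated with a small sketch. It assumes detections arrive as `(label, score, box)` tuples with boxes given as `(x1, y1, x2, y2)`; it drops low-confidence detections with a score threshold and then assigns each remaining detection to a known instance with the same label and the largest box overlap (intersection over union). Note that the score threshold is a simpler stand-in for illustration, not the approach of training on our own dataset. The names `update_instances`, `min_score` and `min_iou` are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def update_instances(instances, detections, min_score=0.5, min_iou=0.3):
    """Update known instances in place with the detections of one frame.

    instances: dict mapping instance id -> (label, box).
    detections: list of (label, score, box) tuples (assumed format).
    """
    next_id = max(instances, default=-1) + 1
    unmatched = set(instances)  # instances not yet claimed in this frame
    for label, score, box in detections:
        if score < min_score:
            continue  # treat low-confidence detections as wrong detections
        best_id, best_overlap = None, min_iou
        for inst_id in sorted(unmatched):
            inst_label, inst_box = instances[inst_id]
            if inst_label != label:
                continue
            overlap = iou(box, inst_box)
            if overlap >= best_overlap:
                best_id, best_overlap = inst_id, overlap
        if best_id is None:
            # No sufficiently overlapping instance: register a new one.
            best_id = next_id
            next_id += 1
        else:
            unmatched.discard(best_id)
        instances[best_id] = (label, box)
    return instances
```

Overlap alone cannot follow an item that is carried to another room, so this only covers the case of objects that stay roughly in place between frames.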

Week 6

- We have created a user interface at the website http://ol.pietervoors.com.

- The user interface is able to return pictures of last seen objects

- We have implemented basic tracking, which can recognize, to some extent, whether objects are the same.

- We tried training the network for additional objects. However, it is not possible for us to do this efficiently.

Week 7

- We improved the website interface, with bounding boxes, tables, etc.

- We improved the tracking of objects.

- We worked on multithreading to implement multiple cameras.
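The multithreading idea can be sketched as one reader thread per camera, all pushing frames into a shared queue that a single consumer processes. This is a minimal illustration under stated assumptions: each camera is modelled as a hypothetical zero-argument callable that returns a frame, or `None` when the source ends, so the example runs without camera hardware. `fake_camera` is a stand-in for a real capture device.

```python
import queue
import threading


def camera_worker(camera_id, read_frame, out_queue):
    """Read frames from one camera until the source is exhausted."""
    while True:
        frame = read_frame()
        if frame is None:  # source ended or camera disconnected
            break
        out_queue.put((camera_id, frame))


def run_cameras(sources):
    """Run one thread per camera and collect all (camera_id, frame) pairs.

    sources: dict mapping camera id -> zero-argument callable that
    returns the next frame or None (assumed interface).
    """
    out_queue = queue.Queue()
    threads = [
        threading.Thread(target=camera_worker, args=(cid, fn, out_queue))
        for cid, fn in sources.items()
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    results = []
    while not out_queue.empty():
        results.append(out_queue.get())
    return results


def fake_camera(frames):
    """Stand-in camera that yields the given frames and then None."""
    iterator = iter(frames)
    return lambda: next(iterator, None)
```

Frames from different cameras can interleave in any order, but the frames of a single camera keep their original order because each camera has exactly one producer thread.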

Week 8

- Prepared the demo for the final presentation

- Prepared the final presentation

- Fixed some bugs in the tracking

- Added the favorites feature in the UI

Week 9

- Final presentation

- Finish wiki and peer review