Mobile Robot Control 2024 Wall-E: Difference between revisions





=== Instructions ===

#Create a folder called <code>exercise1</code> in the <code>mrc</code> folder. Inside the <code>exercise1</code> folder, create an <code>src</code> folder. Within the <code>src</code> folder, create a new file called <code>dont_crash.cpp</code>.

#Add a <code>CMakeLists.txt</code> file to the <code>exercise1</code> folder and set it up to compile the executable <code>dont_crash</code>.


==== Coen Smeets: ====

<blockquote>
This section has been written with the following technique. First, I describe in rudimentary terms what should be in a paragraph and let Copilot convert it to Markdown (the README.md file on git). I then check that the result is still correct. Afterwards, I let Copilot convert the Markdown to MediaWiki markup for this page, where I double-check it and manually correct any incorrect formatting. Is this method allowed within the course?</blockquote>The provided C++ code is designed to prevent a robot from colliding with obstacles by using laser scan data and odometry data. Here's a step-by-step explanation of how it works:

#'''Initialization''': The code starts by initializing the ''emc::IO'' object for input/output operations and the ''emc::Rate'' object to control the loop rate. It also initializes some variables for later use.

#'''Main Loop''': The main part of the code is a loop that runs as long as the ''io.ok()'' function returns ''true''. This function typically checks whether the program should continue running, for example, whether the user has requested to stop the program.


===== Improvements =====

This method also checks the measurement points at the sides of the robot, which is not optimal if the robot needs to pass through a narrow hallway.

==== Niels Berkers: ====

The code uses the standard library header <code><iostream></code>, which is used to print output to the terminal. Furthermore, the <code><emc/io.h></code> header provides the structures and functions to read data from the LiDAR sensor and other hardware information needed for robot movement. Finally, <code><emc/rate.h></code> is used to set the frequency of the while loop.

===== Implementation: =====

After the headers are included, the main function creates an object of the class <code>emc::IO</code> and sets the loop frequency to 10 Hz. A while loop then runs until the IO object reports that it is no longer ok. Inside the loop, the reference velocity is set so that the robot drives forward at 0.1 m/s. Then, if new laser data is available, the code loops over all indices of the scan data and checks whether any range is within 0.3 meters. If so, the reference velocity is set to 0 in all directions and the robot stops driving.
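A minimal C++ sketch of this full-scan check is given below. It is not the code that was run: the <code>Scan</code> struct is a hypothetical stand-in for the range fields of <code>emc::LaserData</code>, and skipping readings outside <code>range_min</code>/<code>range_max</code> is an addition that the description above does not mention.

```cpp
#include <vector>

// Hypothetical stand-in for the laser scan fields used here; the real
// emc::LaserData is provided by the course framework.
struct Scan {
    float range_min;            // smallest valid range [m]
    float range_max;            // largest valid range [m]
    std::vector<float> ranges;  // one range per beam [m]
};

// Returns true if any valid reading in the whole scan is closer than
// stop_distance (0.3 m in the description above). Readings outside
// [range_min, range_max] are treated as invalid and skipped.
bool obstacleWithin(const Scan& scan, float stop_distance) {
    for (float r : scan.ranges) {
        bool valid = r >= scan.range_min && r <= scan.range_max;
        if (valid && r < stop_distance) {
            return true;
        }
    }
    return false;
}
```

In the main loop, a <code>true</code> result would lead to <code>io.sendBaseReference(0, 0, 0)</code> instead of the forward command.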

===== Improvements: =====

The code can be improved by only looking at a window in front of the robot. This would make it possible to drive parallel and close to a wall, and it would also reduce the number of indices that need to be checked. The code could also be improved by emitting a signal when the robot is too close to a wall, which could be useful in a larger algorithm later on.
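The window improvement could be sketched as follows, assuming (hypothetically) that beam <code>i</code> points at <code>angle_min + i * angle_increment</code> and that 0 rad is straight ahead. The struct and function names are illustrative and not part of the course framework.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Hypothetical stand-in for the scan fields of emc::LaserData.
struct Scan {
    float range_min;
    float range_max;
    float angle_min;        // angle of ranges[0] [rad]
    float angle_increment;  // angle between consecutive beams [rad]
    std::vector<float> ranges;
};

// Checks only the beams within +/- half_width rad of straight ahead (0 rad).
bool obstacleInFront(const Scan& scan, float half_width, float stop_distance) {
    if (scan.ranges.empty()) return false;
    const int n = static_cast<int>(scan.ranges.size());
    // The beam pointing at angle a has index (a - angle_min) / angle_increment.
    int first = static_cast<int>(std::ceil((-half_width - scan.angle_min) / scan.angle_increment));
    int last = static_cast<int>(std::floor((half_width - scan.angle_min) / scan.angle_increment));
    first = std::max(first, 0);
    last = std::min(last, n - 1);
    for (int i = first; i <= last; ++i) {
        float r = scan.ranges[i];
        if (r >= scan.range_min && r <= scan.range_max && r < stop_distance) {
            return true;
        }
    }
    return false;
}
```

With a small <code>half_width</code>, a wall running parallel to the robot falls outside the window, so the robot can drive close to it without stopping.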

==== Joris Janissen: ====

===== Packages Used =====

* <code>emc/io.h</code>: This package provides functionalities for interfacing with hardware, including reading laser and odometry data, and sending commands to control the robot's movement.

* <code>emc/rate.h</code>: This package enables control over the loop frequency, ensuring the code executes at a fixed rate.

* <code>iostream</code>: This package facilitates input/output operations, allowing for the printing of messages to the console.

* <code>cmath</code>: This package provides mathematical functions used in the implementation.

===== Implementation =====

* Initialization: The <code>main()</code> function initializes the IO object, which initializes the input/output layer to interface with hardware, and creates a Rate object to control the loop frequency.

* Data Processing: Within the main loop, the code reads new laser and odometry data from the robot. It then calculates the range of laser scan indices corresponding to a specified angle in front of the robot.

* Obstacle Detection: The code iterates over the laser scan data within the specified range. For each laser reading, it checks if the range value falls within the valid range and if it detects an obstacle within a certain stop distance threshold.

* Action: If an obstacle is detected within the stop distance threshold, the code sends a stop command to the robot and prints a message indicating the detected obstacle and its distance. Otherwise, if no obstacle is detected, the code sends a command to move the robot forward.

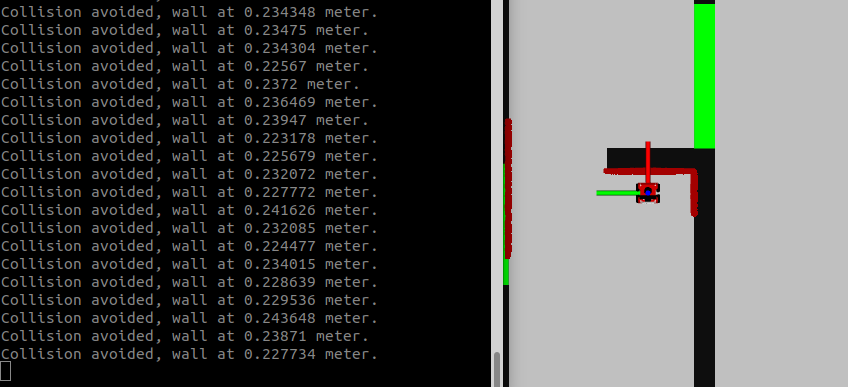

* Loop Control: The loop sleeps for a specified duration to maintain the desired loop frequency using the <code>r.sleep()</code> function.[[File:Result Exercise 1.png|thumb|518x518px]]

===== Improvements =====

# '''Data segmentation:''' The data points can be grouped to reduce computation time.

# '''Collision message:''' The collision message currently floods the terminal; it can be improved by printing it only once.

# '''Rolling average:''' A rolling average of the data points can be taken to filter out outliers and noise.
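The collision-message improvement amounts to printing only when the detection state changes. A minimal sketch with a hypothetical <code>ObstacleReporter</code> helper (not part of the EMC library):

```cpp
#include <iostream>

// Prints a message only when the obstacle state changes, instead of once per
// loop iteration. Hypothetical helper, not part of the emc framework.
class ObstacleReporter {
public:
    // Returns true if a message was printed in this call.
    bool update(bool obstacle_detected, double distance) {
        bool printed = false;
        if (obstacle_detected && !was_detected_) {
            std::cout << "Obstacle detected at " << distance << " m\n";
            printed = true;
        } else if (!obstacle_detected && was_detected_) {
            std::cout << "Path clear again\n";
            printed = true;
        }
        was_detected_ = obstacle_detected;  // remember state for the next call
        return printed;
    }

private:
    bool was_detected_ = false;
};
```

Calling <code>update</code> once per loop iteration keeps the terminal quiet while the robot stands still in front of the same obstacle.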

===== Conclusion =====

This documentation describes an implementation of obstacle avoidance using laser scans on a mobile robot. By using the EMC library and appropriate data processing, the robot can detect obstacles in its path and avoid collisions, although some improvements are still possible.

==== Sven Balvers: ====

===== Implementation: =====

Header Files: The code includes the header files emc/io.h and emc/rate.h, which contain declarations of the classes and functions used in the program, as well as the standard input/output stream header (iostream).

Main Function: The main() function serves as the entry point of the program.

Object Initialization: Inside main(), objects of the classes emc::IO, emc::LaserData, emc::OdometryData, and emc::Rate are declared. These classes are part of the framework for controlling the robot.

Loop: The program enters a while loop that runs as long as io.ok() returns true, which indicates that the I/O layer is functioning correctly and the program is still connected.

Control Commands: Within the loop, a reference command is sent to the robot's base at a fixed rate of 5 Hz using io.sendBaseReference(0.1, 0, 0). This command tells the robot to move forward at 0.1 m/s.

Data Reading: The loop reads laser data and odometry data from the robot's sensors using io.readLaserData(scan) and io.readOdometryData(odom).

Obstacle Detection: If new laser or odometry data is available, the code checks the laser scan data for any obstacle closer than 0.3 m. If an obstacle is detected, it sends a stop command to the robot's base using io.sendBaseReference(0, 0, 0), which sets all velocity components to 0.

Rate Control: The loop sleeps (r.sleep()) to maintain the specified loop rate.

===== Improvements: =====

There are multiple approaches to reduce the effect of measurement noise. We can take a moving average of the samples, but this introduces a delay in the data. Alternatively, we can merge points that lie close to one another into a single point by averaging them, which does not introduce a delay.
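The delay-free alternative, merging neighbouring points, could look like the sketch below. It assumes (hypothetically) that consecutive beams belong to the same object when their ranges differ by less than a tolerance, and replaces each such group by its mean angle and mean range. All names are illustrative.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct MergedPoint {
    double angle;  // mean beam angle of the group [rad]
    double range;  // mean range of the group [m]
};

// Merges consecutive readings whose ranges differ by less than `tolerance`
// into a single averaged point. Only the current scan is used, so no delay.
std::vector<MergedPoint> mergeNeighbours(const std::vector<double>& ranges,
                                         double angle_min, double angle_increment,
                                         double tolerance) {
    std::vector<MergedPoint> out;
    std::size_t i = 0;
    while (i < ranges.size()) {
        double sum_r = ranges[i];
        double sum_a = angle_min + i * angle_increment;
        std::size_t count = 1;
        std::size_t j = i + 1;
        // Extend the group while the next reading is close to the previous one.
        while (j < ranges.size() && std::fabs(ranges[j] - ranges[j - 1]) < tolerance) {
            sum_r += ranges[j];
            sum_a += angle_min + j * angle_increment;
            ++count;
            ++j;
        }
        out.push_back({sum_a / count, sum_r / count});
        i = j;
    }
    return out;
}
```

Because the grouping only compares neighbouring beams, a long wall seen at a shallow angle can end up as one group; a maximum group size would limit this.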

Another improvement that can be made is to limit the vision of the robot to a certain angle and not the full range from -pi to +pi. This will ensure the robot is able to pass through a narrow hall, which is important for the final code. | |||

== Exercise 1 (practical) ==

Go to your folder on the robot and pull your software.

===== On the robot-laptop, open rviz. Observe the laser data. How much noise is there on the laser? What objects can be seen by the laser? Which objects cannot? What do your own legs look like to the robot? =====

The noise on the signal from the robot's LiDAR is difficult to quantify, as we noticed while testing the "dont_crash" program. When the robot drives straight towards an object, it stops abruptly, but when it approaches a wall at a sharp angle, it stutters slightly. This happens because only a limited number of data points is obtained in such cases, which makes the sensor noise apparent. The robot can only see objects at the height of the LiDAR. The LiDAR's measuring points are spaced at a fixed angle from each other, so the LiDAR can have problems detecting thin objects such as chair legs. Objects it can observe well are flat surfaces. The robot's scanning range does not cover a full 360 degrees around itself; instead, it sees a 180-degree angle centered at the front.

===== Take your example of don't crash and test it on the robot. Does it work like in simulation? Why? Why not? (Choose the most promising version of don't crash that you have.) =====

See the implementation of Coen Smeets.

===== If necessary, make changes to your code and test again. What changes did you have to make? Why? Was this something you could have found in simulation? =====

We modified the code so that the robot does not stop when it reaches a wall but instead turns left until it has enough space to resume driving. Noticeably, the robot starts and stops repeatedly, because it can only drive a short distance before it has to stop again. To prevent this, the robot should turn slightly longer so that it keeps moving continuously. We could have seen this in simulation; however, we only wrote this code to try it out during the session with the robot.
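One way to make the robot "turn slightly longer" is hysteresis: start turning when the front clearance drops below one distance, and only resume driving once it exceeds a larger one. The sketch below is an illustration, not the code that was tested; <code>Command</code>, the thresholds, and the turn rate of 0.5 are assumptions, and a positive <code>va</code> is assumed to mean turning left.

```cpp
// Hypothetical velocity command, mirroring the (vx, vy, va) arguments of
// io.sendBaseReference in the course framework.
struct Command {
    double vx, vy, va;
};

// Drive forward until the front clearance drops below stop_distance, then
// turn left until it exceeds resume_distance. Choosing resume_distance larger
// than stop_distance (hysteresis) keeps the robot turning slightly longer,
// so it does not alternate between driving and stopping.
class TurnLeftController {
public:
    TurnLeftController(double stop_distance, double resume_distance)
        : stop_(stop_distance), resume_(resume_distance) {}

    Command update(double front_clearance) {
        if (!turning_ && front_clearance < stop_) {
            turning_ = true;
        } else if (turning_ && front_clearance > resume_) {
            turning_ = false;
        }
        if (turning_) return {0.0, 0.0, 0.5};  // rotate left in place (assumed sign)
        return {0.1, 0.0, 0.0};                // drive forward
    }

    bool turning() const { return turning_; }

private:
    double stop_;
    double resume_;
    bool turning_ = false;
};
```

Between the two thresholds the controller keeps doing whatever it was already doing, which is exactly what removes the start-stop behaviour.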

===== Take a video of the working robot and post it on your wiki. =====

https://tuenl-my.sharepoint.com/:v:/g/personal/s_p_balvers_student_tue_nl/Ed5AHGGWqIRDmUZxi6mSaYsBHEZ2ZXFUevBKeaYkQGoVeQ

=== Answers: ===

==Local Navigation==

=== Artificial Potential Field ===

''Make sure to add citations to the book and the slides (cite with wiki references, see PRM)''

=== Dynamic Window Approach ===

''To be added''

==Global Navigation==

===Probabilistic Road Map===

<blockquote>It must be noted that this section has been heavily influenced by Coen Smeets's BEP report. Ideas and abstracts have been taken from this report, as it was written by a group member.<ref>Smeets, C. (2023). Active Exploration with Occupancy Grid Mapping for a Mobile Robot. ''Bachelor’s final Project (2022-2023) DC2023.054''. https://www.overleaf.com/read/vjscmwgpvkdn#47a804</ref></blockquote>

''Probabilistic Road Maps'', henceforth denoted as ''PRM'', are a method of creating a network in which a dedicated algorithm can find the shortest route. To be a good network for navigating a mobile robot, the network has to be a correct representation of the environment in which safety is of importance: every edge between nodes must be safe. There are several methods to create a network; however, Tsardoulias et al. (2016) compared several path planning techniques and concluded that space sampling methods are the most effective in two-dimensional ''occupancy grid maps (OGMs)''.<ref>Tsardoulias, E. G., Iliakopoulou, A., Kargakos, A., & Petrou, L. (2016). A review of global path planning methods for occupancy grid maps regardless of obstacle density. ''Journal of Intelligent & Robotic Systems'', ''84''(1–4), 829–858. <nowiki>https://doi.org/10.1007/s10846-016-0362-z</nowiki></ref> In this course, the environment data has already been converted to an occupancy grid map. Space sampling shows a high success rate, small execution times, and short paths without excessive turns.

For space sampling, the Halton sequence technique presented in Tsardoulias et al. (2016) has been adapted to create a network, G. The network is a simplified representation of the space in the OGM that the robot can move through. The Halton sequence combines the advantages of random sampling and uniform sampling. In random sampling, every cell can be sampled independently of its location, ensuring an unbiased representation. Uniform sampling, on the other hand, ensures that the sampled points are evenly distributed. The Halton sequence therefore offers the benefits of both approaches simultaneously. The network consists of nodes, the sampled points in the OGM, and links between nodes, the possible paths. The Halton sequence has low discrepancy and thus appears random while being deterministic.<ref name=":0">Halton, J. H. (1964). Algorithm 247: Radical-inverse quasi-random point sequence. ''Communications of the ACM'', ''7''(12), 701–702. <nowiki>https://doi.org/10.1145/355588.365104</nowiki></ref> It samples the space quasi-randomly but with an even distribution. The space is sampled in 2D by using sequences with bases 2 and 3. The higher the base, the more computationally demanding the calculations are; thus, base 3 is chosen as a trade-off between spreading the nodes evenly and computational cost.<ref name=":0" /> The sequences are subsequently scaled to the area covered by the ''OGM'' using the number of rows and columns for the x and y coordinates, respectively, and the resolution of the map.
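The two-dimensional Halton sequence with bases 2 and 3 can be sketched in C++ as below. The function names are hypothetical, and the scaling takes the map extent in cells along x and y plus the resolution, so the caller decides which of rows and columns maps to which axis.

```cpp
#include <utility>
#include <vector>

// Radical inverse of `index` in the given base: the base-b digits of index
// are mirrored around the decimal point, e.g. base 2: 1 -> 0.5, 2 -> 0.25, 3 -> 0.75.
double radicalInverse(unsigned index, unsigned base) {
    double result = 0.0;
    double fraction = 1.0 / base;
    while (index > 0) {
        result += (index % base) * fraction;
        index /= base;
        fraction /= base;
    }
    return result;
}

// Generates n 2D Halton points (base 2 for x, base 3 for y), scaled to a map
// of x_cells by y_cells cells with the given resolution [m/cell].
std::vector<std::pair<double, double>> haltonNodes(unsigned n, unsigned x_cells,
                                                   unsigned y_cells, double resolution) {
    std::vector<std::pair<double, double>> nodes;
    nodes.reserve(n);
    for (unsigned i = 1; i <= n; ++i) {  // start at 1 to skip the point (0, 0)
        double x = radicalInverse(i, 2) * x_cells * resolution;
        double y = radicalInverse(i, 3) * y_cells * resolution;
        nodes.emplace_back(x, y);
    }
    return nodes;
}
```

Because the sequence is deterministic, the same map always yields the same candidate nodes, which makes debugging the network easier.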

The created two-dimensional Halton sequence contains the candidate locations of nodes within the OGM. However, each node is additionally checked to determine whether it lies within a cell that is considered free. This check is necessary because the area spanned by the free cells can still contain occupied cells. Nodes should also not be placed in free cells close to occupied cells, where the robot would still collide with the obstruction. Free cells close to occupied cells are found by 'inflating' the occupied cells. This process iterates over each pixel in the map and, if the pixel is part of an obstacle, marks the surrounding pixels within a specified radius as part of the obstacle as well.
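A minimal sketch of the inflation step and the free-cell check, assuming a hypothetical <code>Grid</code> type in which <code>true</code> marks an occupied cell and the radius is given in cells:

```cpp
#include <vector>

// Occupancy grid: true = occupied, indexed as grid[row][col].
using Grid = std::vector<std::vector<bool>>;

// Marks every cell within `radius` cells (Euclidean) of an occupied cell as
// occupied, so nodes are not placed where the robot would still collide.
Grid inflate(const Grid& grid, int radius) {
    Grid out = grid;
    const int rows = static_cast<int>(grid.size());
    const int cols = rows > 0 ? static_cast<int>(grid[0].size()) : 0;
    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            if (!grid[r][c]) continue;  // only original obstacles are inflated
            for (int dr = -radius; dr <= radius; ++dr) {
                for (int dc = -radius; dc <= radius; ++dc) {
                    if (dr * dr + dc * dc > radius * radius) continue;  // outside the disc
                    int nr = r + dr, nc = c + dc;
                    if (nr < 0 || nr >= rows || nc < 0 || nc >= cols) continue;
                    out[nr][nc] = true;
                }
            }
        }
    }
    return out;
}

// A candidate node is kept only if its cell lies inside the map and is free
// in the inflated map.
bool isFreeNode(const Grid& inflated, int row, int col) {
    return row >= 0 && row < static_cast<int>(inflated.size()) && col >= 0 &&
           col < static_cast<int>(inflated[row].size()) && !inflated[row][col];
}
```

Reading from the original grid while writing to a copy prevents cells that were just inflated from being inflated again.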

When all nodes are placed, edges are created between nodes according to specific rules that ensure a safe and efficient network. First, an edge may not exceed a distance threshold. This mostly serves to limit the computations for the second rule, which states that an edge may not pass through an occupied cell. This safety rule is checked with Bresenham's line algorithm, an efficient method from computer graphics for drawing straight lines on a grid that uses only integer arithmetic, thereby avoiding floating-point computations and rounding errors.<ref>Angel, E., & Morrison, D. (1991). Speeding up Bresenham’s algorithm. ''IEEE Computer Graphics and Applications'', ''11''(6), 16–17. <nowiki>https://doi.org/10.1109/38.103388</nowiki></ref> Edges are thus only placed when they are safe and not unnecessarily long. An additional minimum distance threshold could be added, but because of the Halton sequence, as explained above, a certain uniformity in the distribution of the quasi-random points can be assumed.
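The two edge rules could be sketched as follows, using the all-octant integer form of Bresenham's line algorithm. The grid convention (<code>true</code> = occupied, indexed as <code>grid[row][col]</code>), the function names, and the distance threshold in cells are assumptions.

```cpp
#include <cmath>
#include <cstdlib>
#include <vector>

using Grid = std::vector<std::vector<bool>>;  // true = occupied, grid[row][col]

// Walks the cells on the line from (r0, c0) to (r1, c1) with Bresenham's
// algorithm (integer arithmetic only) and returns false if any cell is occupied.
// Both end points are assumed to lie inside the grid.
bool lineIsFree(const Grid& grid, int r0, int c0, int r1, int c1) {
    int dc = std::abs(c1 - c0), sc = c0 < c1 ? 1 : -1;
    int dr = -std::abs(r1 - r0), sr = r0 < r1 ? 1 : -1;
    int err = dc + dr;
    while (true) {
        if (grid[r0][c0]) return false;
        if (r0 == r1 && c0 == c1) return true;
        int e2 = 2 * err;
        if (e2 >= dr) { err += dr; c0 += sc; }  // step along the columns
        if (e2 <= dc) { err += dc; r0 += sr; }  // step along the rows
    }
}

// Edge rule: connect two nodes only if they are at most max_dist cells apart
// (checked first, because it is cheap) and the straight line between them is free.
bool validEdge(const Grid& grid, int r0, int c0, int r1, int c1, double max_dist) {
    double dist = std::hypot(r1 - r0, c1 - c0);
    if (dist > max_dist) return false;
    return lineIsFree(grid, r0, c0, r1, c1);
}
```

Checking the distance first means the line walk is only done for node pairs that are close enough to be connected at all.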

Combining these rules for placing nodes and edges creates a coherent network in an environment expressed as an occupancy grid map. This network is subsequently used by the A* path planning algorithm.

=== A* path planning ===

''To be added''

=== Combining PRM with A* ===

''To be added''

=== Questions ===

''Add answers to the questions''

1. How could finding the shortest path through the maze using the A* algorithm be made more efficient by placing the nodes differently? Sketch the small maze with the proposed nodes and the connections between them. Why would this be more efficient?

2. Would implementing PRM in a map like the maze be efficient?

3. What would change if the map suddenly changes (e.g. the map gets updated)?

4. How did you connect the local and global planner?

5. Test the combination of your local and global planner for a longer period of time on the real robot. What do you see that happens in terms of the calculated position of the robot? What is a way to solve this?

6. Run the A* algorithm using the gridmap (with the provided nodelist) and using the PRM. What do you observe? Comment on the advantage of using PRM in an open space.

== Citations ==

<references />

Latest revision as of 13:57, 17 May 2024

Group members:

| Name | Student ID |
|---|---|
| Coen Smeets | 1615947 |
| Sven Balvers | 1889044 |
| Joris Janissen | 1588087 |
| Rens Leijtens | 1859382 |
| Tim de Wild | 1606565 |
| Niels Berkers | 1552929 |

Exercise 1

Instructions

- Create a folder called exercise1 in the mrc folder. Inside the exercise1 folder, create an src folder. Within the src folder, create a new file called dont_crash.cpp.

- Add a CMakeLists.txt file to the exercise1 folder and set it up to compile the executable dont_crash.

- Q1: Think of a method to make the robot drive forward but stop before it hits something. Document your designed method on the wiki.

- Write code to implement your designed method. Test your code in the simulator. Once you are happy with the result, make sure to keep these files as we will need them later.

- Make a screen capture of your experiment in simulation and upload it to your wiki page.

Implementations:

Coen Smeets:

This section has been written with the following technique. First, I describe in rudimentary terms what should be in a paragraph and let Copilot convert it to Markdown (the README.md file on git). I then check that the result is still correct. Afterwards, I let Copilot convert the Markdown to MediaWiki markup for this page, where I double-check it and manually correct any incorrect formatting. Is this method allowed within the course?

The provided C++ code is designed to prevent a robot from colliding with obstacles by using laser scan data and odometry data. Here's a step-by-step explanation of how it works:

- Initialization: The code starts by initializing the emc::IO object for input/output operations and the emc::Rate object to control the loop rate. It also initializes some variables for later use.

- Main Loop: The main part of the code is a loop that runs as long as the io.ok() function returns true. This function typically checks if the program should continue running, for example, if the user has requested to stop the program.

- Reading Data: In each iteration of the loop, the code reads new laser scan data and odometry data. If there's new data available, it processes this data.

- Processing Odometry Data: If there's new odometry data, the code calculates the linear velocity vx of the robot by dividing the change in position by the change in time. It also updates the timestamp of the last odometry data.

- Collision Detection: If there's new laser scan data, the code calls the collision_detection function, which checks if a collision is likely based on the laser scan data. If a collision is likely and the robot is moving forward (vx > 0.001), the code sends a command to the robot to stop moving. It also prints a message saying "Possible collision avoided!".

- Sleep: At the end of each iteration, the code sleeps for the remaining time to maintain the desired loop rate.

This code ensures that the robot stops moving when a collision is likely, thus preventing crashes.
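The description does not say exactly how vx is computed from two odometry samples. The sketch below is one possible approach under stated assumptions: it projects the displacement onto the previous heading, so backward motion gives a negative value and the vx > 0.001 test really means "moving forward". The <code>Odom</code> struct (fields x, y in m, a as heading in rad, timestamp in s) is a hypothetical stand-in for the framework's odometry data.

```cpp
#include <cmath>

// Hypothetical stand-in for the odometry fields used here.
struct Odom {
    double x;          // position [m]
    double y;          // position [m]
    double a;          // heading [rad]
    double timestamp;  // time [s]
};

// Forward velocity from two odometry samples: displacement projected onto the
// heading of the previous sample, divided by the time step.
// Returns 0 if the time step is not positive (e.g. a repeated sample).
double forwardVelocity(const Odom& prev, const Odom& curr) {
    double dt = curr.timestamp - prev.timestamp;
    if (dt <= 0.0) return 0.0;
    double dx = curr.x - prev.x;
    double dy = curr.y - prev.y;
    return (dx * std::cos(prev.a) + dy * std::sin(prev.a)) / dt;
}

// Stop only if a collision is likely AND the robot is moving forward,
// mirroring the vx > 0.001 condition described above.
bool shouldStop(bool collision_likely, double vx) {
    return collision_likely && vx > 0.001;
}
```

Using a signed forward velocity instead of the speed magnitude allows the robot to back away from an obstacle without being stopped again.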

Collision detection function

The collision_detection function is a crucial part of the collision avoidance code. It uses laser scan data to determine if a collision is likely. Here's a step-by-step explanation of how it works:


- Function Parameters: The function takes four parameters: scan, angle_scan, distance_threshold, and verbose. scan is the laser scan data, angle_scan is the range of angles to consider for the collision detection (default 1.7), distance_threshold is the distance threshold for the collision detection (default 0.18), and verbose is a boolean that controls whether the minimum value found in the scan data is printed (default false).

- Zero Index Calculation: The function starts by calculating the index of the laser scan that corresponds to an angle of 0 degrees. This is done by subtracting the minimum angle from 0 and dividing by the angle increment.

- Minimum Distance Calculation: The function then initializes a variable min_value to a large number. It loops over the laser scan data in the range of angles specified by angle_scan and updates min_value to the minimum distance it finds.

- Verbose Output: If the verbose parameter is true, the function prints the minimum value found in the scan data.

- Collision Detection: Finally, the function checks whether min_value is less than distance_threshold and within the valid range of distances (scan.range_min to scan.range_max). If it is, the function returns true, indicating that a collision is likely. Otherwise, it returns false.

This function is used in the main loop of the code to check if a collision is likely based on the current laser scan data. If a collision is likely, the code sends a command to the robot to stop moving, thus preventing a crash.
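A sketch of how collision_detection could be written from the description above. This is a reconstruction, not the original code: the <code>Scan</code> struct is a hypothetical stand-in for <code>emc::LaserData</code>, and angle_scan is assumed to be the total width of the checked sector in radians, centred on the beam at 0 rad.

```cpp
#include <algorithm>
#include <cmath>
#include <iostream>
#include <limits>
#include <vector>

// Hypothetical stand-in for the emc::LaserData fields used below.
struct Scan {
    float range_min, range_max;        // valid range interval [m]
    float angle_min, angle_increment;  // beam angles [rad]
    std::vector<float> ranges;
};

bool collision_detection(const Scan& scan, double angle_scan = 1.7,
                         double distance_threshold = 0.18, bool verbose = false) {
    const int n = static_cast<int>(scan.ranges.size());
    // Index of the beam at 0 rad: (0 - angle_min) / angle_increment.
    const int zero_index =
        static_cast<int>(std::lround((0.0 - scan.angle_min) / scan.angle_increment));
    // Assumption: angle_scan is the full sector width, so half of it on each side.
    const int half_width = static_cast<int>((angle_scan / 2.0) / scan.angle_increment);
    const int first = std::max(0, zero_index - half_width);
    const int last = std::min(n - 1, zero_index + half_width);

    double min_value = std::numeric_limits<double>::max();
    for (int i = first; i <= last; ++i) {
        const double r = scan.ranges[i];
        // Skip invalid readings while searching for the minimum.
        if (r < scan.range_min || r > scan.range_max) continue;
        min_value = std::min(min_value, r);
    }
    if (verbose) std::cout << "Minimum distance: " << min_value << '\n';
    return min_value < distance_threshold;
}
```

Unlike the description, this sketch filters out-of-range readings inside the loop. If the minimum were taken over all readings first, a single invalid value (for example a 0) would become min_value, fail the validity check, and hide a valid obstacle closer than the threshold.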

Potential improvements:

While the current collision detection system is effective, it is sensitive to outliers in the sensor data. This sensitivity can be problematic in real-world scenarios where sensor noise and random large outliers are common. Safety is about preparing for the worst-case scenario, and dealing with unreliable sensor data is a part of that. Here are a couple of potential improvements that could be made to the system:

- Rolling Average: One way to mitigate the impact of outliers is to use a rolling average of the sensor data instead of the raw data. This would smooth out the data and reduce the impact of any single outlier. However, this approach would also introduce a delay in the system's response to changes in the sensor data, which could be problematic in fast-moving scenarios.

- Simplifying Walls to Linear Lines: Given that the assignment specifies straight walls, another approach could be to simplify the walls to linear lines in the data processing. This would make the collision detection less sensitive to outliers in the sensor data. However, this approach assumes that the walls are perfectly straight and does not account for any irregularities or obstacles along the walls.
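The rolling average could be implemented per beam over the last few scans, as in the sketch below. The class name and window size are illustrative, and every scan is assumed to have the same number of beams.

```cpp
#include <cstddef>
#include <deque>
#include <vector>

// Per-beam rolling (moving) average over the last `window` scans.
class RollingAverage {
public:
    explicit RollingAverage(std::size_t window) : window_(window > 0 ? window : 1) {}

    // Adds a scan and returns the per-beam average over the stored scans.
    // Assumes every scan has the same number of beams.
    std::vector<double> add(const std::vector<double>& ranges) {
        history_.push_back(ranges);
        if (history_.size() > window_) history_.pop_front();  // drop the oldest scan
        std::vector<double> avg(ranges.size(), 0.0);
        for (const auto& scan : history_) {
            for (std::size_t i = 0; i < avg.size() && i < scan.size(); ++i) {
                avg[i] += scan[i];
            }
        }
        for (double& v : avg) v /= static_cast<double>(history_.size());
        return avg;
    }

private:
    std::size_t window_;
    std::deque<std::vector<double>> history_;
};
```

Each averaged value lags the raw data by roughly (window - 1)/2 scans, which is the delay mentioned above; a larger window gives more smoothing but a slower reaction.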

The assignment states: "All walls in the restaurant will be approximately straight. No weird curving walls." [1](https://cstwiki.wtb.tue.nl/wiki/Mobile_Robot_Control_2024) Simplifying the walls to lines involves several steps that may not be immediately intuitive. Here's a breakdown:

- Data Cleaning: Remove outliers from the data. These are individual data points where the sensor readings are clearly incorrect for a given time instance.

- Data Segmentation: Divide the data into groups based on the angle of the sensor readings. A group split should occur where there is a significant jump in the distance reading. This helps differentiate between unconnected walls.

- Corner Detection: Apply the Split-and-Merge algorithm to each wall. This helps identify corners within walls, which may not be detected by simply looking for large jumps in distance.
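The segmentation and corner-detection steps could be sketched as below, assuming the scan has already been converted to Cartesian points and cleaned of outliers. Only the split step of Split-and-Merge is shown (recursive splitting at the point farthest from the chord); merging adjacent collinear segments is left out. All names and thresholds are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Point {
    double x, y;
};

// Data segmentation: start a new segment wherever the distance between
// consecutive points exceeds jump_threshold (unconnected walls).
std::vector<std::vector<Point>> segment(const std::vector<Point>& pts, double jump_threshold) {
    std::vector<std::vector<Point>> segments;
    for (std::size_t i = 0; i < pts.size(); ++i) {
        if (i == 0 ||
            std::hypot(pts[i].x - pts[i - 1].x, pts[i].y - pts[i - 1].y) > jump_threshold) {
            segments.emplace_back();
        }
        segments.back().push_back(pts[i]);
    }
    return segments;
}

// Perpendicular distance from p to the line through a and b.
double distToLine(const Point& p, const Point& a, const Point& b) {
    double dx = b.x - a.x, dy = b.y - a.y;
    double len = std::hypot(dx, dy);
    if (len == 0.0) return std::hypot(p.x - a.x, p.y - a.y);
    return std::fabs(dy * (p.x - a.x) - dx * (p.y - a.y)) / len;
}

// Split step: recursively split [first, last] at the point farthest from the
// chord while that distance exceeds tol. Corner indices are appended in order.
void split(const std::vector<Point>& pts, std::size_t first, std::size_t last,
           double tol, std::vector<std::size_t>& corners) {
    double max_d = 0.0;
    std::size_t idx = first;
    for (std::size_t i = first + 1; i < last; ++i) {
        double d = distToLine(pts[i], pts[first], pts[last]);
        if (d > max_d) {
            max_d = d;
            idx = i;
        }
    }
    if (max_d > tol) {
        split(pts, first, idx, tol, corners);
        corners.push_back(idx);
        split(pts, idx, last, tol, corners);
    }
}
```

Each non-empty segment from <code>segment</code> would be passed to <code>split</code> with <code>first = 0</code> and <code>last = size - 1</code>; the returned corner indices divide the segment into straight wall pieces.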

Keep in mind that the complexity of the scene can significantly impact the computation time of this algorithm. However, given the scenes shown on the wiki, the additional computation time required to generate clean lines should be minimal. This is because the number of walls is relatively small, and the algorithm can be optimized in several ways. For instance, instead of processing each data point, you could select a subset of evenly spaced points. On average, these selected points should accurately represent the average location of the wall.

Based on my experience, prioritizing safety enhancements in the system is crucial. Establishing a strong safety foundation from the outset is key. By implementing robust safety measures early in the development process, we can effectively mitigate potential risks and issues down the line.

Rens Leijtens

General structure

- including libraries

- main starts

- initialization of objects and variables

- while loop that is active while io.ok() is true

- the laser and odometry data is checked and processed

- the movement is enabled or disabled if necessary

The included libraries are the following:

- iostream for printing to the terminal

- emc/rate for fixed loop time

- emc/io for connecting with the simulated robot

Movement

The movement is controlled using a simple boolean called "move" which will move the robot if true and stop the robot if false.

Sensor data processing

Check whether the data is new.

If a measurement point is closer than 0.2 meters, the robot stops moving.

Improvements

This method also checks the measurement points at the sides of the robot, which is not optimal if the robot needs to pass through a narrow hallway.

Niels Berkers:

The code uses the standard library header <iostream>, which is used to print output to the terminal. Furthermore, the <emc/io.h> header provides the structures and functions to read data from the LiDAR sensor and other hardware information needed for robot movement. Finally, <emc/rate.h> is used to set the frequency of the while loop.

Implementation:

After the headers are included, the main function creates an object of the class emc::IO and sets the loop frequency to 10 Hz. A while loop then runs until the IO object reports that it is no longer ok. Inside the loop, the reference velocity is set so that the robot drives forward at 0.1 m/s. Then, if new laser data is available, the code loops over all indices of the scan data and checks whether any range is within 0.3 meters. If so, the reference velocity is set to 0 in all directions and the robot stops driving.

Improvements:

The code can be improved by only looking at a window in front of the robot. This would make it possible to drive parallel and close to a wall, and it would also reduce the number of indices that need to be checked. The code could also be improved by emitting a signal when the robot is too close to a wall, which could be useful in a larger algorithm later on.

Joris Janissen:

Packages Used

- emc/io.h: This package provides functionalities for interfacing with hardware, including reading laser and odometry data, and sending commands to control the robot's movement.

- emc/rate.h: This package enables control over the loop frequency, ensuring the code executes at a fixed rate.

- iostream: This package facilitates input/output operations, allowing for the printing of messages to the console.

- cmath: This package provides mathematical functions used in the implementation.

Implementation

- Initialization: The main() function initializes the IO object, which initializes the input/output layer to interface with hardware, and creates a Rate object to control the loop frequency.

- Data Processing: Within the main loop, the code reads new laser and odometry data from the robot. It then calculates the range of laser scan indices corresponding to a specified angle in front of the robot.

- Obstacle Detection: The code iterates over the laser scan data within the specified range. For each laser reading, it checks if the range value falls within the valid range and if it detects an obstacle within a certain stop distance threshold.

- Action: If an obstacle is detected within the stop distance threshold, the code sends a stop command to the robot and prints a message indicating the detected obstacle and its distance. Otherwise, if no obstacle is detected, the code sends a command to move the robot forward.

- Loop Control: The loop sleeps for a specified duration to maintain the desired loop frequency using the r.sleep() function.

Improvements

- Data segmentation: The data points can be grouped to reduce computation time.

- Collision message: The collision message currently floods the terminal; it can be improved by printing it only once.

- Rolling average: A rolling average of the data points can be taken to filter out outliers and noise.

Conclusion

This documentation describes an implementation of obstacle avoidance using laser scans on a mobile robot. By using the EMC library and appropriate data processing, the robot can detect obstacles in its path and avoid collisions, although some improvements are still possible.

Sven Balvers:

implementation:

Header Files: The code includes some header files (emc/io.h and emc/rate.h) which contain declarations for classes and functions used in the program, as well as the standard input-output stream header (iostream).

Main Function: The main() function serves as the entry point of the program.

Object Initialization: Inside main(), several objects of classes emc::IO, emc::LaserData, emc::OdometryData, and emc::Rate are declared. These objects are part of a framework for controlling a robot.

Loop: The program enters a while loop that runs as long as io.ok() returns true. This indicates that the I/O layer is functioning correctly and that the program is still connected to the robot.

Control Commands: Within the loop, which runs at a fixed rate of 5 Hz, a reference command is sent to the robot's base using io.sendBaseReference(0.1, 0, 0). This command tells the robot to drive forward at 0.1 m/s with no sideways or rotational velocity.

Data Reading: It reads laser data and odometry data from sensors attached to the robot using io.readLaserData(scan) and io.readOdometryData(odom).

Obstacle Detection: If new laser data or odometry data is available, the program checks the laser scan for obstacles closer than 0.3 m. If an obstacle is detected, it sends a stop command to the robot's base using io.sendBaseReference(0, 0, 0), which sets all velocity components to zero.

Rate Control: The loop sleeps for a fixed duration (r.sleep()) to maintain the specified loop rate.

Improvements:

There are multiple approaches to reduce the noise in the measurement data. One is to take a moving average of successive samples, but this delays the data. Another is to merge points that lie close to one another into a single point by averaging them. Because this only uses the current scan, it introduces no delay.
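Both noise-reduction ideas from the paragraph above can be sketched as follows. The sketch assumes that invalid readings (outside range_min to range_max) have already been removed, because averaging them in would corrupt the result. mergeNeighbours and TemporalAverage are hypothetical helpers. The first averages groups of neighbouring beams within a single scan, which adds no delay but lowers the angular resolution. The second averages each beam over the last few scans, which gives smoother data but lags behind real changes.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <deque>
#include <vector>

// Spatial: merge every `group_size` neighbouring beams of ONE scan into a
// single averaged point (the last group may be smaller). No time delay.
std::vector<double> mergeNeighbours(const std::vector<double>& ranges,
                                    std::size_t group_size) {
    assert(group_size > 0);
    std::vector<double> merged;
    for (std::size_t start = 0; start < ranges.size(); start += group_size) {
        const std::size_t end = std::min(ranges.size(), start + group_size);
        double sum = 0.0;
        for (std::size_t j = start; j < end; ++j) sum += ranges[j];
        merged.push_back(sum / static_cast<double>(end - start));
    }
    return merged;
}

// Temporal: average each beam over the last `window` scans (moving average).
class TemporalAverage {
public:
    explicit TemporalAverage(std::size_t window) : window_(window) {
        assert(window > 0);
    }

    std::vector<double> update(const std::vector<double>& ranges) {
        history_.push_back(ranges);
        if (history_.size() > window_) history_.pop_front();
        std::vector<double> mean(ranges.size(), 0.0);
        for (const auto& scan : history_) {
            assert(scan.size() == ranges.size());
            for (std::size_t i = 0; i < ranges.size(); ++i) mean[i] += scan[i];
        }
        for (double& v : mean) v /= static_cast<double>(history_.size());
        return mean;
    }

private:
    std::size_t window_;
    std::deque<std::vector<double>> history_;  // most recent scans
};
```

Merging also reduces the number of points that the obstacle check has to visit, which matches the data-segmentation improvement mentioned earlier.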

Another improvement is to limit the robot's field of view to a certain angle in front of it instead of using the full range from -pi to +pi. This prevents the robot from stopping for walls beside it, so it can pass through a narrow hallway, which is important for the final code.

Exercise 1 (practical)

You now know how to work with the robot. For your first session, we suggest you start by experimenting with the robot. Document the answers to these questions on your wiki.

Go to your folder on the robot and pull your software.

On the robot-laptop, open rviz. Observe the laser data. How much noise is there on the laser? What objects can be seen by the laser? Which objects cannot? What do your own legs look like to the robot?

The noise on the signal from the robot's Lidar is difficult to quantify, as we noticed while testing the "dont_crash" program. When the robot drives straight towards an object, it stops abruptly, but when it approaches a wall at a sharp angle, it stutters slightly. This happens because only a limited number of data points are obtained in such cases, which makes the sensor noise apparent. The robot can only see objects at the height of the Lidar. The Lidar's measurement points are spread over its field of view with a certain angular spacing. Because of this, the Lidar can have trouble detecting thin objects such as chair legs. Flat surfaces, such as walls, are observed well. The robot's scanning capability does not cover a full 360 degrees around itself. Instead, it sees a 180-degree angle centered at the front.
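The following formula is a rough illustration of why thin objects can be missed. Let two neighbouring beams be separated by an angle Δθ. We assume this corresponds to the scan's angle increment. At distance r, the gap between the points where the two beams hit is

```latex
s(r) = 2r\sin\left(\frac{\Delta\theta}{2}\right) \approx r\,\Delta\theta
```

An object narrower than s(r) can fall entirely between two beams. Because s grows linearly with r, thin objects such as chair legs are more likely to be missed the further away they are.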

Take your example of don't crash and test it on the robot. Does it work like in simulation? Why? Why not? (choose the most promising version of don't crash that you have.)

See implementation Coen Smeets.

If necessary make changes to your code and test again. What changes did you have to make? Why? Was this something you could have found in simulation?

We modified the code so that the robot does not stop when it reaches a wall but instead turns left until it has enough space to resume driving. The robot then starts and stops repeatedly: it drives a short distance and then stops again. To prevent this, the robot should turn slightly longer so that it can keep moving continuously. This behaviour could also have been observed in simulation. However, we only wrote this version of the code to try it out during the practical session.
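One way to make the robot "turn slightly longer" is hysteresis: use two thresholds instead of one. This is our assumption, not the code that was tested. The robot starts turning when the frontal clearance drops below stop_distance and only resumes driving once the clearance exceeds a larger resume_distance. TurnWithHysteresis and VelocityCommand are hypothetical names. Positive angular velocity is assumed to mean turning left.

```cpp
#include <cassert>

// Velocities in the order used by io.sendBaseReference(vx, vy, va).
struct VelocityCommand {
    double vx;  // forward [m/s]
    double vy;  // sideways [m/s]
    double va;  // angular [rad/s], positive assumed to be counter-clockwise (left)
};

class TurnWithHysteresis {
public:
    TurnWithHysteresis(double stop_distance, double resume_distance,
                       double forward_speed, double turn_speed)
        : stop_(stop_distance), resume_(resume_distance),
          v_(forward_speed), w_(turn_speed) {
        // The gap between the thresholds is what prevents stop-start chatter.
        assert(resume_distance > stop_distance);
    }

    // clearance: closest valid range in the frontal cone [m].
    VelocityCommand update(double clearance) {
        if (!turning_ && clearance < stop_) {
            turning_ = true;   // too close: start turning in place
        } else if (turning_ && clearance > resume_) {
            turning_ = false;  // enough room: resume driving
        }
        if (turning_) return {0.0, 0.0, w_};
        return {v_, 0.0, 0.0};
    }

    bool turning() const { return turning_; }

private:
    double stop_, resume_, v_, w_;
    bool turning_ = false;
};
```

Each returned command would be passed as io.sendBaseReference(cmd.vx, cmd.vy, cmd.va). A clearance between the two thresholds keeps the current behaviour, so the robot finishes its turn instead of immediately driving off again.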

Take a video of the working robot and post it on your wiki.

Answers:

Artificial Potential Field

Make sure to add citations to the book and the slides (cite with wiki references, see PRM)

Dynamic Window Approach

To be added

Probabilistic Road Map

It must be noted that this section has been heavily influenced by Coen Smeets's BEP report. Ideas and passages have been taken from this report, as it was written by a group member.[1]

Probabilistic Road Maps, henceforth denoted as PRM, are a method of creating a network in which a dedicated algorithm can find the shortest route. To be suitable for navigating a mobile robot, the network has to be a correct representation of the environment in which safety is guaranteed, meaning that the edges between nodes must be safe to traverse. There are several methods to create such a network. Tsardoulias et al. (2016) compared several techniques for path planning and concluded that space sampling methods are the most effective in two-dimensional occupancy grid maps (OGMs).[2] In this course, the environment data is already provided as an occupancy grid map. Space sampling shows a high success rate, short execution times and short paths without excessive turns.

For space sampling, the Halton sequence technique presented in Tsardoulias et al. (2016) has been adapted to create a network, G. The network is a simplified representation of the movable space in the OGM. It consists of nodes, which are the sampled points in the OGM, and edges between nodes, which represent possible paths. The Halton sequence combines the advantages of random sampling and uniform sampling. In random sampling, every cell can be sampled independently of its location, ensuring an unbiased representation. Uniform sampling, on the other hand, ensures that the sampled points are evenly distributed. The Halton sequence offers the benefits of both approaches simultaneously: it has low discrepancy and thus appears random while being deterministic.[3] It samples the space quasi-randomly but with an even distribution. The space is sampled in 2D using one sequence in base 2 and one in base 3. The higher the base, the more computationally demanding the calculations are. Base 3 is therefore chosen for the second dimension as a trade-off between evenly spreading the nodes and computational cost.[3] The sequences are then scaled to the area covered by the OGM, using the number of rows and columns for the x and y coordinates, respectively, together with the map resolution.
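The sampling step described above can be sketched with the radical-inverse definition of the Halton sequence. In base b, the digits of the index i are mirrored around the radix point. This is a minimal sketch under stated assumptions, not the code from the report. The names radicalInverse, haltonSamples and Point2D are hypothetical. Indexing starts at 1 so that the point (0, 0) is skipped. Rows are mapped to x and columns to y, as described in the text. The free-cell check happens in a later step.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Radical inverse of i in the given base: reflect the base-b digits of i
// around the radix point, giving a value in [0, 1).
double radicalInverse(unsigned int i, unsigned int base) {
    assert(base >= 2);
    double result = 0.0;
    double fraction = 1.0 / base;
    while (i > 0) {
        result += (i % base) * fraction;  // next digit, next (smaller) weight
        i /= base;
        fraction /= base;
    }
    return result;
}

struct Point2D {
    double x;  // [m]
    double y;  // [m]
};

// n quasi-random points from the 2-D Halton sequence (bases 2 and 3), scaled
// to a map of rows x cols cells with `resolution` metres per cell.
std::vector<Point2D> haltonSamples(std::size_t n, std::size_t rows,
                                   std::size_t cols, double resolution) {
    std::vector<Point2D> points;
    points.reserve(n);
    for (std::size_t k = 1; k <= n; ++k) {
        const double u = radicalInverse(static_cast<unsigned int>(k), 2);
        const double v = radicalInverse(static_cast<unsigned int>(k), 3);
        points.push_back({u * rows * resolution, v * cols * resolution});
    }
    return points;
}
```

The first base-2 values are 0.5, 0.25 and 0.75, and the first base-3 values are 1/3, 2/3 and 1/9. Each new point therefore falls into a region that is still empty, which gives the even spread without a regular grid.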

The two-dimensional Halton sequence created in this way contains the possible locations of nodes within the OGM. However, each node is additionally checked to determine whether it lies within a cell that is considered free. This check is necessary because the area covered by the sequence spans the whole map and therefore still contains occupied cells. Nodes should also not be placed in free cells that are so close to occupied cells that the robot would still collide with the obstruction. These cells are found by 'inflating' the occupied cells. The algorithm iterates over each pixel in the map and, if the pixel is part of an obstacle, marks the surrounding pixels within a specified radius as part of the obstacle as well.
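The inflation step described above can be sketched as follows. We assume the grid is stored row-major as booleans (true = occupied) and that the radius is given in cells, for example the robot radius divided by the map resolution. The Grid alias and the inflate function are hypothetical names. The function reads from the original grid and writes to a copy, so cells that were inflated do not inflate their neighbours in turn.

```cpp
#include <cassert>
#include <vector>

// Occupancy grid, row-major: true = occupied.
using Grid = std::vector<std::vector<bool>>;

// Marks every cell within `radius` cells (Euclidean) of an originally
// occupied cell as occupied.
Grid inflate(const Grid& grid, int radius) {
    assert(radius >= 0);
    Grid out = grid;
    const int rows = static_cast<int>(grid.size());
    for (int r = 0; r < rows; ++r) {
        const int cols = static_cast<int>(grid[r].size());
        for (int c = 0; c < cols; ++c) {
            if (!grid[r][c]) continue;  // only obstacle cells are inflated
            for (int dr = -radius; dr <= radius; ++dr) {
                for (int dc = -radius; dc <= radius; ++dc) {
                    // Circular footprint instead of a square one.
                    if (dr * dr + dc * dc > radius * radius) continue;
                    const int rr = r + dr;
                    const int cc = c + dc;
                    if (rr < 0 || rr >= rows || cc < 0 ||
                        cc >= static_cast<int>(grid[rr].size())) {
                        continue;  // outside the map
                    }
                    out[rr][cc] = true;
                }
            }
        }
    }
    return out;
}
```

A Halton point is then only kept as a node if its cell is free in the inflated grid.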

Once all nodes are placed, edges are created between them according to specific rules that ensure a safe and efficient network. First, no edge may exceed a maximum length. This mainly limits the cost of checking the second rule, which states that no edge may pass through an occupied cell. This safety rule is checked with Bresenham's line algorithm, an efficient method from computer graphics for drawing straight lines on a grid. It uses only integer arithmetic, which avoids floating-point operations and their rounding errors.[4] Edges are therefore only placed when they are safe and not unnecessarily long. A minimum edge length could also be imposed. However, the Halton sequence, as explained above, already distributes the quasi-random points fairly uniformly, so this is not needed.
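The two edge rules described above can be sketched as follows. The cheap length check runs first, and the Bresenham line check only runs if that passes. Several assumptions apply. Nodes are given in cell coordinates and lie inside the grid. The grid has already been inflated. max_length is expressed in cells. The names lineIsFree, buildEdges and Node are hypothetical. lineIsFree uses the standard all-octant integer form of Bresenham's algorithm.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdlib>
#include <utility>
#include <vector>

using Grid = std::vector<std::vector<bool>>;  // true = occupied (inflated)

// Bresenham's line algorithm (integer-only, all octants). Walks the cells
// from (r0, c0) to (r1, c1); both endpoints must lie inside the grid.
bool lineIsFree(const Grid& grid, int r0, int c0, int r1, int c1) {
    const int dr = std::abs(r1 - r0);
    const int dc = -std::abs(c1 - c0);
    const int sr = r0 < r1 ? 1 : -1;
    const int sc = c0 < c1 ? 1 : -1;
    int err = dr + dc;  // accumulated error term
    while (true) {
        if (grid[r0][c0]) return false;  // line hits an occupied cell
        if (r0 == r1 && c0 == c1) return true;
        const int e2 = 2 * err;
        if (e2 >= dc) { err += dc; r0 += sr; }
        if (e2 <= dr) { err += dr; c0 += sc; }
    }
}

struct Node {
    int r;  // row of the cell
    int c;  // column of the cell
};

// Connects every pair of nodes that is at most max_length cells apart and
// whose straight connection only crosses free cells. O(n^2) node pairs.
std::vector<std::pair<int, int>> buildEdges(const Grid& grid,
                                            const std::vector<Node>& nodes,
                                            double max_length) {
    assert(max_length > 0.0);
    std::vector<std::pair<int, int>> edges;
    for (std::size_t i = 0; i < nodes.size(); ++i) {
        for (std::size_t j = i + 1; j < nodes.size(); ++j) {
            const double len = std::hypot(nodes[i].r - nodes[j].r,
                                          nodes[i].c - nodes[j].c);
            if (len > max_length) continue;  // cheap rule first
            if (lineIsFree(grid, nodes[i].r, nodes[i].c, nodes[j].r, nodes[j].c)) {
                edges.emplace_back(static_cast<int>(i), static_cast<int>(j));
            }
        }
    }
    return edges;
}
```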

Combining these rules for placing nodes and edges creates a coherent network of the environment represented by the occupancy grid map. This network is then used by the A* path planning algorithm.

A* path planning

To be added

Combining PRM with A*

To be added

Questions

Add answers to the questions

1. How could finding the shortest path through the maze using the A* algorithm be made more efficient by placing the nodes differently? Sketch the small maze with the proposed nodes and the connections between them. Why would this be more efficient?

2. Would implementing PRM in a map like the maze be efficient?

3. What would change if the map suddenly changes (e.g. the map gets updated)?

4. How did you connect the local and global planner?

5. Test the combination of your local and global planner for a longer period of time on the real robot. What do you see that happens in terms of the calculated position of the robot? What is a way to solve this?

6. Run the A* algorithm using the gridmap (with the provided nodelist) and using the PRM. What do you observe? Comment on the advantage of using PRM in an open space.

Citations

1. Smeets, C. (2023). Active Exploration with Occupancy Grid Mapping for a Mobile Robot. Bachelor’s final project (2022-2023), DC2023.054. https://www.overleaf.com/read/vjscmwgpvkdn#47a804

2. Tsardoulias, E. G., Iliakopoulou, A., Kargakos, A., & Petrou, L. (2016). A review of global path planning methods for occupancy grid maps regardless of obstacle density. Journal of Intelligent & Robotic Systems, 84(1–4), 829–858. https://doi.org/10.1007/s10846-016-0362-z

3. Halton, J. H. (1964). Algorithm 247: Radical-inverse quasi-random point sequence. Communications of the ACM, 7(12), 701–702. https://doi.org/10.1145/355588.365104

4. Angel, E., & Morrison, D. (1991). Speeding up Bresenham’s algorithm. IEEE Computer Graphics and Applications, 11(6), 16–17. https://doi.org/10.1109/38.103388