Football Table Simulation Visualization Tool

Author: Erik Stoltenborg

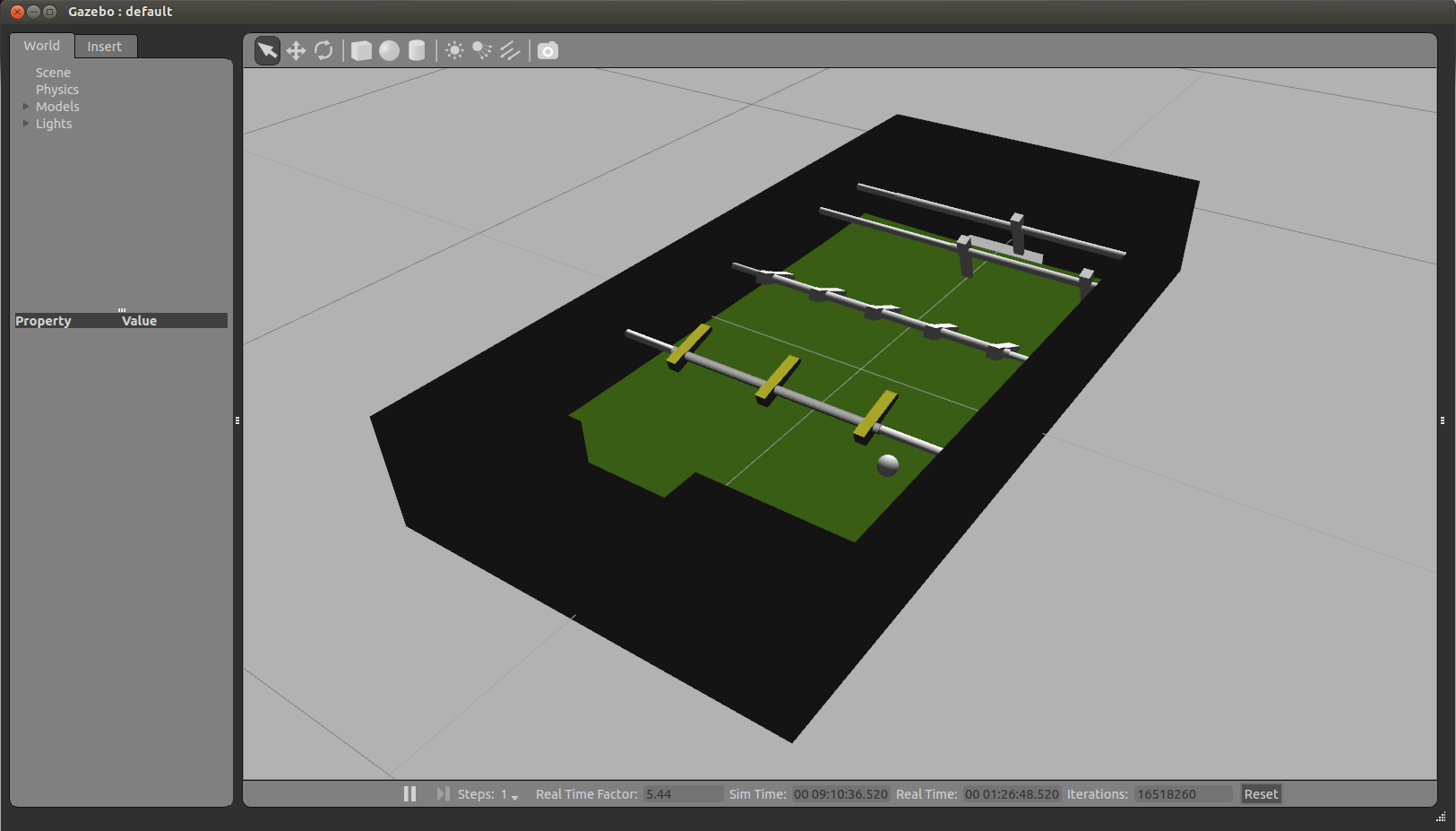

Gazebo

A simulator was developed to test new algorithms easily without depending on the actual robot. It was built with Gazebo (without ROS), a so-called physics abstraction layer that uses ODE for the physics and OGRE for rendering. Gazebo has been very well maintained since 2012, when it became the official simulator for the DARPA Robotics Challenge. The environment and robots are described in the SDF format, which is XML-based. SDF models can easily be combined with CAD files; in this case a Collada [1] drawing is used for the more complex geometry. A previous attempt used MORSE, but it was abandoned because of the poor tunability of the physics and the limited options for communication.

A guide on how to build Gazebo can be found here. The latest version that has been tested is 1.8.3, which can be found on the svn. Make sure to source the setup.sh located on the svn instead of the one that comes with Gazebo.

Synchronized Inter-process Communication

To date, Gazebo is mostly used in combination with ROS. However, using ROS plugins adds a lot of overhead; moreover, the timers and communication provided there are not accurate enough to ensure an accurate causal link. Therefore a plug-in was created that enables fast, lightweight inter-process communication, allowing the simulation to run up to 20 times faster than real time without loss of causality.

This simulation communicates with Matlab Simulink through an Interprocess Communication (IPC) wrapper library for the POSIX libraries; the wrapper makes shared memory more accessible and easier to use. It uses shared memory protected by mutexes and condition variables, enabling thread-safe, synchronized, causal communication between two processes, e.g. Gazebo and Simulink. This allows us to use the Gazebo simulator as a plant in our Simulink control loop. Moreover, this wrapper library can be used for (safe) IPC between two arbitrary processes. More on this library, its basic principles and how it is used can be found here.
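The lock-step idea can be illustrated with a minimal sketch. It is not the wrapper library's API: `LockStepChannel`, `run_lockstep` and the toy integrator plant are hypothetical names made up for illustration, and two Python threads with a `threading.Condition` stand in for the two processes and the POSIX shared memory, mutex and condition variable. What it shows is only the handshake: each side blocks until the other has written its value, so every control step sees exactly one new measurement, no matter how fast either side runs.

```python
import threading


class LockStepChannel:
    """Hypothetical two-way mailbox guarded by one mutex/condition variable."""

    def __init__(self):
        self._cond = threading.Condition()  # mutex plus condition variable
        self._slots = {"measurement": None, "command": None}

    def send(self, name, value):
        # Write the value under the lock and wake up the waiting side.
        with self._cond:
            self._slots[name] = value
            self._cond.notify_all()

    def receive(self, name):
        # Block until the slot is filled, then consume it.
        with self._cond:
            self._cond.wait_for(lambda: self._slots[name] is not None)
            value, self._slots[name] = self._slots[name], None
            return value


def run_lockstep(steps=5, dt=0.1, gain=-2.0):
    """Run a toy plant and a toy controller in lock-step; return the log."""
    chan = LockStepChannel()
    log = []

    def simulator():  # stands in for the Gazebo side
        x = 1.0  # toy plant state
        for _ in range(steps):
            chan.send("measurement", x)
            u = chan.receive("command")  # wait for the controller
            x = x + dt * u  # simple integrator plant

    def controller():  # stands in for the Simulink side
        for k in range(steps):
            y = chan.receive("measurement")  # wait for the simulator
            u = gain * y
            log.append((k, y, u))
            chan.send("command", u)

    threads = [threading.Thread(target=simulator),
               threading.Thread(target=controller)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return log
```

Because neither side advances before the other has answered, the result is deterministic and independent of wall-clock time, which is the property that allows running faster than real time without losing causality.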

Building the libraries/plugins

After building Gazebo and its requirements, you can build all libraries used for RL/IPC. These can be built by running the src/shm_ipc/install.sh and /src/rl/install.sh.

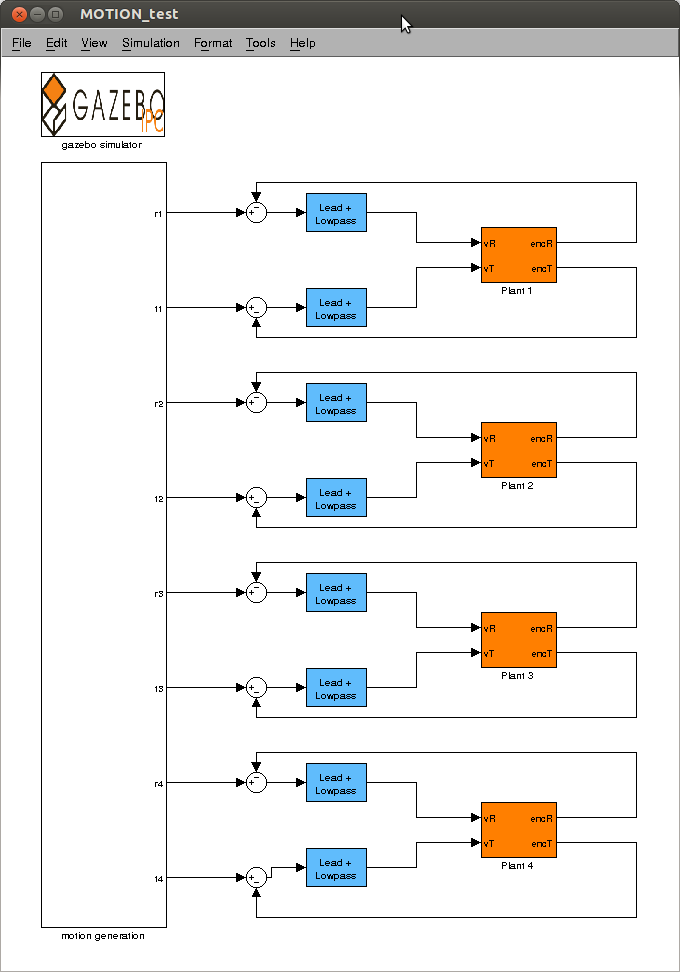

Matlab Simulink

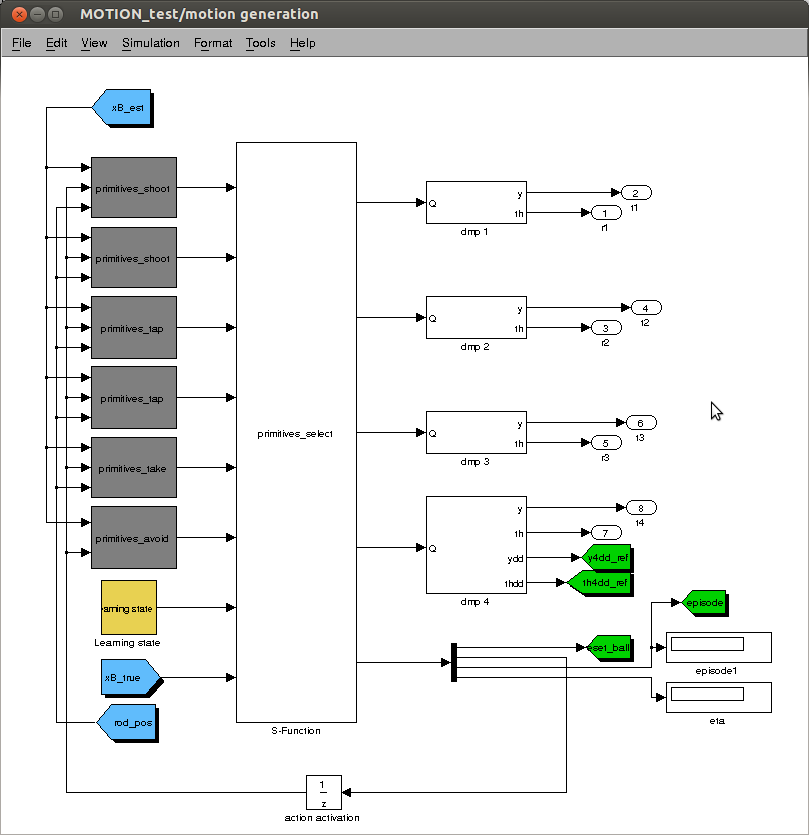

The Simulink side of things can be found in MOTION_test.mdl. It is not run in external mode but in Simulink itself, in the regular way. The main window is shown in the figure; the simulator part is the gazebo sub-system. The motion-generation sub-system contains the reinforcement learning, attractor dynamics and constraints for the actions. To start the simulation, press simulation->start. If you want to view what is going on, open a terminal and type gzclient to start the Gazebo GUI.

The motion-generation sub-system displays the ETA (estimated time of arrival), i.e. how long the simulation will take in minutes, as well as the current episode number.

Reinforcement learning settings

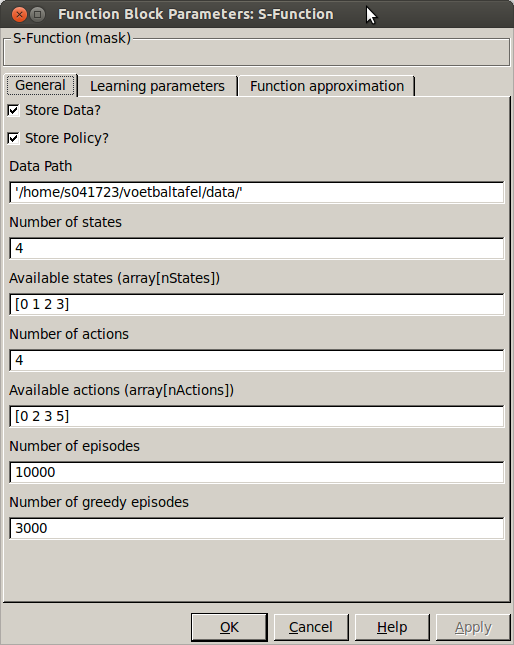

General

Most relevant settings regarding the actions and the reinforcement learning are set in their respective masks. In the mask of primitives_select.cpp you can set all the parameters described in this RL section.

In the tab named general the following settings are available:

- Store Data

- Checkbox to enable the storage of data. This stores the buffers and the performance of the simulation you run.

- Store Policy

- Checkbox to enable storage of the policy. This will store the learned weight vectors [math]\displaystyle{ \theta,~w }[/math], but also other settings such as the node positions (centers), number of states and actions etc.

- Data Path

- Path to the folder in which all data will be stored. A new folder with a timestamped name will be created; the folder name also indicates which RL algorithm was used.

- Number of states

- Number of states used as input to the learning agent

- Available states

- Vector with the indices of the states. To allow quick changes, you can select a few separate states from the input of the Simulink block. For example, if we only want the lateral position and speed of the ball, we set Number of states = 2 and Available states = [1 3], indicating indices 1 and 3 of the learning state. Make sure to adjust the function approximation accordingly.

- Number of actions

- Number of actions available to the learning agent

- Available actions

- Similar to available states: vector with the indices of the actions you want available (0-5).

- Number of samples

- Total number of episodes performed in this simulation before it is stopped. (Make sure the simulation runs long enough to finish all of them.)

- Number of greedy samples

- Number of episodes in which fully greedy behavior is performed ([math]\displaystyle{ \epsilon=0 }[/math]). If this is the same as the number of samples, then only greedy behavior will be performed.
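The state selection and the greedy episodes described above can be sketched as follows. This is an illustration only, not the code in the Simulink block: the function names are hypothetical, the state indices are assumed to be 1-based as in the [1 3] example (pass `base=0` if they turn out to be 0-based like the action indices), and the greedy episodes are assumed to be the last ones of the run, which the settings description does not state.

```python
def select_states(full_state, available_states, base=1):
    """Pick the entries of full_state listed in available_states.

    Assumption: indices are 1-based (base=1), as in the [1 3] example.
    """
    return [full_state[i - base] for i in available_states]


def epsilon_for_episode(episode, n_samples, n_greedy, epsilon):
    """Exploration rate for a 0-based episode number.

    Assumption: the greedy episodes (epsilon = 0) are the last n_greedy
    episodes of the run.
    """
    first_greedy = n_samples - n_greedy
    return 0.0 if episode >= first_greedy else epsilon


# Example: a learning state with four entries, of which two are used.
learning_state = [0.12, -0.40, 0.75, 0.05]
agent_input = select_states(learning_state, [1, 3])  # entries 1 and 3
```

With Number of greedy samples equal to Number of samples, `epsilon_for_episode` returns 0 for every episode, matching the fully greedy case described in the list.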

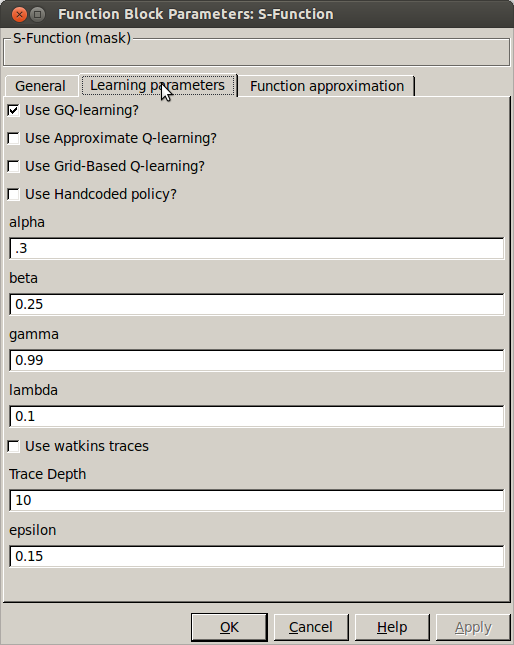

Learning parameters

- Use GQ-learning

- Use greedy-GQ for updating the value function approximation

- Use approximate-Qlearning

- Use approximate Q-learning for updating the value function approximation

- Use grid-based Q-learning

- Use grid-based function approximation. This is no longer available in the latest version; you can still find it in primives_select_old.cpp.

- Use hand-coded policy

- Use a user-defined policy; this code is to be defined in primives_select.cpp. An actual hand-coded policy can be found in primives_select_old.cpp.

- Alpha

- The learning rate [math]\displaystyle{ \alpha }[/math]

- Beta

- The secondary parameter for greedy-GQ [math]\displaystyle{ \beta }[/math]

- Gamma

- The discount factor [math]\displaystyle{ \gamma }[/math]

- Lambda

- Eligibility trace parameter [math]\displaystyle{ \lambda }[/math]

- Use watkins traces

- Use Watkins-style traces: cut off the trace once a non-optimal (exploratory) action is performed

- Trace depth

- Maximum number of steps back over which the trace is updated

- Epsilon

- Exploration rate [math]\displaystyle{ \epsilon }[/math]: the higher it is, the more random actions (not based on value) are performed.
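To show how alpha, gamma, lambda, epsilon and the Watkins option interact, here is a minimal sketch of one approximate Q-learning step with a linear value function and Watkins-style traces. It follows the textbook form of Watkins's Q(lambda) and is not taken from primitives_select.cpp. The function names, the one-weight-vector-per-action layout and the plain Python lists are assumptions made for illustration. The trace depth limit and the second weight vector used by greedy-GQ (step size beta) are left out.

```python
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def epsilon_greedy(q_values, epsilon, rng=random):
    """Return (action, was_greedy) for the given action values."""
    if rng.random() < epsilon:
        action = rng.randrange(len(q_values))  # exploratory action
    else:
        action = max(range(len(q_values)), key=lambda a: q_values[a])
    return action, q_values[action] == max(q_values)


def watkins_q_lambda_step(theta, trace, phi, action, reward, phi_next,
                          next_action_greedy, alpha, gamma, lam):
    """Update theta and trace in place for one transition; return the TD error.

    theta[a] and trace[a] are the weight and trace vectors of action a,
    phi and phi_next are the feature vectors of the current and next state.
    """
    q_next_max = max(dot(w, phi_next) for w in theta)
    delta = reward + gamma * q_next_max - dot(theta[action], phi)
    # Accumulate the trace of the action that was actually taken.
    for i, f in enumerate(phi):
        trace[action][i] += f
    for a in range(len(theta)):
        for i in range(len(phi)):
            theta[a][i] += alpha * delta * trace[a][i]
            # Watkins cut-off: decay after a greedy next action,
            # reset to zero after an exploratory one.
            trace[a][i] = gamma * lam * trace[a][i] if next_action_greedy else 0.0
    return delta
```

In a learning loop, the `was_greedy` flag returned by `epsilon_greedy` for the next action would be passed in as `next_action_greedy`, so that a single exploratory action cuts the trace.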

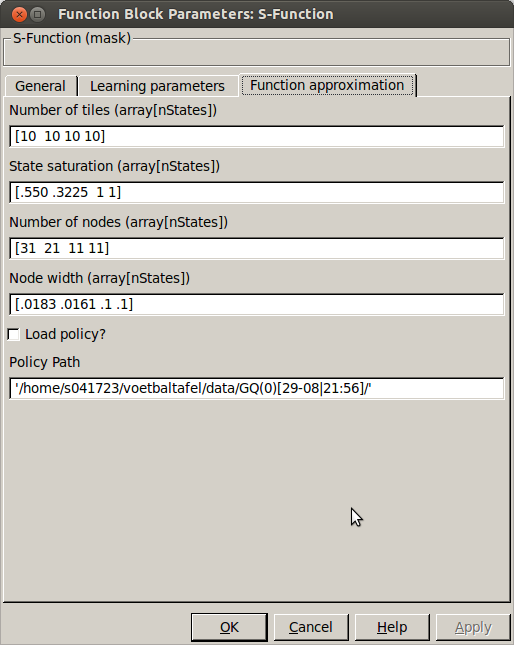

Value function approximation

These settings correspond to what is explained here. Make sure the size of these arrays matches the number of states defined in the general tab. The settings that are not explained there are:

- Load policy?

- Checkbox indicating whether or not to load an old policy

- Policy Path

- Absolute path pointing to the folder in which the policy files can be found.