Embedded Motion Control 2014 Group 4: Difference between revisions

| Line 135: | Line 135: | ||

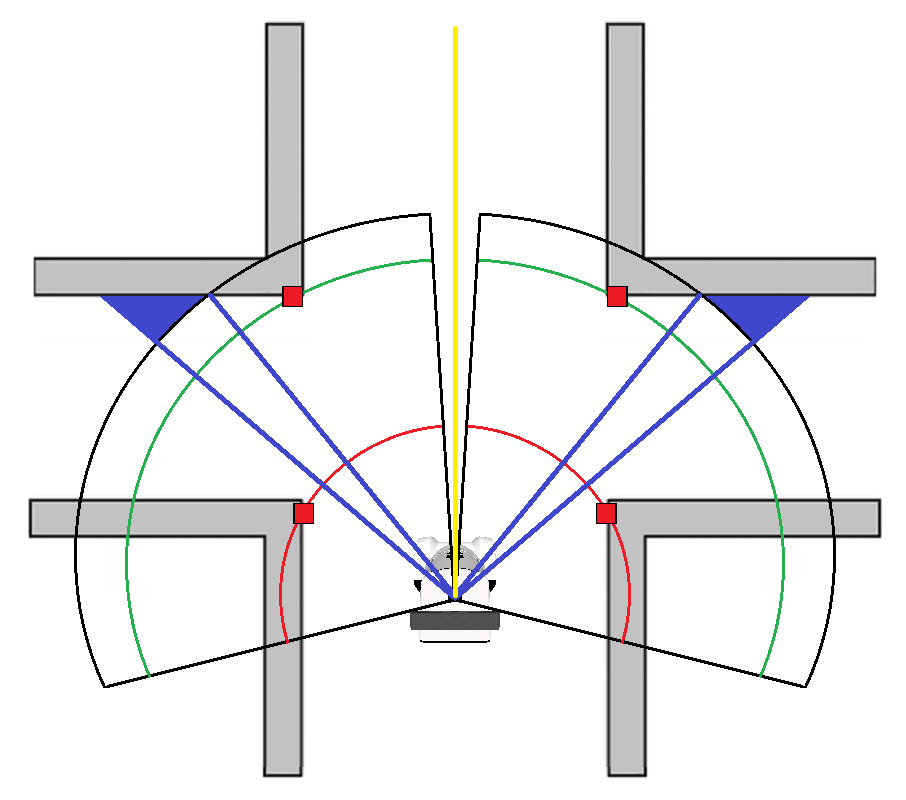

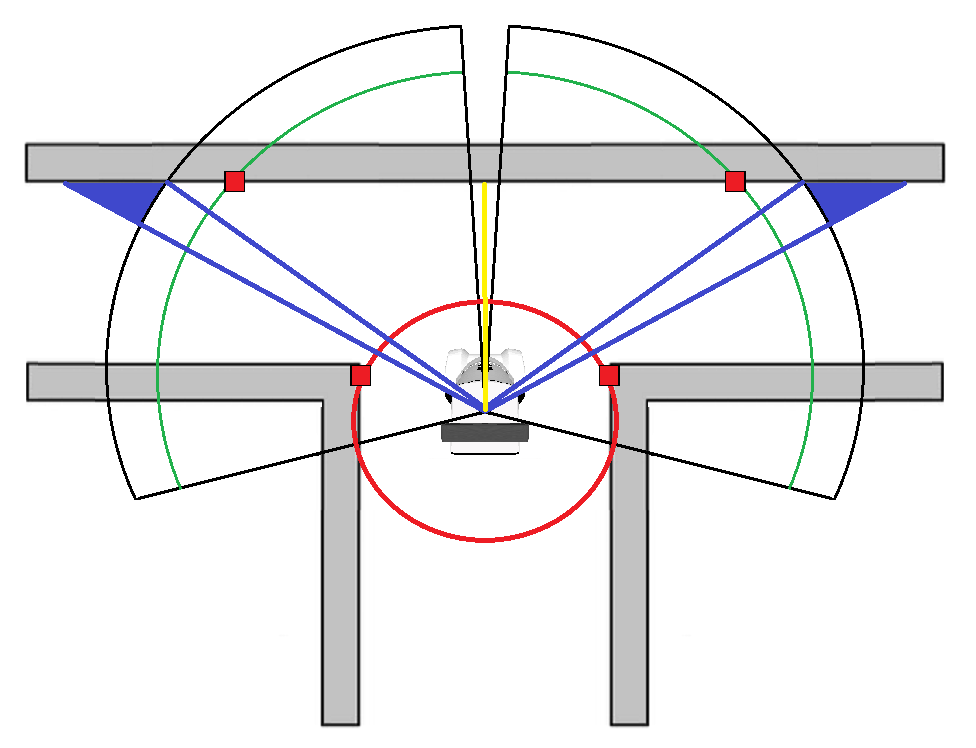

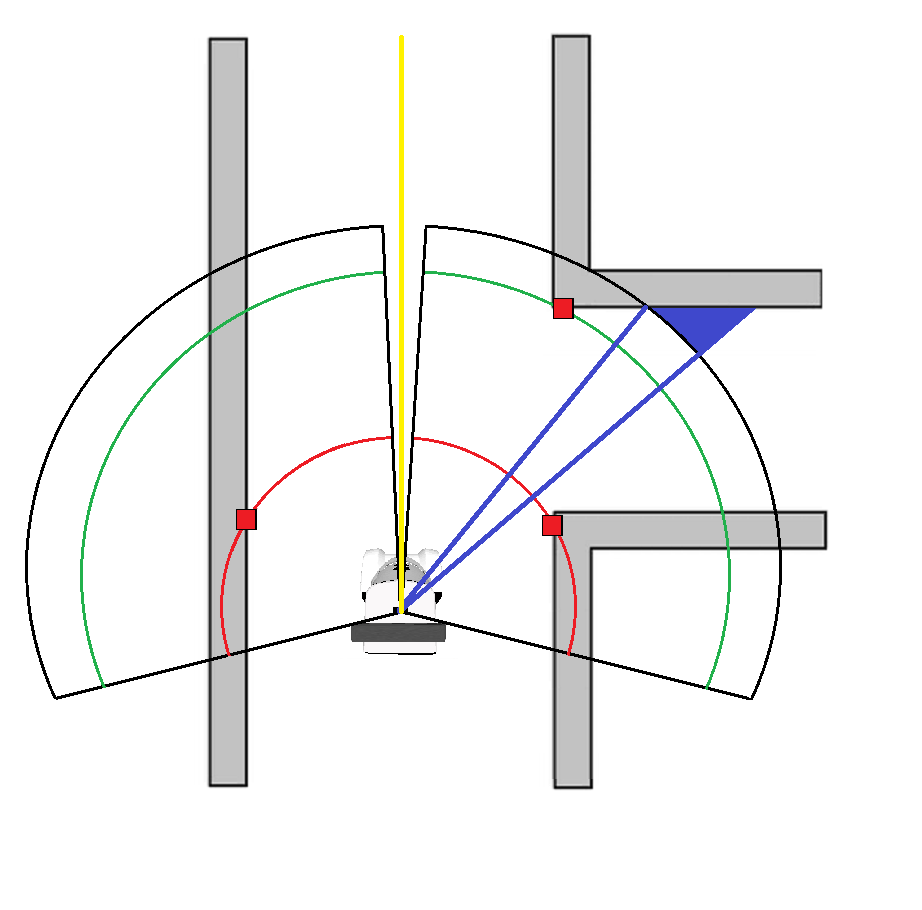

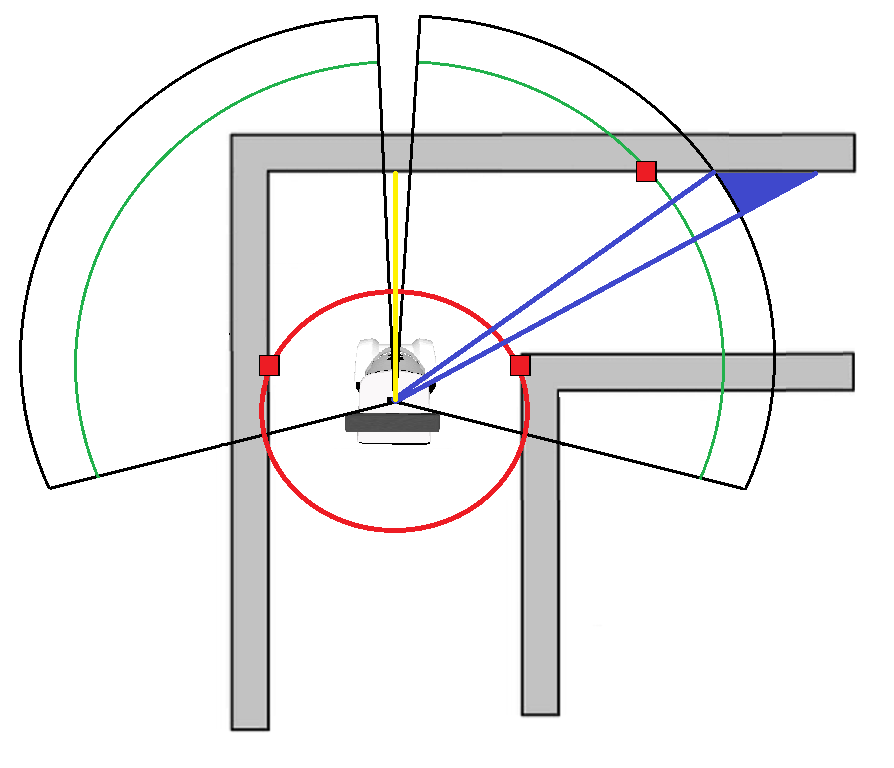

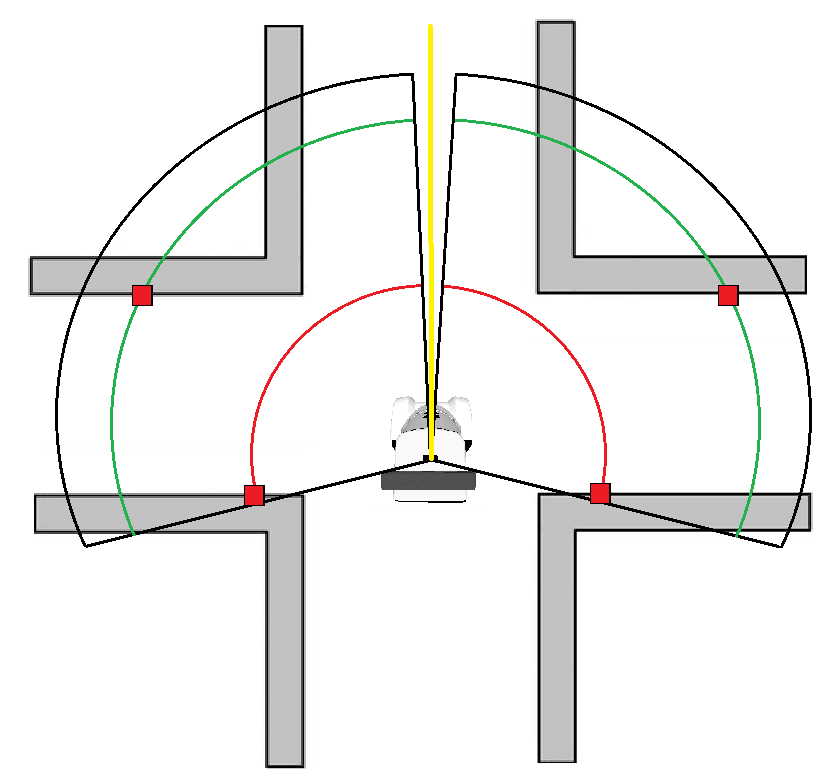

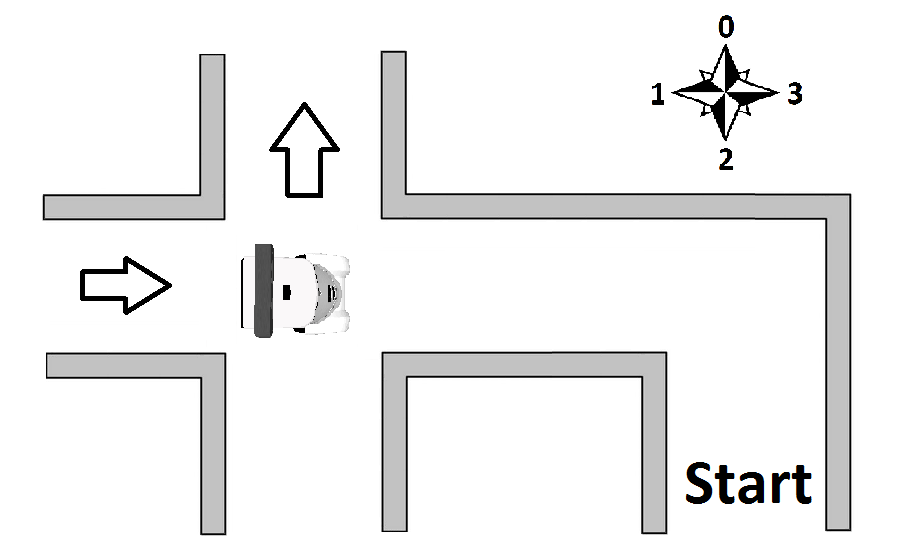

[[File:pico_switchview_final.png|thumb|left|upright=2|Environment detection: Switchview]] | [[File:pico_switchview_final.png|thumb|left|upright=2|Environment detection: Switchview]] | ||

== Maze Tree == | == Maze Tree == | ||

Revision as of 15:57, 1 July 2014

Members of group 4

| Khy, R.N. | Norent |

| Kiriouchine, V.I. | Seva |

| Kuijk, F.J.M. van | Fransis |

| Marx, S. | Sanne |

Media

Find videos of the Pico emc04 tests on our Youtube channel.

See here an animated GIF of simulated Pico, where he takes a corner and stops:

Animation

Planning

Week 1:

-Introduction Lecture

-Determine course goals

Week 2:

-Install Ubuntu, ROS, fix SVN etc.: get everything up and running

-Finish all tutorials: C++, ROS

-Think of first concepts to tackle the corridor problem

-Implement first concepts: wallfinder, angle/distance controller

Week 3:

-Improve angle/distance controller

-Try to implement holonomic concept

-Meet tutor to discuss problems and project progress

-Perform Pico Test

Week 4:

-[Sanne] Place current functions in seperate .cpp files to allow for parallel updating

-[Sanne]Change the sendVelocity function into a twoPointNavigator function which aims to place pico between two lazer scan ranges+angles given as function input.

-[Fransis]Create a corridorFinder function which identifies the two corners of all corridors large enough for pico to pass through. Discard distant corridors and output two lazer scan ranges+angles of the closest found corridor.

-[Seva]Create a function which decides what points are sent to the twoPointNavigator function based on how close pico is to the side corridor found by corridorFinder , allowing pico to seamlessly switch between navigating the straight corridor and turning into the side corridor.

-[Norent]Change the safety radius into a safety box around pico using the dimensions of pico, create an unStuck function which allows pico to safely move away from any obstacle breaching the safety box.

-Implement a finishedCorridor condition which can detect that pico has left the corridor exit and stops all movement.

-[Pico EMC04]Win the corridor competition.

Concepts

wallFinder

Pico should be able to recognize walls. This can be done by using the Laser Range Finder. The data received from the Laser Range Finder must be filtered to get useful data that can be used by our controller.

From this sensor we receive an array with distances, 270 degrees around Pico. This array is split in half to represent vision on the left and right side. In these two arrays we search for the smallest distance that is greater than 0.15 m (a part of the vision is blocked by Pico him/her/it-self). Finally the angle at wich these distances are with repsect to Pico are calculated. This function thus finds two distances and two corresponding angles.

Angle/Distance controller

When Pico navigates through the corridor the distances found by the wallFinder are controlled to be equal. Besides the distances, the angles at which these distances are detected should be controlled to be zero. When the closest distances left and right of pico are equal and the angles zero, Pico is oriented in the middle of the corridor oriented in the longitudinal direction, which is exactly what we want.

Holonomic movement

At the tutor meeting it became clear that Pico is equipped with omni-wheels which means holonomic movement is possible. Currently the Pico in the simulator has differential wheels. With omni-wheels the distance can be controlled independent of the angle.

Cornering

We should think of how Pico can recognize and take corners.

findExit

We should think of how Pico can recognize the exit of the corridor so that it stops moving.

safetyHitbox

TODO

hitbox dimenions (front x side): 2.0e-1 x 1.5e-1.

unstuckSafety

TODO

Implementation

Wallfinder

The wallfinder is called with every lazerscan callback and will return the nearest scanpoint on the left and the nearest scanpoint on the right of pico.

Corridorfinder

The corridorfinder is called with every lazerscan callback and will return the closest corner point of the nearest corridor and the furthest corner point of the closest corridor to pico.

The closest corner is found by looking at the scan data forward of the found wallpoints and determining whether the difference between two subsequent points is large enough. The furtherst corner of the corridor is found by looking forward again past the closest point. When a local minimum is found in the scan distance this is called the furthest corner of the corridor. If the difference between the closest and furthest corner is not large enough for pico to pass through, the corridor is considered a gap.

Masterplanner

The masterplanner determines where to navigate. If the closest corridor corner is very close to one of the points found by the wallfinder, the pico will take the corner using the turnAroundPoint function. If no side corridor is found or the corridor is not close enough, pico will drive forward.

If while taking the corner the closest point on the other side of pico is found at an angle much different from +-90 degrees, the masterplanner knows the pico is mid corner. As soon as the wallfinder again finds the points left and right of pico at the same angle the masterplanner will switch to driving forward.

twoPointNavigator

The twoPointNavigator function will send the velocitys to pico in order to keep pico moving forward between parallel walls. The distance of closest points left and right of pico is controlled to be equal by controlling the linear.y speed. The angle of pico in relation to the walls is controlled by sending an angular.z speed such that the closest point left and right are at and equal angle from the centre of pico.

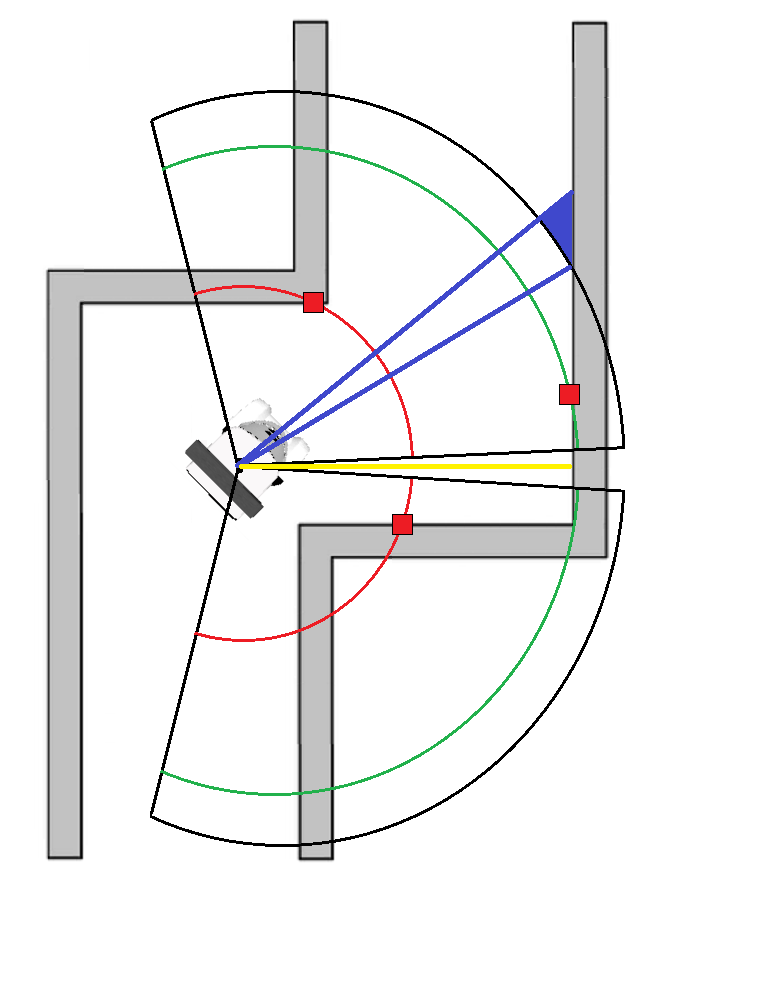

turnAroundPoint

The turnAroundPoint function will send the velocitys to pico in order to keep pico moving forward while keeping a constant distance to one of the wallfinder points, maintaining this point at a +/- 90 degree angle w.r.t. pico depending on what side the side corridor is.

stopFinal

The stopFinal function is called at each lazercallback to see if the pico has exited the maze using two checks. The first check checks whether all lazer range points are larger than a certain distance. This is the minimum distance pico needs to travel in order for it to consider itself outside of the maze. The second check splits up the lazer scan into 10 sectors, calculating the average distance for each sector. The average distance of each sector has to be greater than a certain (larger than the for the first used check) value. This ensures that the pico will stop close to the maze exit when the majority of the surroundings are very far away.

geometryIdentifier

The geometryIdentifier identifies whether pico is close to any corridors, intersections or junctions, it replaces the corridorFinder for the final test.

The steps taken by the geometryIdentifier are given in pseudo code as:

if (found corridor on the left)

- then

- if (found corridor on the right)

- then

- if (left and right corridor are at equal distance to pico

- then

- if (furthest points of both corridors are wide enough to be a corridor)

- then -> Pico has found an intersection

- else -> Pico has found a three way left right junction

- else -> Pico has found two seperate corridors

- else

- if (furthest corridor point is much closer than point in front of pico)

- then -> Pico has has found a three way left forward junction

- else -> Pico has found a corridor to the left

else

- if (found corridor on the right)

- then

- if (furthest corridor point is much closer than point in front of pico)

- then -> Pico has found a three way right forward junction

- else -> Pico has found a corridor to the right

- else -> Pico is in a corridor with no side corridors

Environment Detection

Maze Tree

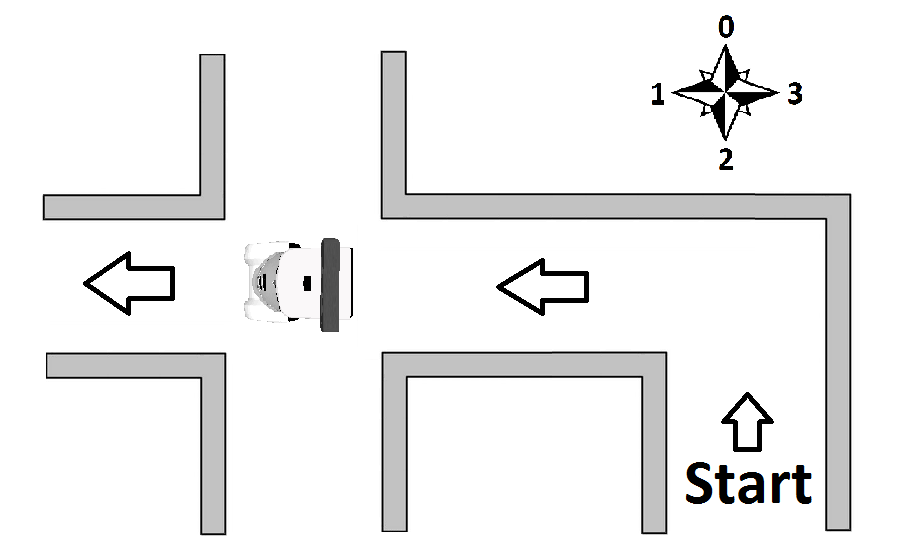

The Maze tree is a self-made set of functions used to track the progress of Pico in exploration and to allow a strategy to be implemented. Each separate junction that Pico encounters is saved as a new entry in an array of maze tree elements. These elements contain an ID, parent ID, child ID’s , orientation with respect to the starting orientation and a set of Booleans indicating whether the 4 cardinal directions around it are explored. The animation below shows how the tree is updated as Pico explores a maze. In it Pico uses the strategy of going forward, then right, then left, with respect to the orientation of the maze tree element Pico is in.

When Pico leaves a tree element through its last unknown direction, that direction is set known in both tree elements it is represented in.

The maze tree decides the direction Pico should go, transforming the cardinal directions into numbers this becomes a simple math problem. Forward becomes 0, left is 1, backwards is 2 and right equals 3. The direction Pico should drive in follows from the difference between the preferred strategy direction and the current orientation of Pico + the orientation of the current maze element. If the resulting direction is backwards it is remapped to be forwards. If an arrow is detected it is immediately set as the strategy direction.

Example: Pico is in a 4 way junction that is completely unexplored; the entrance of the 4 way junction is oriented left with respect to the maze start. Pico is also facing left with respect to the maze start. As the maze is unexplored the strategy is to first go forward.

The navigation direction the follows from:

The backwards direction is remapped to forward and Pico knows from this that it should move straight through the 4 way junction. The added 8 is so no negative directions can be the result.

After exploring the forward direction Pico returns to the 4 way junction, Pico is now facing right with relation to the start and wishes to explore the right corridor of the 4 way junction (the corridor facing up in the image) according to the strategy (Forward->Right->Left)

Pico again calculates where to go in the same manner:

Pico therefor knows that it should turn left in order to explore the right corridor in the 4 way junction.

Arrow recognition

Orientation of image recognition techniques (as discussed in lectures)

Three available tools for image recognition:

- Floodfill

- Line detection

- Corner detection

Our initial ideas:

- Line detection: use the position and derivative of the lines to recognize arrow head, tail and direction

- Corner detection: use the point cloud to identify the arrow tail and determine direction by counting nearby points left and right of the arrow tail.

- Floodfill: use enclosed areas on binary image to find arrow candidates, use the area difference left and right to the center point of a valid arrow candidate to determine arrow direction

Two of the initial ideas where discarded:

- Corner detection: The Harris corner detection was thought to be too complicated to implement robustly.

- Floodfill: Does not account for specific geometry, only area and would not be robust enough.

The idea of the line detection algorithm was thought of as the best candidate for a working and robust method of detecting arrows.

Line detection

The main algorithm consists of three main steps:

- Connect the lines calculated by the Hough transform function in line with each other using their relative proximity and derivative, this new and the original line spaces increase the arrow detection rate.

- Use the new-line space to see if there exist lines that can be a tail of the arrow and a head of an arrow and how many valid combinations of tail and head can be found.

- Use most found arrow combination found in part two and take the mode of the past 20 frames as the final output of this arrow detection algorithm for the current frame.

Implementation structure:

The line three main steps of the detection algorithm are split into the following functions:

- Image manipulation and line recognition

- Convert RGB image to HSV, input: RGB image, output: HSV image

- Filter image for red colours in two domains, input: HSV image, output: two red-filtered binary images

- Add the two red-filtered binary images into one, input: one red-filtered binary images, output: one red-filtered binary image

- Blur the image, input: one red-filtered binary image ,output: blurred binary image

- Canny edge detection, input: blurred binary image, output: edge points on new image

- Probabilistic Hough line detection, input: edge points on new image, output: vector of line coordinates

- Arrow Detection

- Line joining, input: vector of original line coordinates, output: combined vector of original line coordinates and extended line coordinates

- Lines pairing for head and tail, input: combined vector line coordinates, output:

- Arrow recognition: head and tail pairing

- Verification by checking the surrounding area of the arrow for other lines,

- Time mode: Take the most common arrow direction

The output of the arrow recognition is as given in table below.

| message | output |

|---|---|

| nothing | 0 |

| left | 1 |

| right | 2 |

First the RGB image is transformed to HSV and afterwards red-filtered to a binary image. Note the following:

- imshow of RGB image looks the way it should.

- imshow of HSV image does not, this is because imshow is not meant for HSV.

- red-filtering from hue 170 to 10 still needs to be done right. currently 170 to 180 is used.

Step 2 can be broken up into several steps:

- Find Head line pair (two lines intersecting at angle, i.e. between 15 and 45 degrees, when lines are of infinite length )

- Find Tail line pair (two lines approximately (i.e.~5 degrees difference) parallel to each other with a distance , i.e. between 10 and 100 pixels)

- If there exists a Head-Tail line pair combination, where conditions below are fully satisfied, then output direction arrow (1 or 2). Else output no arrow (0).

- Intersection of Head line pair is at the outer left or outer right of head and tail combination.

- the outer ends of head line pair are in proximity of one of either sides of tail line pair.

- one of two sides of tail line pair is at the outer left or outer right of type 1 and 2 combination.

Angle of line:

atan2( y2-y1, x2-x1 ) * 180/CV_PI;

If above 360 degrees, subtract 360 degrees.

If below 0 degrees, add 360 degrees.

Properties of line pairing with L number of lines:

- when using 2 for loops:

- lines cannot be paired with itself

- (i1,j1) == (j2,i2) means the pair was already found

- As a result, use for i,j with (i, 1:L), (j, i+1:L) where L number of lines.

- Number of possible pairings: L²/2-L

- if head pair found, then pair cannot be tail. // mutually exlusive

- Not sure if worth implementing

- Not sure if worth implementing

Properties line pair type 1 with lines l1, l2:

- [ 15 <approx< angle( l1 ) <approx< 45 ] V [ [ 135 <approx< angle( l1 ) <approx< 165 ] V [ 195 <approx< angle( l1 ) <approx< 225 ] V [ [ 315 <approx< angle( l1 ) <approx< 345 ]

- anglediff( l2, l1 ) = angle(l2) - angle(l1); 45 <= anglediff( l2, l1 ) <= 75

- x21 - x11 approx 0 // only if perfect line detection of head

- x22 - x12 approx 0 // only if perfect line detection of head

- if pointing left, y21 - y11 approx 0; // and also y22 - y12 Napprox 0;

- if pointing right, y22 - y12 approx 0; // and also y21 - y11 Napprox 0;

- else not left, right: output 0

Properties line pair type 2 with lines l1, l2:

- angle( l1 ) approx 0;

- anglediff( l2, l1 ) approx 0;

- x21 - x11 approx 0 // only if perfect line detection of tail

- x22 - x12 approx 0 // only if perfect line detection of tail

- abs( y21 - y11 ) > 10 px; //should also be the case for all other y

Properties head-tail type1-2 pair combination:

- if head left, type 1 left, type 2 right. // consistency

- if head right, type 2 left, type 1 right. // consistency

- ytype12 < ytype21,ytype22 < ytype12 // head is wider than tail

For step 2 the following assumptions were made:

- The arrows will always be pointing to left or right with a maximum difference of 20 degrees.

- The maze will not contain too many objects that (that can be interpreted as arrows).

Rejection box around arrow assumption:

- Arrow is printed on a piece of non-red paper.

- This paper with arrow is taped onto the non-red walls.

These assumptions can be used for identification, mainly for rejecting disturbances:

- Around the arrow lines (head and tail pairs) no other red edges/lines should be present.

- For the topside of the rejection box according to the maximum length of either of these ratios:

- 1/3.5 times length of a line from the head pair (10.5 to 3 safe ratio).

- 1/4.5 times length of a line from the tail pair (13 to 3 safe ratio).

- For the other sides (left right bottom) of the rejection box, the maximum length can be chosen very large. We have chosen for a conservative ratio of 1.

Log

Week 2:

Monday 28-04-2014: Group meeting SEL/SOL

Present: Sanne, Seva, Norent, Fransis

- Installed all software on laptops

- Got the svn up and running

- Started with tutorials and discussed problems

Wednesday 30-04-2014: Meeting SEL/SOL

Present: Sanne, Fransis

- Finished tutorials

Friday 02-05-2014: Meeting OGO 2

Present: Sanne, Seva, Norent, Fransis

- Created concepts: wallFinder, angle/distance controller, exitFinder, Cornering

- Implemented the following concepts: wallFinder, angle/distance controller:

- Pico now can detect walls and navigate through the corridor, not controlled optimal yet

Week 3:

Wednesday 07-05-2014: Group meeting OGO 18

Present: Sanne, Seva, Norent, Fransis

- Held first tutor meeting with Sjoerd

- Created holonomic concept

- Prepared for real world Pico test

Thursday 08-05-2014: First experiment with Pico

Present: Sanne, Seva, Norent, Fransis

- Preparation for the experiment by reviewing the tutorials and our work

- Tested code on Pico

- Saved logfiles

- Observed Pico's behaviour as expected:

- Safety precautions work

- Speed limits work

- Robust for gaps in wall

- Robust for small corridors (width: 8e-1 [m])

- Robust for non-straight corridors

- Unable to make the turn for the corridor contest

- Safety precautions work

- Measured Pico rectangular hitbox:

- front: 1.5e-1 [m]

- side: 2.0e-1 [m]

- Made videos of experiments (uploaded soon™)

- front: 1.5e-1 [m]

missing a part

Thursday 28-05-2014: Work session

Present: Sanne, Seva, Norent, Fransis

- Worked in seperate groups on decision making and arrow recognition

- Meeting with Sjoerd

Time Table

Overview of time spent per group member Lectures Group meetings Mastering ROS and C++ Preparing midterm assignment Preparing final assignment Wiki progress report Other activities Week_1_Norent 2 Week_1_Seva 2 6 Week_1_Fransis Week_1_Sanne 2 2 Week_1_Jorrit Week_2_Norent 2 6 Week_2_Seva 2 6 2 Week_2_Fransis 2 4 6 Week_2_Sanne 2 6 4 0.5 Week_2_Jorrit Week_3_Norent 1 3 3 1 Week_3_Seva 2 12 Week_3_Fransis 2 8 Week_3_Sanne 2 12 Week_3_Jorrit Week_4_Norent 13 2 Week_4_Seva 15 2 Week_4_Fransis 15 Week_4_Sanne 15 2 Week_4_Jorrit Week_5_Norent 8 Week_5_Seva Week_5_Fransis 20 Week_5_Sanne Week_5_Jorrit Week_6_Norent Week_6_Seva Week_6_Fransis 20 Week_6_Sanne Week_6_Jorrit Week_7_Norent Week_7_Seva Week_7_Fransis 18 Week_7_Sanne Week_7_Jorrit Week_8_Norent Week_8_Seva Week_8_Fransis 24 Week_8_Sanne Week_8_Jorrit Week_9_Norent Week_9_Seva Week_9_Fransis 24 Week_9_Sanne Week_9_Jorrit Week_10_Norent Week_10_Seva Week_10_Fransis 20 Week_10_Sanne Week_10_Jorrit