PRE2019 4 Group6

Group Members

| Name | Student ID | Study | Email |
|---|---|---|---|
| Coen Aarts | 0963485 | Computer Science | c.p.a.aarts@student.tue.nl |
| Max van IJsseldijk | 1325930 | Mechanical Engineering | m.j.c.b.v.ijsseldijk@student.tue.nl |
| Rick Mannien | 1014475 | Electrical Engineering | r.mannien@student.tue.nl |
| Venislav Varbanov | 1284401 | Computer Science | v.varbanov@student.tue.nl |

Introduction

For the past few decades, the percentage of elderly people in the population has been rapidly increasing. One of the contributing factors is the advancement of the medical field, which allows people to live longer. The percentage of the world population aged above 60 is expected to roughly double in the coming 30 years [1]. This growth will bring many challenges that need to be overcome. Simple solutions are already being implemented today, e.g. people generally work to an older age before retiring.

Problem statement

Currently, the growing number of elderly people in care-homes already reduces the time caretakers have available for each patient. Most of their time is spent on vital tasks such as administering medicine, leaving less room for social tasks such as having a conversation. Ultimately, patients in care-homes will spend more hours of the day alone. The prevalence of depressive symptoms increases with age [2], and the feeling of loneliness is one of the three major factors that lead to depression, suicide and suicide attempts [3] [4]. Together, these observations paint a rather grim picture for the elderly in care-homes.

A simple solution would be to employ enough caretakers to combat the staff shortage. Unfortunately, the shortage has been growing for the past years. A solution that aids caretakers in any way, shape, or form is therefore vital for the well-being of the elderly. An ideal solution would be to augment care-home staff with tireless robots capable of doing anything a human caretaker can. However, since robots are not likely to be that advanced any time soon, one could develop a less sophisticated robot that takes over some of the caretakers' workload rather than all of it.

New advances in Artificial Intelligence (AI) research might contribute to a solution here, as AI can be used to perform complex, human-like tasks. This enables the creation of a robot that monitors the mental state of a human being and uses this information to try to improve that state if it drops below nominal levels. There are different kinds of interactions the robot could perform to achieve this goal. For instance, the robot could have a simple conversation with the person or route a call to relatives or friends. For more complex interactions and conversations, the robot needs to understand more about the emotional state of the person. If the robot is able to predict the emotional state of the person reliably, the quality of the interactions may improve greatly. Poor predictions of the person's mental state will likely cause more frustration or other negative feelings, which is highly undesirable.

Objective

The proposal for this project is to use emotion detection in real-time in order to predict how the person is feeling. Using this information the AI should be able to find appropriate solutions to help the person. These readings will not be perfect as it is a fairly hard task to predict the emotional state of a human being, but promising results can be seen when neural networks are used to perform this task [5].

Using a neural network to predict the emotional state of a human is not a new invention. However, using this information in a larger system that also includes other components, such as a chatbot, is less researched. The research done during this project might therefore result in new findings that can be used in the future. However, the results could also corroborate similar research with similar results.

The robot should provide the following functions:

- Human emotional detection software

- Chatbot function

- Feedback system that tracks the effect of the robot

As for its name, the robot will from now on be referred to as R.E.D.C.A. (Robotic Emotion Detection Chatbot Assistant).

State of the Art

This is most likely not the first research on AI technology for emotion recognition and chatbot interaction. Before proceeding with our own findings and development, it is useful to look back at earlier papers and developments concerning the combination of components we wish to implement. Below, the state of the art of facial recognition and chatbot interaction is presented, with a summary of each work. Additionally, their limitations are assessed so that we can understand their design and work around them.

Previous Work

Facial Recognition

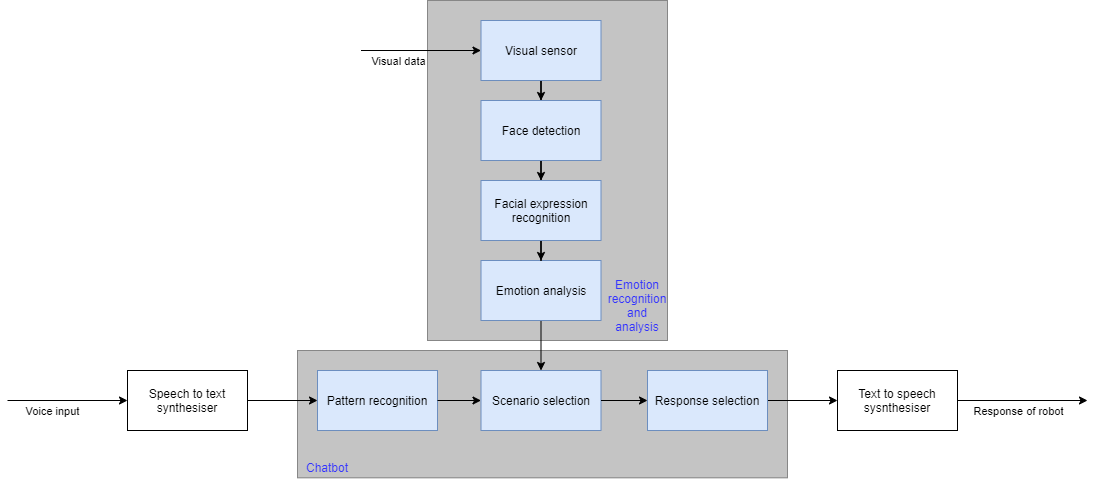

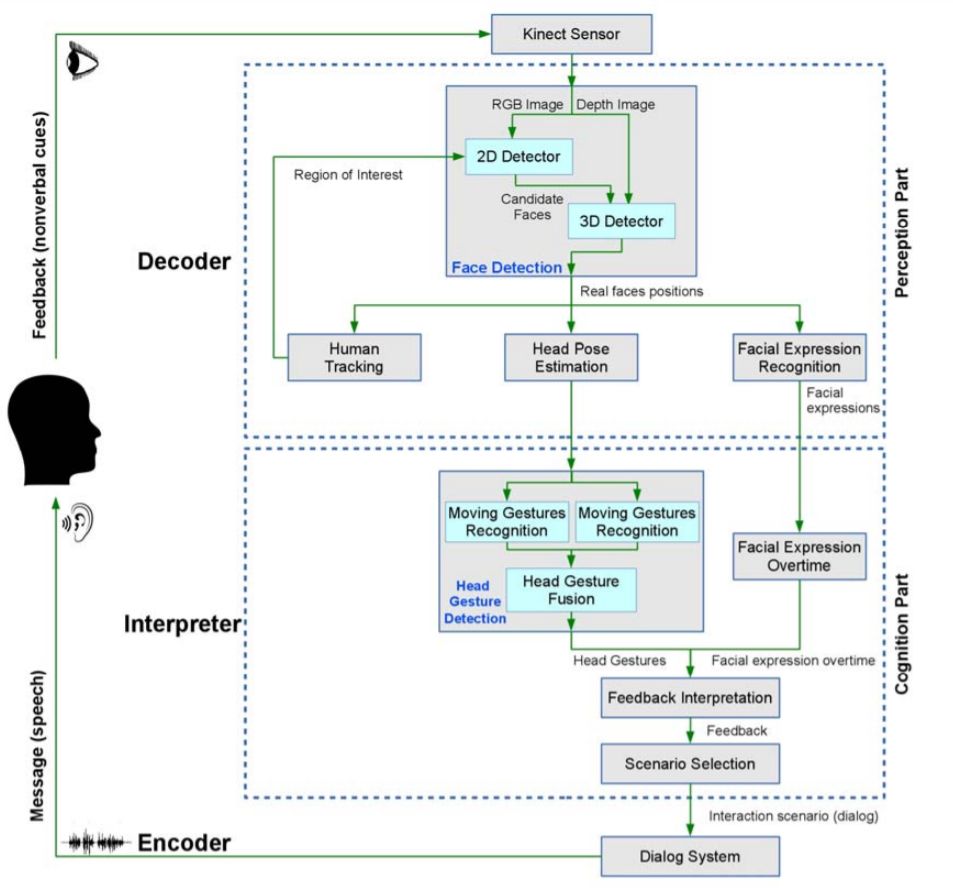

Facial recognition software with emotion detection has been built before. One method that could be employed for facial recognition is described by the following block diagram:

This setup was proposed for a robot that interacts with a person based on the person's emotion. However, the verbal feedback was not implemented.

Chatbot

% TODO

Care Robots

% TODO

Limitations and issues

% TODO

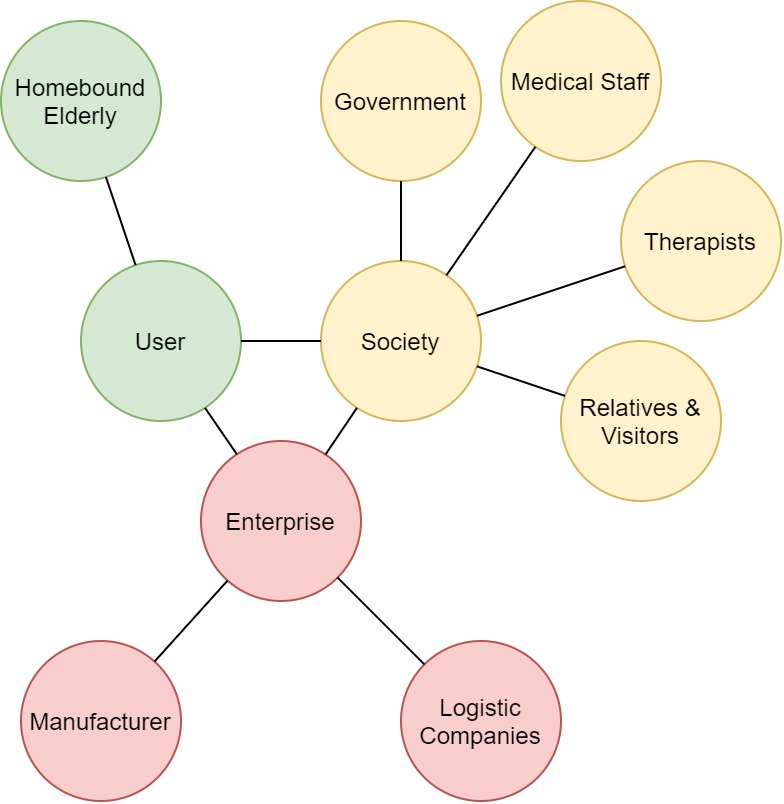

USE Aspects

Researching and developing facial recognition robots requires taking into account which stakeholders are involved in the process. These stakeholders will interact with, create or regulate the robot and may affect its design and requirements. The user, society and enterprise stakeholders are put into perspective below.

Users

Lonely elderly people are the main users of the robot. They are found via a government-funded institute where people can apply for such a robot. These applications can be filled in by anyone (the elderly themselves, friends, psychiatrists, family, caretakers), provided the elderly person consents. In cases where the health of the elderly person is in danger due to illness (e.g. final-stage dementia), the consent of the elderly person is not necessary if the application is filled in by doctors or psychiatrists. If applicable, a caretaker or employee of the institute will visit the elderly person to check whether the care robot is really necessary or whether a different solution can be found. If the robot is deemed appropriate, the elderly person will be assigned one. They will interact with the robot on a daily basis by talking to it, and the robot will build an emotional profile of the person, which it uses to help the person through the day. When the robot detects certain negative emotions (e.g. sadness or anger), it can ask whether it can help with various actions, such as calling family, friends or real caretakers, or having a simple conversation with the person.

Society

Society consists of three main stakeholders: the government, medical assistance and visitors. The government is the primary funder of an institute that regulates the distribution of the emotion-detecting robots. This set-up makes the distribution easier to regulate, which matters because real-time emotion detection is a considerable intrusion on privacy. Furthermore, the government is accountable for making laws that regulate what the robots may do, and how and what data can be sent to family members or various third parties. Secondly, the robots may deliver feedback to hospitals or therapists in case of severe depression or other negative symptoms that cannot simply be solved by a simple conversation. The elderly person, for whom autonomy remains the primary value, must always give consent for sharing data or calling certain people. For people with severe illnesses, this can be overruled by doctors or psychiatrists to force the person to get help in case of emergencies. Finally, any visiting individual may indirectly be exposed to the robot. To ensure that their emotions are not measured against their will and their privacy is not compromised, laws and regulations must be set up.

Enterprise

Robots must be developed, created and dispatched to the elderly. The relevant enterprise stakeholders, in this case, are the developing companies, the government, the hospitals and therapists to ensure logistic and administrative validity.

Requirement Analysis

The robot and its design must follow several standards to meet the user requirements.

Regarding Functionality:

The robot shall have mobility: it shall either be lightweight and portable, or have its own wheels or legs to traverse rooms with.

The robot shall resemble a companion without appearing uncanny, so that the user is not discouraged from participating.

The robot shall have audio and video recording sensors.

The robot shall be able to distinguish the primary user's face and voice from external noise.

The robot shall have a speech bank of the most commonly used languages.

One robot shall only be assigned to one designated user.

The user shall interact with the robot by speech or computer application.

The robot shall refrain from taking autonomy away from the primary user, unless an authorised person allows this.

The robot shall aim to recharge itself when the battery charge is under 10%.

The robot shall remain operational for up to twenty years.

Regarding privacy:

The robot shall restrict sharing of the data gathered from the user.

The robot shall not record or store data of the faces of non-designated users.

At the end of the robot's service, the user is asked whether the company is allowed to use their data for improving the robot design and learning capabilities. If they accept, the robot will be recollected by the company for research, its memory wiped, and redistributed to a new user.

By default, the robot shall wipe its memory and learning data upon recollection.

Approach

This project has multiple problems that need to be solved in order to create a system or robot that is able to combat the emotional problems the elderly are facing. To categorize them, the problems are split into three main parts:

Technical

The main technical problem for our robot is to reliably read the emotional state of another person and to process that data. After processing, the robot should be able to choose an appropriate action from a set of different actions.

Social / Emotional

The robot should be able to act appropriately, so research is needed into which types of actions the robot can perform to get positive results. For example, the robot could have a simple conversation with the person or start playing an audiobook in order to keep the person active during the day.

Physical

What type of physical presence is optimal for the robot? Is a more conventional robot with a somewhat humanoid look needed? Or does a system that interacts through speakers and screens distributed over the room get better results? Maybe a combination of both is best.

The main focus of this project will be the technical problem stated above. However, for a more complete use case, the other subjects should be researched as well.

Papers on Emotions, Creating the AI and creating the Chatbot

Framework

Emotion recognition design

Face detection

Emotion analysis

Chatbot design

Once the facial data is obtained from the algorithm, the robot can use this information to better help the elderly fulfil their needs. What this chatbot will look like is described in the following section.

Why facial emotion recognition

For the chatbot design, there are many possibilities to use different sensors to gather extra data. However, more data does not equal a better system. It is important to have a good idea of what each piece of data will be used for. For example, it is possible to measure the heart rate of the elderly person or track their eye movements, but what would it add? These are the important questions to ask when building such a chatbot. For our chatbot design, we chose to use emotion recognition based on facial expressions to enhance the chatbot. The main reason is that experiments have shown that an empathic computer agent can promote a more positive perception of the interaction.[7] For example, Martinovski and Traum demonstrated that many errors can be prevented if the machine is able to recognize the emotional state of the user and react sensitively to it.[7] Knowing the state of a person lets the chatbot adapt to it and avoid annoying the person, e.g. by not pushing to keep talking when the person is clearly annoyed by the robot. Such pushing can break down the conversation and leave the person disappointed. If the chatbot detects that its queries make the person's valence more negative, it can try to change the way it approaches the person.

Other studies propose that chatbots should have their own emotional model, as humans themselves are emotional creatures. Research by Lagattuta and Wellman [8] on the connection between a person's current feelings and the past confirmed that past experiences may influence emotions in certain situations. For example, a person who experienced a negative event in the past may experience sad emotions while encountering a positive event in the present. This process is known as episodic memory formation. In generating a response, humans use these mappings. To create such mappings and generate responses, the robot’s memory should consist of the following components [8]:

- Episodic Memory - Personal experiences

- Semantic Memory - General factual information

- Procedural Memory - Task performing procedures

Of these three components, the emotional content is closely associated with episodic memory (long-term memory). The personal experience can be that of the robot or of any user with whom the robot is associated. For the robot to speak successfully to the user, it should have its own episodic memory as well as a memory of its user. The study proposes that robots develop their own emotional memories to enhance response generation by:

- Identifying the user’s current emotional state.

- Identifying perception for the current topic.

- Identifying the overall perception of the user regarding the topic.

The chatbot uses two of these techniques. When the robot is operational, it not only continuously monitors the current state of the person but it also provides options to sense how the user feels about a subject.

What is Emotion

Before discussing what will be done with the recognized emotions, we briefly discuss what exactly emotions are and how they can be influenced. Everyone has some firsthand knowledge of emotion. However, precisely defining what it is turns out to be surprisingly difficult. The main problem is that "emotion" refers to a wide variety of responses. It can, for example, refer to the enjoyment of a nice quiet walk, guilt over not buying a certain stock, or sadness while watching a movie. These emotions differ in intensity, valence (positivity-negativity), duration and type (primary or secondary). Primary emotions are initial reactions, and secondary emotions are emotional reactions to an emotional reaction. To pin down the construct of emotion, researchers typically characterize several features of a prototypical emotional response. Such a response has typical features, which may not be present in every emotional reaction. [9]

One feature of emotion is its trigger. This is a psychologically relevant situation, either external (e.g. seeing a sad movie) or internal (e.g. being scared of an upcoming situation).

The second feature of emotion is attention. This means that the situation has to be attended to in order for an emotion to occur.

The third feature is appraisal. Here, situations are appraised for their effect on one's currently active goals. Such goals are based on values, cultural milieu, current situational features, societal norms, developmental stage of life and personality.[10] This means that people can react differently to the same situation because their goals differ.

Once the situation has been attended to and appraised against the goals, an emotional response results, which is the fourth feature. This response can come in three forms: experiential, behavioural, and central and peripheral physiological reactions. The experiential reaction is mostly described as "feeling", but this is not equivalent to emotion. Emotions can also influence the behaviour of a person. Think of smiling or eye-widening. Finally, emotions can also make us do something (e.g. fleeing, laughing). The final feature of emotion is its malleability. This means that once the emotional response is initiated, its precise path is not predefined and can be changed by external inputs. This is the most crucial feature, as it means that the emotions of an agent can possibly be regulated. These five features together form a sequence in which a response to a situation is generated, and this response in turn influences the situation again. [9]

Regulating Emotion

Now that it is known how emotions can be described, it is important to know how they can be manipulated so that an agent will feel less bad. As stated before, emotions can be manipulated. This can be done intrinsically or extrinsically. For this research the extrinsic part is especially important, as this is where the chatbot can influence the person's emotional state. There are generally five families of emotion regulation strategies, each of which is described below.

- Situation selection: choosing whether or not to enter potentially emotion-eliciting situations.

- Situation modification: changing the current situation to modify its emotional impact.

- Attentional deployment: choosing which aspects of a situation to focus on.

- Cognitive change: changing the way one constructs the meaning of the situation.

- Response modulation: influencing the emotional response once emotions have been elicited.

The goal of the robot is then to regulate the emotions of the elderly person in such a way that they are more in line with the goals of the elderly person themselves. To do this, the robot needs to know whether the situation is controllable or not. For example, when a person is feeling a little sad, the robot can try to talk to the person to change their feeling into a more positive one. However, when the situation is not controllable, the robot should not try to change the emotion of the elderly person, but help them accept what is happening. For example, when the person's house cat has just died, this can have a huge impact on how the person is feeling. Trying to make them happy will not work, as the situation is uncontrollable. In this case, the robot can only try to reduce the duration and intensity of the negative emotion by comforting them.

It is of course not possible to know the exact long-term goals of the elderly person. It is therefore important that the robot can adapt how it reacts based on the person's long-term goals. Such adaptation is not possible in this project due to time and resource constraints. [9]

Needs and values of the elderly

The most important task of the robot is to support and help the elderly person as much as it can. This has to be done correctly, however, as ethical boundaries can easily be crossed. The most important ethical value of an elderly person is their autonomy: the power to make one's own choices. [11] For most elderly people this value has to be respected more than anything. It is therefore vital that in the chatbot design the elderly person is not forced to do anything. The robot can merely ask. Problems can still arise if the person asks the robot to do something that would hurt them. However, in this design the robot is not able to do anything physical that could hurt or help the person. Its most important task is to look after the mental health of the person. A robot with a comparable purpose is PARO, a robotic seal that helps elderly people with dementia feel less lonely. The problem with that design is that it cannot read the person's emotion: it is treated as a hugging robot, which makes sensing the head very difficult. Therefore a different robot design is made for this project, which is described in the robot design section.

Chatbot implementation

Robot user interaction

In this section, the interaction between the elderly person and the robot is described in detail, so that it is clear what the robot needs to do once it has the valence and arousal information from the emotion recognition. As described in the robot design section, the robot will be around 1 meter high, with the camera module mounted on top to get the best view of the person's head. The robot will address the user through a text-to-speech module, as a voice conveys the robot's message better than just displaying it. The user can interact with the robot by speaking to it. A speech-to-text module converts this speech into text input that the robot can use. The main goal of the robot in these interactions is to make sure that the emotions the person is feeling are in line with their long-term goals. It does this by making smart response decisions. These decisions are also based on the arousal of the emotion, as high-arousal emotions (e.g. anger) may also cause the user to deviate from their long-term goal. As stated before, implementing individual long-term goals is not feasible for this project, but this may be researched in further projects. Instead, the main focus will be on the following long-term goal:

'Have positive valence'

This goal is chosen because everyone wants to feel happy and live healthily, regardless of age or ethnicity. The robot will therefore try to keep the person on this long-term goal as much as it can by saying or doing the right things. It will do this according to the emotion regulation methods described in the Regulating Emotion subsection. These techniques are the core of how the chatbot works, as they are also used by humans to regulate their own emotions. Not all the described methods are applicable to the robot, due to its limited intelligence. Situation selection, for example, is difficult as it requires knowledge of the future. Response modulation is also difficult, as it has more to do with the internal state of the user. Finally, attentional deployment would require the robot to understand many different situations and the positive aspects of these situations, which is not possible in this project. However, situation modification and cognitive change are feasible for the robot. How they are implemented is explained in the next subsections.

Situation modification

The user can end up in many different situations that may divert them from their long-term goal. The robot should try to prevent such situations as much as it can and instead steer the user towards a good situation. More concretely, the goal of the user is to have a positive valence. If the emotion recognition detects that the person has a small negative valence, the robot may try a few different things. It can ask the user: "Would you like to do something?" The user can answer as they like, and when the user accepts, the robot can suggest a few things it can do to make the person happier, such as calling family or friends or having a simple conversation about a different subject.

Cognitive Change

This technique tries to modify how the user constructs the meaning of a specific situation. It is most applicable in highly aroused situations, because these mostly involve uncontrollable emotions. This uncontrollability means that the robot should try to reduce the intensity and duration of the emotion through cognitive change. For example, during a state of very high arousal the robot should try to comfort the person by asking questions and listening, in order to change how the user constructs this bad emotion into a less intense emotion with higher valence.
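As a rough illustration of how the two usable strategies could be selected, the sketch below picks one from a single valence/arousal reading. The value range of [-1, 1] for both axes, the `high_arousal` threshold and all names are assumptions made for this example and are not taken from the project code.

```python
from enum import Enum


class Strategy(Enum):
    """Regulation strategies the robot can apply (hypothetical names)."""
    NONE = "none"
    SITUATION_MODIFICATION = "situation modification"
    COGNITIVE_CHANGE = "cognitive change"


def choose_strategy(valence: float, arousal: float,
                    high_arousal: float = 0.5) -> Strategy:
    """Pick a strategy for one reading; both inputs are assumed to lie in [-1, 1]."""
    if valence >= 0:
        # The long-term goal "have positive valence" is already met.
        return Strategy.NONE
    if arousal >= high_arousal:
        # Highly aroused negative emotions are treated as uncontrollable,
        # so the robot comforts instead of trying to change the situation.
        return Strategy.COGNITIVE_CHANGE
    # Negative valence without high arousal: suggest doing something else.
    return Strategy.SITUATION_MODIFICATION
```

A reading such as `choose_strategy(-0.2, 0.1)` would lead the robot to suggest an activity, while a strongly aroused negative reading would lead it to comfort the user.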

Framework

The chatbot is built using Visual Studio 2019 with SIML code. SIML is an XML-based language made specifically for building chatbots. The chatbot receives the emotions from the emotion recognition software and uses them to generate an appropriate response according to the literature and survey results.

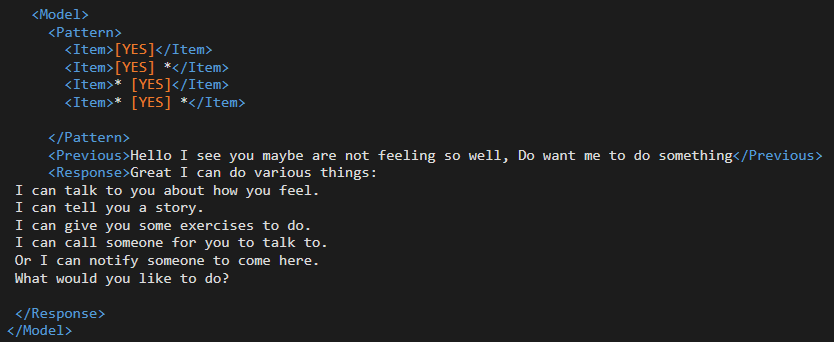

SIML

As stated before SIML is a special language developed for chatbots. SIML stands for Synthetic Intelligence Markup Language [12] and has been developed by the Synthetic Intelligence Network. It uses input patterns that the user says or types and uses this to generate a response. These responses are generated based on the emotional state of the person and the input of the user. This is best explained with an example.

In this example, one model is shown. Such a model houses a user input and a robot response. Inside this model, there is a pattern tag. This tag specifies what the user has to say to get the response stated in the response tag. In this case, the user has to say any form of yes to get the response. This yes is placed between brackets to specify that there are multiple ways to say yes (e.g. yes, of course, sure). The user says yes or no in answer to a question the robot asked. To specify what this question was, the previous tag is used: the previous response of the robot is matched to determine the context in which the user answers. In short, this code makes sure that when the user says any form of yes to a specific question, the robot gives a certain response. The chatbot consists of many of these models to generate an appropriate response. However, no handling of emotions is shown in this example. How the robot deals with different emotions is explained in the next example.

This model is the first interaction of the robot with the user. It does not use user input, but a specific keyword used to check the emotional state of the person. Every time the keyword AFGW is sent, the robot enters this model. The response distinguishes four scenarios the robot can detect, separated by if-else statements. These four scenarios are the following:
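The matching behaviour of such a model can also be sketched in Python, which avoids guessing at exact SIML syntax. This is only an analogy under stated assumptions: the `Model` class, the `YES_FORMS` set, the example question and the `find_response` helper are hypothetical and do not reproduce the project's SIML files.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional

# Hypothetical set of phrases that all count as "any form of yes".
YES_FORMS = frozenset({"yes", "of course", "sure"})


@dataclass(frozen=True)
class Model:
    """One input/response pair, loosely mirroring a chatbot model."""
    pattern: FrozenSet[str]   # user phrases that trigger this model
    previous: Optional[str]   # robot utterance that must precede the input
    response: str             # what the robot says when the model matches


def normalise(text: str) -> str:
    """Strip surrounding whitespace and end punctuation, then lower-case."""
    return text.strip().strip(".!?").lower()


def find_response(models: List[Model], user_input: str,
                  last_robot_utterance: Optional[str]) -> Optional[str]:
    """Return the response of the first model whose pattern and previous match."""
    said = normalise(user_input)
    for model in models:
        if said not in model.pattern:
            continue
        if model.previous is not None and model.previous != last_robot_utterance:
            continue
        return model.response
    return None


MODELS = [
    Model(YES_FORMS, "Would you like to do something?",
          "I can call family or friends, or we can have a chat."),
]
```

With these definitions, an answer of "Sure!" only produces the response when the robot's previous utterance was the matching question, which is the role the previous tag plays in the description above.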

- Positive valence

- Negative valence and low arousal

- Small negative valence and high arousal

- High negative valence and high arousal

In each of these scenarios, there is a section between think tags that is executed in the background. In this case, the robot sends the user to a specific concept. A concept holds all the responses specific to that situation. So when the robot detects that the user has low arousal but negative valence, it will send them to a specific concept that deals with this state.
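The if-else structure that separates the four scenarios could look roughly like the following Python sketch. The concept names, the assumed [-1, 1] range for valence and arousal, and both thresholds are illustrative assumptions; the text above does not state the values used in the actual SIML model.

```python
def select_concept(valence: float, arousal: float,
                   arousal_threshold: float = 0.0,
                   strong_negative: float = -0.5) -> str:
    """Map a valence/arousal reading to one of the four scenarios.

    Both inputs are assumed to lie in [-1, 1]. The thresholds are
    placeholders that would need tuning on real data.
    """
    if valence >= 0:
        return "positive_valence"
    if arousal <= arousal_threshold:
        return "negative_valence_low_arousal"
    if valence > strong_negative:
        return "small_negative_valence_high_arousal"
    return "high_negative_valence_high_arousal"
```

Each returned name stands for the concept the user would be sent to; in the chatbot itself this jump happens inside the think section.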

Implementation of facial data in chatbot

Once the facial recognition software has gathered information about the emotional state of the person, this information has to be put to use. This section discusses how the state of the person helps the robot personalize how it reacts to the person.

Data to use

The recognition software will detect whether there is a face in view, what emotion is expressed and with what intensity. This data can then be used to determine how positive or negative the person is feeling. Negative emotions are very important to detect, as prolonged negative feelings may lead to depression and anxiety. Recognizing these emotions in time can prevent this, removing the need for help from medical practitioners.[13] For our model the following outputs will be used:

- Is there a face in view, Yes/No

- What emotions are expressed, happiness/sadness/disgust/fear/surprise/anger.

- How intense are the different emotions, 0 to 1 for every emotion.
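One way to hold these three outputs together is a small record type, sketched below. The class name `FaceReading`, the `expressed` helper and its 0.5 threshold are assumptions for illustration; only the six emotion names and the 0 to 1 intensity range come from the list above.

```python
from dataclasses import dataclass, field
from typing import Dict, List

EMOTIONS = ("happiness", "sadness", "disgust", "fear", "surprise", "anger")


@dataclass
class FaceReading:
    """One output of the recognition software (hypothetical container)."""
    face_in_view: bool
    intensities: Dict[str, float] = field(default_factory=dict)

    def __post_init__(self) -> None:
        if not self.face_in_view:
            # Without a face there is no emotion data, whatever was passed in.
            self.intensities = {}
            return
        unknown = set(self.intensities) - set(EMOTIONS)
        if unknown:
            raise ValueError(f"unknown emotions: {sorted(unknown)}")
        # Build a fresh dict so the caller's dict is not modified;
        # emotions that were not reported get intensity 0.
        filled = {e: float(self.intensities.get(e, 0.0)) for e in EMOTIONS}
        for emotion, value in filled.items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{emotion} intensity {value} is outside [0, 1]")
        self.intensities = filled

    def expressed(self, threshold: float = 0.5) -> List[str]:
        """Emotions whose intensity reaches the (assumed) threshold."""
        return [e for e in EMOTIONS if self.intensities.get(e, 0.0) >= threshold]
```

For example, `FaceReading(True, {"sadness": 0.7, "anger": 0.2}).expressed()` lists only sadness, and a reading without a face carries no intensities at all.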

Emotional Model

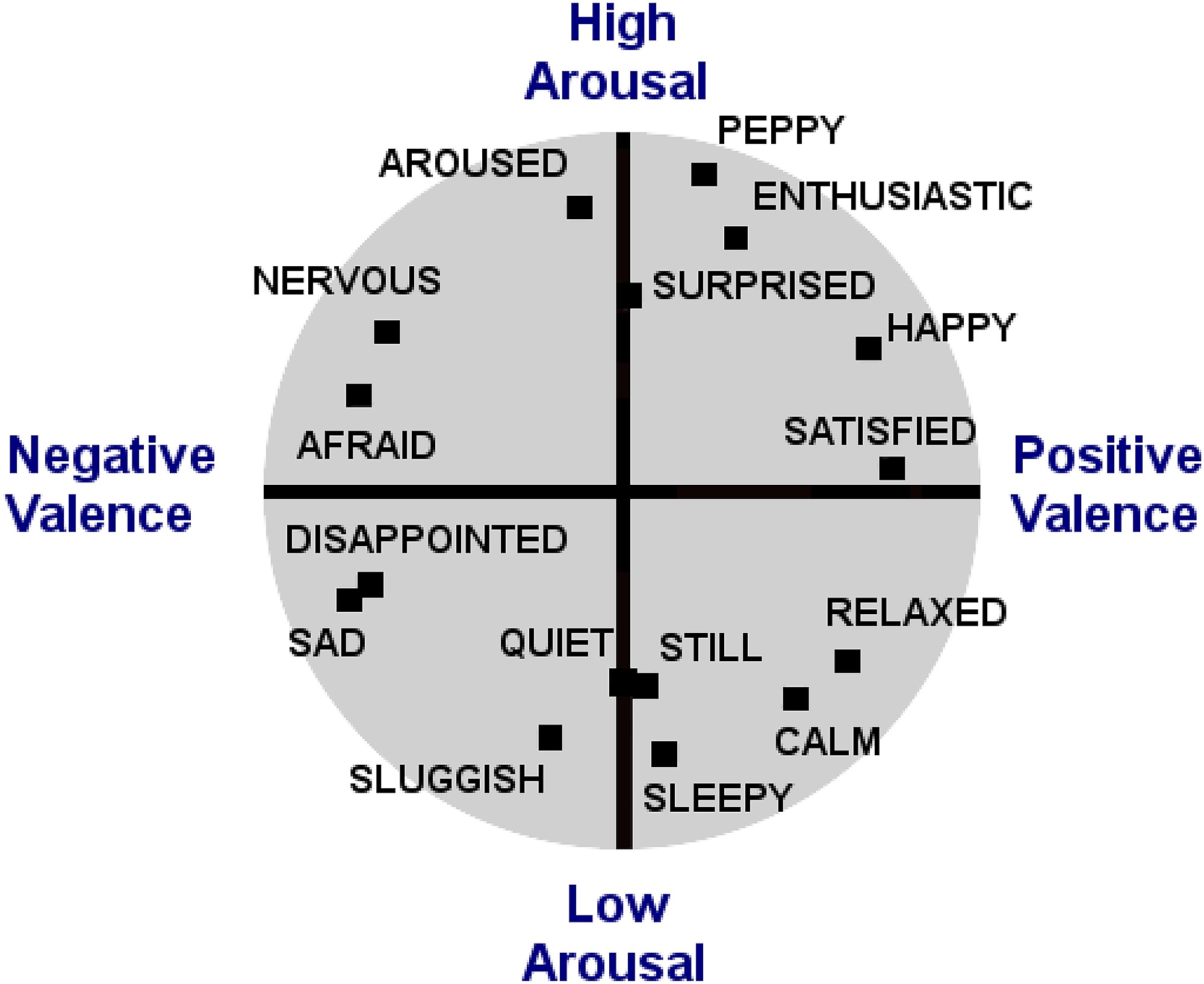

One very important choice is what kind of emotional interpreter is used, as there are many different models for describing complex emotions. The most basic interpretation uses six basic emotions that were found to be universal between cultures. This interpretation was developed during the 1970s, when psychologist Paul Ekman identified these six basic emotions as happiness, sadness, disgust, fear, surprise, and anger.[14] As the goal of the robot is to help elderly people through the day, a more complex model is probably not necessary, as the focus is mostly on the presence of negative feelings. The further classification of which emotion is expressed can help with finding a way to help the person. These six basic emotions will be represented in the Circumplex Model. In this model, emotional states are represented on a two-dimensional surface defined by a valence (pleasure/displeasure) axis and an arousal (activation/deactivation) axis. The two-dimensional approach has been criticized on the grounds that subtle variations between certain emotions that share common core affect, e.g. fear and anger, might not be captured in fewer than four dimensions.[15] Nevertheless, for this project the two-dimensional representation will suffice, as the robot does not need to be 100% accurate in its readings: the focus is mostly on the presence of highly negative emotions with high arousal. The figure in this section shows such a diagram.

How negative or positive a person is feeling can be expressed by the person's valence. Valence measures affective quality, referring to intrinsic goodness (positive feelings) or badness (negative feelings). For some emotions the valence is quite clear, e.g. the negative affect of anger, sadness, disgust and fear, or the positive affect of happiness. Surprise, however, can be either positive or negative depending on the context.[16] Because of this, surprise will not be taken into account in the valence measurement. The valence is simply calculated from the intensity of each emotion and whether that emotion is positive or negative. If the results are not as expected, the weights of the different emotions can be altered to better fit the situation.
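A minimal sketch of such a valence calculation is given below. It assumes a signed, weighted sum of the intensities that is clipped to [-1, 1]; the exact formula, the default weight of 1 per emotion and the function name are assumptions, since the text only states that intensity, sign and adjustable weights are used.

```python
from typing import Dict, Optional

# Sign of each emotion on the valence axis; surprise is left out on purpose.
VALENCE_SIGN = {
    "happiness": 1.0,
    "sadness": -1.0,
    "disgust": -1.0,
    "fear": -1.0,
    "anger": -1.0,
}


def valence(intensities: Dict[str, float],
            weights: Optional[Dict[str, float]] = None) -> float:
    """Signed, weighted sum of emotion intensities, clipped to [-1, 1].

    `intensities` maps emotion names to values in [0, 1]; emotions that are
    missing count as 0. `weights` allows individual emotions to be tuned.
    """
    weights = weights or {}
    total = sum(
        sign * weights.get(emotion, 1.0) * intensities.get(emotion, 0.0)
        for emotion, sign in VALENCE_SIGN.items()
    )
    return max(-1.0, min(1.0, total))
```

Raising the weight of a single emotion, for instance `valence(reading, {"anger": 2.0})`, is one way the weights could be altered when the results are not as expected.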

Arousal is a measure of how active or passive the person is. For example, a person who is very angry has very high arousal, while a person who is feeling sad has low arousal. This extra axis helps to better define what the robot should do, as with high arousal a person might panic or hurt someone or themselves. Where exactly the values for these six emotions lie differs from person to person, but the general locations can be seen in the graph above.

Implementation of valence and arousal

Once the presence of every emotion has been detected, along with whether the individual is feeling positive or negative and with what arousal, the robot can use this information. How it is used is very important, as history has shown that a robot that does not meet the needs and desires of its potential users will go unused.
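Arousal could be estimated in a similar way, for instance as an intensity-weighted average of a fixed arousal coordinate per emotion. The coordinates below are illustrative placeholders only: they follow the statement that anger is high and sadness is low in arousal, but they are not read from the project's figure, and including surprise here is also an assumption.

```python
from typing import Dict

# Placeholder arousal coordinates in [-1, 1]; NOT taken from the figure.
AROUSAL_COORDINATE = {
    "anger": 0.8,
    "fear": 0.8,
    "surprise": 0.8,
    "disgust": 0.3,
    "happiness": 0.3,
    "sadness": -0.6,
}


def arousal(intensities: Dict[str, float]) -> float:
    """Intensity-weighted mean of the per-emotion arousal coordinates.

    Returns 0.0 (neutral) when no emotion has a non-zero intensity.
    """
    total_intensity = sum(intensities.get(e, 0.0) for e in AROUSAL_COORDINATE)
    if total_intensity == 0:
        return 0.0
    weighted = sum(
        coordinate * intensities.get(emotion, 0.0)
        for emotion, coordinate in AROUSAL_COORDINATE.items()
    )
    return weighted / total_intensity
```

Because the coordinates differ from person to person, they would be a natural candidate for per-user calibration.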

When the robot detects that the person is having negative emotions, it will ask if something is wrong. If the person answers yes, the robot will ask how it can help with the problem. In the case of a light negative feeling, the robot will help by having a simple conversation. When simple psychiatric help is needed, the robot can help by using the ELIZA program, a classic chatbot whose best-known script imitates a psychotherapist by asking questions that encourage the person to keep talking. If the person does not want this, the robot can also ask whether the person would like to contact family members or friends. If this is also declined, the robot will ask whether the person is sure the robot cannot help. When the person again declines help, the robot will back off for 30 minutes for light negative emotions. When the emotions become more negative, the robot will immediately react again by asking why the person is feeling so negative. When given consent, the robot can call medical specialists or a psychiatrist so that the person can talk to them. If the person declines, the robot will point out that it is there to help them and that calling someone will really help. If the person declines again, the robot will back off again, respecting the autonomy of the person.
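The escalation described above can be sketched as an ordered list of offers per severity level, each of which the user may accept or decline. The offer names, the two severity labels and the `accepts` callback are hypothetical. The back-off time after severe emotions is left as `None` because the text does not specify it.

```python
from datetime import timedelta
from typing import Callable, Optional, Tuple

# Back-off time stated in the text for light negative emotions.
LIGHT_BACK_OFF = timedelta(minutes=30)

# Offers in the order the robot makes them (names are illustrative).
OFFERS = {
    "light": [
        "simple_conversation",
        "eliza_session",
        "contact_family_or_friends",
        "reconsider_help",  # "are you sure I cannot help?"
    ],
    "severe": [
        "call_medical_specialist",
        "call_medical_specialist_after_reassurance",
    ],
}


def respond_to_negative_emotion(
    severity: str, accepts: Callable[[str], bool]
) -> Tuple[str, Optional[timedelta]]:
    """Walk through the offers; back off when the user declines them all."""
    for offer in OFFERS[severity]:
        if accepts(offer):
            return offer, None
    # Every offer was declined: respect the user's autonomy and back off.
    back_off = LIGHT_BACK_OFF if severity == "light" else None
    return "back_off", back_off
```

The `accepts` callback stands in for the spoken yes/no answer, so the same function can be exercised in tests without a speech module.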

When the person has a specific illness, the settings can be changed so that the robot will always call for help, even if the person does not give consent. To have this setting changed, a doctor and a psychologist have to confirm that the person's autonomy can be reduced.
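The decision flow described in the two paragraphs above can be sketched as a small function. This is a simplified illustration under stated assumptions, not the implemented chatbot logic: the names `Severity`, `OFFERS` and `decide` are hypothetical, the ELIZA step is left out, and the consent override is assumed to apply only to the more negative case.

```python
from enum import Enum


class Severity(Enum):
    LIGHT = 1   # light negative emotions
    SEVERE = 2  # more negative emotions


BACK_OFF_MINUTES_LIGHT = 30  # taken from the text above

# Offers the robot makes in order; the user accepts or declines each one.
OFFERS = {
    Severity.LIGHT: [
        "have a simple conversation",
        "contact family members or friends",
        "ask once more how the robot can help",
    ],
    Severity.SEVERE: [
        "call a medical specialist or psychiatrist",
        "call a medical specialist or psychiatrist after reassurance",
    ],
}


def decide(severity, answers, always_call_help=False):
    """Return the action the robot ends up taking.

    answers is a sequence of booleans, one per offer (True = accepted).
    Offers without an answer are treated as declined (an assumption).
    always_call_help models the setting that a doctor and a psychologist
    must approve; here it is assumed to matter only for SEVERE.
    """
    if severity is Severity.SEVERE and always_call_help:
        return "call a medical specialist or psychiatrist"
    for offer, accepted in zip(OFFERS[severity], answers):
        if accepted:
            return offer
    if severity is Severity.LIGHT:
        return f"back off for {BACK_OFF_MINUTES_LIGHT} minutes"
    return "back off"


if __name__ == "__main__":
    print(decide(Severity.LIGHT, [False, True]))
    print(decide(Severity.SEVERE, [False, False]))
```

Keeping the offers in an ordered list makes the order of escalation explicit and easy to adjust per user.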

Scenarios

This section is dedicated to portraying various scenarios. These scenarios elaborate on moments in our theoretical users' lives where the presence of our robot may improve their emotional wellbeing. They also demonstrate the steps that both robot and user may perform, and consider edge cases.

Scenario 1: Chatty User

Our user likes chatting and prefers being accompanied. Unfortunately, they cannot have human company at all their preferred times. In this case, the user may engage with their robot. It will recognise an activation phrase (e.g. “Hey Robot”) and listen to the user's request, similar to present-day smart home devices. The robot combines the most recently registered emotion with the questions and phrases spoken to determine an appropriate response. In this case, the expected request is “I wish to talk”, which prompts the robot to prioritise listening to the user and analysing their phrases. On cues such as pauses or a request from the user, the robot may respond with questions or statements generated from the analysed lines. For example, the user talks about their old friend “Angie”. The robot recognises that this is a person with attributes and may ask questions about “Angie”. This information may be stored and used again in future conversations. The final result is that the user's emotional wellbeing no longer suffers from being alone, as a chat companion fills this need.

Scenario 2: Sad Event User

The user has recently experienced a sad event, for example the death of a friend. It is assumed that, in the period after the event, the user will express more emotions on the negative side of the valence spectrum, including sadness. The robot present in their house is designed to pick up on the changed set and frequency of emotions. Whenever sadness is recognised, the robot may engage the user by asking for attention, and the user is given the choice to respond and start a conversation. To encourage the user to engage, the robot acts with care, applying regulatory strategies and tailoring its questions to the user's needs. Once a conversation has started, the user may open up to the robot, and the robot asks questions and comforts the user by means of attentional deployment. Throughout, the robot may ask the user if they would like to contact a registered person (e.g. a personal caretaker or family member), whether or not a conversation has been started. This keeps all possible facets of coping within reach of the user. In the end, the user will have unburdened their heart, which will help to relieve their sadness.

Scenario 3: Frustrated User

A user may have a bad day. Something has happened that has left them in a bad mood. Therefore, their interest in talking with anyone, including the robot, has diminished. The robot has noticed the change in their facial expressions and must alter its approach to reach its goal of conversation and improved wellbeing. To avoid conflict and the user lashing out at the harmless robot, it may engage the user by diverting their focus. Rather than have the user's mind set on what frustrated them, the robot encourages the user to talk about a past experience they have conversed about before. It may also start a conversation itself by telling a joke, in an attempt to defuse the situation. If the user still does not wish to engage, the robot may ask if they wish to call someone. In the end, noncompliant users may need time, and it may be best for the robot not to engage further.

Scenario 4: Quiet User

Some users may not be as vocal as others. They won't take the initiative to engage the robot or other humans. Fortunately, the robot will frequently ask for attention, asking the user if they wish to talk. It does so by attempting to start a conversation at appropriate times (e.g. outside of dinner and recreational events, when the user is at home). If the robot receives no input, it may ask again several times at short intervals. If there is still no response, the robot may ask questions or tell stories on its own initiative, until the user tells it to stop or, preferably, engages with the robot.

Reflection

Conclusion

Evaluation

Feedback

Limitations

Planning

| Week | Task | Date/Deadline | Coen | Max | Rick | Venislav |

|---|---|---|---|---|---|---|

| 1 | Introduction meeting | 20.04 | 00:00 | | | |

| 1 | Group meeting: subject choice | 25.04 | 00:00 | 00:00 | 00:00 | |

| 2 | Wiki: problem statement | 29.04 | 00:00 | | | |

| 2 | Wiki: objectives | 29.04 | 00:00 | | | |

| 2 | Wiki: users | 29.04 | 00:00 | | | |

| 2 | Wiki: user requirements | 29.04 | 01:00 | | | |

| 2 | Wiki: approach | 29.04 | 00:00 | | | |

| 2 | Wiki: planning | 29.04 | 00:00 | | | |

| 2 | Wiki: milestones | 29.04 | 00:00 | | | |

| 2 | Wiki: deliverables | 29.04 | 00:00 | | | |

| 2 | Wiki: SotA | 29.04 | 00:00 | 00:00 | 00:00 | 00:00 |

| 2 | Group meeting | 30.04 | 00:00 | | | |

| 2 | Tutor meeting | 30.04 | 00:00 | | | |

Milestones

| Week | Milestone |

|---|---|

| 1 (20.04 - 26.04) | Subject chosen |

| 2 (27.04 - 03.05) | Project initialised |

| 3 (04.05 - 10.05) | Facial/Emotional recognition research finalised |

| 4 (11.05 - 17.05) | Facial/Emotional recognition software developed |

| 5 (18.05 - 24.05) | Chatbot research finalised |

| 6 (25.05 - 31.05) | Chatbot implemented |

| 7 (01.06 - 07.06) | Facial/Emotional recognition software integrated in Chatbot |

| 8 (08.06 - 14.06) | Wiki page completed |

| 9 (15.06 - 21.06) | Chatbot demo video and final presentation completed |

| 10 (22.06 - 28.06) | N/A |

| 11 (29.06 - 05.07) | N/A |

Logbook

Week 1

| Name | Total hours | Tasks |

|---|---|---|

| Rick | 3.5 | Introduction lecture [1.5], meeting [1], literature research [0.5] |

| Coen | 3 | Introduction lecture [1.5], meeting [1], literature research [1] |

| Max | 5.5 | Introduction lecture [1.5], meeting [1], literature research [3] |

| Venislav | 4 | Introduction lecture [1.5], meeting [1], literature research [1.5] |

Week 2

| Name | Total hours | Tasks |

|---|---|---|

| Rick | 3 | Meeting [1], Research [2] |

| Coen | 4 | Meeting [2], Wiki editing [0.5], Google Forms implementation [0.5], Paper research [1] |

| Max | 8 | Meeting [1], literature research [1], rewriting USE [2], writing Implementation of facial data in chatbot [4] |

| Venislav | 8 | |

Week 3

| Name | Total hours | Tasks |

|---|---|---|

| Rick | 2 | Reading / Research [2] |

| Coen | 3 | Literature [2], writing Requirements [1] |

| Max | 4 | Searching for relevant papers about chatbots and how to implement them [4] |

| Venislav | ... | ... |

Week 4

| Name | Total hours | Tasks |

|---|---|---|

| Rick | ... | ... |

| Coen | 9 | Literature about robot design, user requirements and USE groups [5], editing USE and Requirements [2], working on robot design [2] |

| Max | 12 | Literature study about valence/arousal diagram [2], finding information about goal of emotion detection [4], finding writing about emotion regulation and general emotion [6] |

| Venislav | ... | ... |

Week 5

| Name | Total hours | Tasks |

|---|---|---|

| Rick | ... | ... |

| Coen | 8 | Literature [5], writing Requirements & Scenarios [2], meeting [1] |

| Max | 9 | Making framework [1], reading paper about emotion [3], thinking about implementation of chatbot [4], meeting [1] |

| Venislav | ... | ... |

Week 6

| Name | Total hours | Tasks |

|---|---|---|

| Rick | ... | ... |

| Coen | ... | ... |

| Max | 20 | Making name for project [0.5], trying and making framework for chatbot [17], meetings [2.5] |

| Venislav | ... | ... |

Week 7

| Name | Total hours | Tasks |

|---|---|---|

| Rick | ... | ... |

| Coen | 15 | Chatbot research [4], chatbot concept implementation [5], presentation [4], meetings [2] |

| Max | 32 | Making final framework for chatbot [14], making patterns for chatbot [13], making demo [5] |

| Venislav | ... | ... |

References

- World Health Organisation. who.int. Aging and Health. https://who.int/news-room/fact-sheets/detail/ageing-and-health

- Misra N., Singh A. (2009, June). Loneliness, depression and sociability in old age. Referenced on 27/04/2020.

- Green B. H., Copeland J. R., Dewey M. E., Sharma V., Saunders P. A., Davidson I. A., Sullivan C., McWilliam C. Risk factors for depression in elderly people: A prospective study. Acta Psychiatr Scand. 1992;86(3):213–7. https://www.ncbi.nlm.nih.gov/pubmed/1414415

- Rukuye Aylaz, Ümmühan Aktürk, Behice Erci, Hatice Öztürk, Hakime Aslan. Relationship between depression and loneliness in elderly and examination of influential factors. Archives of Gerontology and Geriatrics. 2012;55(3):548-554. https://doi.org/10.1016/j.archger.2012.03.006

- Zhentao Liu, Min Wu, Weihua Cao, Luefeng Chen, Jianping Xu, Ri Zhang, Mengtian Zhou, Junwei Mao. A Facial Expression Emotion Recognition Based Human-robot Interaction System. IEEE/CAA Journal of Automatica Sinica, 2017, 4(4): 668-676. http://html.rhhz.net/ieee-jas/html/2017-4-668.htm

- Saleh, S.; Sahu, M.; Zafar, Z.; Berns, K. A multimodal nonverbal human-robot communication system. In Proceedings of the Sixth International Conference on Computational Bioengineering, ICCB, Belgrade, Serbia, 4–6 September 2015; pp. 1–10. http://html.rhhz.net/ieee-jas/html/2017-4-668.htm

- U. K. Premasundera and M. C. Farook, "Knowledge Creation Model for Emotion Based Response Generation for AI," 2019 19th International Conference on Advances in ICT for Emerging Regions (ICTer), Colombo, Sri Lanka, 2019, pp. 1-7, doi: 10.1109/ICTer48817.2019.9023699.

- K. Lagattuta and H. Wellman, "Thinking about the Past: Early Knowledge about Links between Prior Experience, Thinking, and Emotion", Child Development, vol. 72, no. 1, pp. 82-102, 2001. Available: 10.1111/1467-8624.00267

- Werner, K., Gross, J.J.: Emotion regulation and psychopathology: a conceptual framework. In: Emotion Regulation and Psychopathology, pp. 13–37. Guilford Press (2010)

- Lazarus, R. S. (1966). Psychological stress and the coping process. New York: McGraw-Hill.

- Johansson-Pajala, R., Thommes, K., Hoppe, J.A. et al. Care Robot Orientation: What, Who and How? Potential Users’ Perceptions. Int J of Soc Robotics (2020). https://doi.org/10.1007/s12369-020-00619-y

- Synthetic Intelligence Network, Synthetic Intelligence Markup Language Next-generation Digital Assistant & Bot Language. https://simlbot.com/

- Maja Pantic and Marian Stewart Bartlett (2007). Machine Analysis of Facial Expressions, Face Recognition, Kresimir Delac and Mislav Grgic (Ed.), ISBN: 978-3-902613-03-5, InTech. Available from: http://www.intechopen.com/books/face_recognition/machine_analysis_of_facial_expressions

- Ekman P. (2017, August). My Six Discoveries. Referenced on 6/05/2020. https://www.paulekman.com/blog/my-six-discoveries/

- Marmpena, Mina & Lim, Angelica & Dahl, Torbjorn. (2018). How does the robot feel? Perception of valence and arousal in emotional body language. Paladyn, Journal of Behavioral Robotics. 9. 168-182. 10.1515/pjbr-2018-0012.

- Maital Neta, F. Caroline Davis, and Paul J. Whalen (2011, December). Valence resolution of facial expressions using an emotional oddball task. Available from: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3334337/#__ffn_sectitle/

A Natural Visible and Infrared Facial Expression Database for Expression Recognition and Emotion Inference, 2010 https://guilfordjournals.com/doi/abs/10.1521/pedi.1999.13.4.329

Emotional factors in robot-based assistive services for elderly at home, 2013 https://www.researchgate.net/publication/240170005_Emotional_factors_in_robot-based_assistive_services_for_elderly_at_home

Evidence and Deployment-Based Research into Care for the Elderly Using Emotional Robots https://econtent.hogrefe.com/doi/abs/10.1024/1662-9647/a000084?journalCode=gro

Development of whole-body emotion expression humanoid robot, 2008 https://www.researchgate.net/publication/221074437_Development_of_whole-body_emotion_expression_humanoid_robot

Affective Robot for Elderly Assistance, 2009 https://www.researchgate.net/publication/26661666_Affective_robot_for_elderly_assistance

Robot therapy for elders affected by dementia, 2008 https://www.researchgate.net/publication/3246531_Robot_therapy_for_elders_affected_by_dementia