PRE2019 4 Group4

Group Members

| Name | Student Number | Study | Email |
|---|---|---|---|
| Cahitcan Uzman | 1284304 | Computer Science | c.uzman@student.tue.nl |
| Zakaria Ameziane | 1005559 | Computer Science | z.ameziane@student.tue.nl |
| Lex van Heugten | 0973789 | Applied Physics | l.m.v.heugten@student.tue.nl |

Problem Statement

As people age, they gradually lose some of their abilities. Older people become more vulnerable in terms of health, their movements slow down, and the communication between neurons in the brain becomes slower, which may cause mental problems. As a result, they depend more on other people to maintain their daily lives. In other words, older people may need to be cared for by someone else. However, not all of them are lucky enough to have someone who can support them. For those people, the good news is that technology and artificial intelligence are developing, and one promising application is care robots for the elderly. Although care robots are a great idea and are likely to make life easier, the technology also brings concerns and ambiguities. Since communication with the elderly is essential for caring, the benefit of a care robot increases as the communication between the user and the robot becomes clearer and easier. Emotion is one of the most important tools for communication, which is why we aim to investigate the use of emotion recognition in care robots with the help of artificial intelligence. Our main goal is to strengthen the communication between an older person and the robot by making the robot understand the user's current emotional state and behave accordingly.

Objectives

Users and their needs

The healthcare service: Healthcare services face a large shortage of human caregivers, which leads to the use of robots to take care of elderly people. They want to provide care robots that resemble human caregivers as closely as possible.

Elderly people: Elderly people need someone who takes care of them physically and with whom they can share their emotions, so the care robot needs to understand their emotions. That way it can understand what the person needs and act on it.

Enterprises that develop the care robot: These enterprises aim to improve care robot technology and make the robots as effective as a human caregiver by adding features that allow the robot to interact emotionally with people.

USE analysis

Users: The population of older people keeps growing at a high rate. In Europe, citizens aged 65 and over comprised 20.3% of the population in 2019(1), and this share is expected to keep increasing every year. As people get older, they not only need someone to take care of them physically, they also need human interaction and someone to share their emotions with; otherwise they become vulnerable to loneliness and social isolation. The leader of Britain’s GPs said in 2017: “Being lonely can be as bad for someone’s health as having a long term illness such as diabetes or high blood pressure” (2). The fight against social isolation and loneliness is an essential reason for social companion robots. Introducing facial emotion recognition in social companion robots for elderly people will help the robot understand the emotions of the person and react to them. For example, if the person is sad, the robot may use some techniques to cheer the person up, and if the person is happy, the robot can ask the person to share what made them happy. By sharing emotions, elderly people will feel less lonely, and by understanding the emotion of the person, the robot will know more about what the person really needs.

Society:

This technology can have a big impact on society. In many countries, the healthcare system cannot provide enough caregivers to cover all needs, which is difficult for elderly people and especially for their families. This matters all the more because the phenomenon of “living alone” at the end of life has grown enormously in many societies in recent decades, even in societies that traditionally have strong family ties(1).

Society will benefit from this technology because it can increase overall happiness by reducing the social isolation and loneliness of the elderly.

Enterprise: Over the years, the companies that develop care robots have greatly improved them: from a simple robot that could only accomplish some basic physical tasks, to a robot that can communicate with people in human language, to a robot that can entertain elderly people in different ways. Those companies continually aim to improve their robots to satisfy the needs of the users. Adding facial emotion recognition to the robot will be a big improvement and is expected to raise the desire of elderly people to have one of these care robots. The companies are likely to benefit from this technology, as the need for care robots is expected to rise in the coming years. Projections indicate that by 2050, people over the age of 65 will represent 28.5% of the population of Europe, so this investment could bring substantial profits to those companies.

Approach

The field of face recognition has developed strongly in the past few years. One can find face recognition and face tracking in daily life in cameras that automatically focus on the faces in view and, of course, in Snapchat filters. This technology will be a good starting point for this project. However, the consistency of face recognition is still low. Factors like skin color, lighting and deformities of the face are likely to upset most face recognition systems every once in a while. The point of deformities is especially important for our target user. As mentioned above, old age comes with many different effects, and the aging of skin is especially relevant for this project. Skin on the face begins to sag, wrinkle and discolor at older age, which might create issues for our face recognition software. Tuning this software to work with the elderly will be the first milestone.

The second milestone will, of course, be the recognition of emotion. Recognition of emotion is complex and needs a relevant, large database to work correctly. Here we will probably run into the same problem, namely working with the elderly as the target group. Our software should be fine-tuned to their face structure, and the database should be built from relevant data. Lastly, the system should be consistent. It is designed to be part of a care robot. Care robots should take over part of the care that is given by human professionals and should therefore be consistent enough to actually take some of the work pressure off their hands.

Planning

Week 1:

- Brainstorming ideas

- Decide upon a subject

- Identify the users and their needs

- Study literature about the topic

Week 2:

- USE aspects analysis

- Gather RPCs

- Care robots that already exist

- Research the opinions of the elderly and their wishes/concerns

Week 3:

- Analyze how facial expressions change for different emotions

- Analyze different aspects of this technology (for example, how the robot should react to different emotions)

- The use of a convolutional neural network for facial emotion recognition

Week 4:

- Find a database of face pictures that is large enough for CNN training

- Start the implementation of the facial emotion recognition

Week 5:

- Implementation of the facial emotion recognition

Week 6:

- Testing and improving

Week 7:

- Prepare presentation and finalize the wiki page

Week 8:

- Derive conclusions and possible future improvements

- Finalize presentation.

Deliverables:

- The wiki page: this will include all the steps we took in this project, as well as all the analyses we made and the results we achieved.

- Software that is able to recognize facial emotions

- A final presentation of our project

State of the art

Companion robots that already exist

SAM robotic concierge

Luvozo PBC, which focuses on developing solutions that improve the quality of life of elderly people, started testing its product SAM in a leading senior living community in Washington. SAM is a smiling, human-sized concierge robot whose task is to check on residents in long-term care settings. The robot made the patients more satisfied, as they feel that someone is checking on them all the time, and it reduced the cost of care.

ROBEAR

Developed by scientists from RIKEN and Sumitomo Riko Company, ROBEAR is a nursing care robot that provides physical help to elderly patients, such as lifting a patient from a bed into a wheelchair or helping patients who are able to stand up but require assistance. It is called ROBEAR because it is shaped like a giant gentle bear.

PARO

Elderly wishes and concerns

The interview will be conducted with working professionals in elderly care.

Interview Questions

- What are common measures that the elderly take against loneliness?

- Are the elderly motivated to battle their loneliness? If so, how?

- Do lonely elderly people have more need of companionship or of an interlocutor? For example, a dog is a companion but not someone to talk to, while a family member who calls frequently is an interlocutor but does not provide companionship.

- Are there, despite installed safety measures, a lot of unnoticed accidents? For example, falling or involuntary defecation.

- If the caregivers could be better warned against one type of accident, which one would it be and why?

- Is it easy for you to recognize the emotion of the patients based on their facial expression?

- How do you react if you notice that someone is sad, for example? How do you try to cheer them up? Is there also a special way to deal with patients when they are happy?

- Finally, since we cannot interview the patients: do you think, based on your experience, that elderly people would like to have a companion robot that recognizes their emotions?

Results

Loneliness is one of the biggest challenges for caregivers of the elderly. In theory, the elderly do not have to be lonely: there are many options to find companionship and activity, which are provided or promoted by caregivers. However, old age comes with many disadvantages. Activities become too difficult or intense, the elderly may have difficulty keeping up a conversation in person or by phone, and they are not able to care for companions such as pets.

Common signs of loneliness are visible sadness, confusion, bad eating habits and general self-neglect. According to caregivers, attention is the only real cure. Because the elderly lose the ability to look for companionship themselves, this attention should come from others without requiring initiative from the elderly.

Conclusions

Since the elderly lose the ability to find companionship and hold meaningful conversations, loneliness is a certainty, especially when the people close to them die or move away. A companion robot that provides some attention may be a useful supplement to the attention that caregivers and family provide.

RPCs

Requirements

Emotion recognition software requirements

- The software shall capture the face of the person

- The software shall recognize when the person is happy

- The software shall recognize when the person is sad

- The software shall recognize when the person is in pain

Companion robot requirements

- When the person is happy, the robot shall act correspondingly.

- When the person is sad, the robot shall cheer them up.

- When the person has been in pain for 15 seconds, the robot shall ask whether the person wants it to call an emergency number for help.

- When the user asks the robot for help, the robot shall contact an emergency service within 5 seconds.

- When the robot perceives a pain situation and gets no response, it shall contact an emergency service within 5 seconds.

Preferences

- The software can also recognize other emotions.

Constraints

- Facial expressions differ from one person to another.

- It’s hard to achieve a very high accuracy.

- The software needs to be built within 6 weeks

How do facial expressions convey emotions?

Introduction

Emotions are one of the main aspects of non-verbal communication in human life. They reflect what a person thinks and/or feels about a specific experience in the past or present. Therefore, they give clues about how to act towards a person, which eases and strengthens the communication between two people. One of the main ways to understand someone’s emotions is through their facial expressions, because humans express emotions, consciously or unconsciously, through the movements of the muscles in their faces. For the goal of our project, we want to use emotions as a tool to strengthen human-robot interaction and make our user feel safe. Although interaction with a robot can never replace interaction with another person, we believe that the effect of this drawback can be minimized by the correct use of emotions in human-robot interaction. Although there are many types of emotions, for the sake of simplicity and the focus of our care robot proposal, we will evaluate three main emotional states, namely happiness, sadness and pain, through the features of the human face.

Happiness

File:Happyy.png A smile is the clearest sign of happiness on the human face. The following changes describe how a person smiles and shows happiness:

- Cheeks are raised

- Teeth are mostly exposed

- Corners of the lips are drawn back and up

- The muscles around the eyes are tightened

- “Crow’s feet” wrinkles occur near the outside of the eyes.

Sadness

File:Sad.png Sadness is the hardest facial expression to identify. The face resembles a frown, and the following are clues for a sad face:

- Lower lip pops out

- Corners of the mouth are pulled down

- Jaw comes up

- Inner corners of the eyebrows are drawn in and up

- Mouth is most likely to be closed

Pain

File:Pain.png The facial expression of pain is not hard to identify, and the following describe the facial changes in a pain situation:

- Eyebrows are lowered

- Eyelids are tightened

- Cheeks are raised

- Mouth can be either wide open or open just far enough to expose the teeth

- Eyes are likely to be closed

Our robot

Our robot is a companion robot that will help elderly people live independently. It will have a humanoid shape and a screen attached to it that allows the robot to display pictures and videos to the elderly person. It will also be able to move from one place to another. (make a drawing of how it can look)

What our robot can do:

- Facial recognition: The robot has to recognize the face of the elderly person.

- Emotion recognition: The robot needs to distinguish between different emotions by analyzing the facial expression of the elderly person.

- Speaking: The robot has to communicate verbally with the elderly person.

- Movement: The robot has to be able to move.

- Calling: The robot has to be able to connect the elderly person with other people, through both normal calls and video calls.

How the robot should react to the emotion of the elderly person:

- To pain:

Depending on the degree of pain, the robot can react in different ways. If the degree of expressed pain is low, the robot might ask the elderly person whether they want to look something up on the internet or have the robot make an appointment with a doctor. If the expressed pain is severe, the robot needs to ask the elderly person whether it should request help by calling someone or an emergency number.

An example scenario:

- The robot detects the face of the elderly person, analyzes it, and classifies it as low-degree pain.

- The robot approaches the elderly person and asks whether they want to make an appointment with the doctor.

- The elderly person responds with a yes.

- The robot looks up available times and informs the elderly person both verbally and by displaying the date/time on the screen.

- The elderly person chooses the most convenient time and tells the robot.

- The robot confirms the appointment.

- The robot advises the elderly person to get some rest.

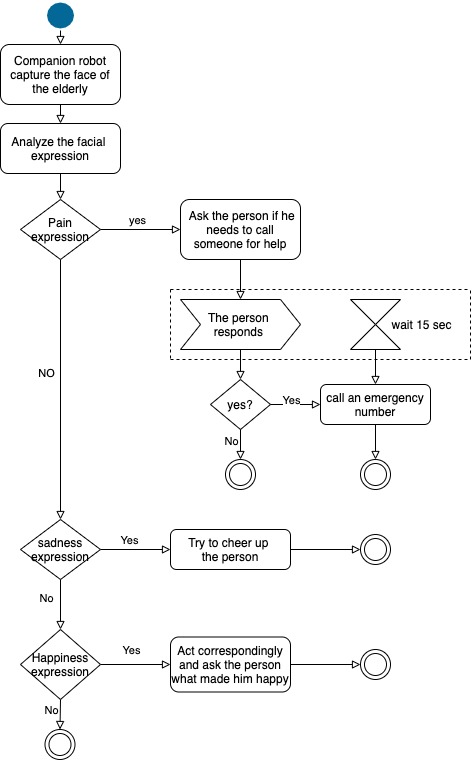

The activity diagram below presents how the robot should react to different emotions expressed by the elderly person.

First, when the companion robot captures the face of the elderly person, it analyzes the facial expression. If it recognizes that the person is expressing pain, the robot asks the person whether it should call someone for help and then waits for a response. If the person does not respond within 15 seconds, the robot immediately calls an emergency number for help; otherwise it follows the instructions given by the person. If the person is not expressing pain but expresses sadness, the robot will use some techniques to cheer the person up. Finally, if the person expresses happiness, the robot should act correspondingly and ask the person what made them happy.
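The reaction flow described above can be sketched as a small decision function. This is only an illustrative sketch, not the robot's actual control software: the function name `choose_action`, the action labels and the way the waiting time is passed in are all assumptions made for the example; only the three emotions and the 15-second limit come from the text.

```python
from typing import Optional

# Waiting time before calling for help, taken from the description above.
PAIN_RESPONSE_TIMEOUT_S = 15.0


def choose_action(emotion: str,
                  response: Optional[str] = None,
                  seconds_waited: float = 0.0) -> str:
    """Return a (hypothetical) action label for a recognized emotion.

    emotion        -- "pain", "sadness" or "happiness" (classifier output)
    response       -- what the person answered after being asked, if anything
    seconds_waited -- time elapsed since the robot asked its question
    """
    if emotion == "pain":
        if response is not None:
            # The person answered: follow their instructions.
            return "follow_instructions"
        if seconds_waited >= PAIN_RESPONSE_TIMEOUT_S:
            # No answer within the time limit: call for help.
            return "call_emergency"
        # Pain detected, still waiting for (or about to ask for) an answer.
        return "ask_if_help_needed"
    if emotion == "sadness":
        return "cheer_up"
    if emotion == "happiness":
        return "ask_what_made_happy"
    # Any other emotion is outside the scope of this sketch.
    return "no_action"


print(choose_action("pain"))                        # ask_if_help_needed
print(choose_action("pain", seconds_waited=20.0))   # call_emergency
print(choose_action("sadness"))                     # cheer_up
```

Checking pain first mirrors the order of the description: sadness and happiness are only considered when no pain is expressed.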

Companionship and Human-Robot relations

Trust

Relations are built on many complex social structures. One of the most important pillars of a relationship, especially when dealing with non-human entities, is trust. For a robot, trust is defined as follows: if the robot reacts and behaves as a human expects it to, the robot is considered trustworthy. Robots should therefore react in a predictable fashion. For our robot, we should build trust by making it behave as a person would expect, and avoid breaking that trust through unpredictable behavior or inaction. Because predictability is largely grounded in the expectations of the human, the design of the robot becomes very important. After all, appearance dictates much of what we expect.

Relations

Relations are not binary but are built slowly over time.

Facial emotion recognition software

The use of convolutional Neural Network:

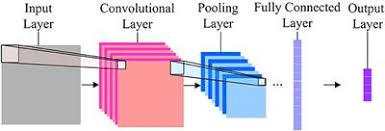

In our project, we will create a convolutional neural network (CNN) that allows our software to distinguish between three main emotions (pain, happiness and sadness, and possibly more) by analyzing the facial expression.

What is a convolutional neural network?

Convolutional neural networks are neural networks that are sensitive to spatial information. They are capable of recognizing complex shapes and patterns in an image, and they are one of the main categories of neural networks used for image recognition and image classification.

How does it work?

CNN image classification takes an input image, processes it and classifies it under certain categories (e.g. sad, happy, pain). The computer sees the input image as an array of pixels whose size depends on the image resolution: h x w x d (height, width, depth, where depth is the number of color channels).

Technically, deep learning CNN models are first trained and then tested. Training is done by providing the CNN with the data that we want to analyze. Each input image passes through a series of convolution layers with filters, pooling layers and fully connected layers, after which a softmax function is applied to classify the image, giving each class a probability value.

Different layers and their purposes:

- Convolution layer:

This is the first layer, which extracts features from the input image. It preserves the relation between pixels by learning image features from small squares of input data. Mathematically, it is an operation that slides a filter (fh x fw x d) over the image matrix (h x w x d), multiplies the overlapping values element-wise and sums them. With a stride of 1 and no padding, it outputs a volume of dimension (h - fh + 1) x (w - fw + 1) x 1.

Convolving an image with different filters can blur or sharpen it, or perform other operations such as edge enhancement and embossing.
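To make the output size concrete, here is a minimal NumPy sketch of a single-filter convolution with stride 1 and no padding. It is an illustration under stated assumptions, not the code of our network: the helper name `conv2d_valid`, the toy 5 x 5 x 3 image and the averaging filter are made up for the example. As in most CNN libraries, the filter is not flipped, so strictly speaking this is a cross-correlation.

```python
import numpy as np


def conv2d_valid(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Slide one filter over an image (stride 1, no padding).

    image  -- array of shape (h, w, d)
    kernel -- array of shape (fh, fw, d)
    Returns a feature map of shape (h - fh + 1, w - fw + 1); the
    trailing "x 1" of the text is dropped because there is one filter.
    """
    h, w, d = image.shape
    fh, fw, fd = kernel.shape
    if fd != d:
        raise ValueError("filter depth must match image depth")

    out = np.zeros((h - fh + 1, w - fw + 1))
    for i in range(h - fh + 1):
        for j in range(w - fw + 1):
            # Element-wise product of the filter and the patch under it,
            # summed over height, width and depth.
            patch = image[i:i + fh, j:j + fw, :]
            out[i, j] = np.sum(patch * kernel)
    return out


# Toy 5 x 5 RGB "image" and a 3 x 3 averaging (blur) filter.
image = np.arange(5 * 5 * 3, dtype=float).reshape(5, 5, 3)
kernel = np.ones((3, 3, 3)) / 27.0
feature_map = conv2d_valid(image, kernel)
print(feature_map.shape)  # (3, 3), i.e. (5 - 3 + 1) x (5 - 3 + 1)
```

In a real network the filter values are not chosen by hand as here but learned during training.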

- Pooling layer:

Reduces the number of parameters when the images are too large. Max pooling takes the largest element from each window of the rectified feature map.
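A minimal NumPy sketch of max pooling may help here. It assumes non-overlapping square windows (window size equal to stride), which is a common default but not something the text specifies; the helper name `max_pool2d` and the example values are made up for illustration.

```python
import numpy as np


def max_pool2d(feature_map: np.ndarray, size: int = 2) -> np.ndarray:
    """Max pooling with non-overlapping size x size windows.

    Rows/columns that do not fill a complete window are dropped.
    """
    h, w = feature_map.shape
    h_out, w_out = h // size, w // size
    trimmed = feature_map[:h_out * size, :w_out * size]
    # Split the map into (h_out, size, w_out, size) blocks and take
    # the maximum inside each block.
    blocks = trimmed.reshape(h_out, size, w_out, size)
    return blocks.max(axis=(1, 3))


feature_map = np.array([[1.0, 3.0, 2.0, 0.0],
                        [4.0, 6.0, 1.0, 1.0],
                        [0.0, 2.0, 9.0, 8.0],
                        [1.0, 1.0, 7.0, 5.0]])
rectified = np.maximum(feature_map, 0.0)  # ReLU, the "rectified" map
pooled = max_pool2d(rectified, size=2)
print(pooled)
# [[6. 2.]
#  [2. 9.]]
```

The 4 x 4 map shrinks to 2 x 2, so the following layers have a quarter of the values to process while the strongest response in each region is kept.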

- Fully connected layer:

We flatten our matrix into a vector and feed it into a fully connected layer, as in a regular neural network. Finally, an activation function classifies the outputs.
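The flatten, fully connected and softmax steps can be sketched in NumPy as follows. This is a hedged illustration: the weights are random rather than trained, and the names `softmax`, `dense_softmax` and the three-label list are assumptions for the example, so the predicted label is meaningless beyond showing the mechanics.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    """Turn raw scores into probabilities that sum to 1."""
    z = z - np.max(z)  # subtract the maximum for numerical stability
    e = np.exp(z)
    return e / e.sum()


def dense_softmax(features: np.ndarray,
                  weights: np.ndarray,
                  bias: np.ndarray) -> np.ndarray:
    """Flatten a feature map, apply one fully connected layer, then softmax."""
    x = features.reshape(-1)          # flatten the matrix into a vector
    return softmax(weights @ x + bias)


labels = ["happiness", "sadness", "pain"]

rng = np.random.default_rng(0)
pooled = rng.random((2, 2))                              # stand-in for a pooled feature map
weights = rng.normal(size=(len(labels), pooled.size))    # untrained, random weights
bias = np.zeros(len(labels))

probs = dense_softmax(pooled, weights, bias)
predicted = labels[int(np.argmax(probs))]
print(dict(zip(labels, np.round(probs, 3))), predicted)
```

The class with the highest probability is taken as the recognized emotion; the probabilities themselves can serve as a confidence measure.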

Notes taken while building the software

About the software:

We will build a CNN which is capable of classifying different facial emotions, and try to maximize its accuracy.

We’ll use Python 3.6 as the programming language.

The CNN for detecting facial emotion will be built using Keras.

To detect the faces in the images, we will use the LBP cascade classifier for frontal face detection, which is available in OpenCV.

Dataset: The images that we will use in this project are from the Cohn-Kanade (CK+) database, which contains 593 image sequences of facial expressions labeled neutral, sadness, surprise, happiness, fear, anger, contempt and disgust. It lacks the pain facial expression; during my research I could not find any dataset that also includes pain expressions. One possible reason is that the facial expression of pain differs considerably from one person to another, and even for the same person it differs each time. This is something we still have to look into.

Some steps to take:

- Navigate the CK+ directory structure, fetch the faces from the images and store them in emotion directories according to their labels.

- Build the emotion recognition network.

- Split the data into a training set, a testing set and a validation set.
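The data split in the last step could look roughly like the sketch below. The 70/15/15 proportions, the fixed seed, the helper name `split_dataset` and the file names are assumptions for illustration; the notes above do not fix any of them.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_dataset(samples: Sequence[T],
                  train_frac: float = 0.7,
                  val_frac: float = 0.15,
                  seed: int = 42) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle the samples and split them into train/validation/test lists.

    Whatever is left after the training and validation parts becomes
    the test set.
    """
    if train_frac <= 0 or val_frac < 0 or train_frac + val_frac >= 1:
        raise ValueError("fractions must leave room for a test set")

    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # fixed seed: reproducible split

    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


# Hypothetical (file name, label) pairs standing in for the sorted face images.
samples = [(f"face_{i:03d}.png", "happiness" if i % 2 == 0 else "sadness")
           for i in range(100)]
train, val, test = split_dataset(samples)
print(len(train), len(val), len(test))
```

Because CK+ contains several images of the same subject, it may be worth splitting by subject rather than by image, so that the same face does not end up in both the training and the test set.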

Papers

- Blechar, Ł., & Zalewska, P. (2019). The role of robots in the improving work of nurses. Pielegniarstwo XXI wieku / Nursing in the 21st Century, 18(3), 174–182. https://doi.org/10.2478/pielxxiw-2019-0026

Nurses cannot be replaced by robots. Their task is more complex than just the routine tasks that they deliver. However, due to the enormous shortage of nurses, the pressure on nurses prevents them from giving this human side of care. Therefore robots cannot replace nurses, but they can relieve them of routine care and create more time for empathic and more human care.

- Shibata, T., & Wada, K. (2011). Robot Therapy: A New Approach for Mental Healthcare of the Elderly A Mini-Review. Gerontology, 57(4), 378–386. https://doi.org/10.1159/000319015

The elderly react positively to a robot companion (animal) when it reacts as they would expect. The more knowledge people have about the animal the robot is mimicking, the more critical they are of its performance.

- Shibata, T. (2004). An overview of human interactive robots for psychological enrichment. Proceedings of the IEEE, 92(11), 1749–1758. https://doi.org/10.1109/jproc.2004.835383

Humans learn about the behavior of the robot and this changes the relationship. If a robot can learn about the behavior of the human the relation may deepen even more since the relation is no longer one sided. Intelligence and learning capabilities are therefore important in a care robot.

- Xiao, W., Li, M., Chen, M., & Barnawi, A. (2020). Deep interaction: Wearable robot-assisted emotion communication for enhancing perception and expression ability of children with Autism Spectrum Disorders. Future Generation Computer Systems, 108, 709–716. https://doi.org/10.1016/j.future.2020.03.022

Inability to recognize emotion is a serious problem for autistic children. A system to recognize emotions was built that incorporates visual and audio cues. Emotion recognition can be improved if audio cues are also considered.

- Pepito, J. A., & Locsin, R. (2019). Can nurses remain relevant in a technologically advanced future? International Journal of Nursing Sciences, 6(1), 106–110. https://doi.org/10.1016/j.ijnss.2018.09.013

Nurses should be more involved in the development of care robots and other care technology. Nurses can oversee, use and apply the right technology for each specific patient. Seeing nurses as a main user should influence technology.

- Tarnowski, P., Kołodziej, M., Majkowski, A., & Rak, R. J. (2017). Emotion recognition using facial expressions. Procedia Computer Science, 108, 1175–1184. doi: 10.1016/j.procs.2017.05.025

Abstract: In the article there are presented the results of recognition of seven emotional states (neutral, joy, sadness, surprise, anger, fear, disgust) based on facial expressions. Coefficients describing elements of facial expressions, registered for six subjects, were used as features

- Jonathan, Lim, A. P., Paoline, Kusuma, G. P., & Zahra, A. (2018). Facial Emotion Recognition Using Computer Vision. 2018 Indonesian Association for Pattern Recognition International Conference (INAPR). doi: 10.1109/inapr.2018.8626999

Abstract:This paper examines how human emotion, which is often expressed by face expression, could be recognized using computer vision

- Singh, D. (2012). Human Emotion Recognition System. International Journal of Image, Graphics and Signal Processing, 4(8), 50–56. doi: 10.5815/ijigsp.2012.08.07

Abstract: This paper discusses the application of feature extraction of facial expressions with combination of neural network for the recognition of different facial emotions (happy, sad, angry, fear, surprised, neutral etc..)

- Meder, C., Iacono, L. L., & Guillen, S. S. (2018). Affective Robots: Evaluation of Automatic Emotion Recognition Approaches on a Humanoid Robot towards Emotionally Intelligent Machines. World Academy of Science, Engineering and Technology International Journal of Mechanical and Mechatronics Engineering. doi: 10.1999/1307-6892/10009027

Abstract:In this paper, the emotion recognition capabilities of the humanoid robot Pepper are experimentally explored, based on the facial expressions for the so-called basic emotions, as well as how it performs in contrast to other state-of-the-art approaches with both expression databases compiled in academic environments and real subjects showing posed expressions as well as spontaneous emotional reactions

- Draper, H., & Sorell, T. (2016). Ethical values and social care robots for older people: an international qualitative study. Ethics and Information Technology, 19(1), 49–68. doi: 10.1007/s10676-016-9413-1

This article focuses on the values that care robots for older people need to have. Many participants from different countries, including older people and their informal and formal carers, were given different scenarios of how robots should be used. Based on that discussion, a set of values was derived and prioritized.

- Birmingham, E., Svärd, J., Kanan, C., & Fischer, H. (2018). Exploring emotional expression recognition in aging adults using the Moving Window Technique. Plos One, 13(10). doi: 10.1371/journal.pone.0205341

This article studies facial expressions for different age groups. The Moving Window Technique (MWT) was used to identify differences in facial expression between older adults and young adults.

- Ko, B. (2018). A Brief Review of Facial Emotion Recognition Based on Visual Information. Sensors, 18(2), 401. doi: 10.3390/s18020401

In this paper, conventional FER approaches are described along with a summary of the representative categories of FER systems and their main algorithms. It focuses on an up-to-date hybrid deep-learning approach combining a convolutional neural network (CNN) for the spatial features of an individual frame and long short-term memory (LSTM) for temporal features of consecutive frames.

- Tan, L., Zhang, K., Wang, K., Zeng, X., Peng, X., & Qiao, Y. (2017). Group emotion recognition with individual facial emotion CNNs and global image based CNNs. Proceedings of the 19th ACM International Conference on Multimodal Interaction - ICMI 2017. doi: 10.1145/3136755.3143008

This paper focuses on classifying an image into one of the group emotions: positive, neutral or negative. The approach used is based on convolutional neural networks (CNNs).

- Levi, G., & Hassner, T. (2015). Emotion Recognition in the Wild via Convolutional Neural Networks and Mapped Binary Patterns. Proceedings of the 2015 ACM on International Conference on Multimodal Interaction - ICMI 15. doi: 10.1145/2818346.2830587

This paper presents a novel method for classifying emotions from static facial images. The approach builds on the success of convolutional neural networks (CNNs) on face recognition problems.

Weekly contribution

Week 1

| Name | Tasks | Total hours |
|---|---|---|
| Cahitcan | Research (3h) - problem statement (1.5h) - studying papers (2h) - brainstorming ideas with the group (2h) - help on planning (0.5h) | 9 hours |
| Zakaria | Introduction lecture (2h) - brainstorming ideas with the group (2h) - research about the topic (2h) - planning (0.5h) - users and their needs (0.5h) - studying scientific papers (3h) | 10 hours |
| Lex | Introduction and brainstorm (4h) - research (3h) - approach (1.5h) - planning (0.5h) | 9 hours |

Week 2

| Name | Tasks | Total hours |
|---|---|---|
| Cahitcan | Meeting (1.5h) - RPCs (1.5h) - state of the art (2h) - USE analysis (1h) | 6 hours |
| Zakaria | Meeting (1.5h) - USE analysis (3h) - RPCs (2.5h) - robots that already exist (2h) | 9 hours |
| Lex | Meeting (1.5h) - writing the interview (3h) - distributing the interview (1h) | 5.5 hours |

Week 3

| Name | Tasks | Total hours |
|---|---|---|
| Cahitcan | Meeting (1h) - research on how facial expressions convey emotions (4h) - finding and reading papers (3.5h) | 8.5 hours |
| Zakaria | How the robot should react to different emotions (3h) - meeting (1h) - research about CNN (5h) | 9 hours |
| Lex | Meeting (1h) - research on trust and companionship (7h) - processing interview | - |

Week 4

| Name | Tasks | Total hours |
|---|---|---|
| Cahitcan | - | - |
| Zakaria | The use of convolutional neural network (4h) - work on building up the software (download tools, make specifications, start programming) (4.5h) | - |
| Lex | - | - |