PRE2019 3 Group12

Group Members

| Name | ID | Major | |
|---|---|---|---|

Subject

A real-time camera-based recognition device capable of identifying vacant seats in areas with dynamic workplaces. These include areas like workspaces at Metaforum for studying, seats at a library or empty desks at flexible workplaces at a company. This information can be communicated to the users, which will minimize the time and effort it takes to find a place to work.

Problem Statement

During examination weeks many students want to work and study at the workplaces in Metaforum. However, during the day it is often very busy and it might take a while to find a place to sit, costing effort and precious study time. If students knew the locations of vacant seats, it would significantly reduce the amount of time it takes to find them and would probably also reduce the distance that needs to be walked, decreasing the noise for the people already studying. The same issue could arise at various other places such as public libraries or flexible workspaces. An automatic method to determine the vacant workplaces would be ideal to deal with situations like this.

Objectives

- Libraries and other flexible workplaces

The system should be able to tell the user whether there are vacant seats available and if so, in what area.

- Room reservation

It should be able to detect whether there are people in a room and if that's not the case for a certain amount of time, it should set the room to available for reservation.
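The room-release rule described above can be sketched as a small timer. This is only an illustration: the class name `RoomMonitor`, the 15-minute default and the idea of feeding it a people count per camera frame are assumptions, not part of the project's design.

```python
class RoomMonitor:
    """Tracks how long a room has been empty (hypothetical helper)."""

    def __init__(self, start_time: float, release_after_s: float = 900.0):
        # release_after_s is an assumed value; the text only says
        # "a certain amount of time".
        self.release_after_s = release_after_s
        self.last_activity = start_time

    def update(self, timestamp: float, people_detected: int) -> bool:
        """Feed one detection result; return True if the room can be released."""
        if people_detected > 0:
            self.last_activity = timestamp
        return (timestamp - self.last_activity) >= self.release_after_s


monitor = RoomMonitor(start_time=0.0, release_after_s=600.0)
print(monitor.update(100.0, people_detected=2))  # someone is present
print(monitor.update(700.0, people_detected=0))  # empty for 600 s
```

Any new detection of a person resets the timer, so a short absence does not release the room.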

Users

The users we plan to target with our project include multiple groups of people, since it can be applied in various environments. Possible users include students, employees making use of flexible work spaces or library visitors. Each of these users can benefit from the technology in two situations. The first is in larger study or working halls, comparable to Metaforum, for checking if there are available places. The second is in smaller study rooms, comparable to booking rooms in Atlas for cancelling reservations if the student doesn't show up or leaves earlier.

Society

Our system could have both positive and negative effects on society as a whole if the assumption is made that our system would be widely adopted by public institutions and companies alike. If our system is widely adopted by companies, it might act as a driving force in the increase of the popularity of flexible workspaces. Since research has shown that flexible workspaces can provide several advantages to the environment of employees, leading to increased productivity and work satisfaction, this has the possibility of being a positive force in society.

There might also be negative consequences, such as an increased concern for privacy due to the widespread implementation of our system. Since our technology relies on the use of cameras, there will need to be constant monitoring of the workspaces for it to work properly. This might make people uneasy and lead to the anxiety of being watched. However, since our technology only uses the filmed footage for the algorithm to make a decision in real time, there is no need to record the footage or for it to be viewed by humans at all. This means that properly informing employees and other users of our technology about how it functions can greatly reduce the concerns regarding privacy.

Enterprise

Companies are able to benefit from our solution in several ways. First and foremost, companies that implement our system in their flexible workspaces would need fewer buildings to accommodate their employees, because the workspaces are used more efficiently. This will save building costs, especially for larger companies or high-tech companies that might require expensive equipment in their flexible workspaces.

Approach

Here a number of possible approaches are described with their advantages and disadvantages. Our goal is to use the "camera-based recognition software approach", but if that fails, we have some other possible methods to achieve our goal.

Camera-based recognition software: The system uses a camera to detect empty workplaces. It can tell whether there is a person sitting at the workplace or if it’s free for other people. The camera will be installed in higher places to decrease the chance of other objects obstructing the view of the camera.

Advantages:

- Not only can it tell whether a person is sitting in a certain workplace or not, it can also tell whether a person is only taking a break or is leaving the workplace for someone else to take (by checking whether the person has also taken their belongings with them)

- (Relatively) easy to install

- Only a limited number of cameras is needed, since one camera (if placed right) can cover a large area, which also reduces the overall costs

Disadvantages:

- Visual data of people will be captured, which means that people might feel that their privacy is violated.

- Developing an algorithm that accurately draws all of these conclusions might be difficult.

Echo location-based:

The system is based on the reflection of sound waves emitted by a sound source. The reflected sound will be different based on whether a person is sitting on the chair or not. The distance the sound has to travel is shorter if there is a person (taller than the chair) sitting on the chair.
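The distance reasoning above can be illustrated with a time-of-flight calculation. This is a minimal sketch under stated assumptions: the sensor is assumed to point at the chair, the speed of sound is taken as roughly 343 m/s (air at about 20 °C), and the function names and the 0.15 m margin are made up for the example.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at about 20 °C


def echo_distance_m(round_trip_s: float) -> float:
    """Distance to the reflecting surface; the sound travels there and back."""
    return SPEED_OF_SOUND_M_S * round_trip_s / 2.0


def seat_occupied(round_trip_s: float, empty_distance_m: float,
                  margin_m: float = 0.15) -> bool:
    """A person shortens the path compared to the calibrated empty chair.

    margin_m is an assumed tolerance to absorb measurement noise.
    """
    return echo_distance_m(round_trip_s) < empty_distance_m - margin_m


# Sensor calibrated at 1.2 m to the empty chair (hypothetical numbers).
print(seat_occupied(0.005, empty_distance_m=1.2))  # echo returns early
print(seat_occupied(0.007, empty_distance_m=1.2))  # echo close to baseline
```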

Advantages:

- It does not capture any visual data, so people are less likely to feel that their privacy is violated.

- One sensor can cover quite a large range, since it measures the differences between signals over time and always has something to compare against.

Disadvantages:

- Other objects can degrade the signal if they’re close to the chair or person, making the measurement of the sound waves less accurate.

- It can only detect whether there is someone in the chair; it cannot tell whether the person has left belongings on the desk, indicating that he or she is coming back.

Movement-based:

Movement-based sensors can work on multiple principles: passive infrared (PIR), microwave, ultrasonic, tomographic motion detection, video camera software and gesture detection. Many current movement-based sensors combine two of these methods to detect movement. In general, the field of view of a movement-based sensor can also be divided into zones, with movement detected in each zone separately.

Advantages:

- A person studying will generally move while writing, typing, etc., so it is easy to detect a person sitting in a chair or at a location. This is also useful for detecting quiet spaces.

- Depending on which detection method is used, it may not capture any visual data, so people are less likely to feel that their privacy is violated.

Disadvantages:

- It can only detect movement, so if there is no movement in a certain zone, it only knows that nothing is moving there; it does not know whether there is actually a chair at that place.

- A movement-based sensor must have many separate zones for movement detection. If it has one big zone, any movement in that zone will mark the whole zone as busy, which makes it hard to detect empty chairs.

- Since chairs are not fixed in one location, an occupied chair can sit between two zones and mark both zones as busy, while a chair in one of those zones might still be empty, which cannot be seen.

Thermographic-based / Infrared:

Thermographic-based detection relies on the temperature differences between objects to distinguish them. Since humans, chairs, tables and the floor have different temperatures, a thermographic image should make it possible to tell them apart.

Advantages:

- A thermographic based camera detects heat, so when a person is sitting on a chair it will detect a higher temperature which indicates that the chair is occupied, while a colder object is more likely to be a chair.

- Heat lingers when someone leaves a chair: the chair will have a higher temperature than normal, since a person just sat on it and transferred heat onto it. This could be used as a sort of buffer to make sure that the chair has been empty for a while before marking it as empty.

- While visual data is collected, a thermographic image will make it hard to actually identify a person which will decrease the violation of privacy people might feel.

Disadvantages:

- There will probably be a difference in the base temperature of the chair due to changes in the weather over the year and even within a single day. A chair next to a window with the sun shining on it will probably have a higher temperature than a chair in the shade. In the summer, unless the temperature is really well regulated, the area in view of the camera will generally have a higher temperature than in the winter, which could make it harder to detect differences. So a lot of factors have to be incorporated into the design to even out these effects.

- Normal consumer thermographic cameras have a small viewing angle and are only precise at relatively close range. For objects further away the precision of the camera goes down, which means that more cameras must be installed, on lower ceilings, to keep the detection accurate, and this can become quite expensive.

User Requirements

In order to develop a sound engineering product it is important not to lose touch with the actual requirements and needs of our intended users. Although we as students are for a large part also users ourselves, we can still be misled in our assumptions about the user requirements and needs by the anecdotal problems that we personally face. For this reason we have made a survey with the purpose of determining the users' stances on some aspects of our project.

We have chosen to focus on multiple choice questions over open questions in our survey. The main reason for this is that open questions are a lot harder to analyze properly, since the answers can’t easily be quantified into different categories. However, open questions do have the advantage of removing some of the subjectivity of the creators of the survey from the answers. Although some subjectivity will remain due to how the creators of the survey chose and formulated the questions, their subjectivity only extends to how people respond if the answers are pre-formulated as well. For this reason we have added an open question at the end that asks about any miscellaneous thoughts or concerns that the students might have. This is important, since there might be aspects of our project that we have not yet considered and have therefore not included in the multiple choice questions of the survey. The most prominent of these concerns, if there are any, should show up in the questionnaire this way. There might also be correlations between answers to the different questions that could tell us more about our users.

In order to paint a clear picture of the application of our idea we have selected the library of Metaforum as an example setting for the survey, as it is the busiest and most popular of the open study spaces at the university. The following aspects are inquired about in the survey:

- How often do you approximately study in Metaforum? This is asked in order to ensure the credibility of the other answers.

- How often do you have trouble finding a place to study in Metaforum? This is asked to gauge how much need there is for a solution to our problem statement.

- Would you appreciate and use an app that allows you to see a map of available open study spaces? This is asked to determine if our users agree that our idea is conceptually a suitable solution to the problem statement.

- If you answered "No" to the question above, what is your main reason for doing so? (open question). By asking this open question we try to detect any unnoticed reasons that might obstruct the usage of our application among our users.

- Would you have any concerns regarding privacy if the app made use of a network of cameras at these study places to determine where free places are available? This is asked to gauge how much our users are bothered by the privacy implications of the camera network that is necessary for our application.

- How would you answer the last question if it would be ensured that the footage from the cameras is exclusively used by software for determining where free chairs are located and therefore cannot be viewed by anyone? This is asked to determine the effect of our largest privacy measure, which is keeping the camera measurements in a closed system.

- Do you have any miscellaneous thoughts or concerns about our proposed solution? Finally, we ask another open question to ensure we haven’t missed any aspects of our application.

The preliminary poll can be found here: https://docs.google.com/forms/d/1z4lVlzjKQd_PsPZ4mfLZSS0lYALhH8XBwE3NGsSGTyM/edit?usp=sharing

State-of-the-art

Neural Networks

What is a neural network

Neural networks are a set of algorithms, modeled loosely after the human brain, that are designed to recognize patterns. They interpret sensory data through a kind of machine perception, labeling or clustering raw input. The patterns they recognize are numerical, contained in vectors, into which all real-world data, be it images, sound, text or time series, must be translated. Neural networks help us cluster and classify. You can think of them as a clustering and classification layer on top of the data you store and manage. They help to group unlabeled data according to similarities among the example inputs, and they classify data when they have a labeled dataset to train on. (Neural networks can also extract features that are fed to other algorithms for clustering and classification; so you can think of deep neural networks as components of larger machine-learning applications involving algorithms for reinforcement learning, classification and regression.) The most important applications, in our case are:

Classification

All classification tasks depend upon labeled datasets; that is, humans must transfer their knowledge to the dataset in order for a neural network to learn the correlation between labels and data. This is known as supervised learning. Examples include:

- Detect faces, identify people in images, recognize facial expressions (angry, joyful)

- Identify objects in images (stop signs, pedestrians, lane markers…)

- Recognize gestures in video

- Detect voices, identify speakers, transcribe speech to text, recognize sentiment in voices

- Classify text as spam (in emails), or fraudulent (in insurance claims); recognize sentiment in text (customer feedback)

Any labels that humans can generate, any outcomes that you care about and which correlate to data, can be used to train a neural network.

Clustering

Clustering or grouping is the detection of similarities. Deep learning does not require labels to detect similarities. Learning without labels is called unsupervised learning. Unlabeled data is the majority of data in the world. One law of machine learning is: the more data an algorithm can train on, the more accurate it will be. Therefore, unsupervised learning has the potential to produce highly accurate models. Examples include:

- Search: Comparing documents, images or sounds to surface similar items.

- Anomaly detection: The flipside of detecting similarities is detecting anomalies, or unusual behavior. In many cases, unusual behavior correlates highly with things you want to detect and prevent, such as fraud.

Model:

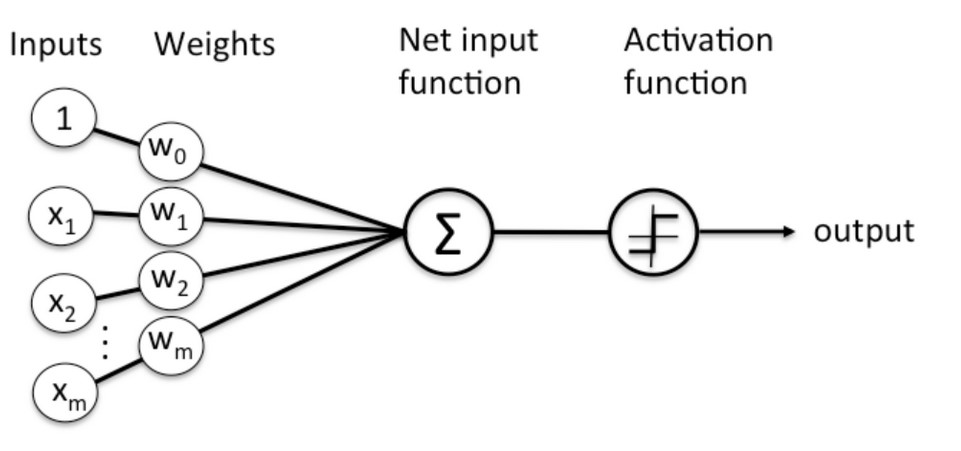

Here’s a diagram of what one node might look like.

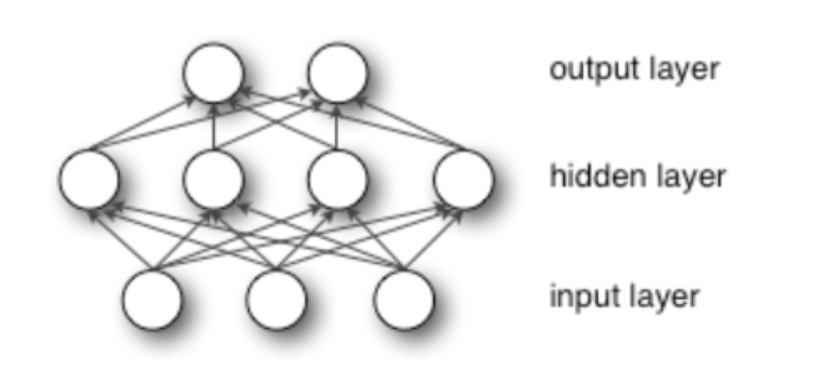

A node layer is a row of those neuron-like switches that turn on or off as the input is fed through the net. Each layer’s output is simultaneously the subsequent layer’s input, starting from an initial input layer receiving your data.

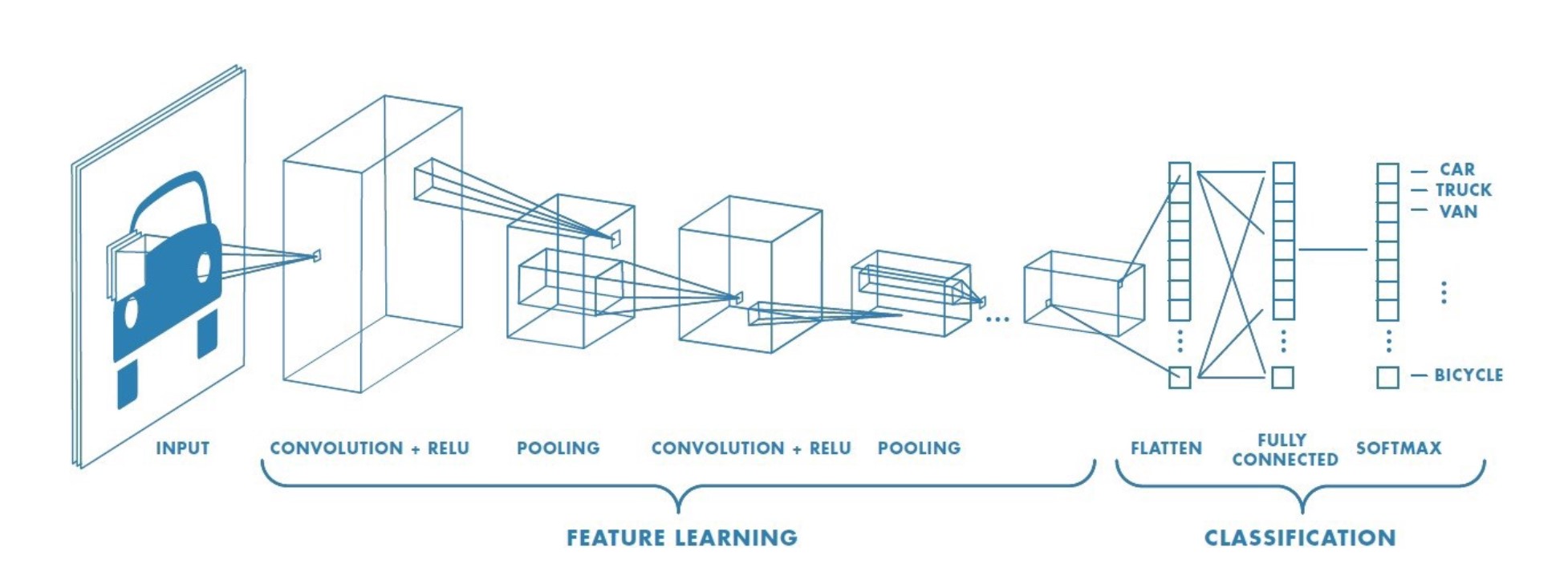
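The idea of nodes stacked in layers, where each layer's output is the next layer's input, can be sketched in plain Python. This is a minimal illustration, assuming the common formulation of a node as a weighted sum plus bias followed by a sigmoid activation; the weights below are arbitrary and nothing here is trained.

```python
import math


def sigmoid(x: float) -> float:
    """Squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def node_output(inputs, weights, bias):
    """One node: weighted sum of the inputs plus a bias, then an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


def layer_output(inputs, weight_rows, biases):
    """One layer: every node sees the same inputs but has its own weights."""
    return [node_output(inputs, w, b) for w, b in zip(weight_rows, biases)]


def forward(inputs, layers):
    """Feed the data through the net: each layer's output feeds the next."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer_output(activations, weight_rows, biases)
    return activations


# Two inputs -> hidden layer of three nodes -> one output node.
network = [
    ([[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]], [0.0, 0.0, 0.0]),
    ([[1.0, 1.0, 1.0]], [0.0]),
]
print(forward([1.0, 2.0], network))
```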

Convolutional Neural Network

For computer vision and image recognition, the most used type of neural network is a Convolutional Neural Network. A Convolutional Neural Network (ConvNet/CNN) is a Deep Learning algorithm which can take in an input image, assign importance (learnable weights and biases) to various aspects/objects in the image and be able to differentiate one from the other. The pre-processing required in a ConvNet is much lower as compared to other classification algorithms. While in primitive methods filters are hand-engineered, with enough training, ConvNets have the ability to learn these filters/characteristics.
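To make the notion of a filter concrete, the sketch below slides a small hand-engineered filter over a toy image. In a ConvNet the filter values would be learned during training instead of written by hand. The function name and the example values are illustrative only, and the operation shown is the unpadded, stride-1 sliding window commonly used in CNN layers.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0.0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Toy image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-engineered filter that responds to a dark-to-bright vertical edge.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
print(convolve2d(image, vertical_edge))  # largest response at the edge column
```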

Model:

Current Knowledge

Neural Networks

A widely used library for computer vision and machine learning is OpenCV. OpenCV (Open Source Computer Vision Library) is an open source computer vision and machine learning software library. OpenCV was built to provide a common infrastructure for computer vision applications and to accelerate the use of machine perception in commercial products. Being a BSD-licensed product, OpenCV makes it easy for businesses to utilize and modify the code.

The library has more than 2500 optimized algorithms, which includes a comprehensive set of both classic and state-of-the-art computer vision and machine learning algorithms. These algorithms can be used to detect and recognize faces, identify objects, classify human actions in videos, track camera movements, track moving objects, extract 3D models of objects, produce 3D point clouds from stereo cameras, stitch images together to produce a high resolution image of an entire scene, find similar images from an image database, remove red eyes from images taken using flash, follow eye movements, recognize scenery and establish markers to overlay it with augmented reality, etc. OpenCV has a user community of more than 47 thousand people and an estimated number of downloads exceeding 18 million. The library is used extensively in companies, research groups and by governmental bodies.

In terms of object detection, the current state of the art is an algorithm called Cascade Mask R-CNN. It builds on Cascade R-CNN, a multi-stage object detection architecture that consists of a sequence of detectors trained with increasing IoU (intersection over union) thresholds, so that each stage is more selective against close false positives. Compared to last year's state-of-the-art algorithm, Cascade Mask R-CNN has an overall 15% increase in performance, and a 25% increase compared to the state of the art of 2016.
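The IoU measure mentioned above can be computed for two axis-aligned boxes as the area of their intersection divided by the area of their union. The sketch below also shows how a rising threshold keeps fewer candidate boxes per stage. It only illustrates the thresholding idea, not Cascade R-CNN itself (which also refines the boxes between stages); the function names and the threshold values are assumptions chosen for the example.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def positives_per_stage(proposals, ground_truth, thresholds=(0.5, 0.6, 0.7)):
    """For each stage threshold, keep the proposals that count as positives."""
    return [
        [p for p in proposals if iou(p, ground_truth) >= t]
        for t in thresholds
    ]


truth = (0, 0, 10, 10)
proposals = [(0, 0, 10, 10), (0, 0, 10, 6.5), (0, 0, 10, 5.5)]
for stage in positives_per_stage(proposals, truth):
    print(len(stage), "proposals accepted")
```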

This method/approach can be found in the following papers:

Hybrid Task Cascade for Instance Segmentation[1]

CBNet: A Novel Composite Backbone Network Architecture for Object Detection. [2]

Cascade R-CNN: High Quality Object Detection And Instance Segmentation. [3]

This is a paper which describes the workings, differences and origin of multiple InfraRed cameras and techniques. [4]

Parking Space Vacancy Detection

Similar systems have already been implemented in real-life situations for car parking spaces.[5] Many parking environments have implemented ways to identify vacant parking spaces in an area using various methods: counter-based systems, which only provide information on the number of vacant spaces; sensor-based systems, which require ultrasound, infrared or magnetic sensors to be installed at each parking spot; and image or camera-based systems. Camera systems are the most cost-efficient method, as they only require a few cameras in order to function and many areas already have cameras installed. However, such systems are rarely implemented, as they do not work well in outdoor situations.

Researchers from the University of Melbourne have created a parking occupancy detection framework using a deep convolutional neural network (CNN) to detect outdoor parking spaces from images with an accuracy of up to 99.7%.[6] This shows the potential that image-based systems using CNNs have in these types of tasks. A major difference between our project and this research, however, is the movement of the spaces. Parking spaces always stay in the same position. A CNN is therefore able to focus on a specific part of the camera image that contains one parking space. In our situation the chairs can move around and aren't in a constant position. The neural network will therefore first have to find and identify all the chairs in its view before it can detect whether each one is vacant or not, an additional challenge we need to overcome.
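The two-step idea (first find the chairs, then decide whether each is vacant) could look roughly like the sketch below. It assumes some object detector has already produced bounding boxes for chairs and people in a frame; the detector itself, the function names and the 30% overlap threshold are hypothetical and are not the method of the cited research or a finished design.

```python
def overlap_fraction(chair, person):
    """Fraction of the chair box (x1, y1, x2, y2) covered by the person box."""
    cx1, cy1, cx2, cy2 = chair
    px1, py1, px2, py2 = person
    w = max(0.0, min(cx2, px2) - max(cx1, px1))
    h = max(0.0, min(cy2, py2) - max(cy1, py1))
    chair_area = (cx2 - cx1) * (cy2 - cy1)
    return (w * h) / chair_area if chair_area > 0 else 0.0


def vacant_chairs(chairs, people, min_overlap=0.3):
    """A chair counts as vacant if no person box covers enough of it.

    min_overlap is an assumed threshold that would need tuning on real data.
    """
    return [
        chair for chair in chairs
        if all(overlap_fraction(chair, p) < min_overlap for p in people)
    ]


# Boxes as they might come out of a detector for one frame (made-up values).
chairs = [(0, 0, 10, 10), (20, 0, 30, 10)]
people = [(2, 0, 12, 20)]
print(vacant_chairs(chairs, people))  # only the second chair remains
```

Because the chair boxes are recomputed for every frame, this approach does not rely on chairs staying in a fixed position.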

Another research group has performed similar research using deep learning in combination with a video system for real-time parking measurement.[7] Their method combines information across multiple image frames in a video sequence to remove noise and achieves higher accuracy than pure image-based methods.
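One simple way to combine information across frames is a majority vote over the most recent per-frame results, so that a single misclassified frame does not flip the reported state. The sketch below is a generic illustration of that idea and not the method of the cited paper; the class name and window size are assumptions.

```python
from collections import deque


class TemporalVote:
    """Smooths per-frame occupancy decisions with a sliding majority vote."""

    def __init__(self, window: int = 5):
        # Only the last `window` frame results are remembered.
        self.history = deque(maxlen=window)

    def update(self, occupied: bool) -> bool:
        """Add one frame result and return the smoothed decision."""
        self.history.append(occupied)
        return sum(self.history) * 2 > len(self.history)


vote = TemporalVote(window=5)
frames = [True, True, False, True, True]  # one noisy frame in the middle
print([vote.update(f) for f in frames])
```

A larger window suppresses more noise but makes the system slower to report that a seat has really become free.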

Seat or Workspace Occupancy Detection

A group of students from the Singapore Management University has tried to tackle a similar problem.[8] In their research they proposed a method using capacitance and infrared sensors to solve the problem of seat hogging. Using this they can accurately determine whether a seat is empty, occupied by a human, or the table is occupied by items. This method does require a sensor to be placed underneath each table and since in our situation the chairs move around, this method can't be used everywhere.

A seat occupancy method using capacitive sensing has also been proposed.[9] The research focused on car seats and can also detect the position of the seated person. A prototype has shown that it is feasible in real-time situations.

The Institute of Photogrammetry has also done research into an intelligent airbag system, only this time using camera footage.[10] They were also able to detect if the passenger seat was occupied or not and what the positions of the people were.

A method using cameras for counting people in seats is described in research by the National Laboratory of Pattern Recognition in China.[11] They propose a coarse-to-fine framework to detect the number of people in a meeting using a surveillance camera, with an accuracy of 99.88%.

Autodesk research also proposed a method for detecting seat occupancy, only this time for a cubicle or an entire room.[12] They used decision trees in combination with several types of sensors to determine what sensor is the most effective. The individual feature which best distinguished presence from absence was the root mean square of a passive infrared motion sensor. It had an accuracy of 98.4% but using multiple sensors only made the result worse, probably due to overfitting. This method could be implemented for rooms around the university but not for individual chairs.
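The root mean square feature mentioned above is straightforward to compute from a window of sensor samples. The sketch below pairs it with a single threshold, which corresponds to the simplest possible decision tree (one split); the threshold value and the sample numbers are made up and are not taken from the cited study.

```python
import math


def root_mean_square(samples):
    """RMS of a window of sensor readings."""
    if not samples:
        raise ValueError("need at least one sample")
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def presence_detected(samples, threshold):
    """Single-split rule: a high RMS of the motion signal suggests presence.

    threshold is an assumed value that would have to be learned from data.
    """
    return root_mean_square(samples) > threshold


quiet_room = [0.0, 0.1, -0.1, 0.0]
active_room = [1.0, -1.0, 1.0, -1.0]
print(presence_detected(quiet_room, threshold=0.5))
print(presence_detected(active_room, threshold=0.5))
```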

A similar idea was envisioned a few years ago with the creation of an app called RoomFinder which allowed students at Bryant University to find empty rooms around campus to study in.[13] This system, created by one of the students, taps into the sensors used by the automatic lighting system in these rooms, to detect whether a room is currently being used and sends that information to the app. As that system was already implemented around campus, it didn't require any capital investment.

Other Image Detection Research

Aerial and satellite images can also be used for object detection.[14] An automatic content-based analysis of aerial imagery is proposed to mark objects or regions with an accuracy of 98.6%.

Planning

Milestones

For our project we have decided upon the following milestones each week:

- Week 1: Decide on a subject, make a plan and do research on existing similar products and technologies.

- Week 2: Finish preparation research on USE aspects and subject and have a clear idea of the possibilities for our project.

- Week 3: Start writing code for our device and finish the design of our prototype.

- Week 4: Create the dataset of training images and buy the required items to build our device.

- Week 5: Have a working neural network and train it using our dataset.

- Week 6: Test our prototype in a staged setting and gather results and possible improvements.

- Week 7: Finish our prototype based on the test results and do one more final test.

- Week 8: Completely finish the Wiki page and the presentation on our project.

Deliverables

This project plans to provide the following deliverables:

- A Wiki page containing all our research and summaries of our project.

- A prototype of our proposed device.

- A presentation showing the results of our project.

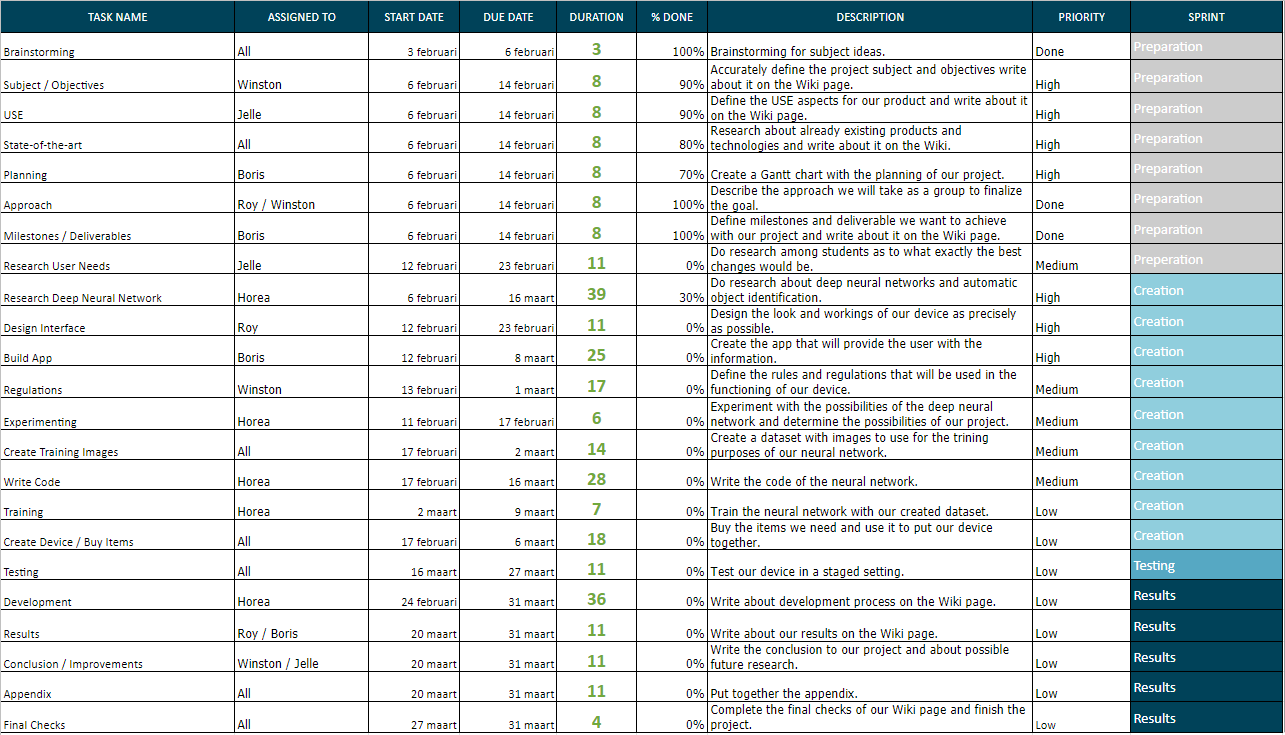

Schedule

Our current planning can be seen in the table below. This planning is not entirely finished and will be updated as the project goes on.

Chairs

State of art at the TU/e

The current system used at the Eindhoven University of Technology (TU/e) is based on Planon. This system manages the reservations for rooms and for the workplaces in the Auditorium and some other buildings around the campus. These workplaces can be recognised by the stickers and QR codes on the tables. They can currently be found in the buildings Auditorium, Vertigo, Flux and Cascade. These tables can be reserved using either Planon or BookMySpace.

Currently the system works by reserving a workplace, mostly defined by the table. This differs from our proposed system, explained further below, in which we look at the chairs instead of the tables. Another distinction is that this system works by reserving the workplace in advance: you can look in BookMySpace or Planon to find an unreserved workplace and make a reservation for yourself. However, it was concluded that students currently do not use this system much, since in general there is enough room at the places where this system is used. One advantage of this system is that you can ask people to leave a workplace you have reserved, although this could lead to inconvenience and arguments with students who might not want to leave. This is in turn another distinction from our proposed system, which mainly looks at the currently empty chairs in an area where you cannot reserve a chair.

Regulations

In order to run the system as optimally as possible, it is highly recommended that people follow the proposed rules and regulations. The reason why these are "highly recommended" and not mandatory is that it is not possible to pursue people who do not follow these rules and regulations. Currently, the system is not able to tell who exactly a person is when they are detected by the program. It treats everything as different "objects" and therefore it cannot distinguish different people from each other. Furthermore, even if the system were able to tell who a person is, distinguishing people from each other could also lead to some ethical problems. For instance, some people do not want the system to recognise who they are, which means that implementing this feature would raise privacy issues. Therefore it is better to leave the system in a state where it only detects people, and nothing more than that.

The rules and regulations are as follows:

- A person can only stay at a workplace that he or she has reserved

- A person cannot stay at a workplace outside of the reserved time

- A person can only claim one workplace at a time for him or herself

- Chairs should not be removed from the table or workplace they belong to

- No one is allowed to claim any chair or workplace for other people

These rules and regulations can also be treated more like "social norms", since violating these rules will likely not result in a punishment, as mentioned before, but it will of course still decrease the efficiency and performance of the entire system.

Interface Design

Design Choices

There are different ways to convey the information from the cameras to the people who want to use the system. However, it should be easily accessible, since people probably won’t use the system if the display is inconvenient. One of the more convenient ways is to make the information accessible through a smartphone, since almost everyone has one. In addition, most people keep their smartphone in a place that is easily accessible to them. A smartphone is also mobile, which helps people while moving through the environment, since they are not tied to one place as they would be with a computer screen.

Looking at possible ways to convey the information through a smartphone, there are multiple options, but the most commonly used are an app or a website, both of which are accessible from a phone provided that the phone has an internet connection. An internet connection is needed in either case to retrieve the information from the server, since otherwise the data could be outdated and people could walk to chairs that are already occupied.

Both options have their advantages and disadvantages. First, consider a website. A website is, or should be, accessible on all platforms, so it does not depend on a particular operating system. This probably results in less development time, since only one platform needs to be developed. One possible downside is that a browser tends to forget things like log-in information far more often than an app does, since session information is often deleted the moment you close the browser.

In comparison, an app is more dependent on the operating system: there is a chance that separate apps have to be developed for Android, iOS, Windows and other platforms. This will probably increase the development time whenever changes are to be made. An app has the advantage that, if a login system is used, it can store information locally more easily. However, an app has to be downloaded separately, so the system can only be accessed by people who have installed it.

Leaving aside which platform to use, there is also the question of how the information should be presented. Should the system just count the number of empty chairs on the whole floor? Should it divide the floor into sections and show the count of empty chairs in each section? Another option would be a complete floor plan with all chairs visible, with colours indicating whether they are occupied or not. It is also possible to combine these: first select an area you want to work in, shown with a count of the empty chairs, and then see the chairs in that area.

If a floor plan with all the chairs visible is chosen, it might have some unintended effects. If occupied chairs are visible, they also indicate that the part of the desk at which the chair is located is probably occupied as well. This is useful because occasionally a chair is free but there is no room left at the desk, for example at long tables like those in Metaforum at the TU/e. It also gives people the option to look for a place where they can sit together with multiple people, since they can see the locations of the occupied chairs. However, this matters less if chairs have fixed positions at a desk, which is more common in flexible workspaces in companies.

This might also lead to an ethical problem: if occupied chairs are visible, everyone with access could use the system to track the movement of the people sitting there. People with bad intentions could in theory use it to stalk fellow students or colleagues from a safer and greater distance, provided they know the person's initial position. If an occupied chair moves, there is a good chance that the person who is or was sitting on it is on the move as well. Of course there is no guarantee that it is still the same person, but it could give a good estimate.

Coming back to the choice between an app and a website, privacy also has to be taken into account. If anyone can access the system, people could track others' movements with bad intentions, as explained above. This can be solved by adding a login system so that only certain people can access the system. However, this has the downside that the system is less accessible and might be used less. Another option is to remove the visibility of occupied chairs. People's activity then becomes harder to track: since occupied chairs are not visible, it is unknown where someone who moves with a chair ends up. On the other hand, this removes the extra functionality of checking for free desk space at a long table.

However, different kinds of institutions and buildings would probably prefer different display options, for privacy reasons or for other functionality. A library might opt to show only empty chairs, without a login system, so that everyone who wants to use the system can easily do so. An enterprise, in comparison, would probably prefer a login system, since then people from outside the company cannot “spy” on the company. Although a bit more far-fetched, without a login a rival company could check the chairs to see, or guess, how well the company is doing from the number of empty and occupied chairs over the day.

Next is how the interface should work to convey the information clearly. As discussed before, you could show the count per area or a complete floor map of all the floors. Displaying a full map with all chairs can put a lot of strain on the system, since every refresh request from a student puts load on the server, and the more chair locations are requested, the higher that load. However, only displaying the count of the chairs on a floor does not fulfil the purpose of leading people to empty chairs.

A combination of the two options would probably be best, giving an easy-to-use interface as well as the function to see empty or occupied chairs. This could lead to a system that categorizes the buildings and floors first before actually displaying the chairs. Each of those categories would preferably show the number of empty chairs in that building, floor or section, so people can first decide which building or floor they want to go to. Once they have arrived, they can pull up the actual map to find the empty chairs.

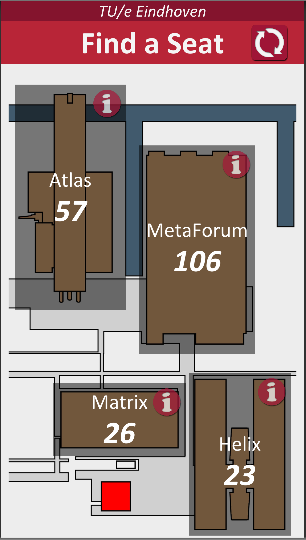

To elaborate on this idea: depending on how the system is used, only displaying the name of a building or section might not be enough for people to find a chair quickly. People experienced with the building or campus might know where the building is located, but new people won't. Those new people, who also don't know where an empty chair is most likely to be found, would be a great target user group for the system. In a single-building system you could first divide the building into floors, which are self-explanatory. However, if a floor is divided into sections, like Atlas at the TU/e, not everyone knows how those sections work. The optimal display would probably be a map of that floor on which the sections are visible, each with a number showing the amount of empty chairs. Clicking on a section then pulls up the map of that section with all the empty chairs visible.
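The drill-down described above needs a count of empty chairs at every level (building, floor, section). A minimal sketch of how those counts could be rolled up is given below. The nested dictionary, the function names and all numbers are assumptions made for illustration, not the structure of the actual database.

```python
from typing import Dict

# Hypothetical snapshot of the database: building -> floor -> area -> empty chairs.
# All names and numbers below are invented for illustration.
Campus = Dict[str, Dict[str, Dict[str, int]]]


def floor_totals(floors: Dict[str, Dict[str, int]]) -> Dict[str, int]:
    """Number of empty chairs per floor, summed over the areas of that floor."""
    return {floor: sum(areas.values()) for floor, areas in floors.items()}


def building_totals(campus: Campus) -> Dict[str, int]:
    """Number of empty chairs per building, summed over all of its floors."""
    return {name: sum(floor_totals(floors).values()) for name, floors in campus.items()}


campus: Campus = {
    "Atlas": {"Floor 1": {"North": 4, "South": 0}, "Floor 2": {"East": 7}},
    "Metaforum": {"Floor 0": {"Hall": 12}},
}

print(building_totals(campus))        # counts for the campus screen
print(floor_totals(campus["Atlas"]))  # counts after selecting a building
```

Only the totals have to be sent for the first screens. The individual chair positions are needed only once a section has been selected, which keeps the load of a refresh small.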

Possible Visualisation

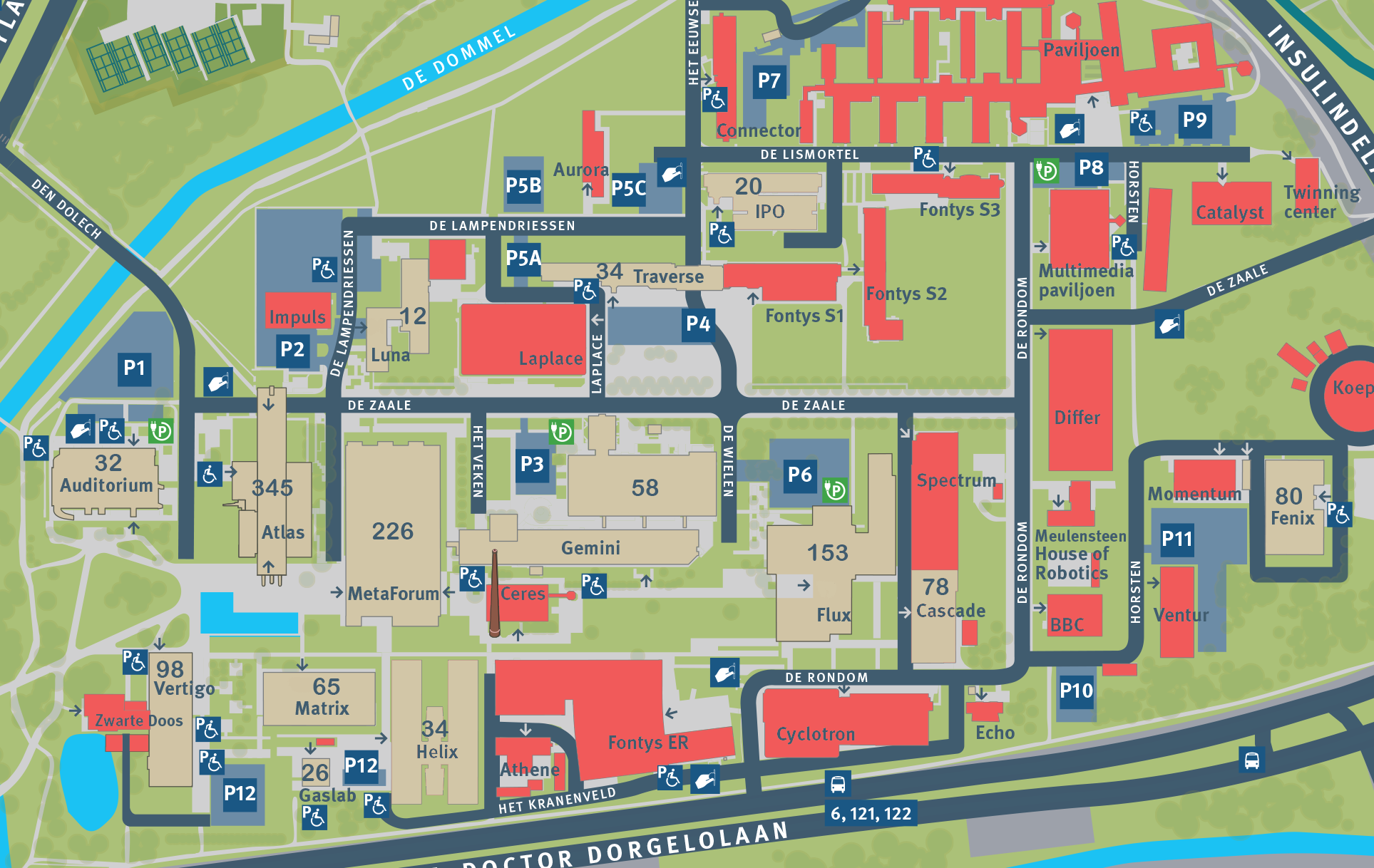

So how will the information be conveyed to people? The layout of the information can be almost the same for an app and a website. The only difference is that a website in a larger browser window, for example on a laptop or a big screen, could combine multiple screens. As an example of how this would look, the TU/e campus is taken, since this campus has multiple buildings with flexible workplaces for students and staff. At first a general map of the whole campus is visible. On this map, buildings without workplaces are displayed in such a manner that it is clear that there are no places available. The buildings that have workplaces available are displayed in a different colour, together with the number of chairs available. Next to the number of available chairs, the facilities of the building should also be shown. These facilities can be concentration workplaces, general workplaces and places for groups to sit.

As seen above, the buildings on the campus without workplaces are displayed in red. The non-red buildings have workplaces available, with numbers inside the building which tell the person how many free spaces are in the building.

This map would be favourable for people who are not used to the campus. For those who are, a map can be inconvenient, since it is less clear where the highest number of chairs is available, or because they already want to sit in a certain building. As discussed before, a list of the buildings would probably be more convenient for those people.


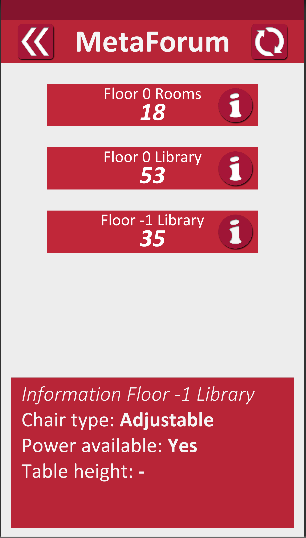

After a building has been selected, a similar screen should pop up, showing the different floors with places available instead of the different buildings. Here people can see the number of chairs per floor to decide where they would prefer to sit.

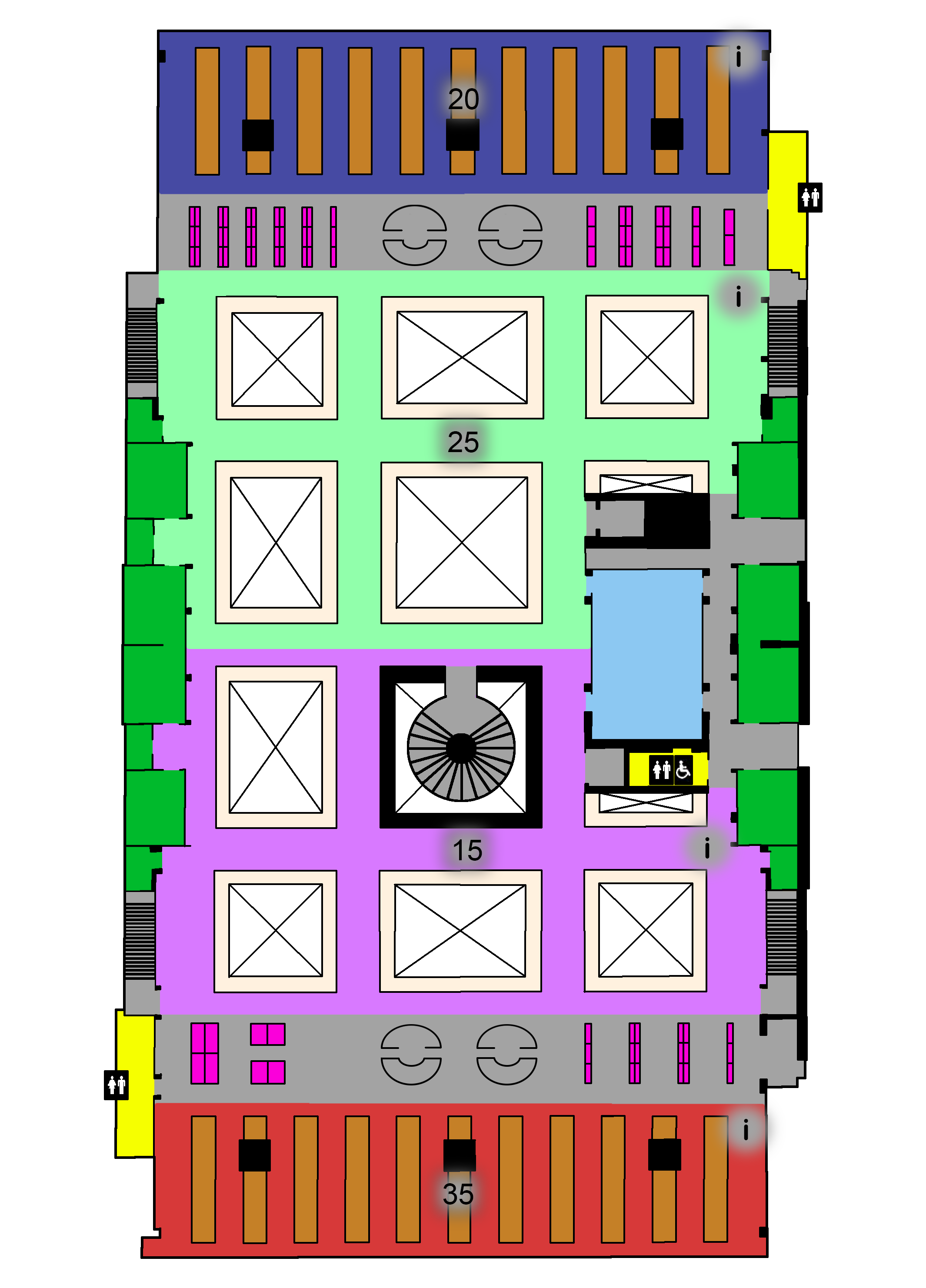

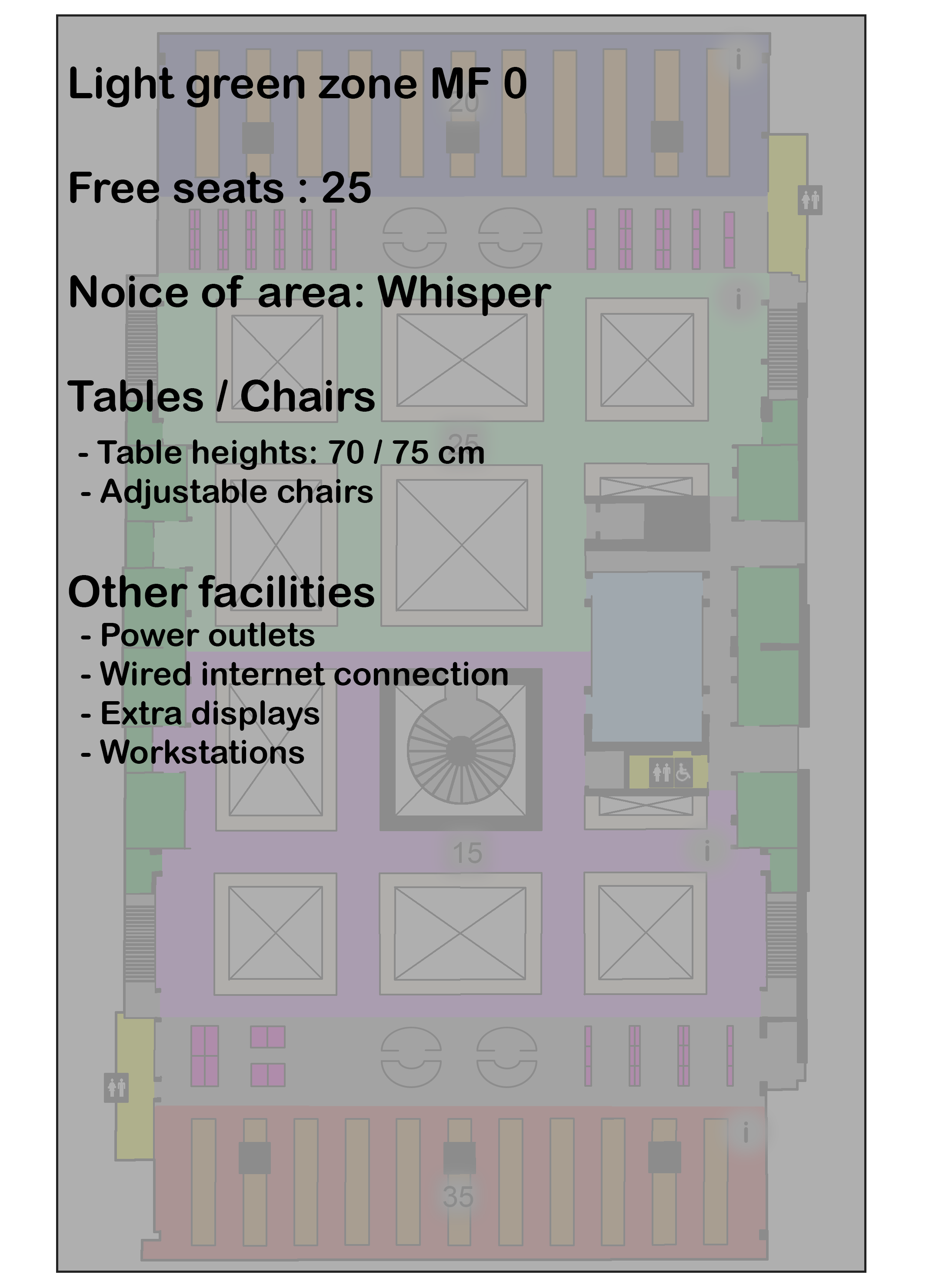

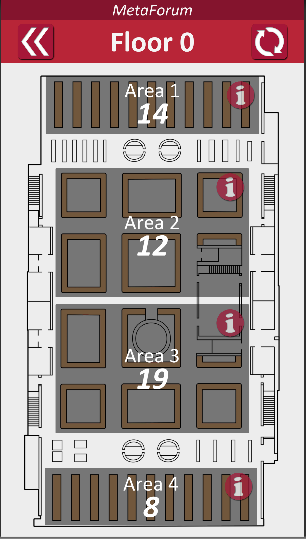

Next, a map of the floor is shown with the areas into which the floor is divided, each with the number of available chairs. If a login system is used, certain areas could be made visible only to people with certain permissions. At the TU/e, for example, a student would not see the flexible workspaces for staff members and the other way around, and students from outside the Faculties of IE & IS would not see the chairs reserved for those faculties. In addition, the facilities that the area offers should be visible: for example, whether a wired internet connection is possible, how many power outlets there are per student, whether students can sit there in silence, etc.
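If a login system is used, hiding areas from certain groups comes down to a simple filter. The sketch below is one possible way to express it. The `Area` class, the group names and the example areas are all hypothetical and not taken from the TU/e systems.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class Area:
    name: str
    empty_chairs: int
    # Groups that may see this area; an empty set means "visible to everyone".
    allowed_groups: FrozenSet[str] = frozenset()
    # Free-text facility labels, e.g. "wired internet" or "silent".
    facilities: Tuple[str, ...] = ()


def visible_areas(areas: Iterable[Area], user_groups: Iterable[str]) -> List[Area]:
    """Keep only the areas that the logged-in user is allowed to see."""
    groups = set(user_groups)
    return [a for a in areas if not a.allowed_groups or a.allowed_groups & groups]


# Invented example data.
areas = [
    Area("Open study hall", 12, facilities=("power outlets",)),
    Area("Staff flex desks", 5, frozenset({"staff"})),
    Area("IE&IS workplaces", 3, frozenset({"IE&IS"}), ("wired internet", "silent")),
]

for area in visible_areas(areas, ["student", "IE&IS"]):
    print(area.name, area.empty_chairs, area.facilities)
```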

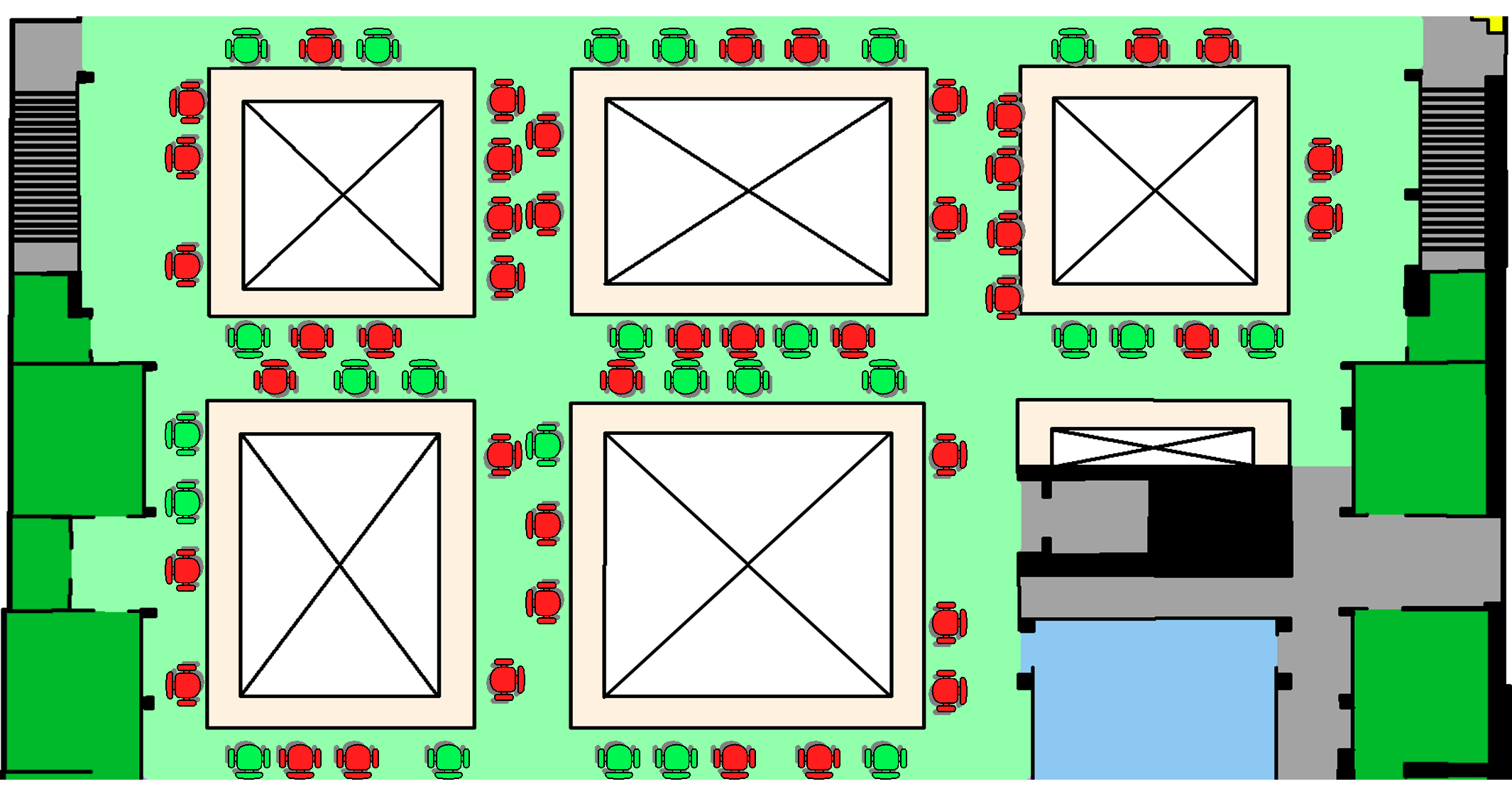

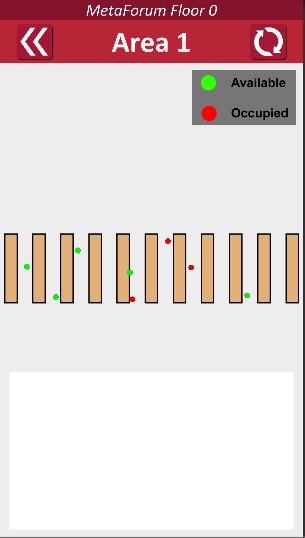

Subsequently, when the area the person wants to sit in is selected, only that area is shown, with either all the empty chairs or both the empty and occupied chairs, depending on how the system is used.


App Creation

The first thing tried was using Android Studio to make a suitable app for the user. This is relatively easy, as a basic app with just some buttons and an interface doesn't take much time to create. After a few online tutorials a basic interface was created, in which areas on campus can be selected and send you to a different page. This looks quite slick and can be updated quickly and easily. However, if you want to go beyond the bare basics, quite some programming knowledge of Java or Kotlin is required. This raises two issues. First, if we want to use a database for the project, implementing this will be a challenge, although some guides on this have already been found and it seems quite doable with a little knowledge of Java or Kotlin. The second issue is how we want to display the information about vacant seats to the users. If we want to do this using green dots as discussed, this will be very difficult in Android Studio. No way of doing this has been found so far; there may be a way, but it will surely be tough to implement.

Another way of creating the app could be using Unity. This is a program that can create various types of software on many platforms, including Android and iOS apps. Experimenting with Unity has been quite successful, and in a relatively short amount of time I was able to get the placement of the green dots to work. The other interface parts of the app were also easy to implement, to the point where only two other things need to be added: the overall design needs to be changed/adapted to be optimal for our users, and we need to get the positions of the dots from some database. Currently the positions are imported by the app from a txt file, but changing this to import from, for example, an SQL table shouldn't be hard. The basics are already partially implemented as well.

The decision of which method to use depends mostly on the design of the app. If we want to only show the number of seats per area for example, Android Studio would probably be easier. However, if we want to use the green dots, Unity would definitely be best as it already pretty much works on there.

Once the final design has been decided and we have a clearer idea of how we will use the database, finishing the app shouldn't take much time or effort anymore.

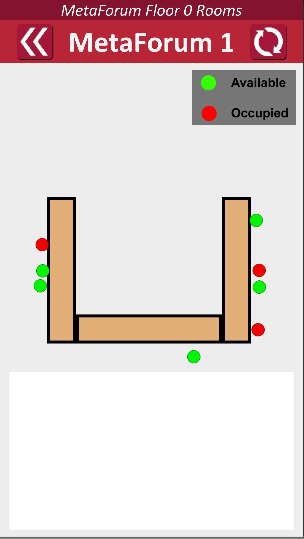

Current Design

The current design of the app consists of 4 screen levels.

The first screen is a map of the campus, with all the buildings that have free seats in beige and the buildings without available seats in red. It also shows the name of each building and its number of currently available seats. There is a refresh button in the top right of the screen that can be used to refresh the data on the number of available seats. The user can click on a building to go to the screen of that specific building. Each building also has an info button with information about the seating in that building.

On the second screen a list of buttons is shown with the floors and other areas of the building. It again shows the number of available seats on each floor, and there is again a refresh button to refresh this data. There is also a back button in the top left to go back to the previous screen. Every area also has an info button that, when clicked, shows a bar with information about the seats in that area. The user can click on a floor to go to the screen for that specific floor.

The third screen shows a map of the entire floor, with the tables that have free seats in beige. This map is divided into various areas, each showing its number of available seats. Again the screen has a refresh and a back button. The user can click on one of the areas to go to the final screen. Each area also has an info button with information about the seating in that area.

On this final screen a map of the selected area is shown with red and green dots on it. The green dots represent available seats at their position and the red dots represent seats that are taken. Again the screen has a refresh and a back button.

On the screens with the dots, the app currently takes the number of available and taken seats from the Firebase database. As we don't know the positions of the chairs in our current prototype, this information is assigned to random chairs in random positions around the tables, to show that different chair positions can be displayed.

To be added

Only images of what the seating area looks like need to be added to the screens with the dots (a white area can already be seen, we just need to make a picture and add it). I unfortunately can't go to campus at the moment to make those pictures.

Chair coordinate transformation

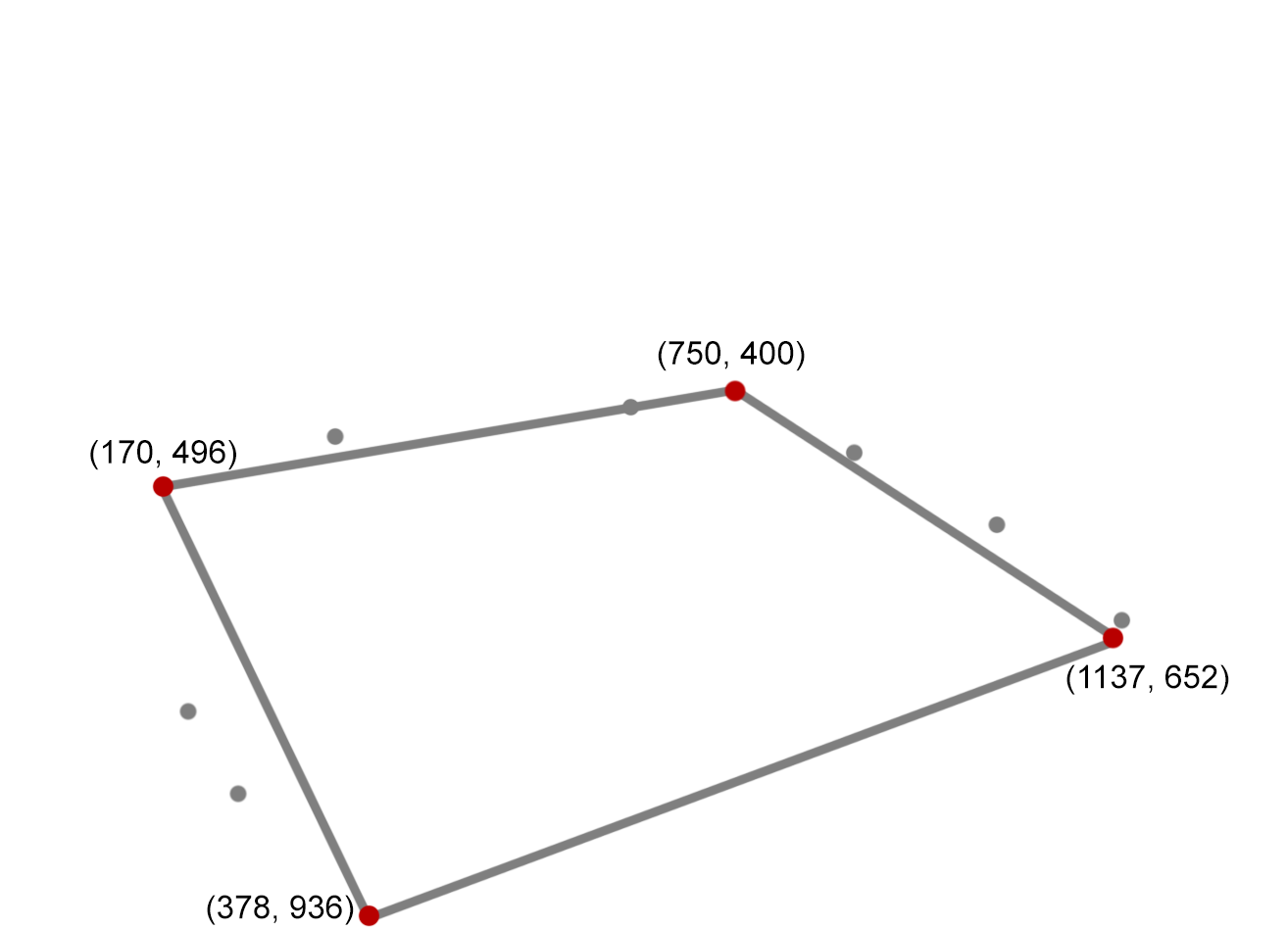

One issue that pops up when trying to show the positions of the chairs in the app is that the coordinates of the chairs in the camera feed don't align with the coordinates they should have in the top view used in the app. This is because the perspective of the camera is different. Therefore the coordinates determined by the neural network first need to be converted to a different plane. This can be done using a perspective transformation.

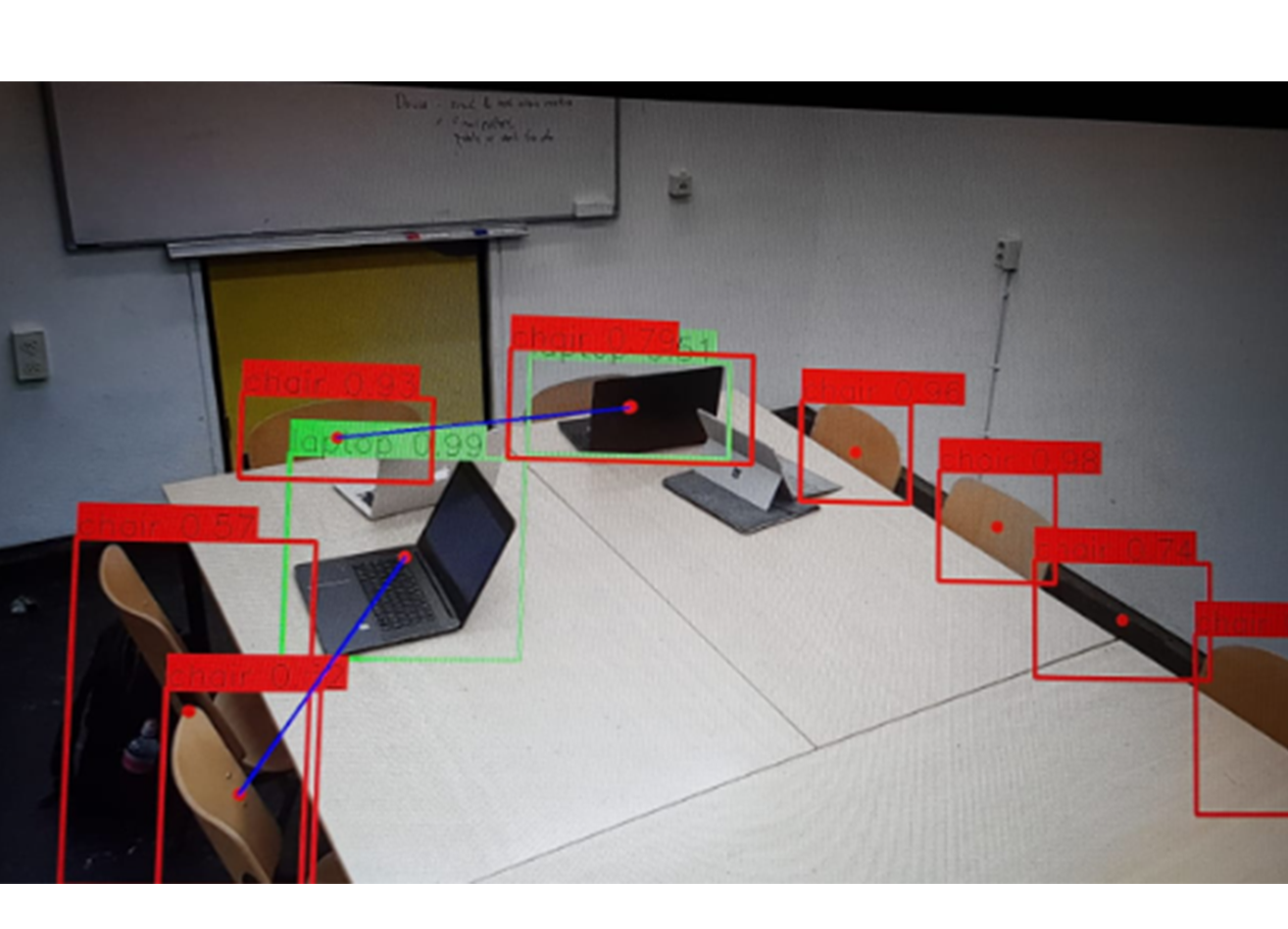

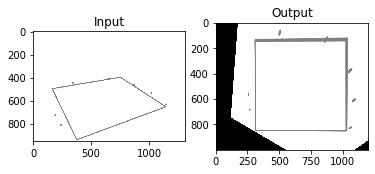

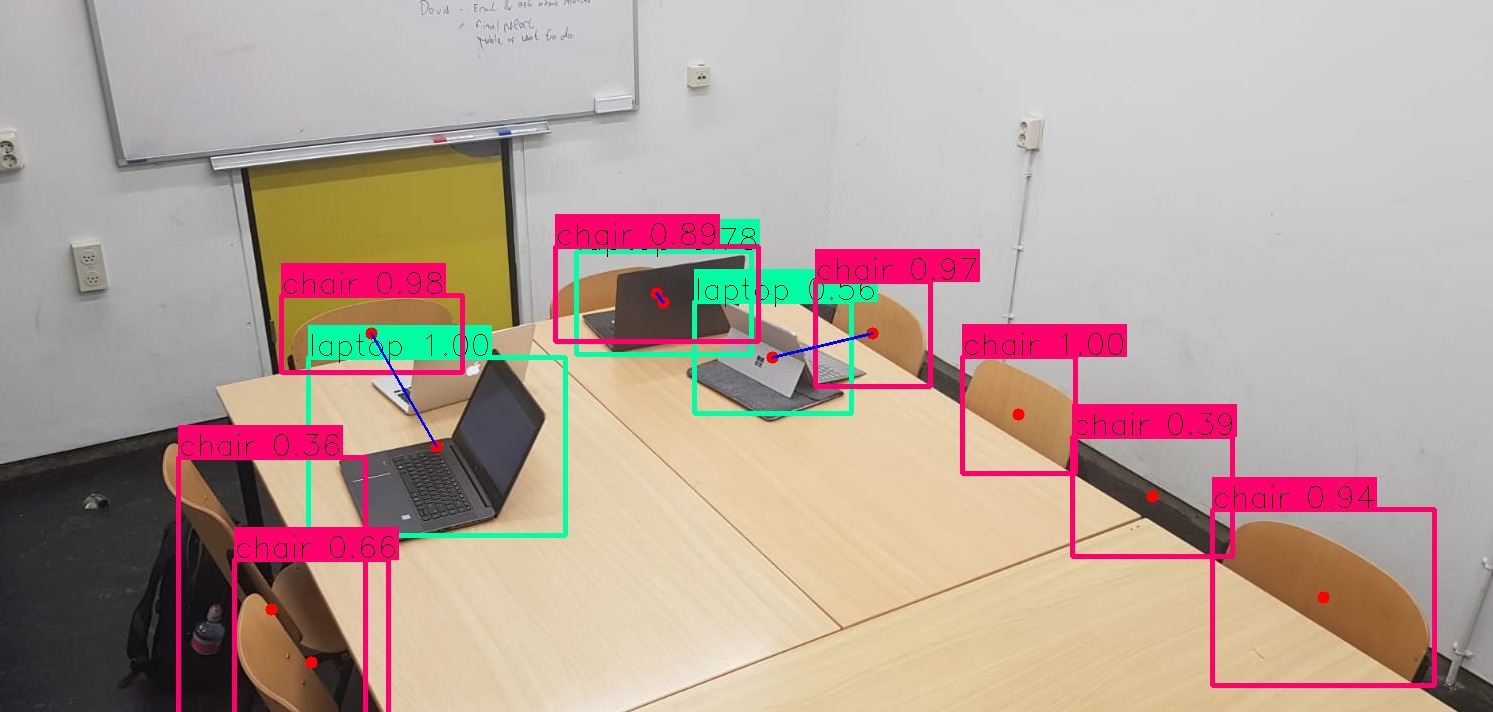

To illustrate this we can take one example image we made using our prototype:

In this image the neural network has detected several chairs and knows their position on the camera feed. For this example we will only take the upper table and the chairs around it. To make the example clearer a simple drawing is made with only the outline of the table and the positions of the chairs.

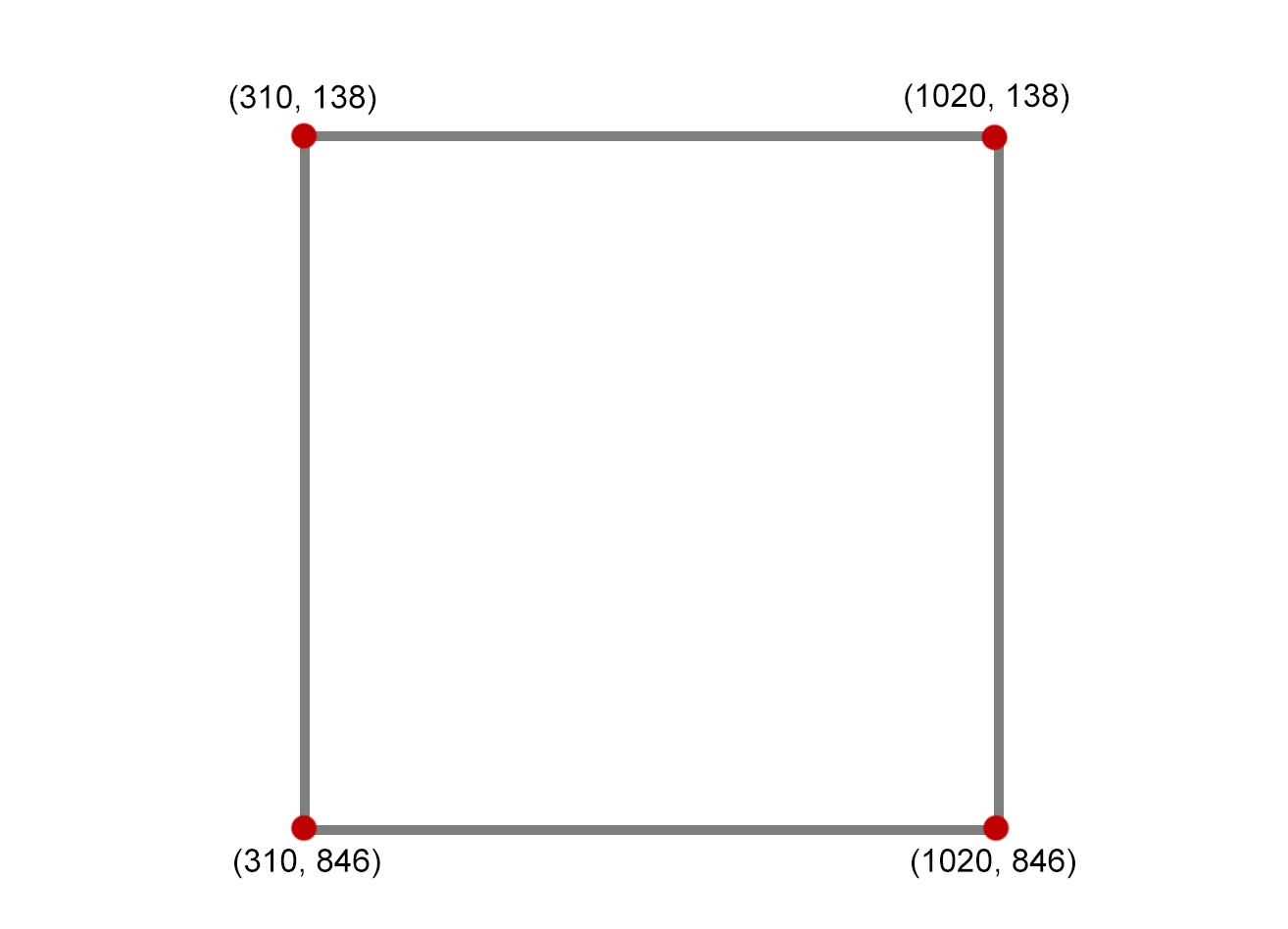

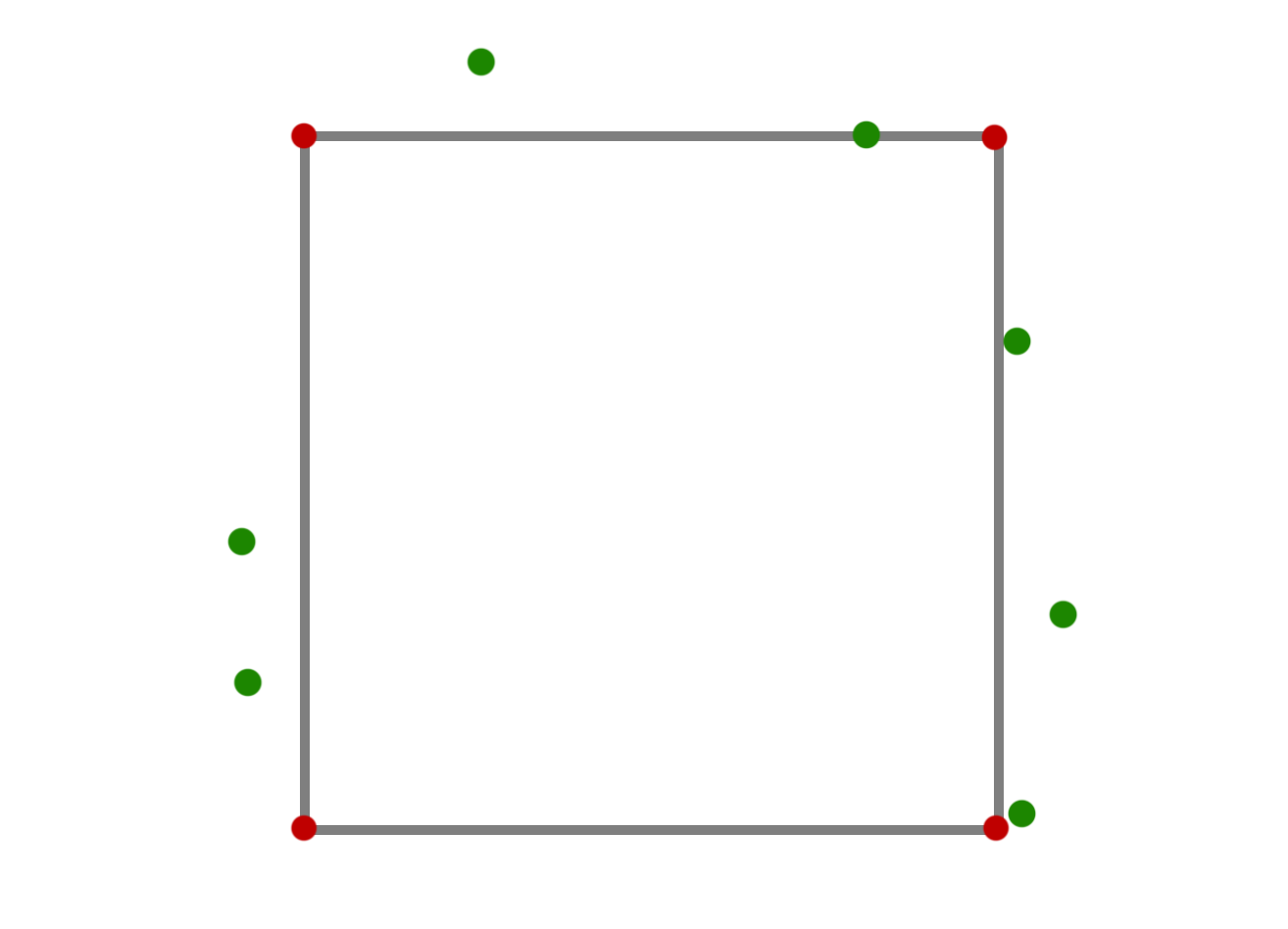

Next we make a drawing of the same table but in the view we want it in the app:

To find the matrix of this transformation we need four point correspondences, in this case the coordinates of the four corners of the table. These coordinates from both views define the transformation matrix. For this example we used the OpenCV module in Python to get this matrix and transform the camera perspective image to the top view perspective image:

Here the table has been converted to the right view and the chairs have been moved with it. We also added a clearer view of what the output will look like. These converted coordinates can then be used for the positions of the dots in the app.

Obviously this transformation matrix is not the same for every room or area. Therefore this matrix needs to be calculated and hard-coded in the app or neural network for every room or area. This takes very little time, however, as you only need to input the corner coordinates once during setup and, if we assume the cameras never move, this won't need to be changed afterwards. Perspective transformation is therefore a solid solution to this problem.
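To make the computation concrete, the sketch below derives a 3x3 perspective matrix from four corner correspondences and applies it to a chair position. It is a dependency-free illustration of the same kind of calculation that OpenCV's `cv2.getPerspectiveTransform` and `cv2.perspectiveTransform` perform, not the code used in the prototype. The corner and chair coordinates are invented, and the helper names are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix = List[List[float]]


def solve_linear(a: Matrix, b: List[float]) -> List[float]:
    """Solve a * x = b with Gauss-Jordan elimination and partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate corner points")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def perspective_matrix(src: Sequence[Point], dst: Sequence[Point]) -> Matrix:
    """3x3 matrix mapping the four src corners onto the four dst corners."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        # Two linear equations per corner, with the bottom-right entry fixed to 1.
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.append(v)
    h = solve_linear(rows, rhs)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def to_top_view(matrix: Matrix, point: Point) -> Point:
    """Apply the matrix to one camera coordinate and divide by the scale term."""
    x, y = point
    w = matrix[2][0] * x + matrix[2][1] * y + matrix[2][2]
    u = (matrix[0][0] * x + matrix[0][1] * y + matrix[0][2]) / w
    v = (matrix[1][0] * x + matrix[1][1] * y + matrix[1][2]) / w
    return (u, v)


# Invented example: table corners in the camera image and in the app's top view.
camera_corners = [(120.0, 80.0), (520.0, 90.0), (600.0, 300.0), (60.0, 310.0)]
top_corners = [(0.0, 0.0), (400.0, 0.0), (400.0, 200.0), (0.0, 200.0)]

H = perspective_matrix(camera_corners, top_corners)
chair_in_camera = (300.0, 60.0)  # hypothetical chair center from the detector
print(to_top_view(H, chair_in_camera))
```

Since the matrix depends only on the camera position, it could be computed once per room during setup and stored, as described above.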

Requirements of the user interface

| Id | Description | MoSCoW |

|---|---|---|

| A1 | The android app can retrieve the number of empty chairs for each area from the database | Must have |

| A2 | The android app has a refresh button that can retrieve the newest information on command | Must have |

| A3 | The android app can display this information | Must have |

| A4 | The information is displayed in 4 levels; a map of the different buildings, a list of the floors of that building, a map of the areas of that floor and a map of the chairs in that area | Should have |

| A5 | The android app is accessible to everyone with a TU/e login and not to anyone else | Could have |

| A6 | There is also an app available for iOS | Could have |

Neural Network

Using the Yolo Algorithm

The first thing we thought of when the discussion turned to detecting objects was a Neural Network. To detect an object you need a powerful type of algorithm that can classify that object and attribute a label to it, which in our case is the name "chair" together with its state, "empty" or "occupied". The chosen programming language was Python, due to its vast Neural Network community and its clear, easy-to-read syntax.

Building a Neural Network can be done in 2 ways:

- Either create your own model, with your own functions and the mathematical formulas behind it

- Or use an existing model and adapt it so that it becomes useful for your desired task.

Due to lack of time to create our own Neural Network model, we chose the second option. In recent years, deep learning techniques have achieved state-of-the-art results for object detection, both on standard benchmark datasets and in computer vision competitions. Notable is the “You Only Look Once”, or YOLO, family of Convolutional Neural Networks (CNNs), which achieve near state-of-the-art results with a single end-to-end model that can perform object detection in real time. The algorithm applies a single neural network to the full image, divides the image into regions and predicts bounding boxes and probabilities for each region. These bounding boxes are weighted by the predicted probabilities.

YOLO is popular because it achieves high accuracy while also being able to run in real-time. The algorithm “only looks once” at the image in the sense that it requires only one forward propagation pass through the neural network to make predictions. After non-max suppression (which makes sure the object detection algorithm only detects each object once), it then outputs recognized objects together with the bounding boxes.
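To illustrate the non-max suppression step mentioned above, here is a minimal greedy sketch. It assumes boxes are given as corner coordinates with one confidence score each, and the threshold of 0.5 is only an example value. It is not the implementation inside YOLO or in our prototype.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes: Sequence[Box], scores: Sequence[float],
                        iou_threshold: float = 0.5) -> List[int]:
    """Return the indices of the boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with a better one.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in kept):
            kept.append(i)
    return kept


# Two overlapping detections of the same chair and one separate detection.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60)]
scores = [0.9, 0.8, 0.7]
print(non_max_suppression(boxes, scores))
```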

With YOLO, a single CNN simultaneously predicts multiple bounding boxes and class probabilities for those boxes. YOLO trains on full images and directly optimizes detection performance.

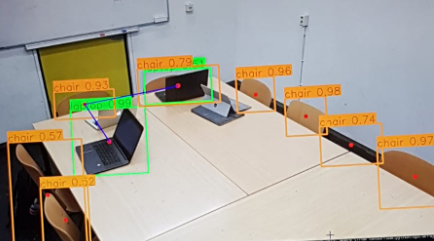

In this project we are using Yolo in order to identify the empty chairs of a classroom. The classification is done as follows:

The chairs can be:

- Empty

- Occupied

These two tags are attributed to the chairs after the following criteria:

- If the chair is clear (it does not have anything in front of it or on it), then the chair is empty

- If the chair is empty and someone puts an object in front of it, or sits on the chair, then the chair becomes occupied

There is also a small problem: the algorithm does not know at first glance that where there is a person there is also a chair. It has to see the chair first in order to tag it.

Requirements of the model

The model has to identify multiple things accurately. We have therefore defined “accurately” in the requirements below as at least x percent for our project, where proof of concept is the most important goal, and y percent for our theoretical application, since a higher accuracy will be necessary for it to function properly. We used the MoSCoW method to assess the importance of every requirement. Here, "must have" means that the requirement is mandatory: without it, the product does not work. "Should have" means that the product will run more efficiently or more smoothly with that requirement, but still works without it. "Could have" means that the requirement would be included if constraints such as time or computational power allowed it; it makes the product more refined, but the product works fine without it. "Won't have" means that the requirement is deliberately left out of the product.

| Id | Description | MoSCoW |

|---|---|---|

| M1 | The model accurately identifies empty chairs, taken chairs, people and laptops | Must have |

| M2 | The model accurately identifies miscellaneous objects that indicate a taken chair, such as jackets, books or notebooks | Could have |

| M3 | The model accurately flags chairs with and without a person on it respectively as taken and available (if there are no other objects) | Must have |

| M4 | The model accurately flags chairs with and without laptops in front of it respectively as taken and available | Must have |

| M5 | The model runs in a loop and processes new images from the camera feed | Must have |

| M6 | The model processes at least 1 frame every 10 seconds from the camera feed | Should have |

| M7 | The model saves the number of empty chairs found for each area in a database in the cloud. | Must have |

| M8 | The model updates the database with this information at least once every 10 seconds | Should have |

| M9 | The model keeps track of objects that have been recognized as people. | Won't have |

| M10 | The model recognizes people's faces. | Won't have |

Detection Steps

To reach our final goal I decided to take the following approach in the code. First I had to be sure that the algorithm was able to detect 3 classes in our frames: persons, chairs and laptops, because I concluded that in an optimal environment these 3 classes can give a good model of occupied and unoccupied chairs. This will be discussed in the "Future Improvements" subsection.

In order to detect these specific classes, the Neural Network was trained in the cloud to get the optimal values of its weights and global parameters. After this, our own model was created, using the Yolo method to find and display the results.

Regarding the Yolo method, it has the following traits:

- It takes only one pass over a frame to give a result. This implies that:

- It trades quality of the result for performance or vice versa. This means that if you change the input image of the algorithm from 416x416 pixels to 608x608, the result will be better (a bigger image means more pixels from which the features are extracted) but the performance will be lower.

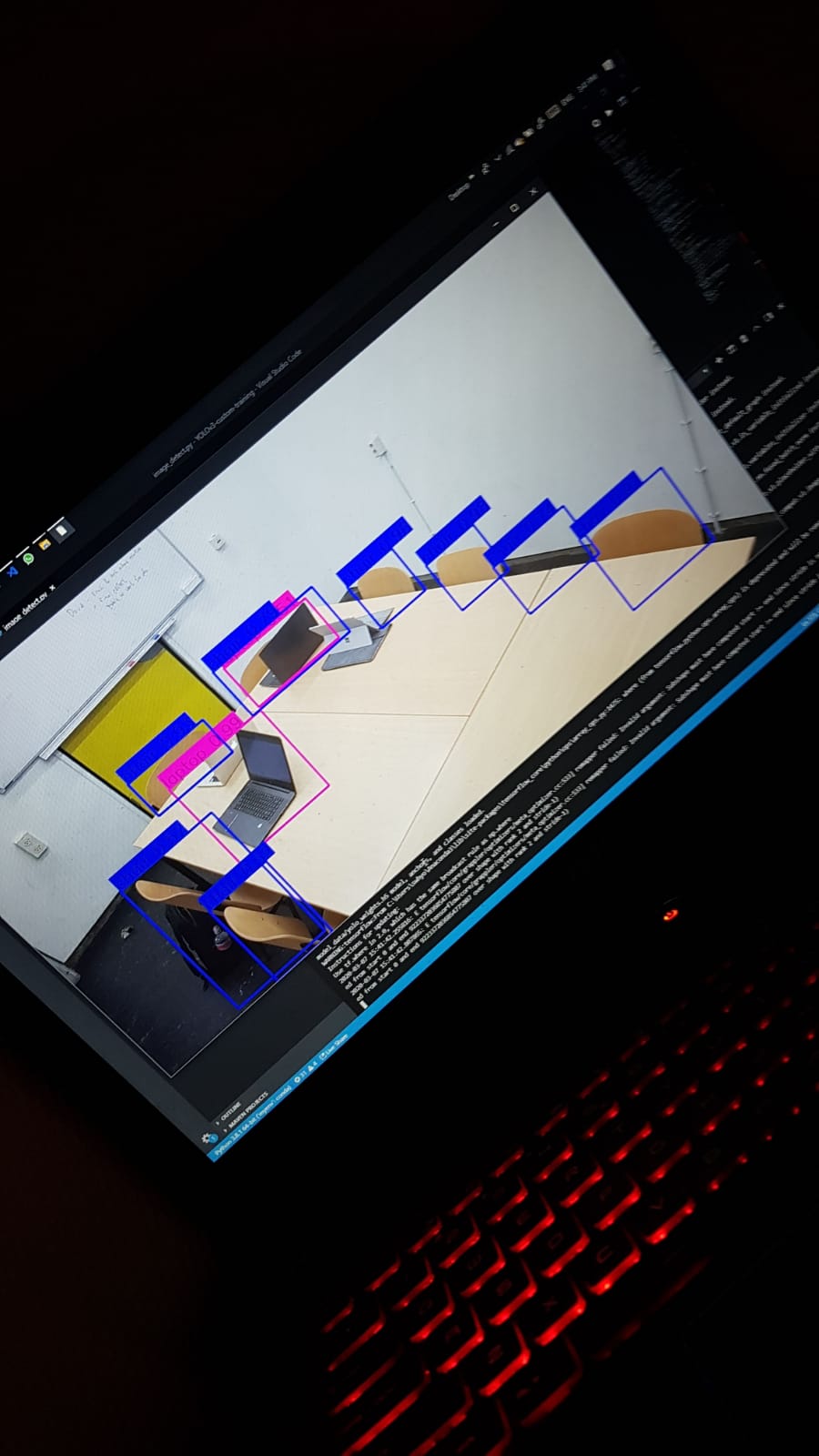

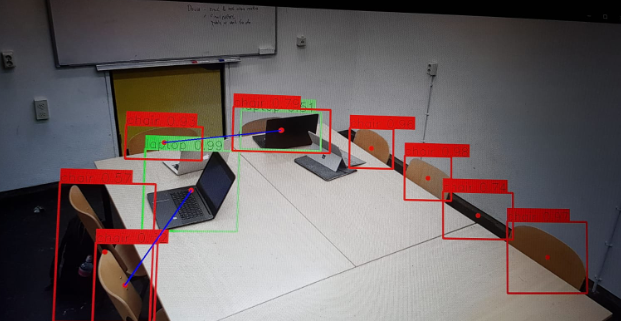

This can be seen here in the first photo. Input image resolution was 416x416

After the Neural Network was trained and the classes were identified, a start was made on the classification problem, which asks: "How does the algorithm know whether a chair is occupied or not?" For this, the approach was as follows:

- When computing the classes, we also save the number of items found of that specific class

- When computing the coordinates of the framing boxes also compute and store coordinates of the centers of the objects

- When the framing boxes are drawn, also draw the centers of the objects

This approach let me see each framing box more clearly and prepared the data for classification.
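The bookkeeping described above can be sketched as follows. The detection format, a label with a box given as top-left corner plus width and height, is an assumption made for this example, as are the function names and the sample values.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple

Box = Tuple[int, int, int, int]   # assumed format: (x, y, width, height)
Detection = Tuple[str, Box]       # (class label, framing box)


def box_center(box: Box) -> Tuple[int, int]:
    """Center of a framing box, rounded down to whole pixels."""
    x, y, w, h = box
    return (x + w // 2, y + h // 2)


def summarize(detections: Sequence[Detection]):
    """Count the detections per class and collect the box centers per class."""
    counts = Counter(label for label, _ in detections)
    centers: Dict[str, List[Tuple[int, int]]] = {}
    for label, box in detections:
        centers.setdefault(label, []).append(box_center(box))
    return counts, centers


# Invented detections for one frame.
detections = [
    ("chair", (10, 20, 40, 60)),
    ("laptop", (100, 100, 30, 20)),
    ("chair", (200, 50, 40, 60)),
]
counts, centers = summarize(detections)
print(counts["chair"], centers["chair"])
```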

The classification was done as follows:

- For each of the laptops detected:

  - For each of the chairs detected, compute the score between the laptop and that chair

  - Store only the coordinates of the center of the chair that has the minimum score

  - If the selected chair is already taken by a laptop, take the chair that has the next minimum score

  - Draw the line between the laptop and the chair

The first iteration of the score formula was as follows:

- Score = ceil((chair[0] - laptop[0])^2 + (chair[1] - laptop[1])^2)

Where chair and laptop are each a list of 2 elements:

- First element [0]: the x coordinate of the chair's/laptop's center

- Second element [1]: the y coordinate of the chair's/laptop's center
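The matching steps and the first score formula above can be put together in a short sketch. The function names and the example coordinates are illustrative, and drawing the line between laptop and chair is left out.

```python
import math
from typing import Dict, Sequence, Tuple

Point = Tuple[float, float]  # (x, y) of an object's center


def score(chair: Point, laptop: Point) -> int:
    """First iteration of the score: squared distance between the two centers."""
    return math.ceil((chair[0] - laptop[0]) ** 2 + (chair[1] - laptop[1]) ** 2)


def assign_laptops_to_chairs(laptops: Sequence[Point],
                             chairs: Sequence[Point]) -> Dict[int, int]:
    """Map chair index -> laptop index, giving each laptop its best free chair."""
    taken: Dict[int, int] = {}
    for laptop_index, laptop in enumerate(laptops):
        # Chairs sorted from lowest to highest score for this laptop.
        ranked = sorted(range(len(chairs)), key=lambda c: score(chairs[c], laptop))
        for chair_index in ranked:
            if chair_index not in taken:  # otherwise try the next minimum score
                taken[chair_index] = laptop_index
                break
    return taken


# Invented centers: three chairs in a row and two laptops near the first chair.
chairs = [(0, 0), (10, 0), (20, 0)]
laptops = [(1, 1), (2, 1)]
print(assign_laptops_to_chairs(laptops, chairs))
```

Because laptops are handled one after another, the result depends on their order. That is a property of this greedy approach, not necessarily of a better matching method.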

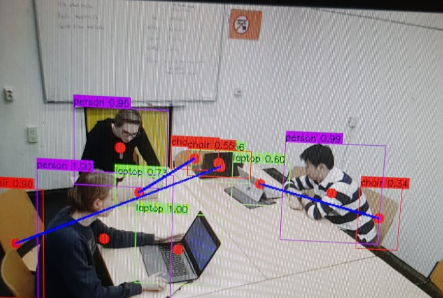

The progress can be seen in the following 3 images, where the last one has its input image resolution increased to 608x608 pixels so that more information is processed.

Regarding the third variable in our process, the class of persons increases the difficulty of the object identification and classification.

This can be seen in the following image, which has an input image resolution of 608x608.

The problem appears when a person is sitting on a chair, but this will be discussed in the subsections Future Improvements and Problems encountered, solutions and workarounds.

Data Classification

The classes that our model can detect are as follows:

person, bench, backpack, umbrella, handbag, suitcase, bottle, cup, fork, knife, spoon, banana, apple, sandwich, orange, chair, dining table, laptop, mouse, keyboard, cell phone, book, clock, scissors

The main 3 classes on which we are focusing, in order to prove our concept, are: chair, person and laptop. We chose these 3 classes because, in an optimal environment for proving our concept, a student comes to the classroom with only his/her laptop and either places the laptop on the table to claim the seat for later, or sits on the chair and places the laptop on the table.

Furthermore, a student can simply sit on a chair, thus occupying it. These are the 2 optimal cases on which we focus for our proof of concept.

Problems encountered, solutions and workarounds

Third class problem

During the development of the algorithm, the following problems were encountered. The first problem was the presence of people:

- A person can sit on a chair

- A person can stand near the table

The problem was that if a person sits on a chair, that chair is obstructed by the person and is not recognized by the algorithm. The solution to this problem was as follows.

First, our algorithm makes 2 assumptions:

- The initial number of chairs in a classroom is known

- The number of chairs is fixed, so no one can bring in a chair from another classroom

Secondly, whenever there are persons in the room we estimate the total number of empty chairs as follows:

- Let n be the total number of chairs present in the classroom from the beginning.

- Then, after the algorithm completes the classification of the objects, the total number of occupied chairs is estimated as follows:

average_h_l = math.ceil((len(center_of_persons) + len(center_of_laptops))/2)

estimated_value_of_occupied_chairs = math.ceil((len(chairsVisited) + average_h_l) / 2)

After finding the estimated_value_of_occupied_chairs we can compute the estimated number of empty chairs

emptyChairs = total_number_of_chairs - estimated_value_of_occupied_chairs

These values estimate the real world behavior of a normal meeting group. Most of the assumptions will be checked via the questionnaire.
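The estimate above can be wrapped in a small function to try it with concrete numbers. The function name, its parameters and the example values are illustrative. The lower bound of zero is an added guard that is not part of the original formula.

```python
import math


def estimate_empty_chairs(total_number_of_chairs: int, n_persons: int,
                          n_laptops: int, n_chairs_visited: int) -> int:
    """Estimate the number of empty chairs from the detection counts."""
    # Average of detected persons and laptops, rounded up.
    average_h_l = math.ceil((n_persons + n_laptops) / 2)
    # Combine it with the number of chairs already linked to a laptop.
    estimated_occupied = math.ceil((n_chairs_visited + average_h_l) / 2)
    # Added guard (an assumption): never report a negative number of chairs.
    return max(total_number_of_chairs - estimated_occupied, 0)


# Example: 8 chairs, 3 persons and 2 laptops detected, 2 chairs linked to laptops.
print(estimate_empty_chairs(8, n_persons=3, n_laptops=2, n_chairs_visited=2))
```

With these numbers, average_h_l is ceil(2.5) = 3 and the estimated number of occupied chairs is ceil(5 / 2) = 3, so 5 of the 8 chairs are reported as empty.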

Playback problem

Another problem that we have encountered is the playback rate of the algorithm running on a video.

The video can be either a live feed or a video file (.mp4, .wmv, etc.).

The solution for this has been discussed and stated as follows:

- In order to check the state of the room (meaning how everyone is seated and where the objects are placed), we check only every 50th frame of a video. From a video of 30 seconds recorded at 60 fps (thus 1800 frames) we therefore check only 36 evenly distributed frames, which gains some performance.
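The frame selection can be sketched with a small generator. Here `frames` stands for any iterable of frames, for example the ones read from a video file, and the function name is hypothetical.

```python
from typing import Iterable, Iterator, Tuple, TypeVar

Frame = TypeVar("Frame")


def every_nth(frames: Iterable[Frame], step: int = 50) -> Iterator[Tuple[int, Frame]]:
    """Yield (index, frame) for every step-th frame, starting with the first."""
    for index, frame in enumerate(frames):
        if index % step == 0:
            yield index, frame


# A 30 second video at 60 fps has 1800 frames; integers stand in for the frames.
selected = list(every_nth(range(1800), step=50))
print(len(selected))  # 36 frames are passed on to the detector
```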

Future Improvements

Future improvements can be made in 4 areas:

- Finding an accurate way to solve the Third class problem

- Extending the classification problem to more than 3 classes, such as books, pens, pencils, notebooks, etc.

- Finding a good way to save the real position of the chairs

- Moving from notebook to cloud computing

Regarding the Third class problem one improvement can be as follows:

- Starting the algorithm before the students are present in the room and storing the positions of the chairs

- Running a separate Neural Network that focuses only on persons and can identify whether a person is standing or sitting

- If a person is sitting at the position of a chair, the results of these 2 Neural Networks together lead to the conclusion that the person sits on that chair.

Regarding the Classification problem, an improvement can be made as follows:

- Taking into account more than 3 classes.

- Adding more classes can increase the accuracy of the model

- This technique increases the complexity of the model, because the number of cases increases exponentially.

- A better algorithm must be developed to link multiple objects to each other so that together they result in an occupied chair

Regarding the third improvement a solution can be:

- Using a TOF sensor, in order to measure the real world distances between the chairs and between the chairs and the tables and so on.

- With this you could make an accurate map in the app and show exactly which chair is placed where and which chair is occupied.

Regarding the 4th and final improvement, the solution is as stated. Moving our algorithm from our stationary PC (in our case my laptop) to the cloud will give us much more computational power, taking us from 0.5 fps playback on a random .mp4 file to more than real-time detection.

Rooms

State of the art at the TU/e

The current system used at the Eindhoven University of Technology is based on Planon. This system manages the reservations for the rooms and for the workplaces in the Auditorium and some other places around the campus. Those workplaces can be recognised by the stickers and QR codes on the tables.

The TU/e has also expanded upon it by using BookMySpace in combination with Planon: reservations made through BookMySpace are fed into Planon. As a result, BookMySpace is still limited by the possibilities of Planon. For example, Planon cannot handle a reservation that is shared between multiple people. Currently BookMySpace sends invites via Outlook to create the meeting, but the actual reservation can only be made by one person.

The system has also been expanded upon by pilots of BookMySpace. In the Metaforum building, sensors were installed in some rooms to detect no-shows, people who do not show up in time, and early leavers, people who leave earlier than expected. After 15 minutes without movement the sensor would consider the room empty and cancel the current reservation. However, some problems were noticed with this system. While it could detect no-shows correctly, it had problems with early leavers. Since detection was based on movement sensors, it could happen that a person taking a test, or sitting too still for 15 minutes, was not detected, and the room was marked as left early. This could release the room for other students while someone was taking a test. As a result, the observer or examinee had to move or wave a hand every 15 minutes, which was quite inconvenient.

According to sources at BookMySpace, there will probably be another pilot in the near future in the Atlas building on the TU/e campus. This system will probably use some form of detection built into the lamps. One difference from the pilot in Metaforum is that this detection will be based on heat. The detection system can then detect whether people are in the room, and even how many. This will probably reduce the chance of wrongly detecting early leavers compared with the old system, which could lead to a wide implementation of a no-show and early-leaver system in Atlas, since those lamps are at this moment only compatible with the Atlas building.

Regulations

As opposed to the system with the chairs, it is actually possible to pursue people who do not follow the rules and regulations with the rooms system. People need to reserve rooms through their account, making it possible to make them responsible for what happens to the room as long as they have reserved it (name, picture of account holder, student number, etc. are all known once a person has reserved a room through his/her account). It should also be noted that everyone who is invited to the room and has accepted the invitation, is also responsible for what happens and will receive the same punishments as the person who made the reservation.

The rules and regulations for reserving a room are as follows:

- A person can only make use of a room when he or she has reserved it

- Rooms can be reserved up to 7 days in advance

- Reservations may not conflict with other requests for using the room

- A person can only make one reservation each day

- A person can only reserve a room for a maximum of 4 hours

- If no one is in the room after 15 minutes during the reservation, the reservation gets cancelled

- No one is allowed to stay in a room outside of the available reservation times

- No vandalism or anything else of the sort

- The room should be left the same way it was found. If chairs and/or tables have been moved in the room, they need to be put back in their original positions before leaving the room. Other items that did not originally belong in the room should be taken away or thrown in the bin.

- Every day, all items left behind found in the rooms will be stored safely and can be picked up at the help desk of the same building as the room where the object was left behind.
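Several of the rules above can be checked automatically when a reservation is requested. The sketch below covers the 7-day limit, the 4-hour limit, the one-reservation-per-day rule and conflicts with other reservations. The function name and data layout are hypothetical, and "7 days in advance" is interpreted here as at most 7 times 24 hours before the start, which is an assumption.

```python
from datetime import datetime, timedelta
from typing import List, Sequence, Tuple

Slot = Tuple[datetime, datetime]  # (start, end) of a reservation

MAX_ADVANCE = timedelta(days=7)
MAX_DURATION = timedelta(hours=4)


def reservation_problems(now: datetime, start: datetime, end: datetime,
                         own: Sequence[Slot], room: Sequence[Slot]) -> List[str]:
    """Return the violated rules; an empty list means the request is allowed.

    own  = reservations the same person already has,
    room = reservations already made for the requested room.
    """
    problems = []
    if start - now > MAX_ADVANCE:
        problems.append("more than 7 days in advance")
    if end - start > MAX_DURATION:
        problems.append("longer than 4 hours")
    if any(s.date() == start.date() for s, _ in own):
        problems.append("already one reservation on that day")
    if any(start < e and s < end for s, e in room):
        problems.append("conflicts with another reservation")
    return problems


now = datetime(2020, 3, 2, 9, 0)
start = datetime(2020, 3, 4, 10, 0)
print(reservation_problems(now, start, start + timedelta(hours=3), own=[], room=[]))
```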

In order to prevent people from abusing the room reservation system, those who violate the rules will receive a punishment, which is based on the severity of the violation and the number of previous violations. All previous violations will be reset every quartile. However, if someone violates the rules in the last week of the quartile, the punishment will stay for a minimum of two weeks, starting in the first week of the next quartile. This is to prevent people from trying to minimise the punishment by violating the rules at the last moment. If a violation happens in the last quartile (which is the 4th quartile) and the person who violated the rule graduates, all punishments will be removed.

1. If someone has violated the rules and regulations for the first time during the current quartile, and the severity is not extraordinarily high, he/she will be punished for one week. During this week, the following rules apply:

- He/she can only reserve up to 3 days in advance instead of 7

- He/she can reserve a room for a maximum of 2.5 hours

- He/she can only reserve a room once every 2 days

- (Tentative: he/she has lower priority during room reservations. This is just an idea; it is not yet clear how to apply it.)

2. If someone has violated the rules and regulations for a second time during the current quartile, and the severity is not extraordinarily high, the same punishment will last for 3 weeks.

3. If someone has violated the rules and regulations for a third time during the current quartile, and the severity is not extraordinarily high, the following rules and conditions will apply until the end of the quartile:

- He/she can only reserve up to 1 day in advance instead of 7

- He/she can reserve a room for a maximum of 1.5 hours

- He/she can only reserve a room once every 3 days

- (Tentative: he/she has even lower priority during room reservations. This is just an idea; it is not yet clear how to apply it.)

In case of a severe violation (there is no clear "threshold" of what counts as a severe violation, therefore it might be a bit subjective), different rules and conditions may apply.
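The punishment tiers for non-severe violations can be summarised as a lookup from the number of violations in the current quartile to the restrictions that apply. This is a sketch under our own assumptions, not an implemented part of the system: the names `Limits` and `limits_for` are hypothetical, a fourth or later violation is treated the same as the third, and severe violations, the tentative priority rule and the last-week carry-over are left out.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class Limits:  # hypothetical container for the restrictions of one tier
    days_ahead: int                # how far in advance a room can be reserved
    max_duration: timedelta        # longest allowed reservation
    min_days_between: int          # a room can be reserved once every N days
    penalty_weeks: Optional[int]   # 0 = no penalty, None = until end of quartile


def limits_for(violations: int) -> Limits:
    """Restrictions for a user with this many (non-severe) violations this quartile."""
    if violations <= 0:
        return Limits(7, timedelta(hours=4), 1, 0)
    if violations == 1:
        return Limits(3, timedelta(hours=2.5), 2, 1)
    if violations == 2:
        return Limits(3, timedelta(hours=2.5), 2, 3)
    # Assumption: anything beyond the third violation stays in the third tier.
    return Limits(1, timedelta(hours=1.5), 3, None)


for count in range(4):
    print(count, limits_for(count))
```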

Test Plan

In order to confirm whether we have met our algorithm and user interface requirements, the following test plan has been made.

Our original plan was to use one of the OGO rooms in Gemini North to test our application. In these rooms one of our laptops can easily be mounted in one of the corners of the room, close to the ceiling. This laptop would have its camera pointed towards the table below and would run the algorithm on the images from the camera feed. However, the location needs to be changed due to the restrictions surrounding the coronavirus.

We plan to make 10 short videos of 30 seconds with all five of us using the room in a variety of scenarios:

- Sitting in chair with laptop

- Sitting in chair without laptop

- Sitting in chair with notebooks

- Different sitting positions (straight/sloppy)

- Walking out of the room

- Walking into the room

- Walking/standing still around the chairs

- Repositioning the chairs

Since our accuracy requirement was 80%, eight out of ten chairs need to be correctly identified as occupied or empty. The frames that are analyzed during the testing videos are also saved so that we can later check how accurate the algorithm was. Additionally, we require that the number of chairs available in the room is calculated correctly 80% of the time. Since there are multiple chairs, this requirement will most probably be harder to meet. It is nevertheless necessary, as the number of free chairs in an area is what is ultimately communicated to the user in our application, and it should therefore be the main measure of accuracy. The app will be used to check the calculated number of free chairs, thereby also validating the correct functioning of the database where the information is stored and of the app that displays the information and can be used to refresh it.
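To make the difference between the two accuracy measures concrete, the sketch below computes both from labelled frames. It is an illustration only: the function names and the data layout (one list of booleans per frame, `True` meaning occupied) are assumptions, and the numbers are made up rather than measured.

```python
def chair_accuracy(predicted, actual):
    """Fraction of individual chairs classified correctly over all frames."""
    total = correct = 0
    for pred_frame, true_frame in zip(predicted, actual):
        for pred_chair, true_chair in zip(pred_frame, true_frame):
            total += 1
            correct += pred_chair == true_chair
    return correct / total


def free_count_accuracy(predicted, actual):
    """Fraction of frames in which the number of free chairs is exactly right."""
    frames = list(zip(predicted, actual))
    hits = sum(1 for pred_frame, true_frame in frames
               if pred_frame.count(False) == true_frame.count(False))
    return hits / len(frames)


# Two made-up frames of a room with five chairs (True = occupied).
actual = [[True, True, False, False, False],
          [True, True, True, False, False]]
predicted = [[True, False, False, False, False],   # one chair wrong
             [True, True, True, False, False]]     # all chairs right

print(chair_accuracy(predicted, actual))       # 0.9
print(free_count_accuracy(predicted, actual))  # 0.5
```

In this example a single misclassified chair still leaves the per-chair accuracy at 90%, but it makes the free-chair count wrong for the whole frame, which is why the second requirement is the stricter one.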

Privacy Policy

Draft version

The Seat Finder app is built as a free app. This app is provided by us at no cost and is intended for use as is.

This section is used to inform visitors regarding our policies with the collection, use, and disclosure of personal information if anyone decides to use the Seat Finder app.

If you choose to use the Seat Finder app, then you agree to the collection and use of information in relation to this policy. The personal information that we collect is used for providing and improving the service. We will not use or share your information with anyone except as described in this Privacy Policy.

1. Information Collection and Use

For a better experience, while using our Service, we may require you to provide us with certain personally identifiable information. The information that we request will be retained on your device and is not collected by us in any way. The app may use third party services that may collect information used to identify you.

2. Log Data*

We want to inform you that whenever you use the Seat Finder app, in case of an error in the app, we collect data and information on your phone called Log Data. This Log Data may include information such as your device Internet Protocol (“IP”) address, device name, operating system version, the configuration of the app when utilizing our Service, the time and date of your use of the Seat Finder app, and other statistics.

3. Cookies

We use cookies and similar tracking technologies to track the activity on our Service and we hold certain information. Cookies are files with a small amount of data which may include an anonymous unique identifier. Cookies are sent to your browser from a website and stored on your device. Other tracking technologies are also used such as beacons, tags and scripts to collect and track information and to improve and analyse our service. You can instruct your browser to refuse all cookies or to indicate when a cookie is being sent. However, if you do not accept cookies, you may not be able to use some portions of our Service.

Examples of Cookies we use:

- Session Cookies: We use Session Cookies to operate our Service.

- Preference Cookies: We use Preference Cookies to remember your preferences and various settings.

- Security Cookies: We use Security Cookies for security purposes.

4. Service Providers

We may employ third-party companies and individuals due to the following reasons:

- To facilitate the Seat Finder app;

- To provide the Seat Finder app on our behalf;

- To perform the Seat Finder app-related services;

- To assist us in analyzing how the Seat Finder app is used; or

- With your consent in any other cases.

We want to inform users of this Service that these third parties have access to your Personal Information. The reason is to perform the tasks assigned to them on our behalf. However, they are obligated not to disclose or use the information for any other purpose.

5. Security

We value your trust in providing us your Personal Information, thus we are striving to use commercially acceptable means of protecting it. But remember that no method of transmission over the internet, or method of electronic storage is 100% secure and reliable, and we cannot guarantee its absolute security.

6. Children’s Privacy*

These services do not address anyone under the age of 13. We do not knowingly collect personally identifiable information from children under 13. In case we discover that a child under 13 has provided us with personal information, we will immediately delete this from our servers. If you are a parent or guardian and you are aware that your child has provided us with personal information, please contact us so that we will be able to take the necessary actions.

7. Changes to This Privacy Policy

We may update our Privacy Policy from time to time. Thus, you are advised to review this page periodically for any changes. We will notify you of any changes by posting the new Privacy Policy on this page. These changes are effective immediately after they are posted on this page.

8. In the Event of Sale or Bankruptcy

The ownership of the Seat Finder app may change at some point in the future. Should that occur, we want the app to be able to maintain a relationship with you. In the event of an (actual or potential) sale, merger, public offering, bankruptcy, acquisition of any part of our business, or other change in control of the Seat Finder app, your user information may be shared with the person or business that owns or controls (or potentially will own or control) the app, provided that we inform the buyer (or prospective buyer) that it must use your user information only for the purposes disclosed in this Privacy Policy.

9. Your right to information, correction, deletion and disclosure of your data

In accordance with legal provisions, you have the right to correct, delete, and block your personal data. Additionally, you have the right to obtain the following information from us at any time:

- Which of your personal data we store

- What our purpose for storing this data is

- What the origin and the recipients, or categories of recipients, of this data are

If you wish to perform any of the aforementioned actions with your data, please contact us with the contact information provided in section 10.

10. Contact Us

If you have any questions, requests and/or suggestions about our Privacy Policy, do not hesitate to contact us at [Group 12 contact information].

* This might not be necessary for us, but it appears in other privacy policies, so it might be useful

Logbook

Week 1

| Name | Activities (hours) | Total time spent |

|---|---|---|

| Boris | Brainstorming (1), Research Papers (3), Write Planning (2.5), Write State-of-the-art (4), Write Subject and Problem Statement (0.5), Other Wiki Writing (2) | 13 |

| Winston | Brainstorming (1), Look for relevant papers (2), Write subject, objectives, and approach (3), Clean up state of the art (1), Clean up the references (1), Wiki writing (2), Rules and Regulations (2) | 12 |

| Jelle | Brainstorming (1), Writing users, society and enterprise (4), exploring relevant papers (2), adding to approach (2), Other wiki writing (1) | 10 |

| Roy | Brainstorming (1), Exploring relevant papers (2), Write Approach (2) | 5 |

| Horea | Brainstorming (1), Look for papers (2), Object detection and neural network (4), State-of-the-art (2), Wiki writing (2) | 11 |

Week 2

| Name | Activities (hours) | Total time spent |

|---|---|---|

| Boris | Meeting (0.5), Update planning (0.5), Clean up state-of-the-art (0.5), Group Discussion (2), App Creation (8) | 11.5 |

| Horea | Meeting (0.5), Group Discussion (2), Neural Network Creation (6), Learning to create the Neural Network (4) | 12.5 |

| Jelle | Meeting (0.5), User Requirements and questionnaire creation (4), Group Discussion (2) | 6.5 |

| Roy | Meeting (0.5), Keep in Contact with Peter Engels (0.5), Group Discussion (2), Design interface (7) | 10 |

| Winston | Group Discussion (2), write rules and regulations, mostly the chairs part (4) | 6 |

Week 3

| Name | Activities (hours) | Total time spent |

|---|---|---|

| Boris | Meeting (0.5), Group Discussion (0.5), Contact Engels (0.5), App Creation (10) | 11.5 |

| Horea | Meeting (0.5), Group Discussion (0.5), Implementing Yolo object detection on live feed (5) | 6.5 |

| Jelle | Meeting (0.5), Group Discussion (0.5), Survey development (5), Contact Engels (0.5) | 6.5 |

| Roy | Meeting (0.5), Group Discussion (0.5), Design interface (5), Creating Mockups (2) | 8 |

| Winston | Meeting (0.5), Group Discussion (0.5), Contact Engels (0.5), adjust rules and regulations, mostly rooms (5) | 6.5 |

| Name | Activities (hours) | Total time spent |

|---|---|---|

| Boris | App Creation (3), Update Wiki (1), Discussing mockups with Roy (0.5) | 4.5 |

| Horea | Creating the database (3), Linking the database to the Neural Network (3), Finalizing the Framework (6), Update Wiki (1) | 13 |

| Jelle | Asking student outside our group for survey improvement (1), Survey development and layout (3), Researching survey analysis (3), Update wiki (1) | 8 |

| Roy | Searching for floor plans (0.5), Discussing mockups with Boris (0.5), Making disregarded mockups(4), Writing Wiki (1) | 6 |

| Winston | Adjusted Rules and Regulations (rooms), mostly punishment part (3.5), Asked people from other universities what rules and conditions apply (room reservations) and some online searching (3), Research on privacy policy (2) | 8.5 |

Week 4

| Name | Activities (hours) | Total time spent |

|---|---|---|

| Boris | Meeting (0.5), Meeting BookMySpace (1), Group Meeting (1.5), App Development (11), Update Wiki (1) | 15 |

| Horea | Group Meeting (1.5), Updating the Model (5), Creating the Occupied Chair Classification (5), Linking the Final Model to the database (1), Polishing the code (6) | 18.5 |

| Jelle | User interface and model requirements for testing (6), Survey development (1), Survey testing (2), Survey analysis research (1.5), Group Meeting (1.5) | 12 |

| Roy | Meeting (0.5), Meeting BookMySpace (1), Writing SoA Planon (2), Adding Design Interface (0.5), Making Floor Plan MF 0 (9), Writing Wiki (0.5) | 12.5 |

| Winston | Meeting (0.5), Meeting BookMySpace and finishing the notes (2), Group Meeting (1.5), reviewed the wiki (1.5), continuing Privacy Policy (6) | 11.5 |

Notes Meeting BookMySpace

- Pilots have been done in Metaforum (for about half a year) to prevent empty rooms from staying reserved. They wanted to test whether 15 minutes of no movement in a room was a good way to decide if a room should be set to available or not (they used movement detectors). It worked reasonably well, but there were some drawbacks (examples: some people don’t move a lot while studying, and if someone leaves to grab something to eat or drink, the room would also be set to available after 15 minutes).

- At the moment they are also looking at a new sensor from Philips. This sensor can measure body heat and some other things (to prevent various problems of the motion sensor). The problem with this sensor is that it costs a lot of money, which is also why they couldn’t implement sensors for each reservable study place/workplace.

- Maybe it is possible to combine Planon with our project (but this is more for later if possible, probably not since we only have about 4 weeks left).

- The Planon app can already find available workspaces to study or work at, but you need to reserve them (ours can also be used if you just want to find an available spot somewhere in, for example, the library). They are still trying to improve the app (as far as we recall, it was not specified how).

- Apparently, there is not a shortage of study places/workplaces in general, but a shortage of study places/workplaces in Metaforum specifically. This is likely because a lot of people just prefer to study in that specific building (this could be because the building is relatively quiet and the workplaces are comfortable to study at).
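The 15-minute rule from the pilot in the first note comes down to a simple timeout on the last moment at which presence was detected. The sketch below is only an illustration with hypothetical names (`VACANCY_TIMEOUT`, `room_should_be_released`). It deliberately takes a "last presence" timestamp rather than a "last movement" one, since the drawback mentioned above is that people who sit still are missed by movement detectors.

```python
from datetime import datetime, timedelta

# Timeout used in the pilot described above.
VACANCY_TIMEOUT = timedelta(minutes=15)


def room_should_be_released(last_presence: datetime, now: datetime,
                            timeout: timedelta = VACANCY_TIMEOUT) -> bool:
    """True once nobody has been detected in the room for the full timeout."""
    return now - last_presence >= timeout


last_seen = datetime(2020, 3, 3, 10, 0)
print(room_should_be_released(last_seen, datetime(2020, 3, 3, 10, 10)))  # False
print(room_should_be_released(last_seen, datetime(2020, 3, 3, 10, 15)))  # True
```

Someone stepping out briefly for food or a drink would still trigger the release after 15 minutes, so the timeout itself remains a design choice that this sketch does not resolve.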