PRE2018 3 Group2

0LAUK0 - 2018/2019 - Q3 - group 2

Group members

| Name | Student ID |
|---|---|
| Koen Botermans | 0904507 |
| Ruben Hendrix | 1236095 |
| Jakob Limpens | 1019496 |
| Iza Linders | 0945517 |
| Noor van Opstal | 0956340 |

Introduction

Planning

The tasks and milestones of this group (both those already achieved and those still on our to-do lists) are elaborated on in the Gantt chart, which can be accessed via the following link: Gantt chart

Problem Statement

We live in an ageing society: the number of elderly people is ever increasing [1]. As a consequence, pressure on caregivers is rising. Research into alleviating this pressure by means of a robotic platform is growing. Emotional comfort tends to be vital in medical environments, and such a robotic platform needs to conform to this aspect of elderly care [2]. For our project, we want to zoom in on a particular part of many modern robots, the part whose primary task is the portrayal of emotional information: screen-based faces. During the eight weeks of this project, we will set up a research plan, carry out tests with the target users (seniors) and attempt to design and validate a screen-based robotic face. Since our group is a multidisciplinary team, this problem will be approached from both a technical perspective and a user-centered perspective.

Initial ideas

Objectives

To get a better overview of what needs to be done to complete the project, we set ourselves a couple of objectives:

System objectives:

- The system should be able to express emotions in a manner that is natural, non-ambiguous and clear.

- The system should behave like a human, without entering the uncanny valley.

Research objectives:

- We want to understand how elderly people react to different facial emotions/designs expressed by a robot.

- We want to optimize the way that robots show emotions to elderly users.

State of the Art

Different themes related to our project can be identified. They are listed here, together with the relevant papers that provide the stated information.

Robot emotion expression

Different papers provide an insight into the existing technology in the field of recognizing human emotion in HCI research.[3] Article 1 discusses the existing literature on social robots in the care of elderly people suffering from dementia. It also discusses the contributions and cautions tied to the use of these assistive robotic agents, and provides literature related to the care of elderly people with dementia.[4] Article 2 focuses on the more general user, and contains a study of the effect of a robot's expressions and physical appearance on the user's perception of that robot.[5] Article 3 and Article 4 describe the development, testing and evaluation of a robotic system, and a framework for emotion interaction for service robots, respectively. Article 3 focuses on the development of EDDIE, a flexible low-cost emotion display with 23 degrees of freedom for expressing realistic emotions through a humanoid face, while Article 4 presents a framework for the recognition, analysis and generation of emotion based on touch, voice and dialogue.[6][7] The results of a different project, KOBIAN, are described in Article 5. Here, different ways of expressing emotions through the entire body of the robot are tested and evaluated.[8]

Emotion recognition

In Article 6, titled "Affective computing for HCI", multiple broad areas related to HCI are addressed, discussing recent and ongoing work at the MIT Media Lab. Affective computing aims to reduce user frustration during interaction. It enables machines to recognize meaningful patterns of such expression, and explains different types of communication (parallel vs. non-parallel).[9] Article 7 elaborates extensively on emotion, mood and the effects of affect on performance and memory, along with its causes and measurement. It also covers the interaction between affective design and HCI.[10] A study on emotion-specific autonomic nervous system activity in elderly people was done in Article 8. The elderly participants followed muscle-by-muscle instructions for constructing facial prototypes of emotional expressions and relived past emotional experiences.[11] Reporting on two studies, Article 9 shows that elderly people have difficulty differentiating anger and sadness when shown faces. The two studies provide methods for measuring the ability of humans to recognize emotions.[12] Lastly, a definition of several emotions and their relation to physical responses (breathing, facial expressions, body language, etc.) is needed. Article 10 describes how a computer can track and analyse a face to compute the emotion the subject is showing. This is useful as an insight into how input data can be gathered, analysed and used for the purpose of mimicking or mirroring by a robot.[13]

Assistive technology for elderly

This category focusses mostly on robotic care for elderly people living at home. Articles such as article 15 [14] and article 11 [15] explore the effect of robots on the social, psychological and physiological levels, providing a basis for knowing what types of results certain actions yield.

Article 14 [16] explores six ethical issues related to deploying robots for elderly care. Article 13 [17] provides more knowledge by comparing children, elderly people and robots and their roles in relationships with humans.

Article 12 [18] looks at the available technologies for tracking elderly at home, keeping them safe and alerting others when needed.

Dementia and Alzheimer's

Closely related to the elderly are dementia and Alzheimer's disease. These diseases affect the brain of a patient, making them forgetful or unable to complete tasks in their day-to-day life without help. Article 16 [19] elaborates on this, explains the impact of these diseases and proposes a possible framework to counter them. Article 17 [20] discusses whether an entertainment robot is useful in the care of elderly people with dementia.

Measuring tools

Here, papers are collected that provide aid for designing a robotic system. Article 18 [21] discusses some of the difficulties in measuring affect in Human-Computer Interaction (HCI). For designing a system that is capable of expressing emotion, article 19 [22] and article 20 [23] show recent developments in designing a ‘face’ or whole body to express emotion to a user.

Miscellaneous

In the study of emotions, models are often useful. Article 21 elaborates on social aspects of emotions, together with the implementation in human-computer interaction. A model is tested and accordingly a system is designed with the goal of providing a means of affective input (for example: emoticons). [24]

Article 22 describes research on emotional comfort in patients who found themselves in a therapeutic state. It provides an understanding of the role of personal control in recovery and of the aspects of the hospital environment that impact hospitalized patients’ feelings of personal control.[25]

A more medical approach is taken in Article 23: evidence is provided for altered functional responses in brain regions subserving emotional behaviour in elderly subjects, during the perceptual processing of angry and fearful facial expressions, compared to youngsters.[26]

Statistics about the greying of the global population provide insight into the challenges that come with it. Article 24 is a collection of evidence for the greying of the world's population, with causes and consequences. It states three challenges.[27] Article 25 indicates the importance of a healthy community to the efficiency and economic growth of that community. It points out the goals of improving the healthcare system and how these improvements can be made.[28]

Characterizing the Design Space of Rendered Robot Faces[29]

A study on the design space of robots with a digital (screen-based) face. Summary can be found here.

Project setup

Approach

Milestones

Deliverables

At the end of this project period, these are the things we want to have completed to present:

- This wiki page

- A study report

- A prototype

User Research

Introduction

Method

This section elaborates on the structure of the interviews. The interviews conducted

Interview plan (first try, before feedback)

Interview Guide

INTRODUCTION - questions intended to get people thinking about the theme.

- Could you trust a robot? What would you need in order to trust a robot?

- What do you expect from a robot?

- What positive/negative experiences have you already had with robots? What would you like to see changed about robots?

- Would you want to use a robot? Why or why not?

- What would you like the face of a robot to look like?

- What kind of material would you like a robot to be made of?

ROBOT IN CARE - QUESTIONS

- What is, for you, the most important aspect of receiving care?

- What would you think of receiving this care from robots?

- Which tasks do you think a robot can perform in the context of (home) care?

- How important is it to you that the contact with your caregiver is emotional?

- Which characteristics must a robot have in order to be, or come across as, trustworthy?

- Would you prefer a robot's emotion to be pleasant/comfortable or clear?

TESTPLAN

Test 1

- Select various shapes (squares, triangles, circles), and variations of these shapes (tall, broad, small, large, etc.)

- Show these to people and ask them to rate them on a Likert scale of emotions

- Use 6 basic emotions: happiness, anger, sadness, surprise, disgust, fear

- Sidenote: movement/dynamic shapes not included in this test, while the transitions between shapes may affect the implied emotion.

- Sidenote: no knowledge is gained about the expected, context-dependent reaction of an emotional interface (i.e. nuance in mirroring emotion; laughing when expected to be angry; etc.)

- Go through all questions with the elderly participants; at the end of the questionnaire, go through the questions again to gain more insight.

- Which of the shapes would you most like to see in a face?

- Which of the shapes would you most like to see as eyes for a robot?

- Which of the shapes would you most like to see as a nose for a robot?

- Which of the shapes would you most like to see as a mouth for a robot?

The goal of this test is to find out which shapes are most suitable for use as a means of communicating emotions.

Test 2

- Select various robots displaying emotions from visual media.

- Rank robots on the amount of humanity displayed

- Ask questions about the various pictures:

- Which is preferred for tasks inside their personal space?

- Which robot expresses the emotion angry/happy/etc. best/most clearly?

- Which robot did you find the most cheerful (across all emotions)?

- For various tasks, which robot would you pick to do this task?

- Vacuuming

- Making coffee

- Giving medication

- Serving food/cooking

- Help with showering

- Activities: painting, etc.

Side note: we are aware that there may be some bias due to the characters of the robots.

The goal of this test is to find a guideline for which traits of a robot, and which degree of humanness, are desired for a robot that elderly people would use in their own environment.

Interview guide

Via the link below you can see the interview guide we are using, including the pictures and scales that we use for the two tests.

https://drive.google.com/open?id=1E91jO9-nM1NU0apAUHHymIyyJo98OSrf

Interview plan (second try, after feedback)

https://drive.google.com/open?id=1DhAuVQ5AHjHgi6Yt51g0OR6pSJITHXHh

Results

Thematic Analysis

The analysis and results of the thematic analysis can be found in this folder on the drive: https://drive.google.com/open?id=1KeOm4rlhoSu8TOCgDGDPvSoPuMc2ijd1 This folder includes:

- The recorded interviews

- The informed consent forms

- The transcribed and coded interviews

- The determined codes and themes

- The relational scheme of themes

- The results section

- The discussion section

Results

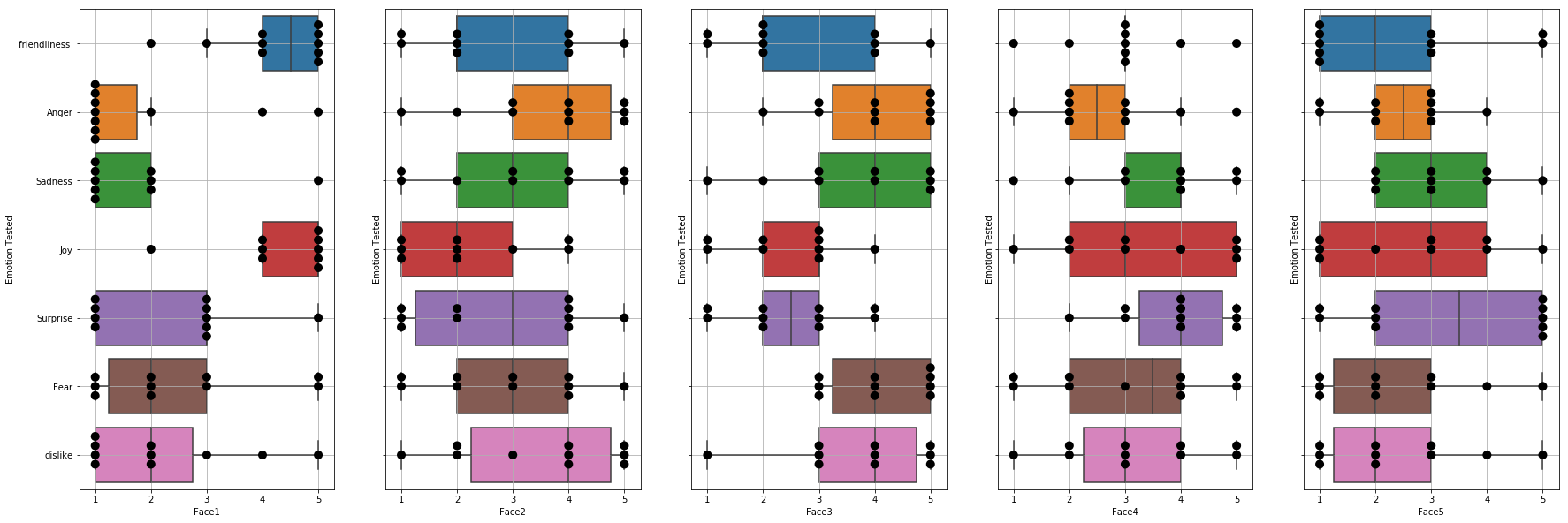

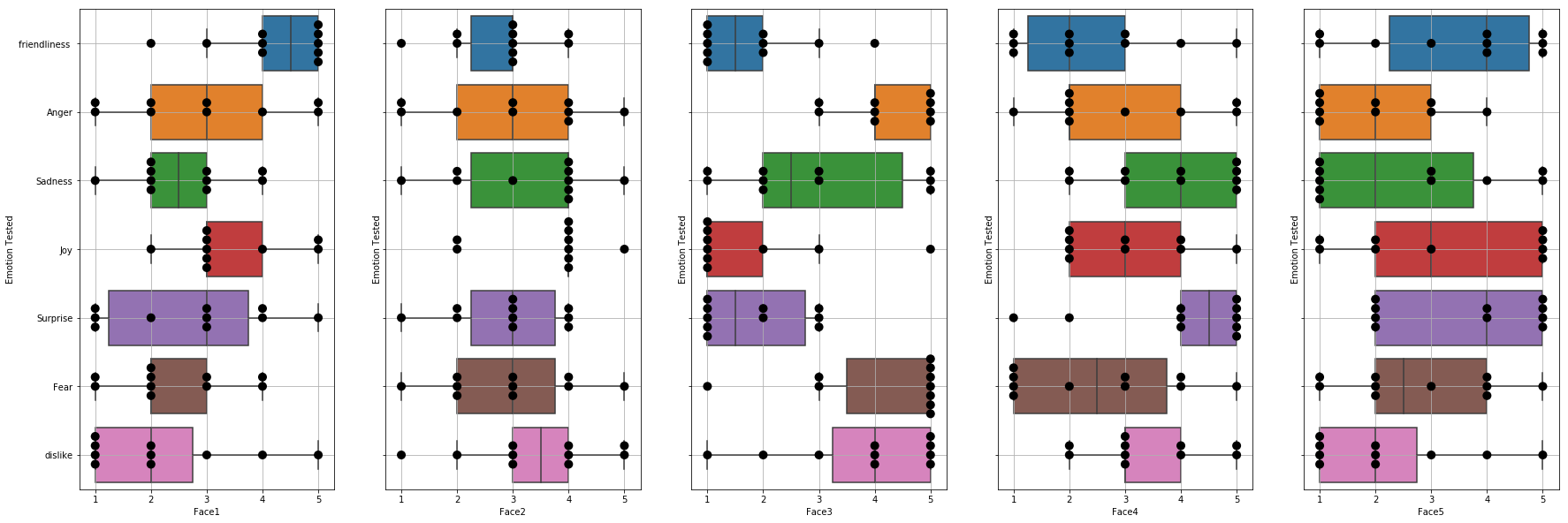

To obtain and visualize the results, both Stata and scikit-learn were used. The results for tests 1 and 2 are given in figure 1 and figure 2 respectively. In these figures a score of 5 means that the face is “the most” friendly/angry/sad of all the faces shown to the interviewee, and a score of 1 means “the least”. The Excel file with the raw results can be found via the following link: Raw results
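The scoring can be illustrated with a small sketch. It assumes that each interviewee sorted the five faces of a test from "least" to "most" for a given emotion, and that the score of a face is simply its position in that ordering. The function names and the two example orderings are hypothetical and are not taken from the raw results.

```python
def scores_from_ordering(ordering):
    """Map each face id to its score: 1 for the 'least', 5 for the 'most'.

    `ordering` lists face ids from 'least' to 'most' for one emotion.
    """
    return {face: position for position, face in enumerate(ordering, start=1)}


def mean_scores(orderings):
    """Average the score of each face over all interviewees."""
    collected = {}
    for ordering in orderings:
        for face, score in scores_from_ordering(ordering).items():
            collected.setdefault(face, []).append(score)
    return {face: sum(values) / len(values) for face, values in collected.items()}


# Hypothetical orderings of faces 1-5 by two interviewees for one emotion.
example_orderings = [
    [3, 2, 5, 4, 1],  # face 3 is "the least", face 1 is "the most"
    [3, 5, 2, 4, 1],
]
example_means = mean_scores(example_orderings)
print(example_means)
```

Averaging per face in this way gives one number per face and emotion, which is one possible basis for bar charts such as those in figures 1 and 2.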

Normality

Normality was checked in Stata for each combination of face and emotion. Normality was not rejected for any combination except:

- face 5, for which normality was rejected for the emotion of surprise;

- face 8, for which normality was rejected for the emotions joy and fear.

Since these cases were close to passing the normality check, we decided to keep face 5 and face 8 in the dataset.
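For readers who want to reproduce a comparable check outside Stata, the sketch below computes a Jarque-Bera statistic from the sample skewness and excess kurtosis. This is an assumption: the text does not state which normality test was run in Stata, and the Jarque-Bera p-value is only asymptotically valid, so with ten participants it is a rough indication at best. The score lists are hypothetical.

```python
import math


def jarque_bera(scores):
    """Return (statistic, p_value) of the Jarque-Bera normality test.

    Under normality the statistic is asymptotically chi-square distributed
    with 2 degrees of freedom, whose survival function is exp(-x / 2).
    """
    n = len(scores)
    mean = sum(scores) / n
    m2 = sum((x - mean) ** 2 for x in scores) / n
    if m2 == 0:
        raise ValueError("all scores are identical; the test is undefined")
    m3 = sum((x - mean) ** 3 for x in scores) / n
    m4 = sum((x - mean) ** 4 for x in scores) / n
    skewness = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    statistic = n / 6.0 * (skewness ** 2 + excess_kurtosis ** 2 / 4.0)
    p_value = math.exp(-statistic / 2.0)
    return statistic, p_value


# Hypothetical scores of ten participants for one face and one emotion.
symmetric_scores = [1, 2, 3, 4, 5, 1, 2, 3, 4, 5]
skewed_scores = [1, 1, 1, 1, 1, 1, 1, 1, 1, 5]

for label, scores in [("symmetric", symmetric_scores), ("skewed", skewed_scores)]:
    statistic, p_value = jarque_bera(scores)
    verdict = "rejected" if p_value < 0.05 else "not rejected"
    print(f"{label}: JB = {statistic:.2f}, p = {p_value:.4f}, normality {verdict}")
```

A p-value below the chosen threshold (0.05 here, also an assumption) corresponds to normality being rejected for that combination of face and emotion.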

Regression

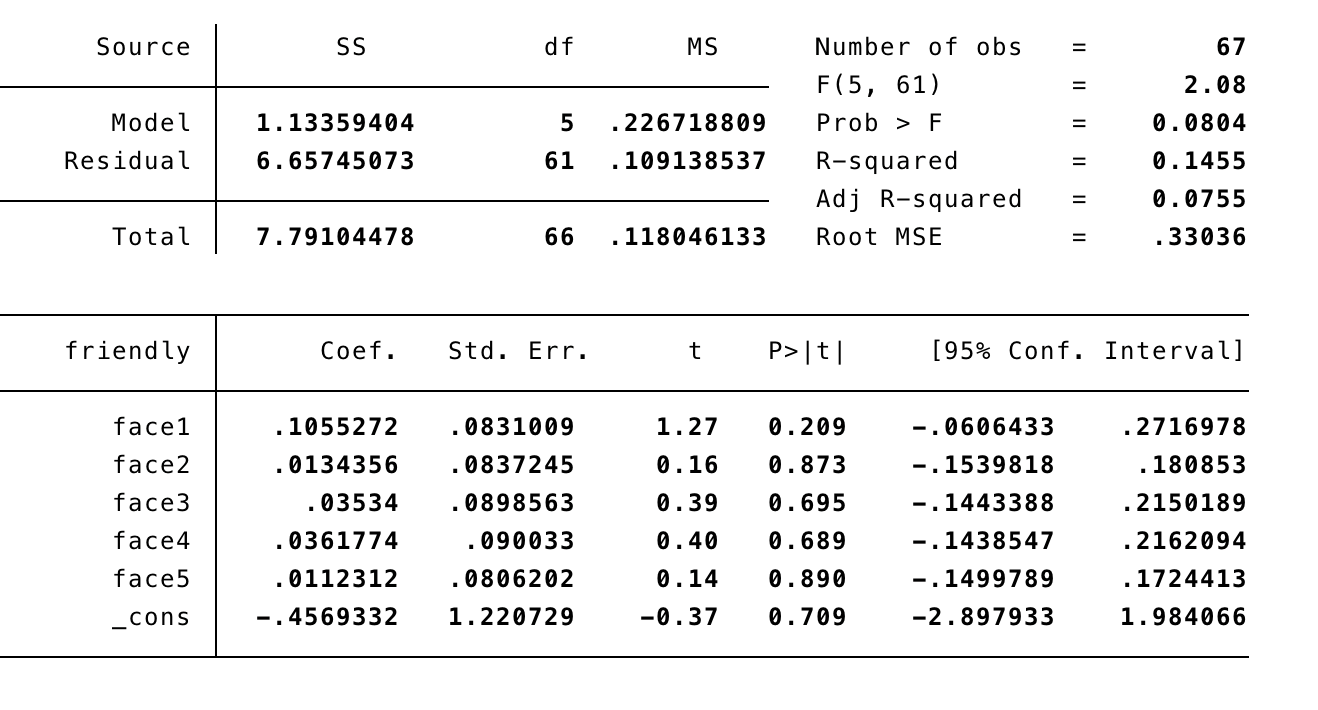

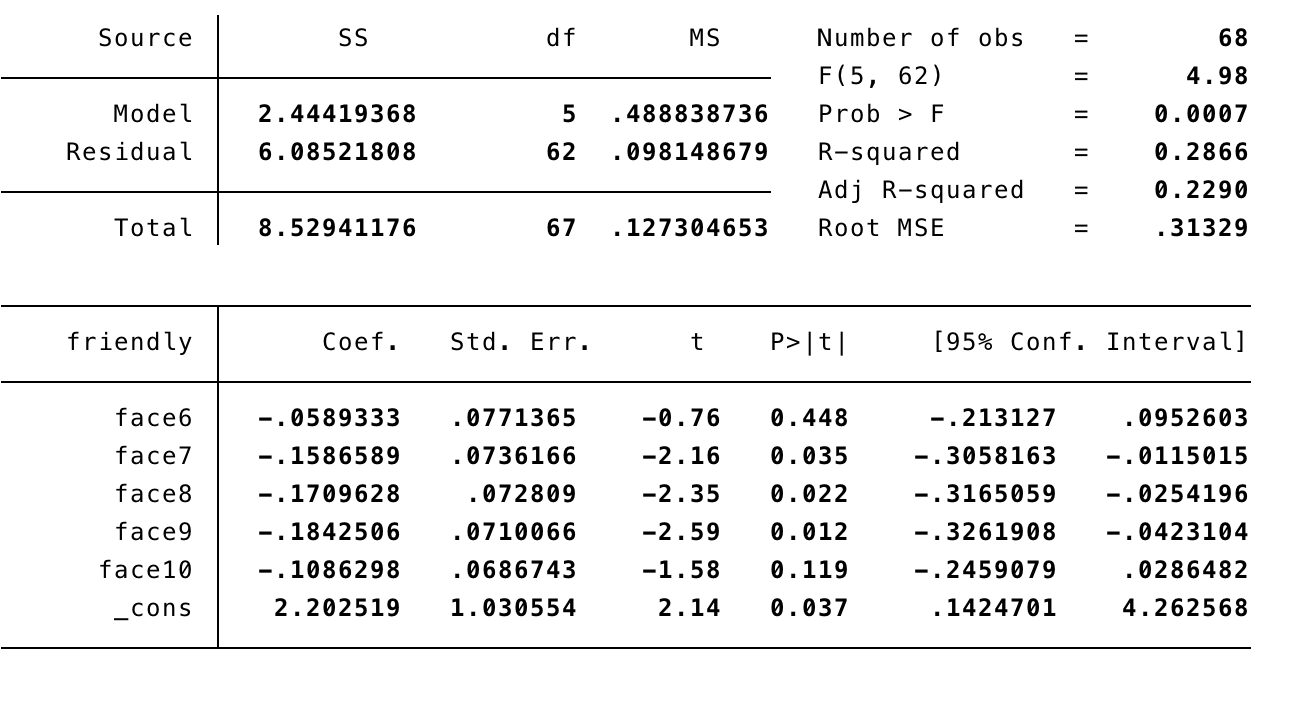

After checking normality, we ran regressions on all emotions. The emotions used are friendliness, anger, sadness, joy, surprise, dislike and fear. The faces used can be found in the method section. The results of these regressions are discussed in this section. The explanations below mention the effect of each coefficient. To elaborate: a coefficient of +3 means that, when the variable carrying that coefficient increases by one unit, the predicted value of the outcome increases by 3 units. However, the coefficients are very small in each regression, so the effects are almost negligible. First the regressions on the faces and emotions of test 1 are assessed, followed by the regressions of test 2.
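How a regression coefficient is read can be shown with a minimal one-variable least-squares fit. The sketch below is not the Stata model used for the figures: the data are hypothetical and noise-free, chosen so that the fitted slope is exactly 3, and `fit_line` is an illustrative helper name.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical data that follow y = 1 + 3x exactly.
predictor = [1, 2, 3, 4, 5]
outcome = [4, 7, 10, 13, 16]

intercept, slope = fit_line(predictor, outcome)

# Raising the predictor by one unit raises the prediction by the slope.
prediction_at_2 = intercept + slope * 2
prediction_at_3 = intercept + slope * 3
print(slope, prediction_at_3 - prediction_at_2)
```

With a coefficient close to zero, the same one-unit step would barely move the prediction, which is what "almost negligible" means in this context.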

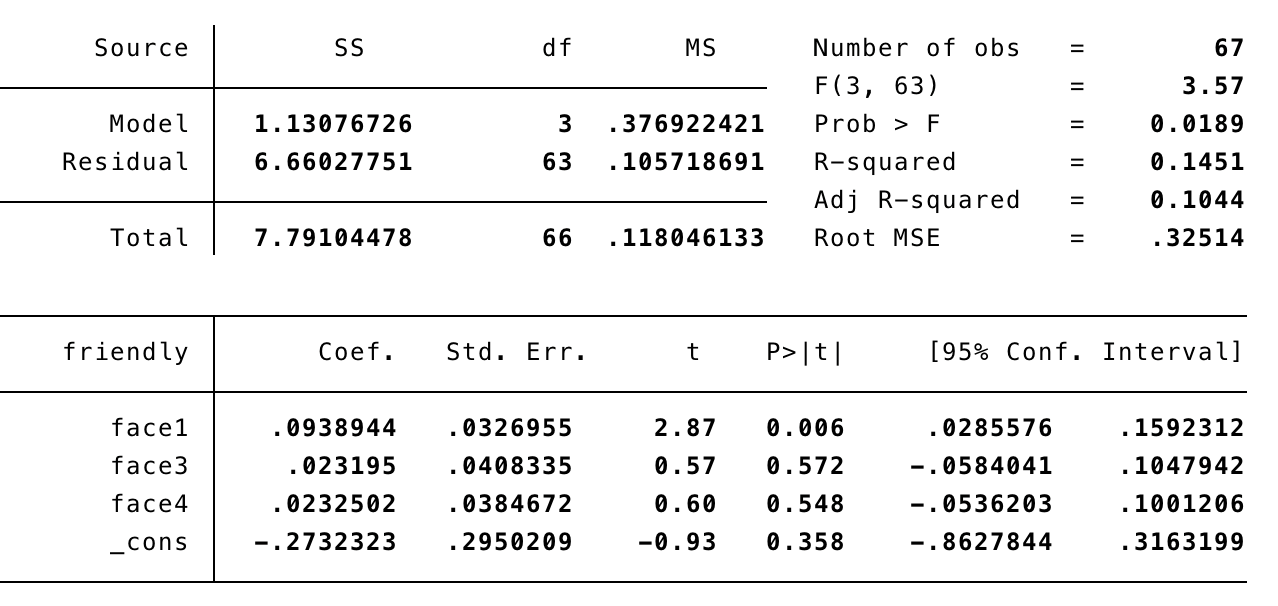

- The results of the regression on the emotion of friendliness are given in figure 3. Since none of the variables were significant in this model, we improved the model further, which can be found in figure 4.

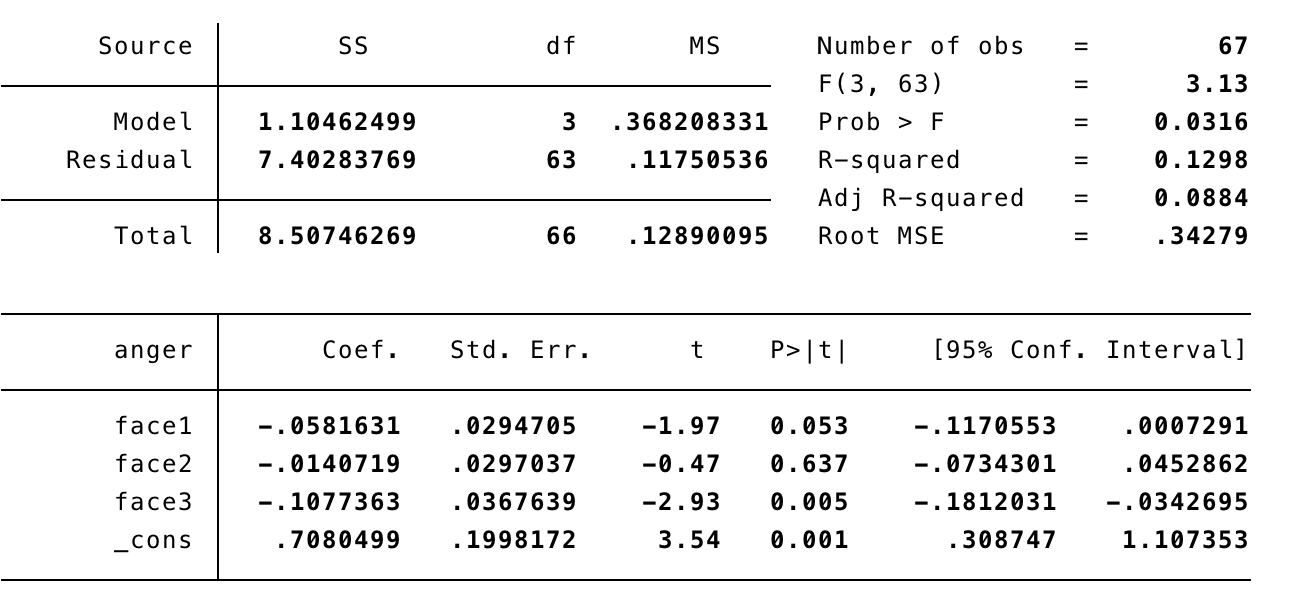

- The results of the regression on the emotion of anger are given in figure 5. Since none of the variables were significant we improved the model further, hence faces 4 and 5 are missing in figure 5.

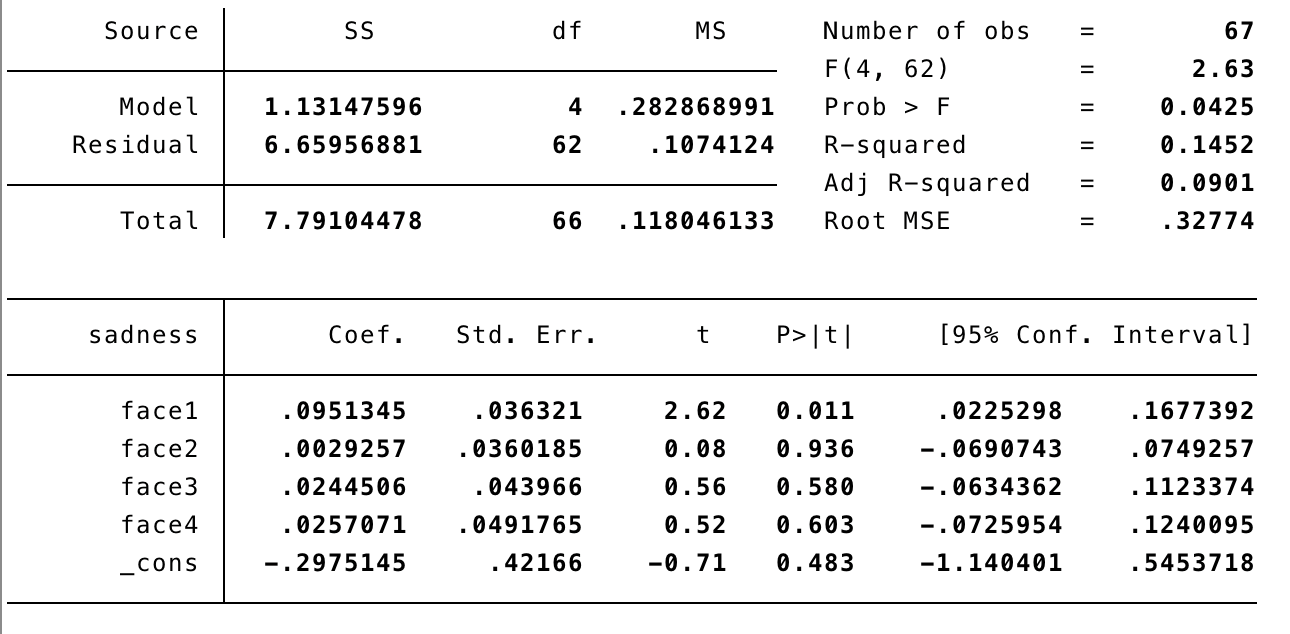

- The results of the regression on the emotion of sadness are given in figure 6. Since none of the variables were significant, just as with the regressions of the previous models, we improved the model further and the result is shown in figure 6.

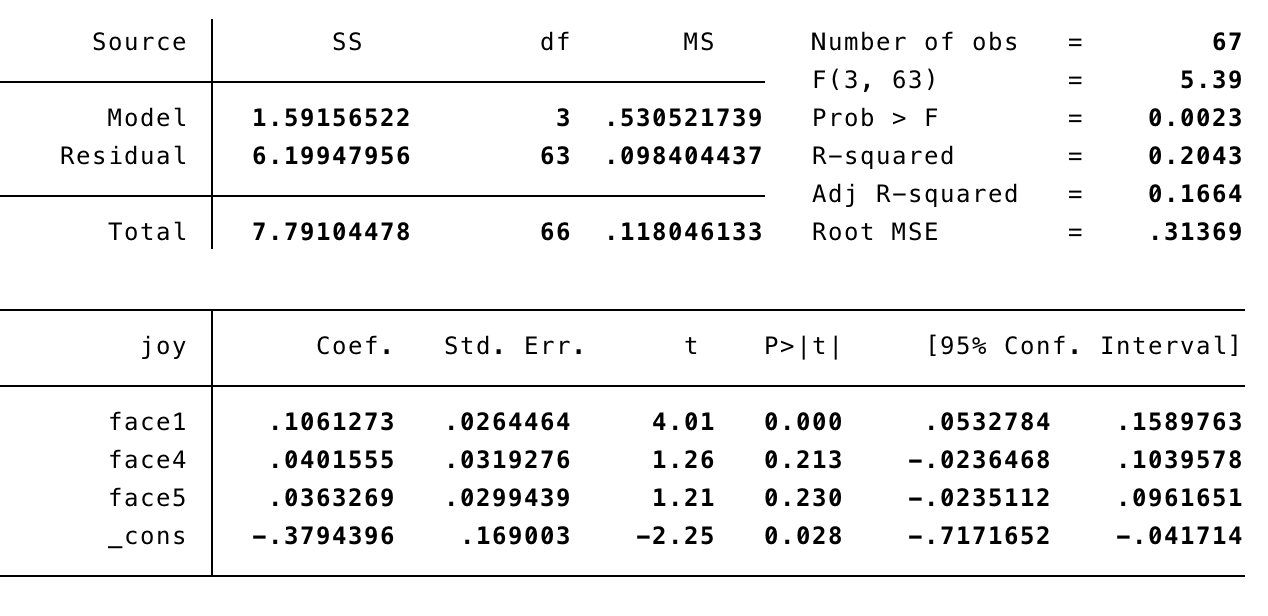

- The results of the regression on the emotion of joy are given in figure 7, again with an improved model.

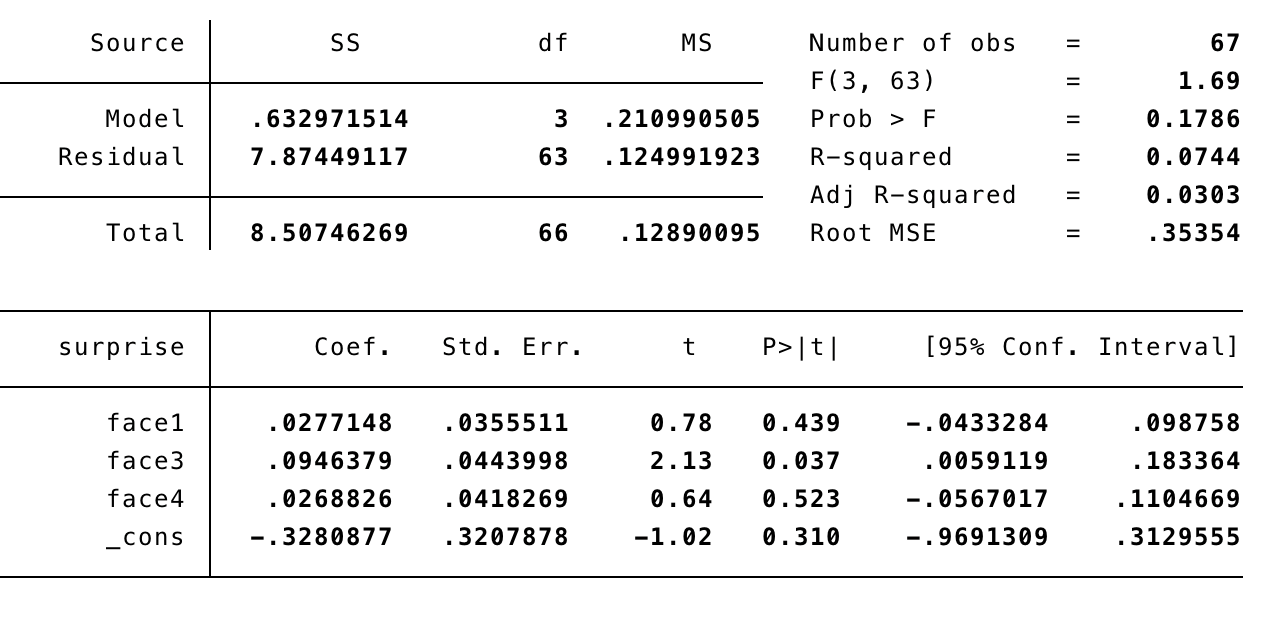

- The results of the regression on the emotion of surprise are given in figure 8, again with an improved model.

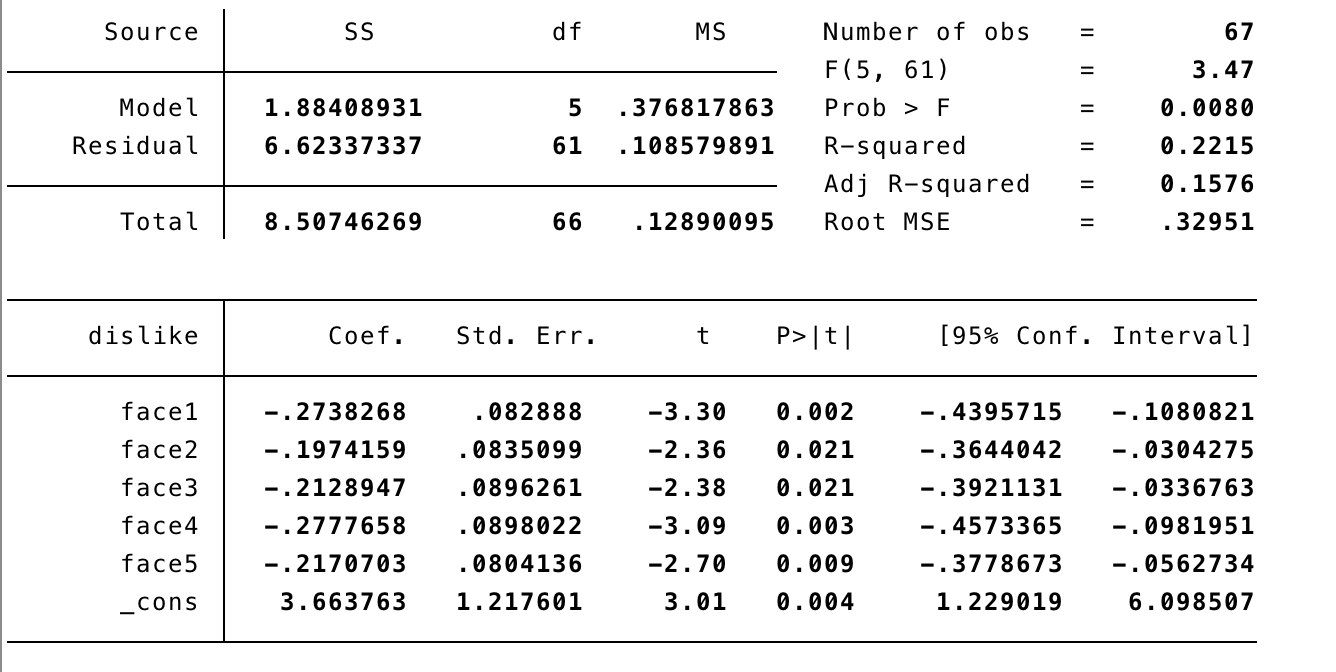

- The results of the regression on the emotion of dislike are given in figure 9. This model did not require any improvement.

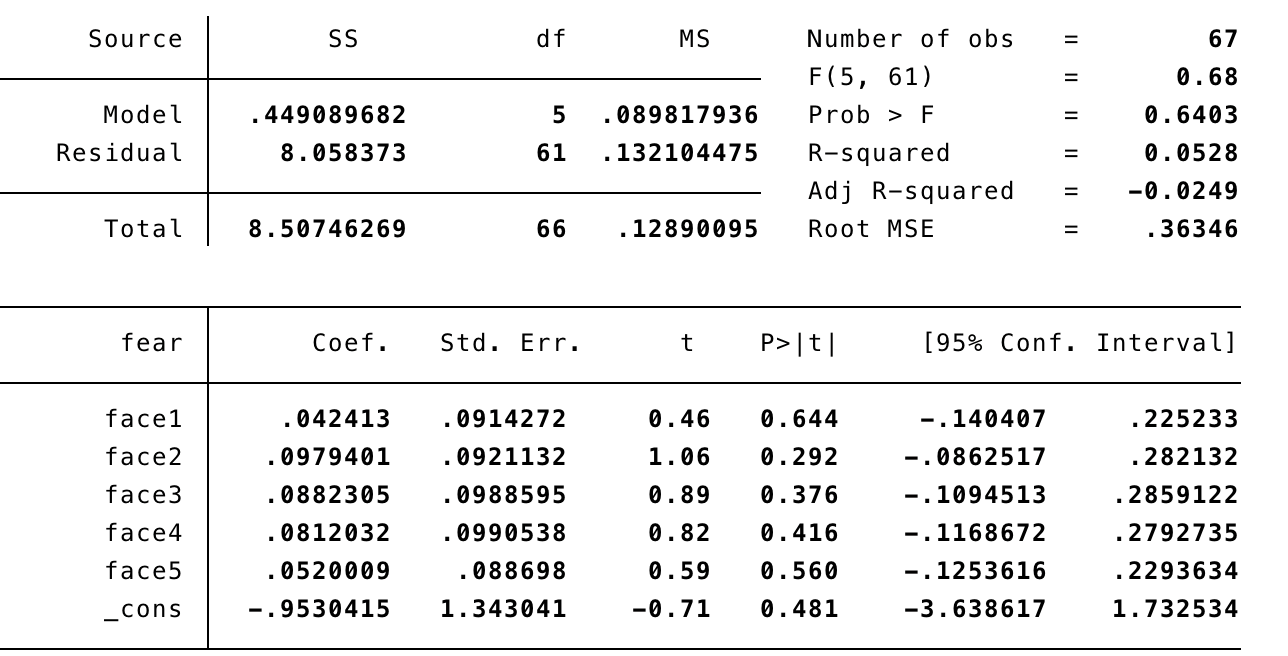

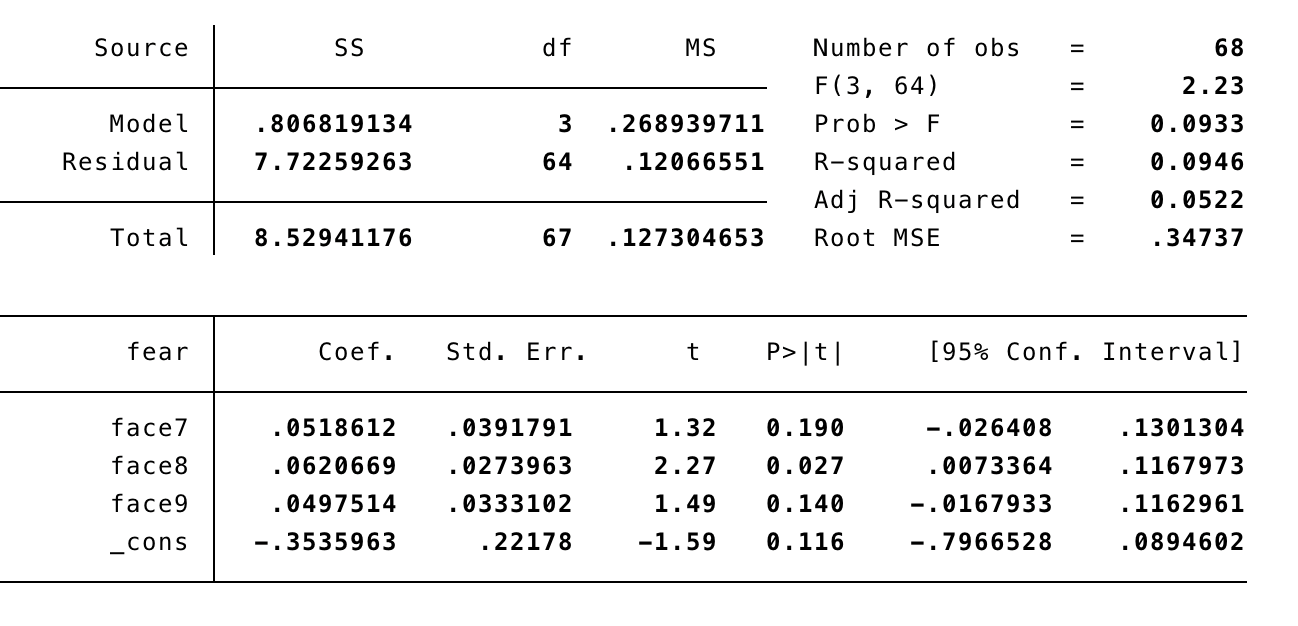

- The results of the regression on the emotion of fear are given in figure 10.

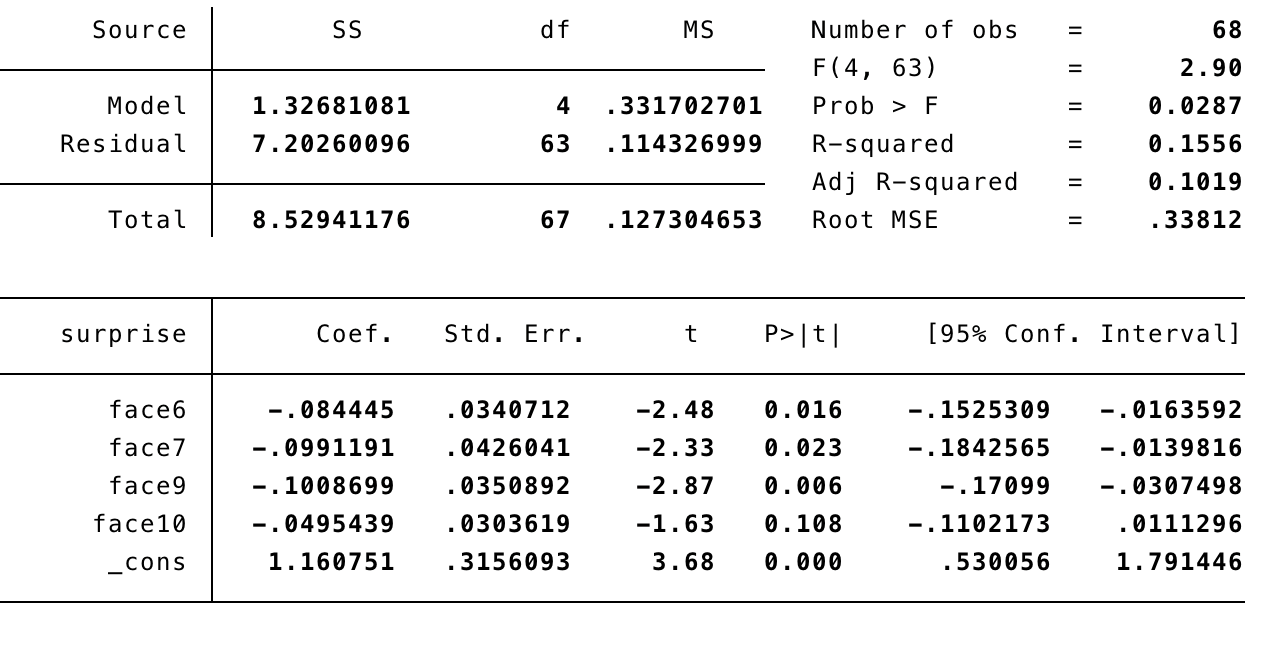

Next the results of the regressions on the faces and emotions of test 2 are assessed:

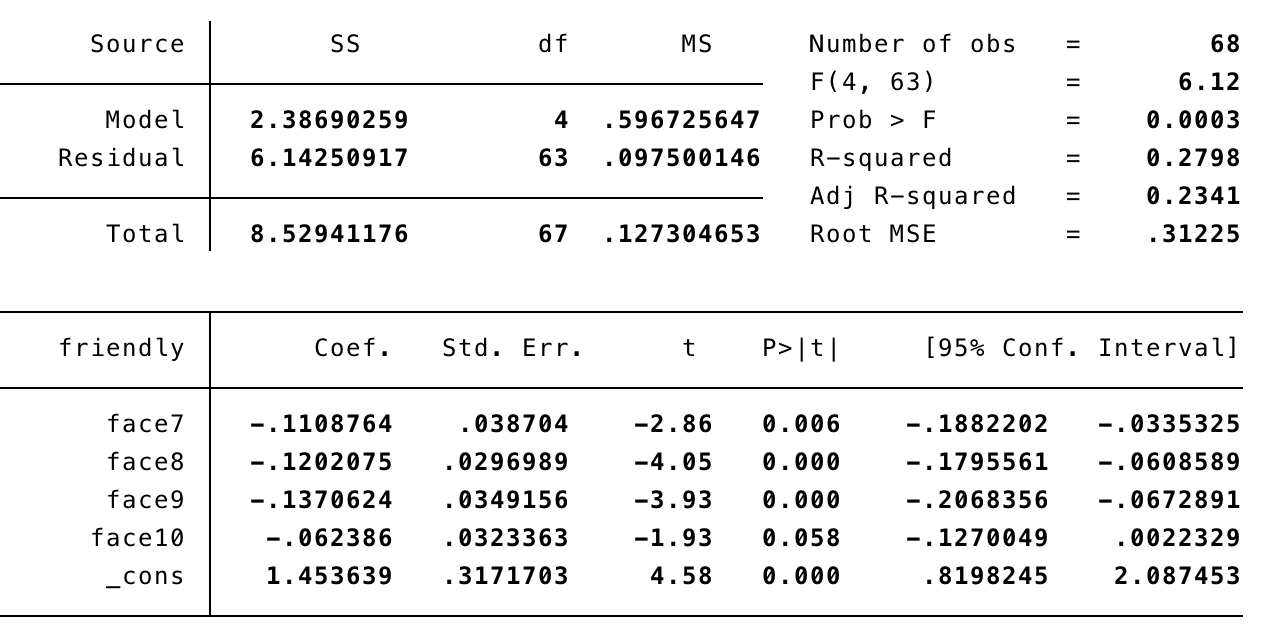

- The results of the regression on the emotion of friendliness are given in figure 11. Even though faces 7, 8 and 9 were significant in this model we tried to improve the model further, which resulted in the model shown in figure 12.

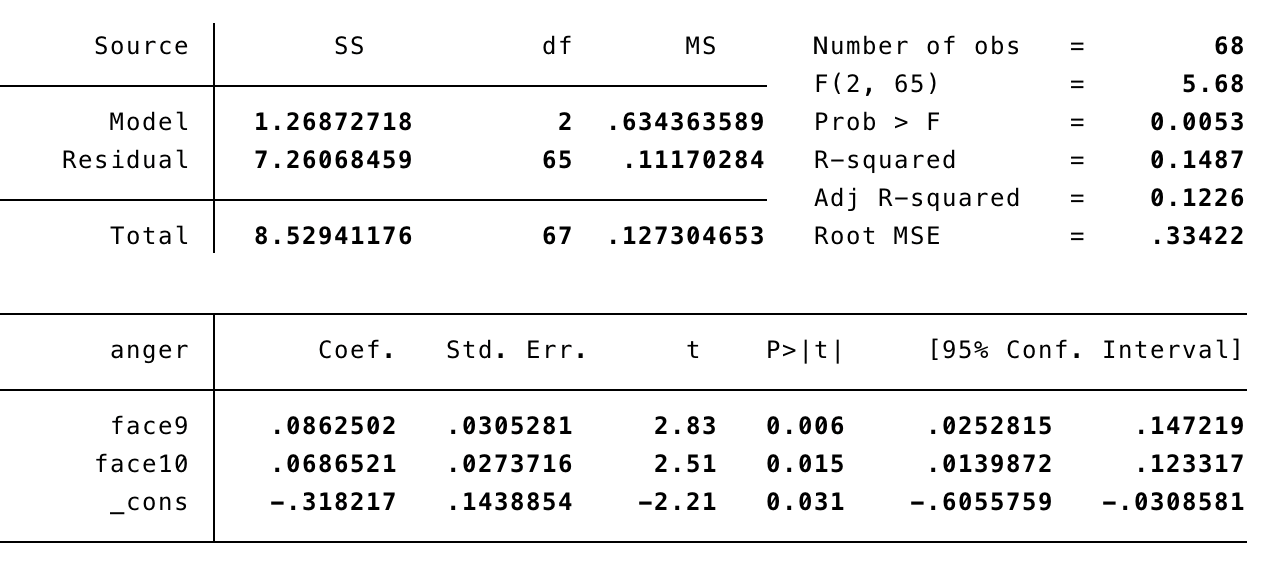

- The results of the regression on the emotion of anger are given in figure 13. Since none of the variables were significant we improved the model further, hence faces 6, 7 and 8 are missing in figure 13.

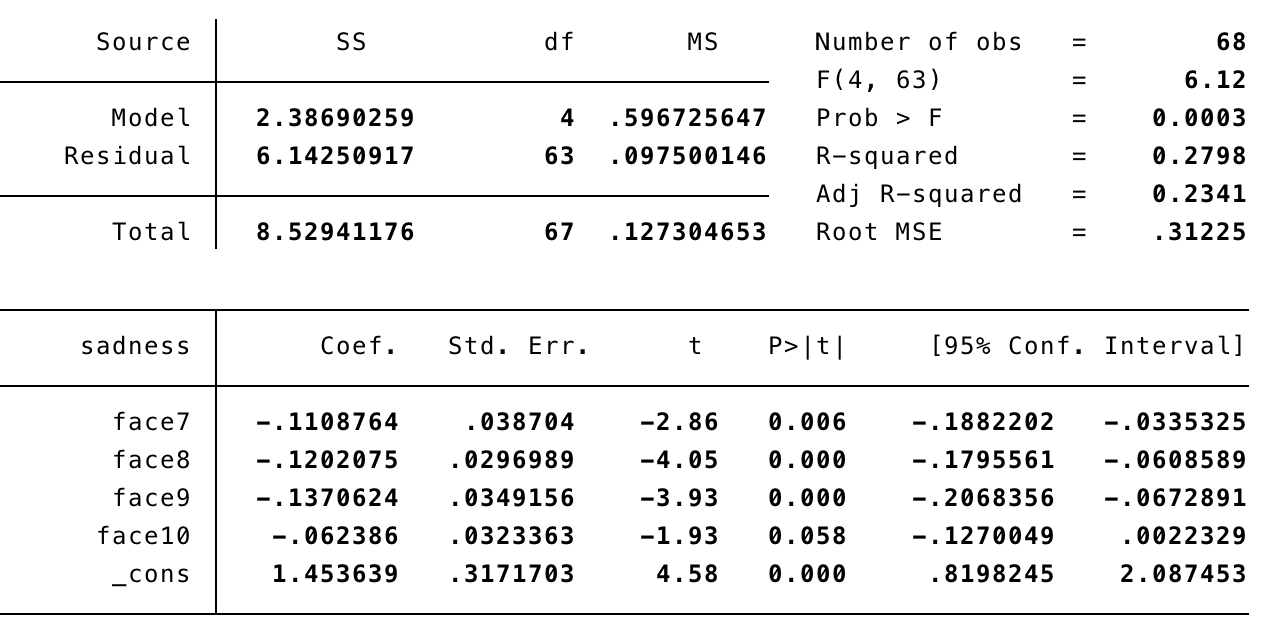

- The results of the regression on the emotion of sadness are given in figure 14. We tried to improve the model further by removing face 6.

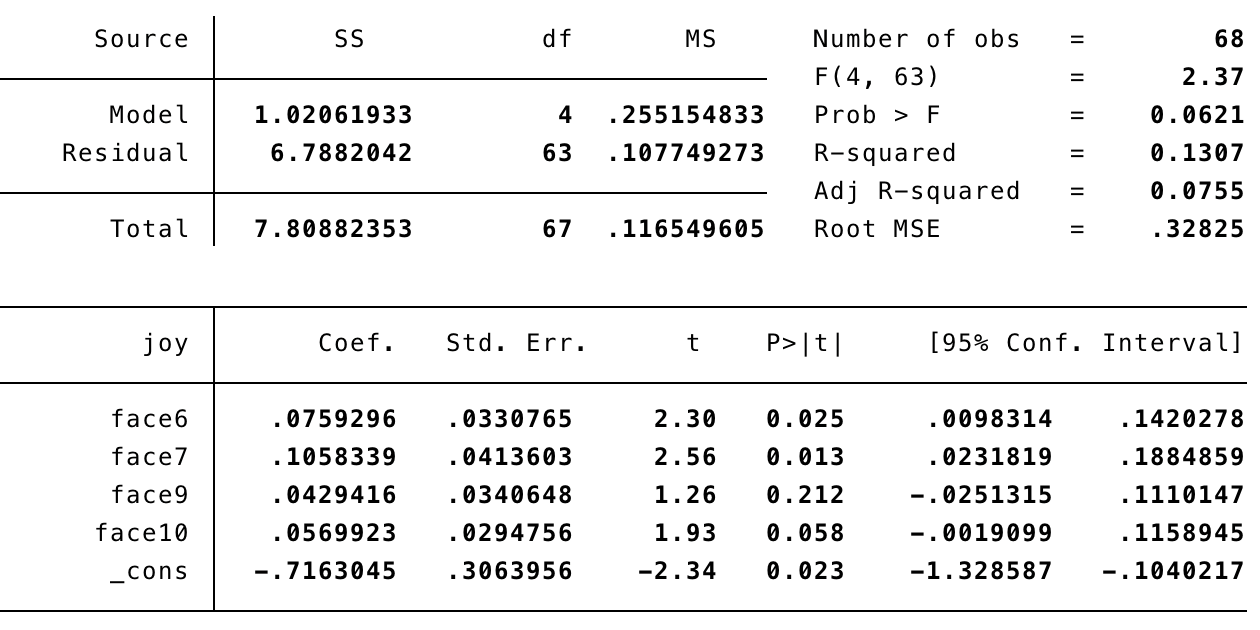

- The results of the regression on the emotion of joy are given in figure 15. Since no variables were significant, the model was improved by removing face 8.

- The results of the regression, after improvement, of the emotion of surprise are given in figure 16. Since no variables were significant, the model was improved by removing face 8.

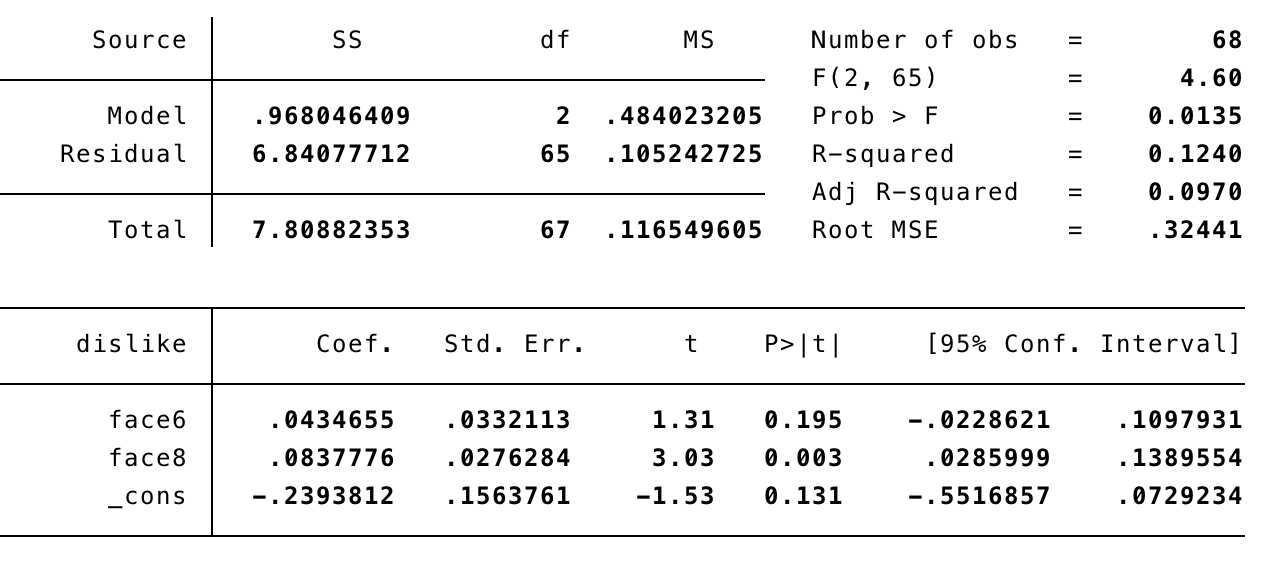

- The results of the regression, after improvement, of the emotion of dislike are given in figure 17. Since no variables were significant, the model was improved by removing face 8.

- The results of the regression, after improvement, on the emotion of fear are given in figure 18.

From these regression tables one can draw the following conclusions. For test 1:

- Face 1 (round eyes) was judged as expressing friendliness, sadness and joy;

- Face 3 (cubic eyes with sharp angles) was judged as expressing the emotions of anger and surprise;

- For the emotion of dislike, all faces were significant;

- None of the faces were significant for the emotion of fear in this test, which means that none of the faces were judged by the elderly participants as expressing fear in particular.

For test 2:

- The distribution of emotions over the faces was not as clear-cut as in test 1. Here, all faces except face 10 were associated with more than one emotion;

- face 10 (rounded yellow eyes) was only matched with anger;

- face 6 (rounded blue eyes) expresses joy and surprise;

- face 7 (rounded green eyes) expresses friendliness, sadness, joy and surprise;

- face 8 (rounded red eyes) expresses, according to the elderly participants, friendliness, sadness, dislike and fear;

- face 9 (rounded white eyes) expresses friendliness, anger, sadness and surprise. For surprise, face 9 was highly significant compared to the other faces.

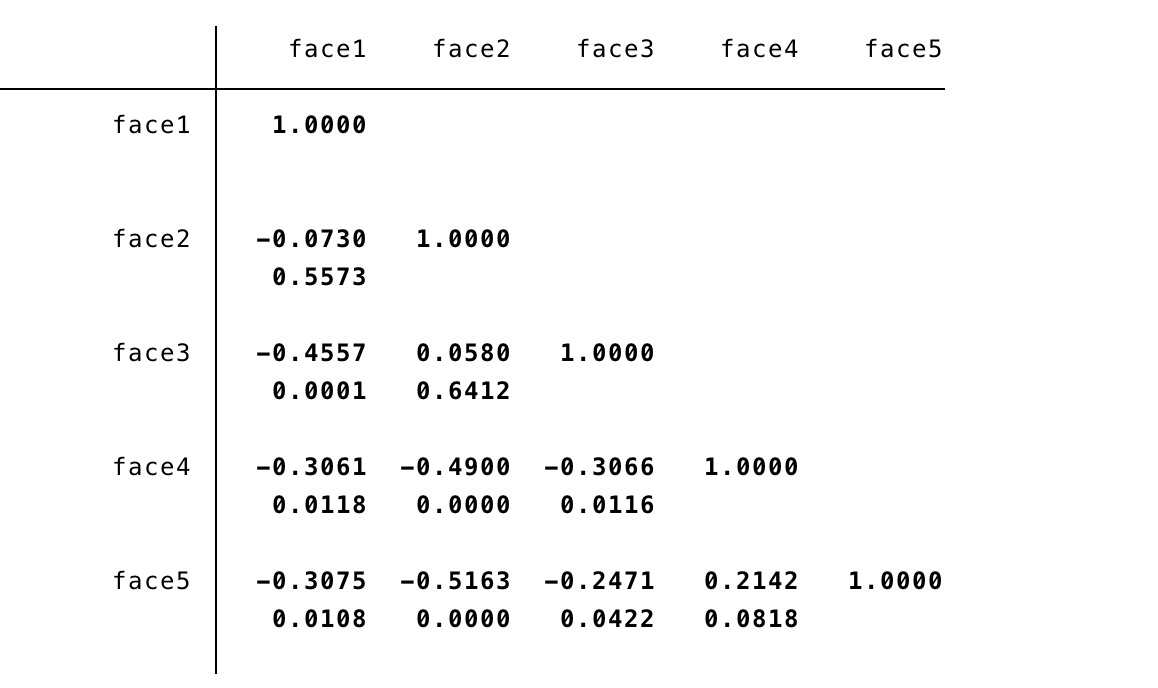

Correlation

Figures 20 and 21 show correlation tests for test 1 and test 2. From these figures one can conclude that faces 1 and 3 are not likely to be given the same score (and thus are usually not ordered next to each other).

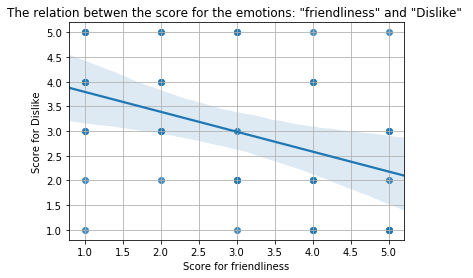

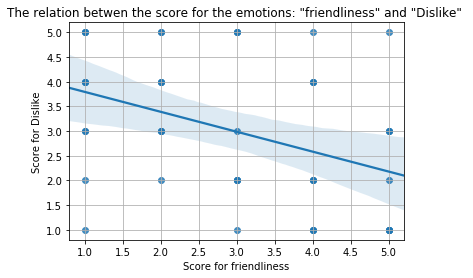

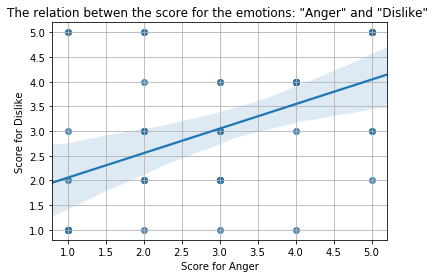

A regression was also done for the correlation between different emotions. For the combinations of emotions with the clearest correlation, the regression plots are given in figures 22, 23 and 24:

- From figure 22, one can derive that if an elderly participant gives a face a high score for the emotion of sadness, this person will most likely give the same face a low score for the emotion of friendliness. So, quite trivially, one can conclude that those emotions are each other's opposites.

- From figure 23, one can clearly derive a relationship between the emotions friendliness and joy, that is, a face with a high score for the emotion of friendliness is most likely given a high score for the emotion of joy as well.

- The same holds for the emotions anger and dislike.
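The relationships described in the list above can be quantified with a Pearson correlation coefficient, which is close to -1 for opposite emotions and close to +1 for emotions that go together. The sketch below is a plain-Python illustration with hypothetical scores for five faces. It does not reproduce the values behind figures 22, 23 and 24.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long score lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)


# Hypothetical scores that one participant gave to five faces.
friendliness = [5, 4, 3, 2, 1]
sadness = [1, 2, 3, 4, 5]
joy = [5, 3, 4, 2, 1]

r_friendliness_sadness = pearson(friendliness, sadness)
r_friendliness_joy = pearson(friendliness, joy)
print(round(r_friendliness_sadness, 2), round(r_friendliness_joy, 2))
```

In this made-up example the friendliness and sadness scores are exact mirror images, so their correlation is -1, while friendliness and joy are strongly positively correlated.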

Conclusion

Discussion

Looking back at the test we designed and executed, the majority went according to plan, but there were a couple of things that should be improved for a future test.

First of all, the face design we used as a base for testing the connection of shapes and color to the emotion of a robot’s expression had a slight grin in all variations. At first we anticipated this would not cause an issue because it was the same for each face, but during the test we noticed that at least one participant did not want to rate the proposed variations on anger or sadness because the face smiled. To solve this for a future test, a more neutral mouth shape should be used.

Secondly, we had only ten participants to collect our data from, all of whom lived in the same care facility. This means that the test population of our experiment, and therefore our analysis and conclusions, do not represent the target group on a real-world scale. To solve this, we would have to spread our group over different facilities while also making the test population larger, so that the results become more meaningful.

Thirdly, as we aimed to gather both quantitative and qualitative data, we asked participants to explain their reasoning behind the sorting of the faces in both tests. The problem we encountered was that the answers to these questions were generally superficial and did not provide enough added value. As a result, the interviewers tended to veer off the interview guide in order to still collect the qualitative part of the data.

USE aspects

Users

Society

Enterprise

Conclusion

Discussion

References

Useful links

Netflix film 'Next gen' [30]

1. https://www.oecd.org/newsroom/38528123.pdf OECD. (2007). Annual Report 2007. Paris: OECD Publishing.

2. https://www.sciencedirect.com/science/article/pii/S0897189704000874 ANR. (2005). Applied Nursing Research 18 (2005) 22-28

3. https://www.researchgate.net/publication/235328873_Dominance_and_valence_A_two-factor_model_for_emotion_in_HCI Dryer, Christopher. (1998). Dominance and valence: A two-factor model for emotion in HCI.

4. https://www.ncbi.nlm.nih.gov/pubmed/23177981 Elaine Mordoch, Angela Osterreicher, Lorna Guse, Kerstin Roger, Genevieve Thompson (2013), Use of social commitment robots in the care of elderly people with dementia: A literature review, Maturitas, p 14-20

5. http://www.cs.cmu.edu/~social/reading/breemen2004c.pdf

6. https://ieeexplore.ieee.org/document/4058873 S. Sosnowski, A. Bittermann, K. Kuhnlenz and M. Buss (2006), Design and Evaluation of Emotion-Display EDDIE, 2006 IEEE/RSJ International Conference on Intelligent Robots and Systems, pp. 3113-3118.

7. https://ieeexplore.ieee.org/document/4415108 D. Kwon et al. (2007), Emotion Interaction System for a Service Robot, RO-MAN 2007 - The 16th IEEE International Symposium on Robot and Human Interactive Communication, pp. 351-356.

8. https://ieeexplore.ieee.org/document/5326184 M. Zecca et al. (2009), Whole body emotion expressions for KOBIAN humanoid robot — preliminary experiments with different Emotional patterns —, RO-MAN 2009 - The 18th IEEE International Symposium on Robot and Human Interactive Communication, pp. 381-386.

9. https://affect.media.mit.edu/pdfs/99.picard-hci.pdf R. W. Picard (2003), Affective computing for HCI

10. https://www.researchgate.net/publication/242107189_Emotion_in_Human-Computer_Interaction Brave, Scott & Nass, Clifford. (2002). Emotion in Human–Computer Interaction. The Human-Computer Interaction Handbook: Fundamentals, Evolving Technologies and Emerging Applications. 10.1201/b10368-6.

11. https://www.ncbi.nlm.nih.gov/pubmed/2029364 Levenson, R. W., Carstensen, L. L., Friesen, W. V., & Ekman, P. (1991). Emotion, physiology, and expression in old age. Psychology and Aging, 6(1), 28-35.

12. https://www.tandfonline.com/doi/abs/10.1080/00207450490270901 Susan Sullivan & Ted Ruffman (2004) Emotion recognition deficits in the elderly, International Journal of Neuroscience, 403-432.

13. https://ieeexplore.ieee.org/document/911197 R. Cowie et al., "Emotion recognition in human-computer interaction," in IEEE Signal Processing Magazine, 32-80, Jan 2001.

14. https://www.researchgate.net/publication/3450481_Living_With_Seal_Robots_-_Its_Sociopsychological_and_Physiological_Influences_on_the_Elderly_at_a_Care_House Wada, K & Shibata, Takanori. (2007). Living With Seal Robots - Its Sociopsychological and Physiological Influences on the Elderly at a Care House. Robotics, 972-980.

15. https://www.researchgate.net/publication/229058790_Assistive_social_robots_in_elderly_care_A_review Broekens, Joost & Heerink, Marcel & Rosendal, Henk. (2009). Assistive social robots in elderly care: A review. Gerontechnology, 94-103.

16. https://www.researchgate.net/publication/226452328_Granny_and_the_robots_Ethical_issues_in_robot_care_for_the_elderly Sharkey, Amanda & Sharkey, Noel. (2010). Granny and the robots: Ethical issues in robot care for the elderly. Ethics and Information Technology. 27-40.

17. https://ieeexplore.ieee.org/document/5751987 A. Sharkey and N. Sharkey, "Children, the Elderly, and Interactive Robots," in IEEE Robotics & Automation Magazine, vol. 18, no. 1, pp. 32-38, March 2011. doi: 10.1109/MRA.2010.940151

18. https://www.ncbi.nlm.nih.gov/pubmed/11742772 FG. Miskelly, Assistive technology in elderly care. Department of Medicine for the Elderly

19. https://alzres.biomedcentral.com/articles/10.1186/alzrt143 M. Wortmann, Alzheimer's Research & Therapy (2012)

20. https://academic.oup.com/biomedgerontology/article/59/1/M83/533605 The Journals of Gerontology: Series A, Volume 59, Issue 1, 1 January 2004, Pages M83–M85

21. https://dl.acm.org/citation.cfm?id=1358952&dl=ACM&coll=DL N. Sadat Shami, Jeffrey T. Hancock, Christian Peter, Michael Muller, and Regan Mandryk. 2008. Measuring affect in hci: going beyond the individual. In CHI '08 Extended Abstracts on Human Factors in Computing Systems (CHI EA '08). ACM, New York, NY, USA, 3901-3904. DOI: https://doi.org/10.1145/1358628.1358952

22. https://ieeexplore.ieee.org/document/1642261 H. Shibata, M. Kanoh, S. Kato and H. Itoh, "A system for converting robot 'emotion' into facial expressions," Proceedings 2006 IEEE International Conference on Robotics and Automation, 2006. ICRA 2006., Orlando, FL, 2006, pp. 3660-3665. doi: 10.1109/ROBOT.2006.1642261

23. https://ieeexplore.ieee.org/document/4755969 M. Zecca, N. Endo, S. Momoki, Kazuko Itoh and Atsuo Takanishi, "Design of the humanoid robot KOBIAN - preliminary analysis of facial and whole body emotion expression capabilities-," Humanoids 2008 - 8th IEEE-RAS International Conference on Humanoid Robots, Daejeon, 2008, pp. 487-492. doi: 10.1109/ICHR.2008.4755969

24. https://www.researchgate.net/publication/235328873_Dominance_and_valence_A_two-factor_model_for_emotion_in_HCI Dryer, Christopher. (1998). Dominance and valence: A two-factor model for emotion in HCI.

25. https://www.ncbi.nlm.nih.gov/pubmed/15812732 AM. Williams et al., 'Enhancing the therapeutic potential of hospital environments by increasing the personal control and emotional comfort of hospitalized patients.' (2005)

26. https://www.ncbi.nlm.nih.gov/pubmed/15936178 A. Tessitore et al., 'Functional changes in the activity of brain regions underlying emotion processing in the elderly.' (2005)

27. https://academic.oup.com/ppar/article-abstract/17/4/12/1456824?redirectedFrom=fulltext Adele M. Hayutin; Graying of the Global Population, Public Policy & Aging Report, Volume 17, Issue 4, 1 September 2007, Pages 12–17

28. https://www.who.int/hrh/com-heeg/reports/en/ World Health Organisation, 'Working for health and growth: investing in the health workforce' (2016)

29. https://www.researchgate.net/publication/323590718_Characterizing_the_Design_Space_of_Rendered_Robot_Faces Kalegina, Alisa & Schroeder, Grace & Allchin, Aidan & Berlin, Keara & Cakmak, Maya. (2018). Characterizing the Design Space of Rendered Robot Faces. 96-104. 10.1145/3171221.3171286

30. https://www.youtube.com/watch?v=uf3ALGKgpGU Netflix film trailer 'Next gen'