Embedded Motion Control 2013 Group 10

Group Name

Team Picobello

Group Members

| Name | Student id | Email |
|---|---|---|
| Pepijn Smits | 0651350 | p.smits.1@student.tue.nl |
| Sanne Janssen | 0657513 | s.e.m.janssen@student.tue.nl |
| Rokus Ottervanger | 0650019 | r.a.ottervanger@student.tue.nl |
| Tim Korssen | 0649843 | t.korssen@student.tue.nl |

Introduction

Nowadays, many human tasks have been taken over by robots. In the future, robot motion control and embedded motion control will allow us to use robots for many more purposes, such as health care. These (humanoid) robots therefore have to be designed such that they can adapt to any sudden situation.

The goal of this project is to make the real-time concepts of embedded software design operational. This wiki page reviews the design choices that have been made in programming the Jazz robot Pico. Pico is programmed to navigate fully autonomously through a maze, without any user intervention.

This wiki page describes three different programs. The first program was used for the corridor competition. It implements basic skills such as avoiding collisions with the walls, finding corners and turning.

After the corridor competition, a new design strategy was adopted. The second program is a low-level wall follower: by keeping the right wall at a fixed distance from Pico, this fairly simple but robust code guides Pico through the maze.

Besides this wall follower, a high-level approach was developed. This maze-solving program uses wall (line) detection to build a navigation map, which Pico uses to create and follow path lines that solve the maze. To update the position of Pico, the odometry information and the local and global maps are used.

The latter two programs use Pico’s camera to detect arrows in the maze that point Pico in the right direction.

Data processing

Pico produces a large amount of sensor data. Most of this data needs to be preprocessed before it can be used by the maze-solving algorithm. The odometry data, laser scan data and image data are discussed below.

Odometry data

One of the incoming data types is odometry. The odometry information is based on the angular positions of the robot wheels. These angular positions are converted into a position in a Cartesian coordinate system, which gives the position and orientation of Pico relative to its starting point. For the navigation software of Pico, only the x,y-position and [math]\displaystyle{ \theta }[/math]-orientation are of interest.

Due to wheel slip, the odometry information is never fully accurate. Therefore, the odometry is only used as an initial guess in the map-based navigation software. For accurate localization, it always needs to be corrected.

This correction is based on the deviation vector obtained from the map updater. This deviation vector gives the (x,y,θ)-shift that is needed to fit the local map onto the global map, and therefore represents the error in the odometry. Because of the large amount of communication required between the parts of the software that create the global map and the part that keeps track of the accurate position, these parts are programmed together in one node. This node essentially runs a custom SLAM (Simultaneous Localization and Mapping) algorithm.
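To make the correction step concrete, below is a minimal sketch of how a deviation vector (dx, dy, dθ) could be applied to the odometry pose. It assumes the shift is a rigid transform expressed in the global frame (rotate by dθ about the global origin, then translate by (dx, dy)); the names `Pose2D`, `wrapAngle` and `correctPose` are hypothetical and not taken from the actual node.

```cpp
#include <cmath>

// Planar pose: position (x, y) and heading theta in radians.
struct Pose2D {
    double x;
    double y;
    double theta;
};

// Wrap an angle to the interval (-pi, pi].
double wrapAngle(double a) {
    const double pi = std::acos(-1.0);
    while (a > pi) a -= 2.0 * pi;
    while (a <= -pi) a += 2.0 * pi;
    return a;
}

// Hypothetical correction step: the deviation (dx, dy, dtheta) that fits the
// local map onto the global map is applied to the odometry pose as well.
// Assumption: rotate by dtheta about the global origin, then translate.
Pose2D correctPose(const Pose2D& odom, const Pose2D& deviation) {
    const double c = std::cos(deviation.theta);
    const double s = std::sin(deviation.theta);
    Pose2D corrected;
    corrected.x = c * odom.x - s * odom.y + deviation.x;
    corrected.y = s * odom.x + c * odom.y + deviation.y;
    corrected.theta = wrapAngle(odom.theta + deviation.theta);
    return corrected;
}
```

If the map updater instead reports the shift relative to Pico's own frame, the rotation would have to be applied about Pico's position rather than the global origin.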

Laserscan data

To reduce measurement noise and remove faulty measurements, the laser data is filtered. Noise is reduced with a Gaussian filter, which smooths each data point over its eight neighboring data points.

[math]\displaystyle{ G(x)=\frac{1}{\sigma\sqrt{2\pi}}e^{-\frac{x^2}{2 \sigma^2}} }[/math] with [math]\displaystyle{ \sigma = 2.0 }[/math], where x is the offset (in data points) from the point being smoothed.

Faulty measurements are eliminated in three different ways. First, data points that deviate strongly from their neighbors are excluded from the Gaussian filter, so that the filter does not close openings. When data points are excluded, the Gaussian weights are normalized over the points that are actually used.
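A minimal sketch of such a filter is shown below. It assumes the eight neighbors are four samples on each side, and that "deviates strongly" means the range differs from the center sample by more than a threshold `maxJump` (0.3 here is a hypothetical value). Because the weights are renormalized, the constant factor of G(x) cancels and only the exponential is needed.

```cpp
#include <cmath>
#include <vector>

// Gaussian smoothing of laser ranges with outlier exclusion (sketch).
// halfWidth = 4 gives eight neighbours; maxJump is a hypothetical threshold.
std::vector<double> gaussianFilterRanges(const std::vector<double>& ranges,
                                         double sigma = 2.0,
                                         int halfWidth = 4,
                                         double maxJump = 0.3) {
    const int n = static_cast<int>(ranges.size());
    std::vector<double> out(ranges.size());
    for (int i = 0; i < n; ++i) {
        double weightedSum = 0.0;
        double weightTotal = 0.0;
        for (int k = -halfWidth; k <= halfWidth; ++k) {
            const int j = i + k;
            if (j < 0 || j >= n) continue;  // outside the scan
            // Skip neighbours across a large jump so openings stay open.
            if (std::fabs(ranges[j] - ranges[i]) > maxJump) continue;
            const double w = std::exp(-(k * k) / (2.0 * sigma * sigma));
            weightedSum += w * ranges[j];
            weightTotal += w;
        }
        // The centre sample always contributes, so weightTotal > 0.
        out[i] = weightedSum / weightTotal;
    }
    return out;
}
```

With this choice a step in the ranges (for example the edge of a side corridor) is preserved, because samples on the other side of the step never enter the weighted average.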

Second, the first and last 15 data points are discarded. This reduces the field of view by 7.5 degrees on each side, but prevents the robot from measuring itself. Finally, only data points within a certain range are used, which also prevents measuring the robot itself and eliminates outliers.
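The two remaining steps can be sketched as a single pass over the scan. Discarding 15 samples for 7.5 degrees implies roughly 0.5 degrees per sample. The limits `minRange` and `maxRange` below are hypothetical placeholders, since the actual range is not stated; the original sample index is kept so that the beam angle can still be recovered later (for example as `angle_min + index * angle_increment` of a ROS `sensor_msgs/LaserScan` message).

```cpp
#include <vector>

// A laser sample that survived filtering, with its original index.
struct ScanPoint {
    int index;
    double range;
};

// Drop the first and last `skip` samples and every range outside
// [minRange, maxRange]. minRange and maxRange are hypothetical values.
std::vector<ScanPoint> cropAndGate(const std::vector<double>& ranges,
                                   int skip = 15,
                                   double minRange = 0.1,
                                   double maxRange = 10.0) {
    std::vector<ScanPoint> kept;
    const int n = static_cast<int>(ranges.size());
    for (int i = skip; i < n - skip; ++i) {
        if (ranges[i] < minRange || ranges[i] > maxRange) continue;
        kept.push_back({i, ranges[i]});
    }
    return kept;
}
```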

Arrow detection

Binary image

From a full-color camera image, we want to determine whether it contains an arrow and, if so, in which direction it points. Because the full-color image is too complex to process directly, it is transformed into a binary image. Since the arrows used in the competition are red, every pixel that is red (within a certain margin) is set to white and all remaining pixels are set to black.
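The thresholding idea is sketched below in plain C++ on a flat list of RGB pixels, so that it runs without OpenCV. It assumes a pixel counts as red when its red channel is bright enough and exceeds both green and blue by a margin; `minRed` and `margin` are hypothetical values. With OpenCV the same step is commonly done with `cv::inRange`, often after converting the image to HSV with `cv::cvtColor`.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <vector>

struct RGB {
    std::uint8_t r, g, b;
};

// Binary mask: 255 (white) for "red" pixels, 0 (black) for everything else.
// minRed and margin are hypothetical thresholds.
std::vector<std::uint8_t> redMask(const std::vector<RGB>& pixels,
                                  int minRed = 100, int margin = 50) {
    std::vector<std::uint8_t> mask(pixels.size(), 0);
    for (std::size_t i = 0; i < pixels.size(); ++i) {
        const int r = pixels[i].r;
        const int other = std::max(pixels[i].g, pixels[i].b);
        if (r >= minRed && r - other >= margin) mask[i] = 255;
    }
    return mask;
}
```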

Canny edge detection

Next, edges are detected with the Canny edge detection algorithm from OpenCV. This algorithm first applies a Gaussian filter to reduce noise, similar to the one explained above, and then computes the gradient of the smoothed image. At an edge there is a strong transition from white to black, resulting in an extreme value of the gradient. By thresholding the gradient, edges can therefore be detected.
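The core idea, "a large gradient means an edge", is illustrated by the simplified detector below. This is explicitly not the full Canny algorithm: it uses 3x3 Sobel kernels on a row-major grayscale image and a single threshold, and omits Canny's Gaussian smoothing, non-maximum suppression and hysteresis. In OpenCV the real detector is called as `cv::Canny(src, edges, threshold1, threshold2)`; the threshold of 100 here is a hypothetical value.

```cpp
#include <cmath>
#include <cstdint>
#include <vector>

// Simplified gradient-threshold edge detector (not full Canny).
// img is row-major, width * height pixels; border pixels are left as 0.
std::vector<std::uint8_t> gradientEdges(const std::vector<std::uint8_t>& img,
                                        int width, int height,
                                        double threshold = 100.0) {
    std::vector<std::uint8_t> edges(img.size(), 0);
    auto at = [&](int x, int y) { return static_cast<double>(img[y * width + x]); };
    for (int y = 1; y < height - 1; ++y) {
        for (int x = 1; x < width - 1; ++x) {
            // Sobel estimates of the horizontal and vertical derivative.
            const double gx = (at(x + 1, y - 1) + 2 * at(x + 1, y) + at(x + 1, y + 1))
                            - (at(x - 1, y - 1) + 2 * at(x - 1, y) + at(x - 1, y + 1));
            const double gy = (at(x - 1, y + 1) + 2 * at(x, y + 1) + at(x + 1, y + 1))
                            - (at(x - 1, y - 1) + 2 * at(x, y - 1) + at(x + 1, y - 1));
            if (std::hypot(gx, gy) > threshold) edges[y * width + x] = 255;
        }
    }
    return edges;
}
```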

Find contours

The binary edge image from the Canny edge detection is used as input to the findContours function from OpenCV. This function finds contours by connecting neighboring edge points and returns a vector of contours as output, where each contour is a vector of points.

Arrow detection

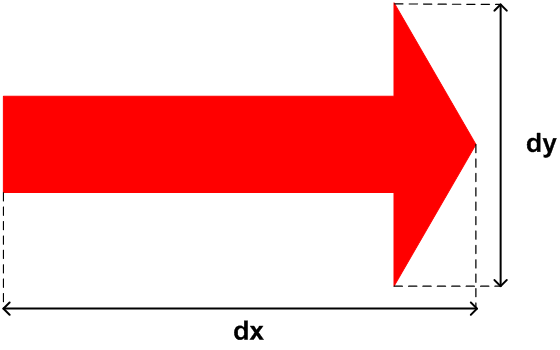

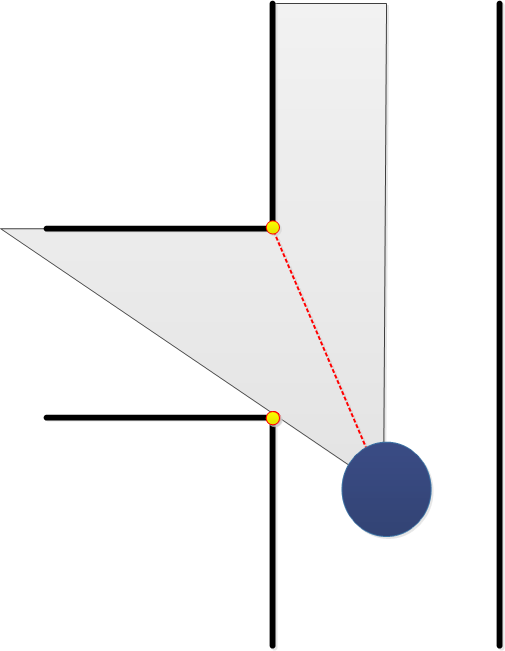

With the image processed, arrow detection can start. Arrows are detected in two steps. First, the ratio dx/dy of the horizontal size dx to the vertical size dy of each contour is determined, to see whether the contour can be an arrow (see figure 1). The arrow used in the competition has a ratio of 2.9; with a lower and an upper bound, this results in: [math]\displaystyle{ 2.4\lt \frac{dx}{dy} \lt 3.8 }[/math]
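The first test can be sketched as follows, assuming dx and dy are taken from the axis-aligned bounding box of the contour (OpenCV's `cv::boundingRect` computes a comparable box, although its width and height include one extra pixel). The `Point` type mirrors the vector-of-points contour described above; the function names are hypothetical.

```cpp
#include <algorithm>
#include <vector>

struct Point {
    int x;
    int y;
};

struct Box {
    int minX, maxX, minY, maxY;
};

// Axis-aligned bounding box of a non-empty contour.
Box boundingBox(const std::vector<Point>& contour) {
    Box b{contour[0].x, contour[0].x, contour[0].y, contour[0].y};
    for (const Point& p : contour) {
        b.minX = std::min(b.minX, p.x);
        b.maxX = std::max(b.maxX, p.x);
        b.minY = std::min(b.minY, p.y);
        b.maxY = std::max(b.maxY, p.y);
    }
    return b;
}

// First test: the competition arrow has dx/dy of about 2.9,
// accepted if 2.4 < dx/dy < 3.8.
bool hasArrowAspectRatio(const std::vector<Point>& contour) {
    if (contour.empty()) return false;
    const Box b = boundingBox(contour);
    const double dx = b.maxX - b.minX;
    const double dy = b.maxY - b.minY;
    if (dy <= 0.0) return false;  // flat contour, cannot be an arrow
    const double ratio = dx / dy;
    return ratio > 2.4 && ratio < 3.8;
}
```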

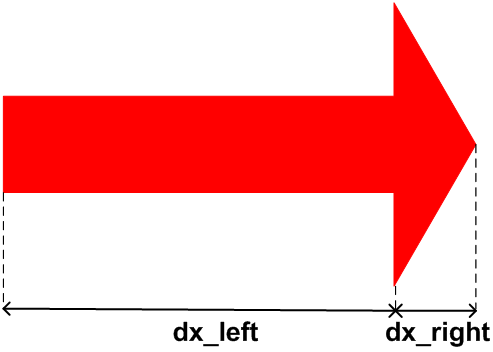

Next, if a contour can be an arrow, its direction is investigated. This is done by determining the ratio of dx_left and dx_right (see figure 2). If the largest width (dy) is shifted towards the right, then dx_left > dx_right, and vice versa. With a lower and an upper bound, this results in:

Right arrow if:

- [math]\displaystyle{ dx_{left} \gt \frac{5}{4} dx_{right} }[/math]

Left arrow if:

- [math]\displaystyle{ dx_{left} \lt \frac{4}{5} dx_{right} }[/math]

No arrow if:

- [math]\displaystyle{ \frac{4}{5} dx_{right} \leq dx_{left} \leq \frac{5}{4} dx_{right} }[/math]

If the position of the largest width lies between these margins, the contour is not treated as an arrow. This may not be the most robust way to detect an arrow, but it worked well during the tests. Other methods that could be used, or combined, to make the arrow detection more robust are circularity, shape matching, matching of moments, or finding lines with the Hough transform.
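The direction test can be sketched as below. How dx_left and dx_right are measured is an assumption here: for every image column the vertical extent of the contour is computed, the tallest column is taken as the position of the largest width (dy), and dx_left and dx_right are the horizontal distances from that column to the left and right ends of the contour. The names `ArrowDirection` and `classifyArrow` are hypothetical.

```cpp
#include <algorithm>
#include <map>
#include <utility>
#include <vector>

struct Point {
    int x;
    int y;
};

enum class ArrowDirection { Left, Right, None };

// Classify a candidate contour with the 4/5 and 5/4 bounds from the text.
ArrowDirection classifyArrow(const std::vector<Point>& contour) {
    if (contour.empty()) return ArrowDirection::None;
    // For each column x: (min y, max y) of the contour points in that column.
    std::map<int, std::pair<int, int>> columns;
    for (const Point& p : contour) {
        auto it = columns.find(p.x);
        if (it == columns.end()) {
            columns[p.x] = {p.y, p.y};
        } else {
            it->second.first = std::min(it->second.first, p.y);
            it->second.second = std::max(it->second.second, p.y);
        }
    }
    const int minX = columns.begin()->first;
    const int maxX = columns.rbegin()->first;
    int tallestX = minX;
    int tallestDy = -1;
    for (const auto& entry : columns) {
        const int dy = entry.second.second - entry.second.first;
        if (dy > tallestDy) {
            tallestDy = dy;
            tallestX = entry.first;
        }
    }
    const double dxLeft = tallestX - minX;
    const double dxRight = maxX - tallestX;
    if (dxLeft > 1.25 * dxRight) return ArrowDirection::Right;
    if (dxLeft < 0.8 * dxRight) return ArrowDirection::Left;
    return ArrowDirection::None;  // 4/5 dx_right <= dx_left <= 5/4 dx_right
}
```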

Program 1: Corridor Challenge solver

Wall avoidance

Edge detection

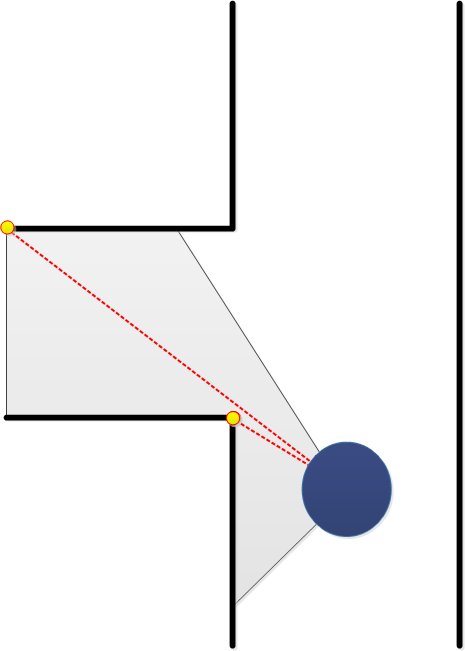

For the corridor competition, Pico should first be able to detect an outside corner (see picture 1). To detect corners, the laser range data is used.

The robot searches on its left and right for large differences in wall distance. If the ratio between two neighboring laser ranges exceeds a certain threshold, the position (1) of the outside corner is saved. The side on which this corner lies (left or right) is stored as well. Once the first corner has been found, Pico searches on this side for other corners. This search starts at the first corner and looks for the shortest laser range distance. This point is saved as the second corner position (see picture 2). The coordinates of the corners are sent to the make turn function, which controls Pico’s movement.
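Both searches can be sketched on one side of the scan, assuming the samples are visited from the side of the robot towards the front. The first corner is taken as the nearer sample of the first neighboring pair whose range ratio exceeds `jumpRatio` (1.5 is a hypothetical threshold); the second corner is the shortest range beyond that jump. `CornerPair` and `findCorners` are hypothetical names, and indices refer to laser samples.

```cpp
#include <vector>

// Laser-sample indices of the two corners of a side exit (-1 = not found).
struct CornerPair {
    int first = -1;
    int second = -1;
};

// Search ranges from index `from` towards `to` (exclusive) with step +1 or -1.
// jumpRatio is a hypothetical threshold for a "large difference".
CornerPair findCorners(const std::vector<double>& ranges, int from, int to,
                       int step, double jumpRatio = 1.5) {
    auto inside = [&](int i) { return step > 0 ? i < to : i > to; };
    CornerPair result;
    // First corner: the range suddenly becomes much longer (opening).
    for (int i = from; inside(i) && inside(i + step); i += step) {
        if (ranges[i + step] / ranges[i] > jumpRatio) {
            result.first = i;  // the nearer sample is the outside corner
            break;
        }
    }
    if (result.first < 0) return result;  // no side exit on this side
    // Second corner: shortest range beyond the first corner.
    double best = 0.0;
    for (int i = result.first + step; inside(i); i += step) {
        if (result.second < 0 || ranges[i] < best) {
            best = ranges[i];
            result.second = i;
        }
    }
    return result;
}
```

For the left side, `from` would be the index pointing sideways and `to` the index pointing forward (or the reverse order with `step = -1`, depending on how the scan is indexed).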

Once Pico has passed the first corner, the first algorithm explained above can no longer recognize this corner. To avoid problems, the second algorithm is then used for both the first and the last corner (see picture x.x).

Turning into the corridor

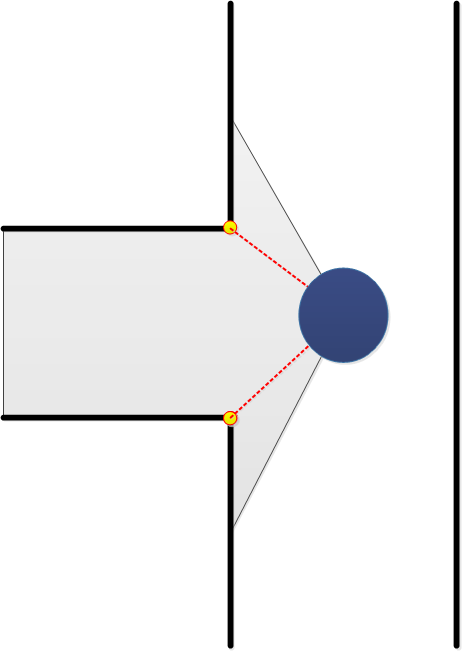

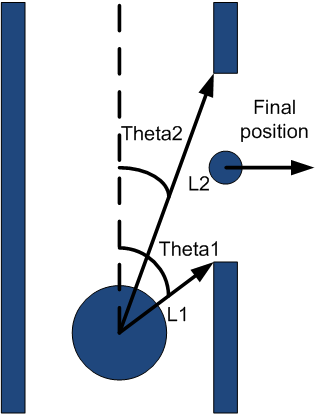

Once the first corner has been passed, a boolean is set to true and the centering algorithm is replaced by the algorithm that makes the turn. This algorithm uses the two corners of the side exit found by the corner detection algorithm.

First, Pico drives a little further towards the side exit while avoiding a collision with the first corner. When the middle of the side exit is reached (L1 = L2 in figure XX), Pico turns until the angles to both corners are equal (Theta1 = Theta2). It then drives into the exit while keeping the difference between the distances to the corners within a margin. When the absolute values of both angles to the corners are larger than 90 degrees, the make turn algorithm is switched off and the centering algorithm is switched on again.
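The turning procedure can be read as a small state machine, sketched below. Several assumptions are made: corner bearings are signed (positive to the left), so "equal angles" is interpreted as equal magnitudes on opposite sides of the heading, i.e. Theta1 + Theta2 = 0; the speeds, gains and tolerances are hypothetical; and collision avoidance near the first corner is left to the wall avoidance function. All names are hypothetical.

```cpp
#include <cmath>

// A corner as seen from Pico: distance and signed bearing (rad, left > 0).
struct Corner {
    double dist;
    double angle;
};

struct VelocityCommand {
    double forward;
    double rotation;
};

enum class TurnPhase { Approach, Align, Enter, Done };

// One control step of the make-turn behaviour (sketch, hypothetical values).
VelocityCommand makeTurnStep(TurnPhase& phase, const Corner& c1, const Corner& c2) {
    const double halfPi = std::acos(-1.0) / 2.0;
    const double distTol = 0.05;   // tolerance on L1 = L2
    const double angleTol = 0.05;  // tolerance on Theta1 = Theta2
    switch (phase) {
    case TurnPhase::Approach:
        // Drive on until Pico is at the middle of the side exit (L1 = L2).
        if (std::fabs(c1.dist - c2.dist) < distTol) {
            phase = TurnPhase::Align;
            return {0.0, 0.0};
        }
        return {0.2, 0.0};
    case TurnPhase::Align: {
        // Rotate towards the mean bearing until the corners are symmetric.
        const double error = 0.5 * (c1.angle + c2.angle);
        if (std::fabs(error) < angleTol) {
            phase = TurnPhase::Enter;
            return {0.0, 0.0};
        }
        return {0.0, 1.0 * error};
    }
    case TurnPhase::Enter: {
        // Both corners behind Pico: hand control back to centering.
        if (std::fabs(c1.angle) > halfPi && std::fabs(c2.angle) > halfPi) {
            phase = TurnPhase::Done;
            return {0.0, 0.0};
        }
        // Keep |L1 - L2| within the margin: steer towards the farther corner.
        const double diff = c1.dist - c2.dist;
        double rotation = 0.0;
        if (std::fabs(diff) > distTol) {
            const double towardsC1 = (c1.angle >= 0.0) ? 1.0 : -1.0;
            rotation = 0.5 * diff * towardsC1;
        }
        return {0.2, rotation};
    }
    case TurnPhase::Done:
        break;
    }
    return {0.0, 0.0};
}
```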

The algorithm could be improved by not only using the distances to the corners, but also preventing Pico from coming too close to the other wall of the corridor. This is not a big problem, since the wall avoidance algorithm prevents collisions.

Centering algorithm

This algorithm uses the shortest distances to the left and right walls and the corresponding angles. These properties are derived in the function ‘detectWalls’ and combined in one variable. The output of the algorithm is a forward velocity and a rotation rate.

First, the current corridor width is determined by summing the shortest distances to the left and the right. This sum is incorrect when a side exit is reached, but this is not a problem, since a different function is then used to make the turn.

If the distance to one of the sides is smaller than one third of the corridor width (the red area in figure XX), the algorithm determines whether Pico is facing the wall or the middle of the corridor. If it is facing the wall, it is turned back without driving forward; if it is facing the middle, it drives forward with a velocity of 0.2.

If Pico is in the middle third of the corridor (the green area in figure XX), it also drives forward with a velocity of 0.2.
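The centering rule can be sketched as below. The test for "facing the wall" is an assumption: since the shortest range to a wall is perpendicular to it, Pico is taken to face a wall when the closest point on that wall lies in front of it (bearing magnitude below 90 degrees). The turn rate of 0.5 and the structure names are hypothetical.

```cpp
#include <cmath>

// Shortest distances to both walls and their signed bearings (left > 0).
struct WallInfo {
    double leftDist;
    double leftAngle;
    double rightDist;
    double rightAngle;
};

struct VelocityCommand {
    double forward;
    double rotation;
};

// One step of the centering algorithm (sketch; turnRate is hypothetical).
VelocityCommand centerStep(const WallInfo& w) {
    const double halfPi = std::acos(-1.0) / 2.0;
    const double width = w.leftDist + w.rightDist;  // incorrect at side exits
    const double turnRate = 0.5;
    if (w.leftDist < width / 3.0) {  // red area near the left wall
        // Facing the wall: turn right in place; otherwise drive on.
        if (w.leftAngle < halfPi) return {0.0, -turnRate};
        return {0.2, 0.0};
    }
    if (w.rightDist < width / 3.0) {  // red area near the right wall
        // Facing the wall: turn left in place; otherwise drive on.
        if (w.rightAngle > -halfPi) return {0.0, turnRate};
        return {0.2, 0.0};
    }
    return {0.2, 0.0};  // green (middle) area: drive straight ahead
}
```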

The function could be improved by computing the angle Pico has to turn to face the right direction. This angle should not be used directly as the rotation rate; instead, the odometry should be used to determine when the desired angle has been reached.
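This proposed improvement could look like the sketch below: the heading error is computed from the odometry yaw and the target yaw, wrapped so Pico turns the short way round, and Pico rotates at a fixed rate until the error is within a tolerance. `rotationCommand`, `maxRate` and `tolerance` are hypothetical names and values.

```cpp
#include <cmath>

// Wrap an angle to the interval (-pi, pi].
double wrapAngle(double a) {
    const double pi = std::acos(-1.0);
    while (a > pi) a -= 2.0 * pi;
    while (a <= -pi) a += 2.0 * pi;
    return a;
}

// Rotation rate towards targetYaw, using the odometry yaw as feedback.
// The angle itself is not used as the rate; a fixed rate is applied
// until the odometry says the desired heading has been reached.
double rotationCommand(double odomYaw, double targetYaw,
                       double maxRate = 0.5, double tolerance = 0.02) {
    const double error = wrapAngle(targetYaw - odomYaw);
    if (std::fabs(error) < tolerance) return 0.0;  // heading reached
    return std::copysign(maxRate, error);          // turn the short way round
}
```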

Another improvement would be a better controller, which also steers while Pico is still in the middle part of the corridor. This was not implemented, since at this stage the function wall_avoidence was still very basic and could not handle driving and steering well.

Program structure

This program has a single-node structure: all functions are defined internally in a so-called ‘awesome node’. Wall avoidance has the highest priority in the node, to avoid collisions with the walls. The second most important function is the centering algorithm. If the edge detection has detected a side corridor, the turning algorithm is activated. A schematic view is shown in picture x.x.
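The priority order inside the node can be sketched as a fixed-priority arbiter. Following the description of the turning algorithm, which replaces centering once a side corridor is being entered, the sketch lets wall avoidance override everything, then turning, then centering. The `Proposal` structure and `arbitrate` function are hypothetical and only illustrate the ordering.

```cpp
// A behaviour either proposes a velocity command or stays inactive.
struct Proposal {
    bool active;
    double forward;
    double rotation;
};

struct VelocityCommand {
    double forward;
    double rotation;
};

// Fixed-priority arbitration: wall avoidance > turning > centering.
VelocityCommand arbitrate(const Proposal& wallAvoidance,
                          const Proposal& turning,
                          const Proposal& centering) {
    if (wallAvoidance.active) return {wallAvoidance.forward, wallAvoidance.rotation};
    if (turning.active) return {turning.forward, turning.rotation};
    if (centering.active) return {centering.forward, centering.rotation};
    return {0.0, 0.0};  // nothing active: stand still
}
```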

Program evaluation

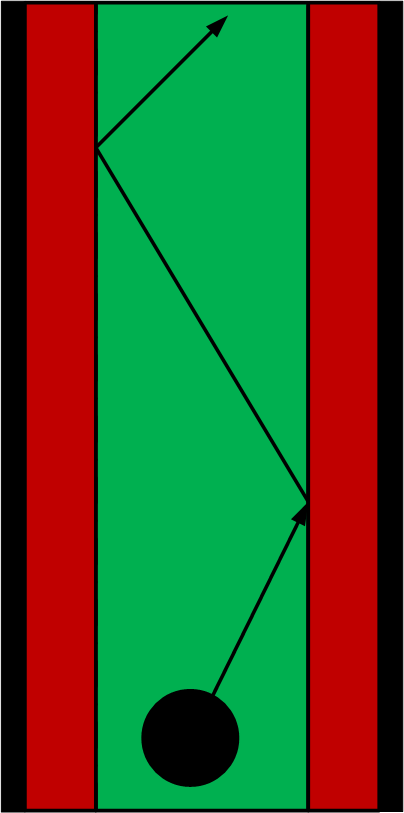

By the time of the corridor competition, the program was finalized, but some bugs came up. The stay-in-the-middle and wall avoidance functions worked well, but during the last simulations before the corridor competition, the corner detection showed some undesired results.

The first and second corners are detected correctly. While turning, however, Pico starts to recognize an outside corner at the end of the corridor, as depicted in the figure on the left. This third corner confuses the program, because the turning algorithm can no longer tell which corners to use as a reference. Due to this unexpected corner, Pico was not able to complete the corridor competition. Therefore, two new strategies were developed for the upcoming competition, solving the maze: the wall follower and the high-level maze-solving program.