Embedded Motion Control 2012 Group 6: Difference between revisions


Revision as of 23:18, 24 June 2012

Progress

Progress of the group. The newest item is listed on top.

- Week 1

- Started with tutorials (all)

- Installed ROS + Eclipse (all)

- Installed Ubuntu (all)

- Week 2

- Finished with tutorials (all)

- Practiced with simulator (all)

- Investigated sensor data (all)

- Week 3

- Overall design of the robot control software

- Division of work for the various blocks of functionality

- First rough implementation: the robot is able to autonomously drive through the maze without hitting the walls

- Progress on youtube http://youtu.be/qZ7NUgJI8uw?hd=1

- The Presentation is available online now.

- Week 4

- Studying chapter 8

- Creating presentation slides (finished)

- Rehearsing presentation over and over again (finished)

- Creating dead end detection (finished)

- Creating pre safe system (finished)

- Week 5

- Progress on youtube http://youtu.be/p8DRld3NVaY?hd=1

- Week 6

- Performed fantastically during the CorridorChallenge

- Progress on youtube http://youtu.be/hWepaa2UPkg?hd=1

- Discussed and created a software architecture

- Week 7

- Progress on youtube http://youtu.be/2SzBqLgB6DU?hd=1

This progress report aims to give an overview of the results of group 6. In some cases details are lacking due to confidentiality reasons.

The software to be developed has one specific goal: driving out of the maze. It is not yet certain whether the robot will start inside the maze or needs to enter the maze itself. Hitting a wall results in a time penalty, which leads to the constraint of not hitting the walls. In addition, speed is important for setting an unbeatable time.

Given the goal and constraints above, requirements for the robot can be described. Movement is necessary to even start the competition. Secondly, vision (object detection) is mandatory to understand what is going on in the surroundings. The most important requirement, of course, is strategy. Besides following the indicating arrows, a back-up strategy will be implemented. Clearly, the latter should be robust and designed such that it always yields a satisfying result (i.e. team 6 wins).

Different modules, which form the building blocks of the complete software framework, are being developed at this very moment and will be designed further in the upcoming weeks.

But first things first: our lecture coming Monday! Be sure to be present, because it's going to be legendary ;) Seriously, you don't want to miss this event! Oh, and also be sure to have read Chapter 8 beforehand, otherwise you won't be able to ask intelligent questions.

To be continued...

ToDo

List of planned items.

- Improve arrow-recognition

- Use recognized arrows in navigation algorithm

- Add maze-specific cases to navigation algorithm (such as dead end detection)

- Apply speed and error dependent corrections for aligning with walls

- Fix overflow 90 deg turns

- Make presentation for final competition

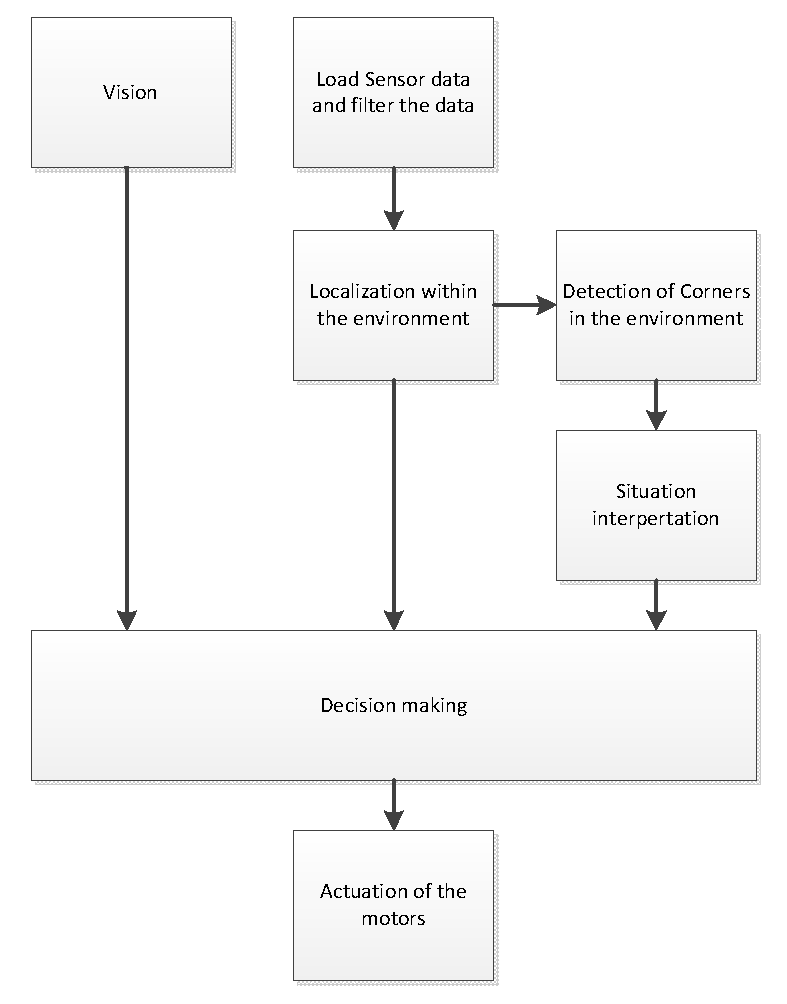

Software Architecture

edit in progress by [JU]

The architecture of our software can be seen in the figure above. The three nodes on the left process the raw input data gathered from the different sensors. The processed data is published onto three different topics. The controlNode is subscribed to these three topics in order to read the data. The controlNode contains the algorithm which controls the robot and thus sends the twistMessages. The algorithm within the controlNode is divided into separate functions, which are implemented in separate source files. However, we chose not to divide these functions over different nodes, in order to reduce the communication overhead. Since the functions within the controlNode do not need to be executed in parallel, there is no need for separate nodes.

Arrow recognition

edit in progress by [RN]

The arrows help the robot navigate through the maze. In order to decide where to go, the robot should be able to perform the following steps:

- identify an arrow shape on the wall

- check if the arrow is red

- determine the direction in which the arrow points

- use the obtained information

Brainstorm

ROS provides a package for face detection[1]. One can see the arrow image as a poor representation of a face, and teach the robot the possible appearances of this face. The program would then recognise a left pointing "face" and a right pointing "face". However, one can doubt the robustness of this system. First, because the system is not intended for arrow recognition, i.e. it is misused. Possibly, some crucial elements for arrow recognition are not implemented in this package. Secondly, face recognition requires a frontal view. If the robot and the wall with the arrow are not perfectly in line, the package may not identify the arrow. Thirdly, an arrow in a slightly different orientation cannot be detected.

With these observations in mind, a different approach is clearly necessary. Different literature was studied to find the best practice. It turned out that the term image recognition does not cover the objective correctly (see for example[2]); it is better to use the term pattern recognition. Using this term in combination with Linux, one is directed to various sites mentioning OpenCV[3][4], which is also incorporated in ROS[5][6]. In addition, different books discuss OpenCV, such as the Open Source Computer Vision Library Reference Manual by Intel and Learning OpenCV by O'Reilly.

Algorithm

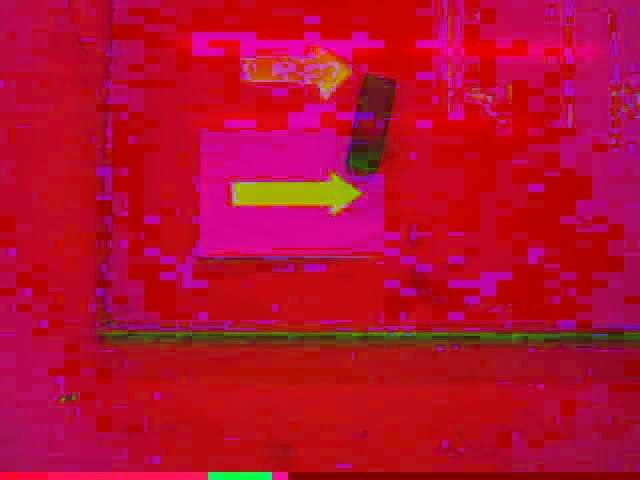

After studying the material discussed in the previous section, the following arrow detection algorithm is deduced:

- Open image

- Apply HSV transform

- Filter red colour from image

- Find contours using Canny algorithm

- Approximate the found polygons

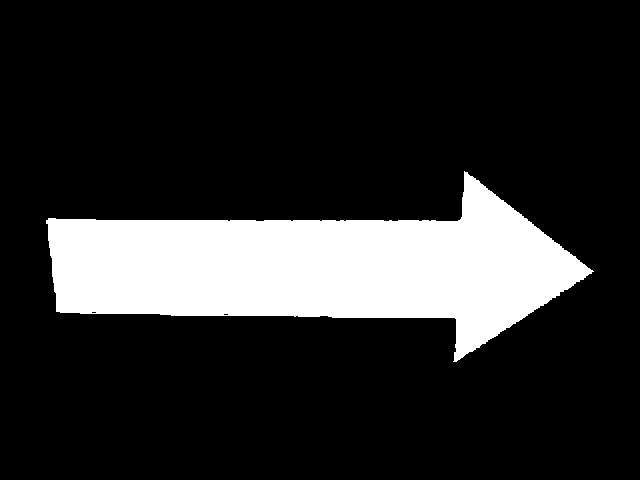

- Detect the biggest arrow using characteristic arrow properties (e.g. number of corners)

- If an arrow is found

- Determine arrow bounding box

- Find corners on the bounding box

- Determine arrow orientation

- Report direction (i.e. left or right)

- Report no result

- If no arrow is found

- Report no result
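The "Apply HSV transform" and "Filter red colour" steps above can be illustrated for a single pixel. The following is only a minimal sketch using the standard library, not the OpenCV-based code used on the robot. The names `rgbToHsv` and `isRed` and all threshold values are assumptions made for illustration. Hue is expressed in degrees here, which differs from the scaling OpenCV uses for 8-bit images.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Hue in degrees [0, 360), saturation and value in [0, 1].
struct Hsv {
	double h;
	double s;
	double v;
};

// Convert one 8-bit RGB pixel to HSV.
Hsv rgbToHsv(int red, int green, int blue) {
	assert(red >= 0 && red <= 255);
	assert(green >= 0 && green <= 255);
	assert(blue >= 0 && blue <= 255);
	const double r = red / 255.0;
	const double g = green / 255.0;
	const double b = blue / 255.0;
	const double maxC = std::max(r, std::max(g, b));
	const double minC = std::min(r, std::min(g, b));
	const double delta = maxC - minC;

	Hsv out;
	out.v = maxC;
	out.s = (maxC > 0.0) ? delta / maxC : 0.0;
	if (delta == 0.0) {
		out.h = 0.0;  // grey pixel: hue is undefined, 0 is used by convention
	} else if (maxC == r) {
		out.h = 60.0 * std::fmod((g - b) / delta, 6.0);
	} else if (maxC == g) {
		out.h = 60.0 * ((b - r) / delta + 2.0);
	} else {
		out.h = 60.0 * ((r - g) / delta + 4.0);
	}
	if (out.h < 0.0) {
		out.h += 360.0;
	}
	return out;
}

// Red lies at both ends of the hue circle, so two hue bands are accepted.
// The default thresholds are hypothetical and would need tuning per camera.
bool isRed(const Hsv& p, double hueTol = 15.0, double minSat = 0.5, double minVal = 0.3) {
	const bool hueOk = (p.h <= hueTol) || (p.h >= 360.0 - hueTol);
	return hueOk && p.s >= minSat && p.v >= minVal;
}
```

Because red wraps around the hue circle, a single lower and upper bound on hue is not enough. This is one reason why tuning the colour filter can be tricky.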

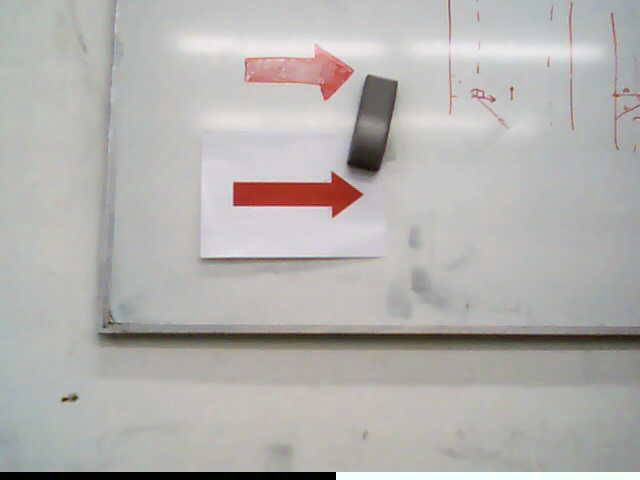

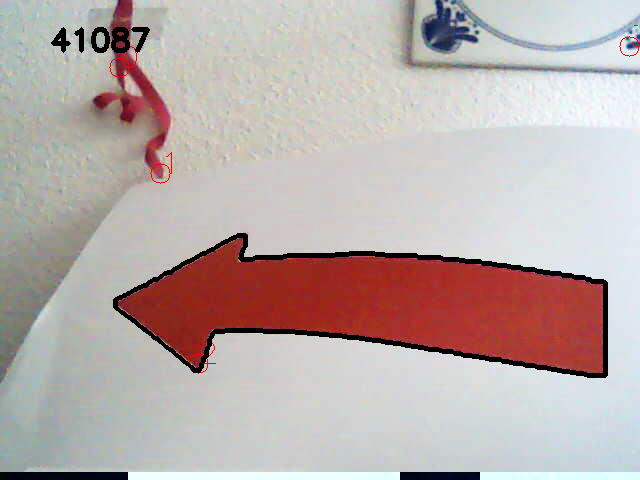

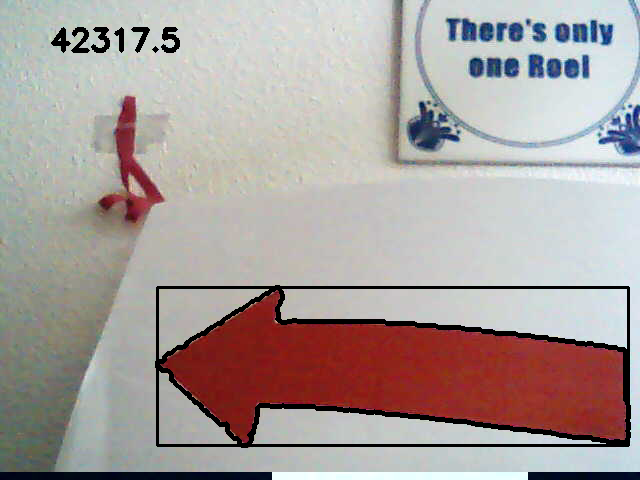

Preliminary results

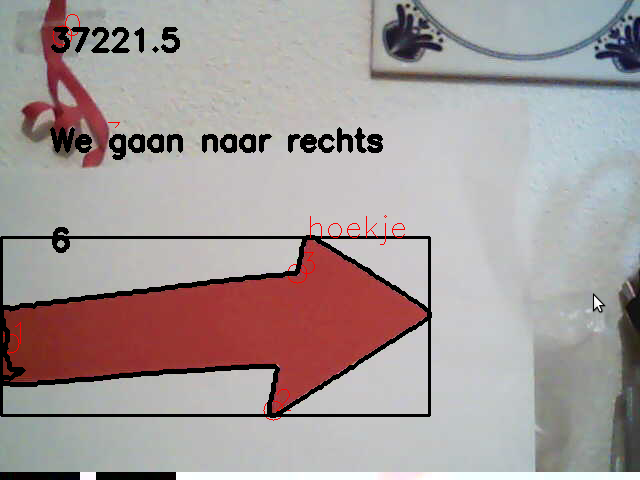

The pictures below show the first steps of the algorithm, where an arrow is correctly detected.

Before continuing the algorithm, some remarks on possible flaws are made.

Pitfalls

Robustness is an important criterion. Among others, the following issues can fool the above procedure:

- different light source

- colours close to red

The picture below indicates the light source problem, although not very clearly. The HSV transform is not homogeneous for the arrow, due to the white stripe in the original file. This resulted in a very noisy Canny detection and other issues in the rest of the algorithm.

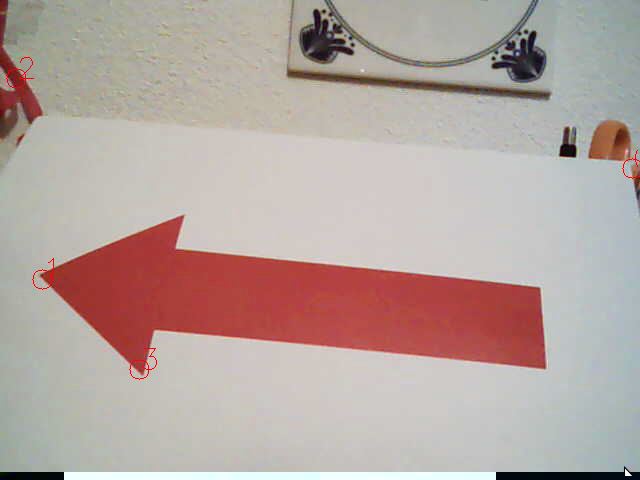

We know for sure that the arrow is printed on a white sheet of paper. Nothing has been told about the environment of the maze, which forced us to check the robustness in a less than ideal environment. Colours close to red are, e.g., pink and orange. A test set-up was created consisting of orange scissors on one side and a nice pink ribbon on the other, see below.

Clearly, the algorithm is fooled. False corners are detected on both objects. Light influences the way red is detected. As a result, setting the boundaries for the HSV extraction is quite tricky and depends on the actual lighting conditions.

Final results

After tuning all the parameters, results as shown below are obtained.

In these files we see some numbers. The upper one (e.g. 25911) is used to determine the biggest red object in the current view. We assume that we will not be fooled by a red object larger than the arrow (note: checking for a real arrow is a point of improvement). One specific property of an arrow is checked, which enables us to handle glitches such as the one seen in the arrow indicating a left direction. Clearly, due to the lighting conditions, a white dash is formed on the arrow body. The algorithm is able to handle these glitches without problems.
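Selecting the biggest red object could look roughly like the sketch below. It assumes that the number shown is a contour area in pixels, which the text does not state explicitly. `polygonArea` and `largestPolygon` are hypothetical helper names, and the area is computed with the shoelace formula rather than with an OpenCV call.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Point {
	double x;
	double y;
};

typedef std::vector<Point> Polygon;

// Area of a simple (non self-intersecting) polygon via the shoelace formula.
double polygonArea(const Polygon& poly) {
	double twiceArea = 0.0;
	for (std::size_t i = 0; i < poly.size(); ++i) {
		const Point& a = poly[i];
		const Point& b = poly[(i + 1) % poly.size()];
		twiceArea += a.x * b.y - b.x * a.y;
	}
	return std::fabs(twiceArea) / 2.0;
}

// Index of the largest polygon with an area of at least minArea,
// or -1 when no candidate is large enough.
int largestPolygon(const std::vector<Polygon>& candidates, double minArea) {
	assert(minArea >= 0.0);
	int best = -1;
	double bestArea = minArea;
	for (std::size_t i = 0; i < candidates.size(); ++i) {
		const double area = polygonArea(candidates[i]);
		if (area >= bestArea) {
			best = static_cast<int>(i);
			bestArea = area;
		}
	}
	return best;
}
```

A minimum area is included so that small red specks are never reported as an arrow. The value to use is an assumption and depends on the image resolution.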

Using some maths, the coordinate of the "hoekje" (Dutch for "small corner") is checked against a reference frame to determine the direction.
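The text does not say which corner is used or what the reference frame is. One possible reading, shown purely as an illustration, is to take the corner that touches the top edge of the bounding box (one of the outer corners of the arrow head) and compare its x-coordinate with the horizontal centre of the bounding box. The function name `arrowDirection` and the `deadBand` parameter are assumptions.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Point {
	double x;
	double y;
};

enum Direction { NO_RESULT, LEFT, RIGHT };

// corners: vertices of the approximated arrow polygon in image coordinates
// (y grows downwards). deadBand: minimum offset from the centre, in pixels,
// before a direction is reported.
Direction arrowDirection(const std::vector<Point>& corners, double deadBand) {
	assert(deadBand >= 0.0);
	if (corners.size() < 3) {
		return NO_RESULT;
	}
	double minX = corners[0].x;
	double maxX = corners[0].x;
	std::size_t topIndex = 0;
	for (std::size_t i = 1; i < corners.size(); ++i) {
		minX = std::min(minX, corners[i].x);
		maxX = std::max(maxX, corners[i].x);
		if (corners[i].y < corners[topIndex].y) {
			topIndex = i;  // corner on the top edge of the bounding box
		}
	}
	const double centreX = (minX + maxX) / 2.0;
	const double offset = corners[topIndex].x - centreX;
	if (offset > deadBand) {
		return RIGHT;  // arrow head lies right of the centre
	}
	if (offset < -deadBand) {
		return LEFT;  // arrow head lies left of the centre
	}
	return NO_RESULT;
}
```

This check only makes sense once the polygon has already been accepted as an arrow. A plain rectangle, for instance, would also yield a direction.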

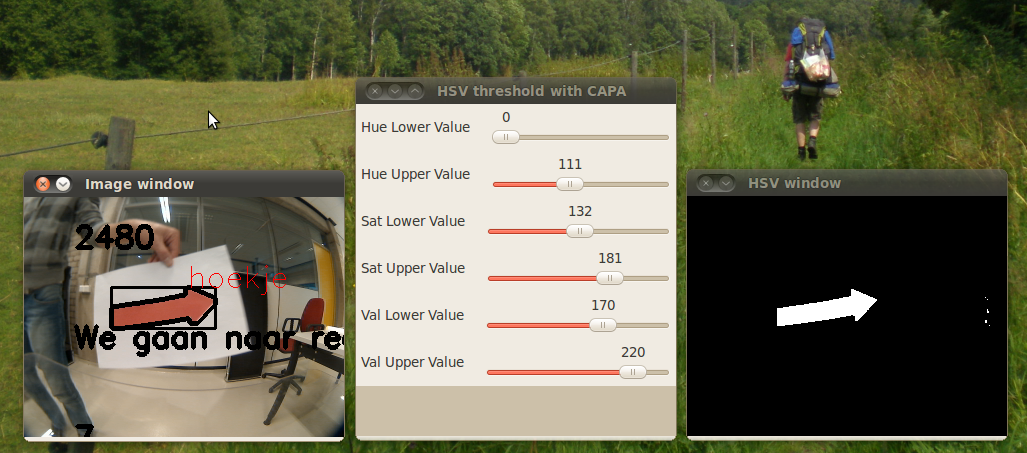

Work in Progress

As mentioned in the Pitfalls section, problems with the HSV extraction were expected. During testing, it turned out that the detection on the Pico camera differed from that on the USB webcam used so far (possibly due to different colour schemes, lenses or codecs). As the algorithm is robust at first glance, the flaw was expected in the colour extraction (i.e. the HSV image), as a result of hardware differences as well as the lighting conditions. With the published rosbag file, this problem was studied in more detail. Apparently, the yellow door and red chair are challenging objects for proper filtering. As changing variables, rebuilding the code and testing the result got boring, some proper Computer Aided Parameter Adjustment (CAPA) was developed. See the result in the figure below.

To-Do

- Adjust variables with CAPA such that the arrow is correctly detected for all frames in the rosbag file.

- Output the result to the main frame

- Stabilise the output

Line detection

edit in progress by [AJ]

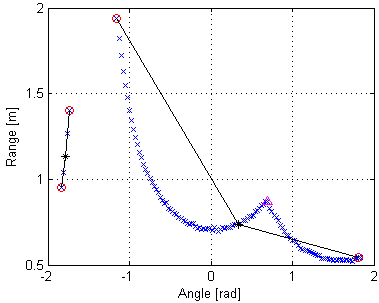

Since both the corridor and maze challenge contain only straight walls, without other disturbing objects, the strategy is based upon information about walls in the neighborhood of the robot. These can be walls near the actual position of the robot, but also a history of waypoints derived from the wall information. The wall information is extracted from the laser sensor data. The detection algorithm needs to be robust, since the sensor data will be contaminated by noise and the actual walls will not be perfectly straight lines and may be non-ideally aligned.

Each arrival of laser sensor data is followed by a call to the wall detection algorithm, which returns an array of line objects. These line objects contain the mathematical description of the surrounding walls and are used by the strategy algorithm. The same lines are also published as markers for visualization and debugging in RViz.

The wall detection algorithm consists of the following steps:

- Set measurements outside the range of interest to a large value, to exclude them from being detected as a wall.

- Using the first and second derivative of the measurement points, the sharp corners and ends of walls are detected. This gives a first rough list of lines.

- A line being detected may still contain one or multiple corners. Two additional checks are done recursively at each detected line segment.

- The line is split in half. The midpoint is connected to both endpoints. For each measurement point the distance to these two lines is calculated (in the polar grid). Most embedded corners are now detected by looking at the peak values.

- In certain unfavorable conditions a detected line may still contain a corner. The endpoints of the line are connected (in the xy-grid) and the absolute peaks are observed to find any remaining corners.

- Lines that do not contain enough measurement points to be regarded as reliable are thrown away.
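The second recursive check and the final filtering step can be sketched as follows. This is the familiar "split at the point farthest from the chord" idea, as used in split-and-merge or Douglas-Peucker style algorithms, and is not necessarily identical to the group's implementation. The names `splitAtCorners`, `tolerance` and `minPoints` are assumptions.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <utility>
#include <vector>

struct Point {
	double x;
	double y;
};

// A detected segment, stored as the first and last index into the point array.
typedef std::pair<std::size_t, std::size_t> Segment;

// Perpendicular distance from p to the line through a and b.
double distanceToLine(const Point& p, const Point& a, const Point& b) {
	const double dx = b.x - a.x;
	const double dy = b.y - a.y;
	const double length = std::sqrt(dx * dx + dy * dy);
	if (length == 0.0) {
		// a and b coincide: fall back to the point-to-point distance
		return std::sqrt((p.x - a.x) * (p.x - a.x) + (p.y - a.y) * (p.y - a.y));
	}
	return std::fabs(dy * (p.x - a.x) - dx * (p.y - a.y)) / length;
}

// Connect the endpoints, look for the peak deviation and split there if it
// exceeds the tolerance. Segments with too few points are thrown away.
void splitAtCorners(const std::vector<Point>& pts, std::size_t first, std::size_t last,
		double tolerance, std::size_t minPoints, std::vector<Segment>& out) {
	assert(first <= last && last < pts.size());
	assert(tolerance >= 0.0);
	std::size_t worst = first;
	double worstDist = 0.0;
	for (std::size_t i = first + 1; i < last; ++i) {
		const double d = distanceToLine(pts[i], pts[first], pts[last]);
		if (d > worstDist) {
			worst = i;
			worstDist = d;
		}
	}
	if (worstDist > tolerance) {
		// worst lies strictly between first and last, so both halves shrink
		splitAtCorners(pts, first, worst, tolerance, minPoints, out);
		splitAtCorners(pts, worst, last, tolerance, minPoints, out);
	} else if (last - first + 1 >= minPoints) {
		out.push_back(Segment(first, last));
	}
}
```

For an L-shaped set of points, the first call finds the corner as the peak deviation and returns the two wall segments on either side of it.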

The figures shown below give a rough indication of the steps involved and the result.

Characteristic properties of each line are saved. The typical equation of a straight line (y=c*x+b) can be used to virtually extend a wall to any desired position, since the coefficients are saved. Also, the slope of the line indicates the angle of the wall relative to the robot. Furthermore, the endpoints are saved by their x- and y-coordinates and their index inside the array of laser measurements. Some other parameters (range of interest, tolerances, etc.) are introduced to match the algorithm to the simulator and the real robot. Several experiments proved the algorithm to function properly. This is an important step, since all higher level algorithms are fully dependent on the correctness of the provided line information.
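As a small illustration of how the saved coefficients can be used, the sketch below derives c and b from two endpoints, extends the wall to another x-position and converts the slope to an angle. The struct and function names are hypothetical, and the real line objects contain more fields than shown here.

```cpp
#include <cassert>
#include <cmath>

// Coefficients of y = c*x + b in the robot frame.
struct WallLine {
	double c;  // slope
	double b;  // offset at x = 0
};

// Line through two endpoints. A wall with x0 == x1 cannot be written as
// y = c*x + b and is not handled in this sketch.
WallLine lineThrough(double x0, double y0, double x1, double y1) {
	assert(x0 != x1);
	WallLine line;
	line.c = (y1 - y0) / (x1 - x0);
	line.b = y0 - line.c * x0;
	return line;
}

// Virtually extend the wall: y-coordinate of the line at any x.
double extendTo(const WallLine& line, double x) {
	return line.c * x + line.b;
}

// Angle of the wall relative to the x-axis of the robot frame, in radians.
double wallAngle(const WallLine& line) {
	return std::atan(line.c);
}
```

For example, a wall through (0, 1) and (2, 3) gives c = 1 and b = 1, so the extended wall passes through y = 6 at x = 5 and makes an angle of 45 degrees with the x-axis.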

See this video for a better understanding of how this works. http://youtu.be/2SzBqLgB6DU?hd=1

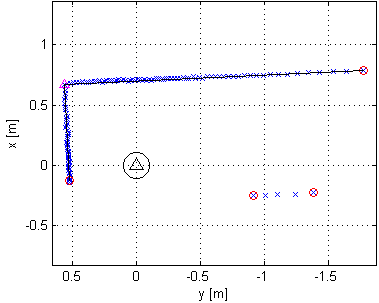

Navigation algorithm

edit in progress by [DG]

Testing the line detection algorithm together with RViz shows correctness of the algorithm. These lines will be used for the navigation algorithm in the following steps:

- 1: When both a left and a right wall are detected, use the calculated distances to these walls to find the middle of the corridor

- 2: When both a left and a right wall are detected, use the calculated angle with respect to the driving direction of the robot to drive parallel to the walls

- 3: When a line in front is detected, check whether left, right, both or none of these options are available to make a turn and decide what action to take as prescribed by the strategy

These steps were implemented and tested. During the corridor competition, this algorithm successfully guided Jazz out of the corridor!! A list of improvements can be found in the ToDo section.
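Steps 1 and 2 could be combined into a single steering command, for instance with a simple proportional correction. The control law, the gains and the sign convention (a positive rate turns the robot to the left) are assumptions for illustration, since the text does not describe the actual controller.

```cpp
#include <algorithm>
#include <cassert>

// leftDistance, rightDistance: distances to the left and right wall in metres.
// wallAngle: angle of the walls relative to the driving direction in radians,
//            positive when the walls are rotated counter-clockwise.
// Returns an angular rate in rad/s, limited to [-maxRate, maxRate].
double steeringCommand(double leftDistance, double rightDistance, double wallAngle,
		double distanceGain, double angleGain, double maxRate) {
	assert(maxRate >= 0.0);
	// Positive when the robot is closer to the right wall than to the left one.
	const double lateralError = (leftDistance - rightDistance) / 2.0;
	const double rate = distanceGain * lateralError + angleGain * wallAngle;
	return std::max(-maxRate, std::min(maxRate, rate));
}
```

In the middle of the corridor and parallel to the walls, the command is zero. A robot that is too close to the right wall gets a positive rate and steers back towards the middle.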

Project agreements

Coding standards

- Names are given using camelCase standard

- Parameters are placed in header files by use of defines

- For indents TAB characters are used

- An opening { follows right behind an if, while, etc... statement and not on the next line

- Else follows directly after a closing }

- Comment the statement that is being closed after a closing }
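A short fragment that follows these rules might look like this. The function name, parameter names and values are made up for the example, and the defines are shown inline instead of in a header to keep the fragment self-contained.

```cpp
#include <cassert>

// Parameters are set with defines (normally placed in a header file).
// Both values are hypothetical.
#define SAFE_DISTANCE 0.30
#define MAX_SPEED 0.20

// Names use camelCase, indents are TAB characters.
double chooseSpeed(double frontDistance) {
	assert(frontDistance >= 0.0);
	double forwardSpeed = 0.0;
	if (frontDistance < SAFE_DISTANCE) {
		forwardSpeed = 0.0;
	} else {
		forwardSpeed = MAX_SPEED;
	} // if frontDistance
	return forwardSpeed;
} // chooseSpeed
```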

SVN guidelines

- Always first update the SVN with the following command

svn up

- Commit only files that actually do work

- Clearly indicate what is changed in the file, this comes in handy for logging purposes

- As we all work with the same account, identify yourself with a tag (e.g. [AJ], [DG], [DH], [JU], [RN])

- To add files, use

svn add $FILENAME

For multiple files, e.g. all C files, use

svn add *.c

Note: again, update only source files

- If a file is edited by multiple people simultaneously, more specifically the same part of the file, a conflict will arise. The options are:

- use version A

- use version B

- show the differences

Add laser frame to rviz

rosrun tf static_transform_publisher 0 0 0 0 0 0 /base_link /laser 10

TAB problems in Kate editor

In Kate, TAB inserts spaces instead of true tabs. According to [7], you should set the tab width equal to the indentation width. Open Settings -> Configure Kate; in the editing section you can set the tab width (e.g. 8). Another section is found for indentation; set the indentation width to the same value.

How to create a screen video?

- Go to Applications --> Ubuntu software centre.

- Search for recordmydesktop and install this.

- When the program is running, you will see a record button at the top right and a window pops up.

- First use the "save as" button to give your video a name and after that you can push the record button.

- This software creates .OGV files, which is no problem for YouTube but is a problem for editing tools.

- Want to edit the movie? Use "handbrake" to convert OGV files to mp4.

Remarks

- The final assignment will contain one single run through the maze

- Paths have fixed width, with some tolerances

- Every team starts at the same position

- The robot may start at a floating wall

- The arrow detection can be simulated by using a webcam

- Slip may occur -> difference between commands, odometry and reality

- Dynamics at acceleration/deceleration -> max. speed and acceleration will be set

References

- ↑ Face recognition in ROS.

- ↑ Noij2009239 on Springer.

- ↑ http://www.shervinemami.co.cc/blobs.html

- ↑ http://opencv.willowgarage.com/documentation/cpp/index.html

- ↑ http://www.ros.org/wiki/vision_opencv

- ↑ http://pharos.ece.utexas.edu/wiki/index.php/How_to_Use_a_Webcam_in_ROS_with_the_usb_cam_Package

- ↑ [1]

- ↑ Documentation from Gostai.

- ↑ Standard Units of Measure and Coordinate Conventions.

- ↑ Specs of the robot (buy one!).